Observability platform decisions often feel straightforward early on. When traffic is manageable and system boundaries are clear, almost any platform appears sufficient. But what works well for one company may fail for another, particularly when they are at different stages of growth.

As growth begins, telemetry volume increases non-linearly. Architectures fan out, and more engineers depend on the same data during incidents. At this point, platform behavior, cost mechanics, and ownership models matter far more than initial ease of use. Observability becomes a structural concern rather than a tooling exercise.

This article examines how requirements evolve in the early stage, through active growth, and at scale. It outlines how to choose an observability platform that continues to function predictably as systems and teams mature.

Why Observability Decisions Change as Companies Grow

When your team is small, observability feels like a tooling decision. Pick something, ship dashboards, move on. When you grow, it turns into an operating model decision. The same stack that felt “fine” at 10 services can become the thing slowing you down at 100.

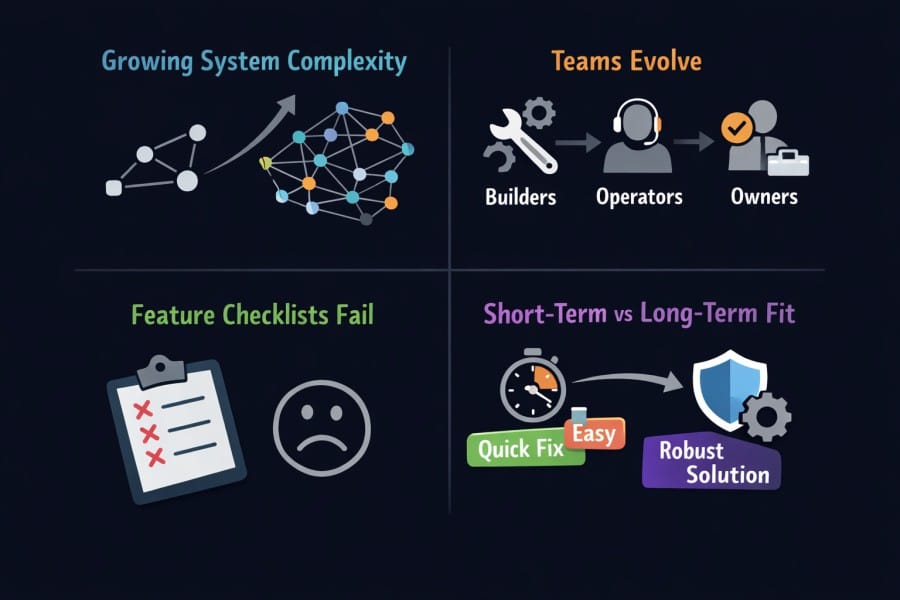

System complexity grows faster

Most teams underestimate how fast complexity compounds. One service fails in a simple way. Ten services fail in combinations. Fifty services fail in chains. Add async queues, caches, retries, feature flags, and third-party dependencies, and you now have failure modes that don’t show up in staging.

That’s why just adding more logs no longer works. More data does not equal more clarity. It often equals more noise, more cost, and slower answers.

Cloud native stacks also bring real operational complexity. In the CNCF’s Cloud Native 2024 survey, “too complex to understand or run” was cited as a top production challenge by 46% of respondents.

Teams evolve from builders to operators to accountability owners

Early on, engineers are builders. The goal is speed. You accept rough edges because everything changes weekly.

Then you hit the growth phase. Someone becomes “on-call” for real. Incidents start to affect revenue. You add SLOs and runbooks. You need consistent service naming and deployment markers. You start caring about mean time to detect.

At enterprise scale, the organization changes again.

- Platform teams own shared tooling

- Security wants audit trails

- Finance wants predictable spend

- App teams want autonomy but not surprise bills

Observability becomes part of accountability. It becomes more about who is responsible for what, and how to prove it. This is also where standardization matters. The Grafana Labs Observability Survey 2025 reports 67% of organizations use Prometheus and 41% use OpenTelemetry in production.

Feature checklists fail

Feature checklists fail because they ignore long-term platform behavior. They are easy to sell (for vendors) and easy to buy (for platform teams). But they also age badly.

Most platforms look similar on day one: all have dashboards, alerts, tracing, and logs. The differences show up later, under load and under stress:

- How query performance holds up when everyone is refreshing during an incident

- What happens to cost when traffic spikes and error paths flood the platform with telemetry

- How painful it is to change sampling, retention, or tagging standards after teams have shipped their own conventions

- Whether you can enforce governance without turning every team into ticket submitters

In other words, you are buying the platform’s failure modes and trade-offs, not just its features.

Short-term convenience vs long-term operational fit

Short-term convenience optimizes for setup speed:

- Minimal instrumentation effort

- Quick dashboards

- Low initial friction

Long-term operational fit optimizes for sustained use:

- Clear ownership and sane defaults

- Predictable cost mechanics as data scales

- Controls for sampling, retention, and cardinality

- The ability to standardize across teams without a rewrite

- Security and compliance that doesn’t bolt on awkwardly later

A team can succeed in the first month with a convenient setup, then lose the next two years to slow queries, messy tagging, and pricing mechanics that punish incident spikes. The real goal is reliability: fast answers during incidents, stable operating costs, and a system people trust enough to use every day.

Structural Breakpoints in Observability Platforms

At some point, observability stops behaving the way it did when things were smaller. Nothing breaks all at once. Instead, pressure builds quietly until the platform no longer matches how the system actually behaves.

When telemetry volume stops scaling linearly

Early systems scale with traffic. Later systems scale with architecture. Logs, metrics, and traces do not grow at the same rate.

- In distributed systems, one request turns into ten spans. Ten services turn into hundreds. Add retries and background jobs, and trace volume explodes even when the request rate stays flat.

- Cardinality creates a similar effect. A single new label tied to user ID, tenant, or region can multiply metric series overnight. Teams usually notice only after query latency increases or costs spike.

This pattern is well documented. The Google SRE book notes that high cardinality, rather than raw metric count, is one of the most common causes of monitoring system instability. The same principle applies to modern observability stacks.
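
To make the multiplication concrete, here is a minimal Python sketch. The label names and counts are illustrative assumptions, not measurements, and the function returns an upper bound, since real systems rarely emit every possible combination of label values.

```python
from math import prod
from typing import Mapping


def max_series(label_cardinalities: Mapping[str, int]) -> int:
    """Upper bound on time series for one metric.

    Each distinct combination of label values is a separate series,
    so the bound is the product of the per-label cardinalities.
    """
    return prod(label_cardinalities.values())


# Hypothetical labels on a single request-latency metric.
base = {"service": 20, "endpoint": 15, "status_code": 5}
with_tenant = {**base, "tenant": 400}  # one new label added

print(max_series(base))         # 1500
print(max_series(with_tenant))  # 600000
```

Under these assumptions, one extra label moves the ceiling from 1,500 to 600,000 series without any change in traffic.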

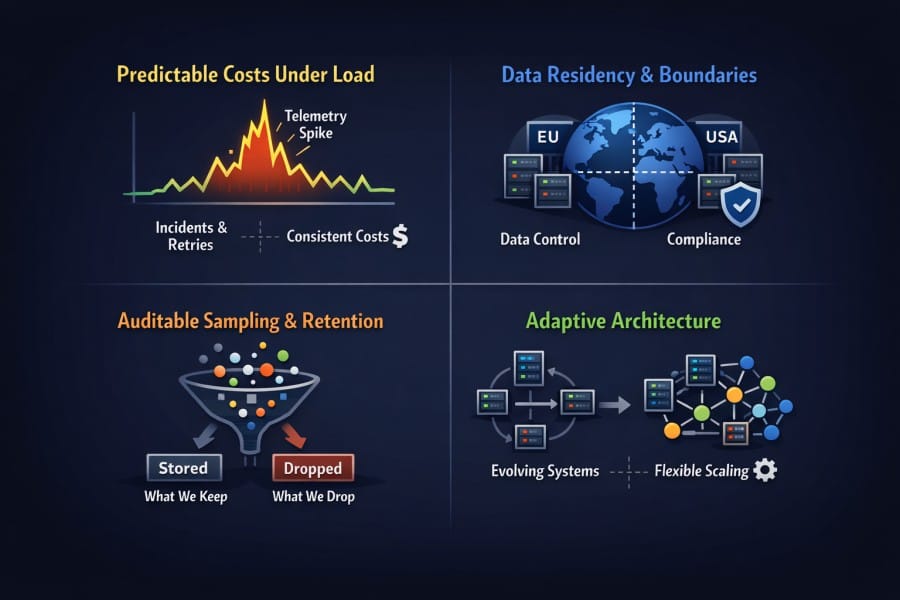

When pricing decouples from engineering value

Early on, pricing feels intuitive. More traffic means more cost. That mental model does not survive growth.

- As platforms mature, billing units drift away from what engineers actually control. Hosts, containers, spans, indexed events, custom metrics, queries, users, or compute credits all start to matter in different ways.

- Two systems with the same traffic can generate very different bills based on retries, error rates, or architectural choices. Incidents become expensive not because teams did something wrong, but because the system behaved differently under stress.

At this stage, predictability matters more than headline pricing. Finance teams want to forecast. Platform teams want to set guardrails. Engineers want to debug without worrying that every query makes things worse.

When control shifts from optional to mandatory

In early stages, control feels like overhead. Defaults feel safe. You trust the platform to do the right thing. That changes as risk increases.

- Sampling, routing, and retention start acting as risk controls.

- Teams need to decide what must never be dropped, what can be summarized, and what can expire quickly without harming investigations.

Opaque defaults become dangerous here:

- If teams do not understand how sampling works, they cannot reason about missing data during incidents.

- If retention rules are unclear, postmortems suffer.

- If routing is fixed, sensitive data may cross boundaries it should not.

This is where many early choices fail. Platforms optimized for convenience often hide complexity. Platforms optimized for scale expose it, because hiding it creates blind spots when things go wrong.

Core Dimensions That Matter at Every Growth Stage

These dimensions exist from day one, but their impact changes as systems and teams mature. Teams that understand this shift tend to make fewer painful platform changes down the line.

Telemetry volume and data growth patterns

In early systems, telemetry volume feels predictable. Traffic goes up, data goes up. Engineers can roughly estimate what next month looks like.

That breaks as architecture evolves. Logs, metrics, and traces grow for different reasons.

- Logs expand with verbosity and error handling.

- Metrics scale with cardinality, not time.

- Traces scale with fan-out, retries, and asynchronous workflows.

As services multiply, each request fans out across more components, and trace size grows even if request rate stays flat. This is why volume becomes nonlinear. A modest increase in architectural complexity can double or triple telemetry without any visible change in user traffic.
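
A rough way to see this effect is to model spans per request. The sketch below is a deliberately simple model with assumed numbers: one root span, one span per downstream call attempt, and an average retry count per call. It is not how any particular tracer counts spans.

```python
def spans_per_request(downstream_calls: int, avg_retries_per_call: float = 0.0) -> float:
    """One root span plus one span per downstream call attempt."""
    return 1 + downstream_calls * (1 + avg_retries_per_call)


def daily_spans(requests_per_day: int, downstream_calls: int,
                avg_retries_per_call: float = 0.0) -> float:
    """Total spans per day for a given request rate and call structure."""
    return requests_per_day * spans_per_request(downstream_calls, avg_retries_per_call)


REQUESTS_PER_DAY = 1_000_000  # assumed flat across both scenarios

# Hypothetical architecture before and after adding services and retries.
before = daily_spans(REQUESTS_PER_DAY, downstream_calls=9)
after = daily_spans(REQUESTS_PER_DAY, downstream_calls=24, avg_retries_per_call=0.25)

print(f"before: {before:,.0f} spans/day")  # before: 10,000,000 spans/day
print(f"after:  {after:,.0f} spans/day")   # after:  31,000,000 spans/day
print(f"growth: {after / before:.1f}x")    # growth: 3.1x
```

The request rate never changes in this example. The tripling comes entirely from the call structure.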

Over time, different signals dominate different failure modes:

- Logs dominate storage and ingestion costs

- High-cardinality metrics dominate query latency and stability

- Traces dominate backend compute during incident response

Understanding which signal drives pressure at each stage matters more than raw volume numbers.

Cost mechanics and pricing behavior (small, medium, large)

Small scale

Pricing usually looks reasonable at small scale. Bills track usage closely. Surprises are rare.

Medium scale

As environments grow, pricing mechanics start to diverge from how engineers think about systems. New billing units appear. Costs spike during incidents. Optimization becomes reactive instead of planned.

In medium environments, teams often see:

- Cost jumps tied to retries and error storms

- Sudden overages caused by new services or labels

- Difficulty mapping spend back to specific teams or workloads
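
Mapping spend back to teams depends on telemetry carrying an ownership label in the first place. The sketch below assumes a hypothetical usage export with a team label per row and a flat per-GB price. Real billing units vary by vendor, so treat the numbers as placeholders.

```python
from collections import defaultdict
from typing import Dict, Iterable, Optional, Tuple

# Hypothetical usage export: (team label, signal, ingested GB).
UsageRow = Tuple[Optional[str], str, float]

usage = [
    ("checkout", "logs", 120.0),
    ("checkout", "traces", 80.0),
    ("search", "logs", 40.0),
    (None, "metrics", 60.0),  # telemetry shipped without a team label
]


def spend_by_team(rows: Iterable[UsageRow], price_per_gb: float) -> Dict[str, float]:
    """Sum cost per team; unlabeled telemetry lands in 'unattributed'."""
    totals: Dict[str, float] = defaultdict(float)
    for team, _signal, gb in rows:
        totals[team or "unattributed"] += gb * price_per_gb
    return dict(totals)


costs = spend_by_team(usage, price_per_gb=0.5)  # assumed flat price
unattributed_share = costs["unattributed"] / sum(costs.values())

print(costs)
print(f"unattributed: {unattributed_share:.0%}")  # unattributed: 20%
```

The unattributed share is a useful number to track on its own: the larger it is, the harder it becomes to explain a bill.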

Large scale

At large scale, the absolute price matters less than the shape of the cost curve. Leaders care about how spending behaves under stress.

84% of respondents report cost as a significant challenge alongside other operational hurdles when implementing observability platforms.

This is why mature teams ask different questions. Instead of going after cheaper tools, they ask whether cost and performance stay stable under heavy load.

Operational ownership and control

Observability starts as a convenience tool. It becomes an accountability system. In small teams, reliability is informal. Someone fixes things when they break. Ownership is shared and flexible.

As systems scale, ownership fragments:

- Application teams own service behavior

- Platform teams own shared tooling

- SREs own availability and error budgets

- Security and compliance teams own access and data boundaries

Observability sits at the intersection of all of them. Managed platforms reduce effort early. Defaults work. Setup is fast. That trade-off is often correct.

Later, teams need control. They need to enforce standards, limit blast radius, and explain behavior during incidents without guessing how the platform works internally. Opaque defaults that once felt helpful become liabilities.

Data retention, sampling, and signal quality

As volume increases, teams hit practical limits. Storage grows faster than budgets. Query latency increases. Systems slow down when engineers need answers most. At this stage, sampling and retention are core reliability mechanisms.

The CNCF Observability Whitepaper notes that uncontrolled data growth is one of the primary reasons observability systems fail during major incidents, precisely when they are needed most.

Weak platforms force blunt trade-offs, either dropping data blindly or paying for everything and hoping it holds.

On the other hand, strong platforms help teams preserve signal quality under pressure. That usually means:

- Keeping full fidelity for errors and critical paths

- Summarizing or reducing low-value traffic
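
The two rules above can be sketched as a sampling decision. This is a minimal illustration, assuming the decision is made after a trace completes (so its error status and duration are known). The route names, latency threshold, and baseline rate are hypothetical.

```python
import random
from dataclasses import dataclass
from typing import Tuple

# All thresholds and route names below are illustrative assumptions.
CRITICAL_ROUTES = {"/checkout", "/login"}
SLOW_MS = 2000.0        # traces at or above this duration are always kept
BASELINE_RATE = 0.05    # fraction of ordinary traces kept


@dataclass
class TraceSummary:
    route: str
    is_error: bool
    duration_ms: float


def keep_trace(trace: TraceSummary, rng: random.Random) -> Tuple[bool, str]:
    """Return (keep, reason) so each decision can be explained later."""
    if trace.is_error:
        return True, "error"
    if trace.route in CRITICAL_ROUTES:
        return True, "critical_route"
    if trace.duration_ms >= SLOW_MS:
        return True, "slow"
    if rng.random() < BASELINE_RATE:
        return True, "baseline_sample"
    return False, "sampled_out"


rng = random.Random(42)  # seeded only to make the sketch repeatable
print(keep_trace(TraceSummary("/search", True, 120.0), rng))    # (True, 'error')
print(keep_trace(TraceSummary("/checkout", False, 90.0), rng))  # (True, 'critical_route')
print(keep_trace(TraceSummary("/search", False, 3500.0), rng))  # (True, 'slow')
```

Returning a reason alongside the decision is a design choice: it keeps the sampling behavior explainable when someone later asks why a trace is missing.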

Compliance, security, and data boundaries

Governance concerns emerge gradually. Teams ask:

- Where the data lives

- Who can access it

- How long it is retained

- Whether it can leave a region at all

As organizations grow, observability data becomes production data with legal, contractual, and security implications. This shift happens earlier in regulated industries, but it eventually affects most companies.

By the time teams reach enterprise scale, compliance and data residency often outweigh feature considerations. Platforms that treat governance as an afterthought struggle here. The ones that survive bake boundaries, auditability, and control into the platform itself.

Choosing an Observability Platform in the Early Stage

Early-stage observability is about momentum. You are still discovering what the system is, not optimizing what it has become. The right choice helps you move faster without creating traps you only notice later.

Primary goals at this stage

- Fast setup and immediate visibility: You want to know when something breaks and why, without spending weeks wiring dashboards or tuning queries. If it takes longer to observe the system than to change it, the tooling is getting in the way.

- Minimal operational overhead: Small teams do not have spare cycles to run and babysit infrastructure. Every hour spent maintaining observability is an hour not spent building the product.

- Rapid feedback during development: You want to see the impact of a deploy quickly. Errors, latency regressions, and failed assumptions should show up while the context is still fresh.

What matters most

- Time to value: If you cannot get useful signals in the first few days, the platform will not stick. Early teams abandon tools quickly when setup friction is high.

- Ease of instrumentation: Manual instrumentation does not scale when the codebase is changing weekly. Auto-instrumentation and sane defaults reduce friction and prevent gaps when new services appear.

- Low cognitive load: Dashboards should answer basic questions without training. Traces should make sense without explaining them. If engineers need a playbook just to understand what they are looking at, adoption drops fast.

At this stage, observability should feel invisible. It should support development, not demand attention.

How the cost typically behaves for small, medium, and large teams

Small teams

For small teams, costs are usually linear and predictable. Traffic is low. The architecture is simple. Bills scale roughly with usage and stay within expectations.

Medium-sized teams

As teams move toward the upper end of “early stage”, cost accelerates. New services fan out and distributed tracing becomes more common as teams try to understand cross-service behavior. Debug logging increases during active development. Cardinality creeps in through user IDs, feature flags, and environment tags. At this stage, observability spend often grows faster than traffic itself.

Large teams

Large teams are less common at this stage, but when they do exist, limitations appear quickly. What worked for a handful of services breaks once you deploy dozens. Teams begin relying heavily on custom metrics to track service-level objectives, feature behavior, and internal KPIs. That increases metric cardinality and storage, which makes cost controls, sampling, and retention important earlier than teams expect.

Common early-stage mistakes

- Locking into rigid pricing: Pricing that looks simple at low volume can become restrictive once traffic patterns change. Teams underestimate how hard it is to switch later.

- Ignoring future telemetry growth: Even if you do not need advanced controls today, you should understand how the platform behaves when volume doubles or triples. Not planning for that usually leads to rushed decisions under pressure.

- Over-engineering observability before systems stabilize: Heavy dashboards, complex alerting, and custom pipelines add friction without delivering much value early on. At this stage, clarity beats completeness.

Choosing an Observability Platform in the Growth Stage

The growth stage is where observability platforms reveal their real behavior.

What changes at this stage

- Service sprawl: A few core services turn into dozens. Ownership spreads across teams. Requests fan out across APIs, queues, caches, and third-party services.

- Telemetry volume increases faster than traffic: New services add logs and traces. Retries multiply spans. Debug logging creeps back in during incidents. Even steady user growth can produce sudden data jumps.

- Incidents change shape: They happen more often, last longer, and involve more components. Root cause is rarely obvious. Engineers move from dashboards to ad-hoc queries and deep trace exploration under pressure.

According to the 2024 Google Cloud DevOps Research and Assessment (DORA) report, high-performing teams still experience frequent failures, but their differentiator is faster diagnosis and recovery. Observability quality is a key factor in that gap.

Why most early observability setups start breaking here

- Unpredictable costs: Bills spike during incidents or deploys even when baseline traffic looks stable. Teams struggle to explain why last month cost more than the one before.

- Opaque sampling decisions: Defaults that once felt safe now hide important trade-offs. Engineers cannot tell what was dropped, what was kept, or why a trace is missing during an investigation.

- Degraded correlation across logs, metrics, and traces: Inconsistent tagging, partial instrumentation, and volume pressure make it harder to move cleanly from a spike on a dashboard to the exact request or user affected.

This is where teams lose trust, mainly because they no longer know what they are looking at.

New decision criteria that emerge

- Clear cost control: Teams need to set budgets, limits, and guardrails before incidents happen, not after the bill arrives.

- Intelligent sampling strategies: Sampling must preserve important signals, not just reduce volume. Errors, high-latency paths, and unusual behavior must survive even when traffic spikes. Blind sampling leads to blind spots.

- Reliable cross-signal correlation under load: When everything is slow, teams need to pivot quickly between metrics, traces, and logs without guessing or re-running queries.
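
As one illustration of a guardrail that acts before the bill arrives, the sketch below projects end-of-day ingest from the rate so far and compares it to a budget. The budget, the warning ratio, and the action names are assumptions. The linear projection is deliberately naive and will overreact to short bursts.

```python
def projected_daily_gb(ingested_gb: float, hours_elapsed: float) -> float:
    """Linear projection of end-of-day ingest from the rate observed so far."""
    if hours_elapsed <= 0:
        raise ValueError("hours_elapsed must be positive")
    return ingested_gb * 24 / hours_elapsed


def guardrail(ingested_gb: float, hours_elapsed: float,
              daily_budget_gb: float, warn_ratio: float = 0.8) -> str:
    """Classify today's ingest against a budget before the day ends."""
    ratio = projected_daily_gb(ingested_gb, hours_elapsed) / daily_budget_gb
    if ratio >= 1.0:
        return "reduce_sampling"
    if ratio >= warn_ratio:
        return "warn"
    return "ok"


# Hypothetical readings taken at noon against a 400 GB/day budget.
print(guardrail(100, 12, 400))  # ok
print(guardrail(180, 12, 400))  # warn
print(guardrail(300, 12, 400))  # reduce_sampling
```

In practice, a "reduce_sampling" result should lower only the baseline rate for ordinary traffic, so that errors and slow paths keep surviving the spike.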

How cost behavior shifts for small, medium, and large teams

- Small teams feel volatility for the first time. A single bad deployment or incident can double monthly spending. Finance notices. Questions start.

- Medium teams hit sharp cost inflection points. Adding a few services or new dimensions triggers a step change in usage. Optimization becomes a regular task, not a one-off cleanup.

- Large teams are forced to rethink architecture and ownership. Platform teams emerge. Cost allocation and chargeback discussions start. Observability becomes a shared service with rules and standards.

Signals your current platform is no longer sufficient

When the signals below appear together, it usually means the platform no longer matches the stage the system has reached.

- Billing spikes appear without clear changes in traffic or releases. Engineers cannot easily explain where the data came from.

- Telemetry volume grows, but diagnostic value drops. More logs and traces exist, yet incidents take longer to resolve.

- Noise increases. Alerts fire more often, dashboards feel cluttered, and engineers spend more time filtering than understanding. Mean time to resolution rises instead of falling.

Choosing an Observability Platform at Scale

At scale, observability becomes infrastructure that the organization can depend on during its worst days.

Structural requirements at scale

Enterprises evaluating observability platforms increasingly rank data control and auditability alongside performance and cost, especially in regulated sectors.

- Predictable cost behavior under sustained load: At this stage, traffic patterns are known, but failure patterns are not. Incidents, retries, and cascading errors generate sudden telemetry spikes. The platform must behave consistently when this happens. A system that is affordable in a steady state but volatile under stress becomes a liability.

- Clear data boundaries and residency guarantees: Large organizations operate across regions, business units, and customers with different legal requirements. Observability data often contains sensitive identifiers, payloads, and operational metadata. Teams need to know exactly where data resides and where it cannot go.

- Auditable sampling and retention policies: It must be possible to explain what was retained, what was dropped, and why. This matters for post-incident reviews, regulatory audits, and internal accountability. Opaque behavior is not acceptable.

Platform qualities that matter most

- Control: Control over ingestion and sampling without re-instrumentation is critical. At scale, you cannot ask hundreds of teams to redeploy just to adjust sampling rules. Control needs to exist at the platform layer, not buried in application code.

- Adaptability: The ability to adapt architecture over time matters more than static feature sets. Systems evolve. Services split. Regions multiply. A platform that assumes a fixed shape eventually fights the organization using it.

- Security and compliance: Alignment with security, compliance, and governance needs becomes a gating factor. Access controls, audit logs, data isolation, and policy enforcement must integrate cleanly with existing enterprise processes. Workarounds do not scale.

This is where many feature-rich platforms fall short. They work well until they are asked to behave predictably inside complex organizations.

How the cost behaves for medium vs large environments

Medium environments are still sensitive to runaway spend. Growth is ongoing. Architecture is changing. Cost controls need to be tight to prevent surprises caused by new services, retries, or cardinality explosions.

Large environments think differently. Optimization matters less than predictability. Finance teams want stable forecasts. Platform teams want guardrails that hold even during incidents. Engineers want freedom to investigate without triggering alarms about cost.

Why platform architecture matters more than features

At scale, every observability platform will claim feature parity. The real differences appear under failure.

Backend stability

Backend scalability during failure conditions matters more than raw throughput.

- Can the system handle thousands of engineers querying wide time ranges during an outage?

- Does query latency degrade gracefully or collapse?

Observability system behavior during incidents is critical. When everything is slow, engineers rely on observability to be fast. If the platform itself becomes sluggish, teams lose their primary diagnostic tool.

Failure modes

- What happens when the observability backend is overloaded?

- Does it drop data silently, block ingestion, throttle queries, or fail open?

These behaviors determine whether teams trust the platform when it matters most. These are architectural questions. Feature lists do not answer them.
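
To illustrate the difference between dropping silently and dropping visibly, here is a toy ingest buffer. It is a sketch of one possible overload policy (drop the oldest item, count every drop), not a description of how any specific backend behaves. The class and method names are hypothetical.

```python
from collections import deque
from typing import Any, Deque, List


class BoundedIngestBuffer:
    """Fixed-size buffer that drops the oldest item when full and counts every drop."""

    def __init__(self, capacity: int) -> None:
        if capacity <= 0:
            raise ValueError("capacity must be positive")
        self._items: Deque[Any] = deque()
        self._capacity = capacity
        self.dropped = 0  # exposed so overload is visible, not silent

    def offer(self, item: Any) -> None:
        """Accept an item, evicting the oldest one if the buffer is full."""
        if len(self._items) >= self._capacity:
            self._items.popleft()
            self.dropped += 1
        self._items.append(item)

    def drain(self) -> List[Any]:
        """Return and clear everything currently buffered."""
        items = list(self._items)
        self._items.clear()
        return items


buf = BoundedIngestBuffer(capacity=3)
for event_id in range(5):
    buf.offer(event_id)

print(buf.dropped)  # 2
print(buf.drain())  # [2, 3, 4]
```

The policy itself matters less than the counter. A platform that exposes what it dropped lets teams reason about gaps. One that hides it does not.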

Where self-hosted and BYOC models enter the picture

At scale, observability platforms are judged less by how easy they are to start and more by how well they behave when everything else is failing. This is the stage where some teams move away from fully managed SaaS to self-hosted observability. The reasons are practical.

- Cost predictability

- Data residency

- Control over failure modes

- The ability to tune systems without waiting on vendor roadmaps

Bring-your-own-cloud and self-hosted models give organizations infrastructure-level control. That control allows teams to align observability behavior with internal reliability, security, and compliance standards.

A Practical Framework for Choosing the Right Platform

This framework is meant to be used, not admired. Teams can apply it regardless of vendor, deployment model, or current maturity. The goal is to reduce surprises later, when changing direction becomes expensive.

Step 1: Map current and expected telemetry growth

The CNCF Observability TAG notes that most production failures of observability systems are driven by unplanned data growth rather than raw traffic increases.

Teams often underestimate future growth because they model usage, not structure. Adding services, queues, and async workflows increases telemetry even if request rate stays flat.

Mapping this early helps avoid platforms that look affordable today but break under architectural complexity. So, start with what you generate today, then project how it changes when the system grows.
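
A back-of-the-envelope projection is often enough for this step. The sketch below compounds an assumed quarterly growth factor per signal. The starting volumes and factors are hypothetical and stand in for structural change (new services, async workflows, new labels), not user traffic.

```python
from typing import Dict

# Hypothetical current ingest in GB per month.
current_gb_per_month = {"logs": 900.0, "metrics": 150.0, "traces": 450.0}

# Assumed quarterly growth factors driven by architecture, not traffic.
quarterly_growth = {"logs": 1.10, "metrics": 1.25, "traces": 1.40}


def project(current: Dict[str, float], growth: Dict[str, float],
            quarters: int) -> Dict[str, float]:
    """Compound each signal's volume by its own growth factor."""
    return {signal: gb * growth[signal] ** quarters for signal, gb in current.items()}


projected = project(current_gb_per_month, quarterly_growth, quarters=4)

for signal, gb in projected.items():
    print(f"{signal}: {gb:.0f} GB/month")
# logs: 1318 GB/month
# metrics: 366 GB/month
# traces: 1729 GB/month
```

Under these assumptions, traces overtake logs as the largest signal within a year, which changes which pricing unit and which backend component comes under pressure first.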

Step 2: Identify which costs must be predictable

This step forces clarity. Decide which parts of observability must be boring and predictable, and which can remain flexible.

Not all teams tolerate variability the same way.

- Small teams can absorb some noise. A spike during an incident is annoying but manageable. The focus is speed, not accounting precision.

- Medium teams feel volatility first. Finance starts asking questions. Engineers lose freedom when every investigation risks a cost spike. Predictability becomes as important as absolute price.

- Large teams demand stability. Forecasting matters. Guardrails matter. A platform that behaves unpredictably under stress creates organizational friction, even if the average spend looks reasonable.

Step 3: Decide where operational responsibility should live

Every observability platform sits somewhere on a responsibility spectrum.

- Vendor-managed models minimize operational effort. They work well when teams value speed and do not need deep control.

- Self-operated models maximize control. They suit organizations that need to align observability behavior with internal reliability, security, and cost policies.

- Hybrid and BYOC models sit in between. Vendors provide software and support, while teams control infrastructure, data boundaries, and failure modes.

Many teams drift into a model that does not match who is accountable when systems fail. To avoid that, decide deliberately who operates the platform and where its data is stored, based on the type of data you handle and the industry you operate in.

Step 4: Evaluate how sampling and retention are handled

Sampling and retention decisions shape what teams can see during incidents. Explicit control allows teams to decide what must be kept, what can be summarized, and what can be dropped. These controls can be audited, adjusted, and aligned with risk.

Opaque defaults hide trade-offs. Teams only notice them when data is missing at the worst possible time. At scale, that loss of trust is hard to recover.

So, look for platforms that make these decisions visible and reversible, without requiring application re-instrumentation or widespread redeploys.

Step 5: Assess long-term switching cost and lock-in

Telemetry pipelines, dashboards, alerts, and operational habits accumulate over time. The more teams depend on them, the harder it becomes to move.

- In medium environments, switching is painful but possible. Instrumentation changes are manageable. Data migration is limited.

- In large environments, switching becomes a multi-quarter effort. Historical data is hard to move. Teams resist change. Parallel systems run longer than planned.

Even if a platform fits today, teams should understand how hard it will be to leave tomorrow. The right choice minimizes lock-in without sacrificing operational clarity.

Choose an observability platform that supports growth without forcing teams to relearn how to see their systems.

Where Platforms Like CubeAPM Fit

Self-hosted and BYOC observability platforms usually appear when teams are no longer optimizing for convenience. They are responding to structural pressure. At this point, observability decisions are tied to architecture, cost behavior, and operational accountability.

When teams start evaluating this class of platforms

Teams that look at platforms like CubeAPM are typically past the exploratory phase. Common triggers include:

- Telemetry spend crossing thresholds that finance now tracks closely

- Repeated cost spikes during incidents or traffic anomalies

- Limited ability to explain or control why usage changed month to month

At this stage, predictability matters more than headline pricing. Teams want observability costs to behave like infrastructure costs, not like an open-ended variable.

Need for explicit control over sampling and retention

Sampling and retention decisions are not optional as systems scale. Teams need to:

- Guarantee full fidelity for errors and high-risk paths

- Apply different retention policies to different signals

- Understand exactly what was dropped and why during an incident

Opaque defaults create risk here. When data is missing, teams need to know whether it was never captured, sampled out, or expired.
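
Those three cases can be told apart only if the pipeline records enough metadata. The sketch below assumes a hypothetical record per trace holding the capture time (if any) and whether it was sampled out, plus a known retention window. The function and field names are illustrative.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional


def explain_missing(captured_at: Optional[datetime], sampled_out: bool,
                    retention: timedelta, now: datetime) -> str:
    """Classify why a trace cannot be found, in order of pipeline stage."""
    if captured_at is None:
        return "never_captured"    # instrumentation gap
    if sampled_out:
        return "sampled_out"       # dropped by a sampling rule
    if now - captured_at > retention:
        return "expired"           # aged out of storage
    return "should_be_present"     # a gap worth escalating


# Hypothetical values: a 15-day retention window checked on a fixed date.
now = datetime(2025, 1, 31, tzinfo=timezone.utc)
retention = timedelta(days=15)

print(explain_missing(None, False, retention, now))                      # never_captured
print(explain_missing(now - timedelta(days=1), True, retention, now))    # sampled_out
print(explain_missing(now - timedelta(days=20), False, retention, now))  # expired
print(explain_missing(now - timedelta(days=1), False, retention, now))   # should_be_present
```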

In CubeAPM, sampling decisions remain inside the customer environment. This allows teams to audit and tune sampling behavior without relying on vendor-side black boxes.

Infrastructure-level observability becomes mandatory

Growth-stage and enterprise systems depend heavily on infrastructure primitives. Kubernetes scheduling behavior, Kafka consumer lag, queue backpressure, and resource contention often explain application symptoms long before code changes do.

Observability platforms evaluated at this stage need to treat these signals as first-class data, not add-ons.

Running the observability backend closer to the infrastructure makes correlation easier and reduces blind spots. CubeAPM’s architecture is designed to operate within customer-controlled infrastructure, which supports this model.

Why this category is evaluated later, not early

Platforms like CubeAPM are rarely chosen during early-stage tooling decisions. They are usually considered during broader reviews that include:

- Deployment and hosting strategy

- Cost control and budgeting models

- Ownership boundaries between app teams, platform teams, and security

In other words, the evaluation is architectural, not feature-driven. Teams are deciding how much control they need, where responsibility should live, and how observability should behave under failure.

In that context, CubeAPM tends to be assessed alongside those structural questions. The platform choice follows the operating model, not the other way around.

When to Re-Evaluate Your Observability Platform

Re-evaluation rarely starts as a strategic exercise. Teams feel it during incidents, finance feels it in invoices, and security feels it during audits. The mistake is waiting until all three escalate at once.

Engineering triggers that signal misalignment

The 2024 Google DORA report shows that high-performing teams are not defined by fewer incidents, but by faster recovery. Observability quality directly affects that outcome.

Recurring blind spots are the clearest sign.

- Incidents happen, but teams cannot answer basic questions quickly.

- Traces are incomplete. Logs exist, but lack context. Metrics point to symptoms, not causes.

- Slow incident resolution follows. Mean time to resolution increases even as tooling volume grows.

- Engineers spend more time filtering noise, re-running queries, or arguing about missing data than fixing the problem itself.

Eventually, teams stop opening the observability tool first. They fall back to ad-hoc logging, SSH access, or guesswork. Trust erodes quietly. This means the platform is no longer doing its job.

Business triggers that force the conversation

- Cost volatility is often the first non-engineering trigger. Bills fluctuate even when traffic looks stable. Incidents generate financial surprises. Finance teams ask for explanations that engineering cannot easily provide.

- Compliance pressure arrives next. Questions about data residency, access controls, and retention turn into requirements. Teams realize observability data is production data with legal implications.

At this point, observability becomes a cross-functional issue involving finance, security, and leadership.

Why re-evaluation is usually cheaper earlier than later

Early re-evaluation limits damage.

- When systems are smaller, switching cost is manageable. Instrumentation changes are limited. Dashboards and alerts can be rebuilt without disrupting dozens of teams. Historical data loss is inconvenient, not catastrophic.

- Later, switching becomes a program. Data gravity sets in. Teams depend on existing workflows. Parallel systems run longer than expected. Migration risk increases.

The key insight is timing. Re-evaluation does not mean immediate replacement. It means validating whether the platform still matches the system’s stage. Doing that earlier gives teams options. Waiting removes them.

Observability platforms should evolve with the system. When they stop doing that, the cost of staying put exceeds the cost of reassessing.

Conclusion

In observability decisions, what looks convenient early often locks in assumptions you later regret. Pricing models, data limits, and hidden defaults turn into constraints once traffic grows and incidents get real.

Good teams choose platforms by how they behave under pressure, not by their feature lists: platforms where cost stays predictable, control stays with the team, and ownership is clear. That choice matters more than any dashboard you ship in week one.

If you’re evaluating observability today, do it with tomorrow in mind. Map how your system, team, and data will look a year from now, not just what hurts this quarter. The best time to make a calm, intentional platform decision is before growth forces your hand.

Disclaimer: The information in this article reflects the latest details available at the time of publication and may change as technologies and products evolve.

FAQs

1. How does the right observability platform change as a company grows?

Early on, teams need fast setup and basic visibility. As systems scale, the observability platform must handle higher data volume, more services, and more people using it. At later stages, cost behavior, control over data, and operational ownership matter more than ease of onboarding.

2. When should a team consider switching observability platforms?

Teams usually revisit their observability platform when costs become unpredictable, investigations feel incomplete, or platform limits force trade-offs during incidents. Growth in traffic, microservices, or compliance requirements is a common trigger to re-evaluate.

3. What matters more than features when choosing an observability platform?

How the platform behaves under load matters more than its feature list. Pricing mechanics, sampling and retention controls, and who owns the infrastructure all have long-term impacts. These factors shape reliability and cost far more than individual dashboards or alerts.

4. Is a SaaS or self-hosted observability platform better at scale?

Neither is universally better. SaaS platforms optimize for speed and convenience early on. Self-hosted or hybrid models often offer more control and predictability as data volume and governance needs grow. The right choice depends on team maturity and operational goals.

5. How can teams future-proof an observability platform decision?

Teams should evaluate how the platform scales in cost, control, and ownership, not just whether it meets current needs. Understanding data growth patterns, sampling flexibility, and exit complexity helps avoid painful migrations later as the system matures.