Graylog is best for centralized log management and SIEM-style investigations. Splunk Observability Cloud fits enterprises that need fully managed, full-stack observability. CubeAPM is better for teams that want unified, OpenTelemetry-native observability with predictable ingestion-based pricing.

Platform selection has moved well beyond feature checklists. Teams evaluating log management and observability in 2026 are asking harder questions: Who owns the data? What happens to the bill when traffic spikes? Does the platform support OpenTelemetry natively at scale? Can we retain logs for compliance without surprise bills?

This guide compares three distinct platforms, Graylog, Splunk Observability Cloud, and CubeAPM, across architecture philosophy, MELT signal coverage, pricing behavior, sampling strategies, data retention, and real-world operational fit. The goal is to give engineering teams and platform architects an honest, vendor-neutral basis for evaluation rather than a marketing comparison.

Quick Comparison: Graylog vs Splunk Observability Cloud vs CubeAPM

| Dimension | Graylog | Splunk Observability Cloud | CubeAPM |
|---|---|---|---|
| Primary Focus | Log management & SIEM | Full-stack SaaS observability (APM, infra, RUM) | Unified MELT observability, OpenTelemetry-native |
| Deployment | Self-hosted, cloud, or hybrid | SaaS only | Self-hosted (vendor-managed ops) |
| Pricing Model | Plan-based / quote-based pricing | Host-based, plus activity-based add-ons for products like RUM and synthetics | Ingestion-based ($0.15/GB), no per-user fees |
| MELT Coverage | Strong log management | Full MELT | Full MELT |
| OpenTelemetry | Supports OTel log ingest | Strong OTel support | Fully OTel-native |
| Data Ownership | Customer-controlled | Vendor-managed SaaS | Customer retains data in own environment |
| Retention | Configurable; new index sets default to a 30–40 day window | Raw traces: 8 days; spans: 8 days (defaults) | Unlimited on Enterprise (3 months on Pro) |
| Sampling | Stream rules, pipelines, and pre-index filtering/routing | Head-based and tail-based | Context-aware smart sampling |
| Best For | Log-centric ops, SIEM compliance | Fully managed SaaS, large enterprise | OTel-native teams needing cost control & data ownership |

How We Evaluated These Platforms

To keep this comparison grounded and reproducible, the platforms were evaluated against a consistent set of technical and commercial assumptions.

Test Architecture Assumptions

- Kubernetes-based microservices architecture

- JVM + Node.js services with distributed tracing enabled

- Centralized log ingestion from multiple sources

- Team models of 30, 125, and 250 engineers

Telemetry Assumptions

- Logs: 250–1,500 GB/month (scaled by team size)

- Traces: 20–200M spans/month

- Metrics: Standard infrastructure + application metrics

- Retention: 30–90 days baseline for cost modeling

This comparison focuses on architectural design and pricing behavior at scale. It does not evaluate entry-level free-tier experiences in isolation, since most of the meaningful cost and coverage differences emerge under real production workloads.

Architecture Philosophy

Deployment Model and Data Control

The most structurally important difference between these three platforms is not feature coverage; it is where the data lives and who controls the operational environment.

| Dimension | Graylog | Splunk Observability Cloud | CubeAPM |
|---|---|---|---|
| Deployment | Self-hosted, hybrid, or cloud (AWS) | SaaS only (cloud) | Self-hosted in customer environment, vendor-managed ops |
| Data location | Customer-controlled infra or Graylog Cloud | Splunk’s managed cloud regions | Inside customer’s own cloud or on-prem |
| Operational ownership | Customer manages self-hosted; Graylog manages cloud | Fully vendor-managed | Vendor manages ops; customer owns data and infra |
| Self-hosted option | Yes (Open and Enterprise) | No | Yes |
| Compliance readiness | Strong; full data control when self-hosted | Vendor-managed; compliance certifications available | Strong; data never leaves the customer boundary |

For teams in regulated industries like healthcare, financial services, and government, the ability to keep telemetry data inside a defined perimeter is often not a preference but a requirement. CubeAPM and Graylog both support this. Splunk Observability Cloud, as a SaaS-only platform, handles compliance through its own certifications and data residency regions rather than through customer-controlled infrastructure.

Feature Evaluation

Core Focus

CubeAPM is built for teams that want full-stack observability without giving up control over where their telemetry data lives. Its core strength is bringing metrics, events, logs, and traces together in one OpenTelemetry-native platform while running inside the customer’s own cloud or on-prem environment. That makes it a strong fit for teams that care about unified MELT visibility, predictable ingestion-based pricing, and stronger data ownership as systems scale.

Splunk Observability Cloud is built for teams that want a fully managed SaaS observability platform with broad visibility across modern applications and infrastructure. Its core strength is full-stack monitoring across metrics, traces, infrastructure, real user monitoring, and synthetic monitoring, all delivered through a vendor-operated platform with deep analytics and service-level investigation tools. It is a strong fit for enterprises that want rich observability features and minimal platform management overhead.

Graylog is built primarily for centralized log management and SIEM-driven operations. Its core strength is collecting, processing, searching, and analyzing large volumes of log data for operational troubleshooting, security monitoring, and compliance workflows. It is a strong fit for teams whose main priority is log visibility and investigation rather than full-stack application observability with native tracing.

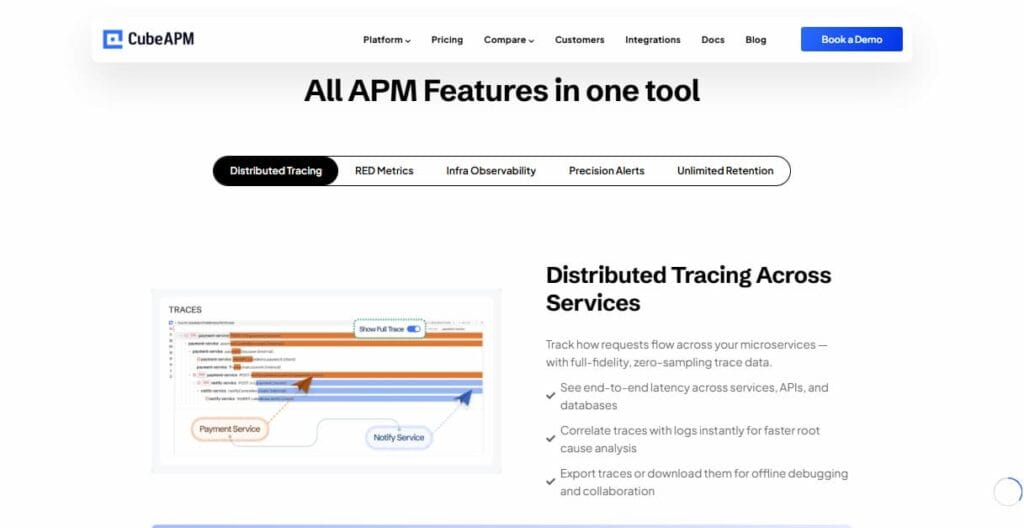

MELT Coverage

CubeAPM provides full MELT coverage across metrics, events, logs, and traces, with correlation built into the same platform. Its strength is not just collecting all four signals, but letting teams investigate them together inside their own environment without sending data to an external SaaS backend.

Graylog is strongest on the log side of observability. It gives teams powerful log ingestion, search, enrichment, alerting, and security analysis, but it is not a full native MELT platform in the same way as Splunk Observability Cloud or CubeAPM because distributed tracing and broader cross-signal observability are not its main design center.

Splunk Observability Cloud also delivers full MELT coverage, with strong support for metrics, traces, infrastructure monitoring, and connected log workflows, plus service maps and investigation tools. Its main advantage is broad full-stack visibility in a fully managed SaaS model built for enterprise-scale operations.

Sampling Strategy

CubeAPM implements context-aware smart sampling natively. The platform automatically prioritizes retaining anomalous traces, high-latency spans, and error-path traces, and samples healthy request flows more aggressively. As a result, the data that matters most for debugging is disproportionately retained while only a fraction of routine baseline traffic is kept, reducing ingestion costs without sacrificing visibility into rare production failures.
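To make the prioritization concrete, here is a minimal keep/drop sketch in Python. It is not CubeAPM's implementation: the `TraceSummary` type, the 1,000 ms latency threshold, and the 10% baseline rate are hypothetical, and the sketch assumes the decision is made once a trace's duration and error status are known.

```python
import random
from dataclasses import dataclass
from typing import Optional


@dataclass
class TraceSummary:
    """Hypothetical per-trace summary, available once the trace is complete."""
    trace_id: str
    duration_ms: float
    has_error: bool


def keep_trace(trace: TraceSummary,
               latency_threshold_ms: float = 1000.0,
               baseline_rate: float = 0.1,
               rng: Optional[random.Random] = None) -> bool:
    """Priority-based keep/drop decision (illustrative assumptions only)."""
    # Error-path traces are rare and high value: always keep them.
    if trace.has_error:
        return True
    # Slow traces at or above the threshold: always keep them.
    if trace.duration_ms >= latency_threshold_ms:
        return True
    # Healthy traffic: keep only a random baseline fraction.
    rng = rng or random.Random()
    return rng.random() < baseline_rate


# Usage: 10,000 healthy traces, 10 slow ones, 5 with errors.
rng = random.Random(42)
traces = (
    [TraceSummary(f"ok-{i}", 120.0, False) for i in range(10_000)]
    + [TraceSummary(f"slow-{i}", 2_000.0, False) for i in range(10)]
    + [TraceSummary(f"err-{i}", 150.0, True) for i in range(5)]
)
kept = [t for t in traces if keep_trace(t, rng=rng)]
```

All 15 slow or failing traces survive by construction, while roughly 10% of the healthy ones do, which is the asymmetry the paragraph above describes.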

Graylog uses rule-based filtering and routing at the pipeline level. Teams can configure pipeline rules to route logs to active (indexed) storage, warm storage tiers, or the data lake based on source, severity, or content. This is not trace-aware sampling but log-level routing: useful for controlling log volume costs, but not applicable to distributed tracing workloads.
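The routing idea can be sketched as an ordered list of rules where the first match picks a storage tier. This is plain Python rather than Graylog's pipeline rule language, and the tier names, severity labels, and individual rules are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class LogMessage:
    source: str
    severity: str  # e.g. "DEBUG", "INFO", "WARN", "ERROR"
    message: str


# Ordered (predicate, tier) pairs; the first matching rule wins.
RULES: List[Tuple[Callable[[LogMessage], bool], str]] = [
    (lambda m: m.severity in ("ERROR", "WARN"), "active"),        # keep searchable
    (lambda m: m.source.startswith("auth"), "active"),            # security-relevant
    (lambda m: "healthcheck" in m.message.lower(), "data_lake"),  # low value, archive
    (lambda m: m.severity == "DEBUG", "data_lake"),
]
DEFAULT_TIER = "warm"


def route(msg: LogMessage) -> str:
    """Return the storage tier for one message based on source, severity, or content."""
    for predicate, tier in RULES:
        if predicate(msg):
            return tier
    return DEFAULT_TIER
```

No rule looks at trace context: a slow request whose logs are all clean INFO lines lands in the same tier as any other, which is why this counts as routing rather than trace-aware sampling.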

Splunk Observability Cloud uses head-based sampling by default, where the sampling decision is made at the entry point of a trace. Teams can configure the optional Splunk Distribution of the OpenTelemetry Collector to apply tail-based sampling pipelines, where the decision is deferred until the complete trace is visible, allowing late-arriving error signals to influence what gets retained.
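The difference between the two decision points can be sketched in a few lines of Python. `head_sample` decides from the trace ID alone, similar in spirit to ratio-based samplers in OpenTelemetry SDKs, while `TailSampler` buffers spans and decides only once the trace is complete. Both are illustrative: the SHA-256 hashing, the explicit `finish` call, and the span dictionaries are assumptions. Real tail-sampling collectors typically wait a configurable time window instead (the OpenTelemetry Collector's tail sampling processor calls this `decision_wait`).

```python
import hashlib
from collections import defaultdict
from typing import Dict, List


def head_sample(trace_id: str, ratio: float) -> bool:
    """Head-based: decide at the root span using only the trace ID.

    Hashing makes the verdict deterministic, so every service that sees
    the same trace ID reaches the same decision without coordination.
    """
    digest = hashlib.sha256(trace_id.encode("utf-8")).digest()
    value = int.from_bytes(digest[:8], "big")  # uniform in [0, 2**64)
    return value < int(ratio * (1 << 64))


class TailSampler:
    """Tail-based: buffer spans per trace, then decide once the trace is complete."""

    def __init__(self, latency_threshold_ms: float) -> None:
        self.latency_threshold_ms = latency_threshold_ms
        self.buffer: Dict[str, List[dict]] = defaultdict(list)

    def add_span(self, trace_id: str, span: dict) -> None:
        self.buffer[trace_id].append(span)

    def finish(self, trace_id: str) -> bool:
        spans = self.buffer.pop(trace_id, [])
        # An error or slow span anywhere in the trace, even one that arrived
        # late, can still flip the verdict to "keep".
        return any(
            s.get("error", False) or s.get("duration_ms", 0.0) >= self.latency_threshold_ms
            for s in spans
        )


# Usage: the fast span arrives first; the slow database span arrives later.
sampler = TailSampler(latency_threshold_ms=1000.0)
sampler.add_span("t1", {"name": "payment.authorize", "duration_ms": 90.0})
sampler.add_span("t1", {"name": "pg.query", "duration_ms": 1820.0})
keep_t1 = sampler.finish("t1")
```

A head sampler would have had to commit before `pg.query` was ever seen; the tail sampler keeps the trace because of it.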

Real-World Debugging Scenario: Intermittent Checkout Latency

A payment service is intermittently spiking from 120ms to 2 seconds during peak traffic. The team receives an alert and begins an investigation.

Using CubeAPM: Engineers trace the latency spike to a slow database query

Smart sampling retained the slow-path traces in full fidelity while the healthy baseline was sampled. The trace view shows the exact database span (pg.query on orders table, 1.82 seconds) with full span attributes. The engineer pivots directly from the trace to correlated infrastructure metrics (CPU throttling on the database pod in Kubernetes) and correlated application logs (connection pool exhaustion warning from the ORM layer), all within the same platform, with no data crossing an external boundary. The self-hosted deployment means historical trace and log data beyond 30 days is available for trending analysis without additional retention charges.
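The trace-to-logs pivot described above comes down to two steps: find the span that contributed the most time, then pull log records that share its trace ID. The sketch below uses hypothetical in-memory `Span` and `LogRecord` types rather than any CubeAPM API, and it approximates a span's self time as its duration minus its direct children's durations, which assumes the children run sequentially.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Span:
    trace_id: str
    span_id: str
    parent_id: Optional[str]
    name: str
    duration_ms: float


@dataclass
class LogRecord:
    trace_id: str
    level: str
    message: str


def self_times(spans: List[Span]) -> Dict[str, float]:
    """Duration minus direct children (assumes children do not overlap)."""
    child_total: Dict[str, float] = defaultdict(float)
    for s in spans:
        if s.parent_id is not None:
            child_total[s.parent_id] += s.duration_ms
    return {s.span_id: s.duration_ms - child_total[s.span_id] for s in spans}


def dominant_span(spans: List[Span]) -> Span:
    """Span with the largest self time, i.e. where the request actually waited."""
    times = self_times(spans)
    return max(spans, key=lambda s: times[s.span_id])


def correlated_logs(logs: List[LogRecord], trace_id: str) -> List[LogRecord]:
    """Log records emitted under the same trace ID."""
    return [r for r in logs if r.trace_id == trace_id]


# Usage with illustrative data shaped like the checkout incident.
spans = [
    Span("t1", "a", None, "POST /checkout", 2000.0),
    Span("t1", "b", "a", "pg.query orders", 1820.0),
    Span("t1", "c", "a", "payment.authorize", 90.0),
]
logs = [
    LogRecord("t1", "WARN", "connection pool exhausted"),
    LogRecord("t2", "INFO", "order placed"),
]
culprit = dominant_span(spans)
related = correlated_logs(logs, culprit.trace_id)
```

Using self time rather than raw duration matters here: the root span is the longest, but almost all of its time is spent waiting on `pg.query orders`.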

Using Graylog: Logs reveal warning patterns, but not the exact delayed span

The investigation begins in the log search interface. The engineer filters logs by service name and time window, looking for error patterns or anomalous messages correlated with the latency spike. Structured log parsing through Graylog’s pipeline processor extracts relevant fields for filtering. The team can identify error messages and transaction patterns in logs, but trace-level span data (the specific downstream call that introduced the delay) is not available natively. A separate APM tool would need to be queried to identify the slow span, and the cross-tool context switching lengthens the investigation.
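A log-only investigation like this can be approximated as field extraction followed by filtering. The sketch below parses `key=value` lines and filters by service and time window. It is plain Python, not Graylog's pipeline processor or search syntax, and the log format and field names (`ts`, `service`, `level`, `msg`) are assumptions.

```python
import re
from datetime import datetime
from typing import Dict, Iterable, Iterator

# key=value pairs; values are either "quoted strings" or runs of non-space chars.
KV_PATTERN = re.compile(r'(\w+)=("[^"]*"|\S+)')


def parse_kv(line: str) -> Dict[str, str]:
    """Extract structured fields from one log line, stripping surrounding quotes."""
    return {key: value.strip('"') for key, value in KV_PATTERN.findall(line)}


def search(lines: Iterable[str], service: str,
           start: datetime, end: datetime) -> Iterator[Dict[str, str]]:
    """Yield parsed records for one service whose timestamp falls in [start, end)."""
    for line in lines:
        fields = parse_kv(line)
        if fields.get("service") != service or "ts" not in fields:
            continue
        ts = datetime.fromisoformat(fields["ts"])
        if start <= ts < end:
            yield fields


LINES = [
    'ts=2026-03-02T18:04:11+00:00 service=checkout level=WARN msg="connection pool exhausted"',
    'ts=2026-03-02T18:04:12+00:00 service=checkout level=INFO msg="order placed"',
    'ts=2026-03-02T18:04:12+00:00 service=inventory level=INFO msg="stock updated"',
    'ts=2026-03-02T19:30:00+00:00 service=checkout level=INFO msg="order placed"',
]
start = datetime.fromisoformat("2026-03-02T18:00:00+00:00")
end = datetime.fromisoformat("2026-03-02T18:15:00+00:00")
hits = list(search(LINES, "checkout", start, end))
```

The warning surfaces, but nothing in these records says which downstream call was slow; that span-level detail lives in trace data.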

Using Splunk Observability Cloud: Service maps and traces identify the slow database span

The service map in Splunk APM highlights the checkout service and shows downstream dependency health. The engineer drills into the trace explorer and identifies a slow database span averaging 1.8 seconds during the spike window. Log Observer Connect is used to pull correlated log data from Splunk Cloud for the affected time range. Infrastructure Monitoring shows the database host CPU was saturated at 95% during the incident window. This gives the engineer access to traces, infrastructure metrics, and connected log workflows from within Splunk Observability Cloud, even though the log data is accessed through Splunk platform integration rather than stored natively in the same observability backend.

All three platforms arrive at a resolution, but the investigation workflow, signal availability, and data ownership model differ meaningfully, especially for teams with compliance requirements, long retention needs, or SLA pressure to reduce MTTR.

Pricing Behavior at Scale

Pricing differences between observability platforms tend to be modest at low volumes and significant at scale. Understanding how each model behaves as telemetry grows is essential for total cost of ownership projections.

Modeled Cost Overview

Disclaimer: The following estimates use standardized telemetry assumptions (logs, metrics, traces) scaled by team size, 30–90 day retention baseline, and published or publicly documented pricing. Actual costs will vary by contract, usage patterns, and negotiated discounts.

| Team Size | CubeAPM (est.) | Splunk Observability Cloud (est.) | Graylog Enterprise (est.) |
|---|---|---|---|
| ~30 engineers | $2,080/month | $7,896/month | $3,200/month |
| ~125 engineers | $7,200/month | $25,990/month | $11,400/month |
| ~250 engineers | $15,200/month | $57,970/month | $28,600/month |

Note: Graylog Enterprise pricing is not fully public; estimates above reflect consumption-based active data indexing at scale. Actual Graylog pricing requires direct engagement with the sales team. Splunk Observability Cloud pricing reflects host-based subscriptions for APM + Infrastructure tiers.
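Dividing each column by the CubeAPM estimate makes the scaling behavior in the table easier to see. The figures below are copied from the modeled table and carry all of its caveats; the snippet only does the arithmetic.

```python
# Modeled monthly estimates (USD) from the table above, keyed by team size.
MODELED_COSTS = {
    30: {"CubeAPM": 2_080, "Splunk Observability Cloud": 7_896, "Graylog Enterprise": 3_200},
    125: {"CubeAPM": 7_200, "Splunk Observability Cloud": 25_990, "Graylog Enterprise": 11_400},
    250: {"CubeAPM": 15_200, "Splunk Observability Cloud": 57_970, "Graylog Enterprise": 28_600},
}


def ratios_vs(baseline: str) -> dict:
    """Each platform's estimate divided by the baseline platform's, per team size."""
    return {
        team: {name: round(cost / costs[baseline], 2) for name, cost in costs.items()}
        for team, costs in MODELED_COSTS.items()
    }


for team, row in ratios_vs("CubeAPM").items():
    print(f"~{team} engineers: Splunk {row['Splunk Observability Cloud']:.2f}x, "
          f"Graylog {row['Graylog Enterprise']:.2f}x")
```

On these modeled numbers, the Splunk estimate stays at roughly 3.6–3.8× the CubeAPM estimate across all three team sizes, while the Graylog multiple widens from about 1.5× at 30 engineers to about 1.9× at 250.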

Key Pricing Dynamics to Watch

- Splunk Observability Cloud: Host-based pricing compounds as host counts grow (for example, under Kubernetes autoscaling), and user seat licensing adds an additional dimension. Each host includes an allocation of 10–20 containers, with à la carte pricing for additional containers.

- Graylog: Cost aligns to indexed active data. Under what Graylog calls consumption-based pricing, the data lake tier lets teams archive lower-priority logs without consuming the active allotment, which acts as a cost control mechanism.

- CubeAPM: Flat per-GB pricing with no per-user, per-host, or per-module charges. All platform capabilities (APM, RUM, Synthetics, Infra, Logs, Traces) are included in the same price. Smart sampling reduces effective ingestion volume by up to 80% for trace data.
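The contrast between billing on hosts and billing on gigabytes shows up most clearly under autoscaling. The sketch below prices the same scale-out event both ways. Only the $0.15/GB rate and the up-to-80% trace reduction come from this article; the per-host price, the 10-container allowance (the low end of the 10–20 range), and the per-container overage are hypothetical placeholders, not Splunk list prices.

```python
PER_GB_USD = 0.15          # CubeAPM ingestion rate quoted in this article
MAX_TRACE_REDUCTION = 0.8  # "up to 80%" trace reduction from smart sampling


def ingestion_cost(log_gb: float, trace_gb: float, trace_reduction: float = 0.0) -> float:
    """Monthly cost under flat per-GB pricing; sampling shrinks billable trace volume."""
    if not 0.0 <= trace_reduction <= MAX_TRACE_REDUCTION:
        raise ValueError("trace_reduction must be between 0 and 0.8")
    billable_gb = log_gb + trace_gb * (1.0 - trace_reduction)
    return billable_gb * PER_GB_USD


def host_cost(hosts: int, containers: int, per_host_usd: float,
              included_per_host: int, per_extra_container_usd: float) -> float:
    """Monthly cost under host-based pricing with a per-host container allowance."""
    extra_containers = max(0, containers - hosts * included_per_host)
    return hosts * per_host_usd + extra_containers * per_extra_container_usd


# Scale-out from 20 to 40 hosts with hypothetical rates ($50/host, $1/extra container).
before = host_cost(20, 300, 50.0, 10, 1.0)
after = host_cost(40, 700, 50.0, 10, 1.0)
```

If the scale-out does not change how much telemetry is emitted, the per-GB bill stays flat while the host-based bill roughly doubles. In practice more hosts usually do emit more data, so both models grow, just along different axes.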

Data Retention

CubeAPM positions retention as a core architectural advantage. It offers unlimited data retention while also emphasizing full data control inside the customer’s environment. The significance of that model is that retention becomes primarily a storage-capacity decision inside infrastructure the customer already controls. For teams that need to revisit incidents months later, maintain longer audit windows, or avoid paying extra every time retention requirements grow, that can materially improve cost predictability.
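Because retention in this model is a storage-capacity decision, a back-of-the-envelope sizing calculation is a useful first check. The sketch assumes a 30-day month, steady daily ingest, a 10× compression ratio, and a single copy of the data; all of these are hypothetical, and real ratios depend heavily on the data and the storage engine.

```python
def retained_storage_gb(monthly_ingest_gb: float, retention_days: int,
                        compression_ratio: float = 10.0, replicas: int = 1) -> float:
    """Approximate on-disk footprint for a rolling retention window.

    Assumes a 30-day month, steady daily ingest, and a uniform compression ratio.
    """
    daily_gb = monthly_ingest_gb / 30.0
    return daily_gb * retention_days / compression_ratio * replicas


# 1,500 GB/month of logs (the upper bound of this article's log assumption),
# kept for 90 days versus one year.
for days in (90, 365):
    print(days, "days ->", round(retained_storage_gb(1_500, days)), "GB on disk")
```

Under these assumptions, a full year of logs at the top of the modeled range fits in under 2 TB on disk, which is why longer retention tends to be a capacity-planning question rather than a licensing one in a customer-hosted model.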

Graylog uses configurable retention rather than a single fixed platform-wide limit. Its current docs show new index sets defaulting to a Time Size Optimizing window of about 30–40 days, but teams can extend retention through archive and tiered storage strategies depending on deployment and plan. That makes Graylog more flexible than fixed-retention SaaS models, though a longer lookback still depends on how the environment is configured and managed.

Splunk Observability Cloud applies retention by data type, and those defaults can become a real design constraint when teams need to investigate issues over longer periods. Splunk documents raw traces for 8 days by default, traces of interest up to 13 months with extended retention, and Monitoring MetricSets for 13 months. In practice, that means long-term historical access is not just a technical setting but often a licensing and retention-tier decision, which can add cost when teams need to keep more high-value data for compliance, audit trails, or recurring incident analysis.
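A simple availability check makes the per-data-type constraint concrete. The retention values mirror the figures quoted above, with 13 months approximated as 390 days; the data-type keys are hypothetical labels, not Splunk configuration names, and extended trace retention is assumed to be licensed.

```python
# Retention windows quoted in this article, in days (13 months ~= 390 days here).
RETENTION_DAYS = {
    "raw_traces": 8,                     # default
    "traces_of_interest_extended": 390,  # requires extended retention
    "monitoring_metricsets": 390,
}


def still_available(data_type: str, age_days: int) -> bool:
    """True if data of this type and age is still inside its retention window."""
    return age_days <= RETENTION_DAYS[data_type]


# Revisiting an incident 30 days after it happened.
incident_age = 30
available = {dt: still_available(dt, incident_age) for dt in RETENTION_DAYS}
```

In this example, the raw traces for a month-old incident are gone, while MetricSets (and any traces of interest kept under extended retention) remain queryable.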

For teams running compliance-driven workflows such as SOC 2 audit logging, HIPAA transaction records, or GDPR data processing logs, the gap between short, data-type-specific SaaS retention windows and unlimited self-hosted retention is not a cost footnote; it is a fundamental architectural consideration.

Best-Fit Scenarios and Trade-offs

CubeAPM

Best for: Engineering teams running Kubernetes-based microservices who need full OpenTelemetry-native observability with stronger data control, predictable ingestion-based pricing, and deployment inside their own cloud or on-premises environment. It is especially relevant for teams that want unified MELT visibility without moving telemetry into an external SaaS platform.

- Strengths: Full MELT coverage including logs, metrics, traces, and infrastructure visibility; OpenTelemetry-native ingestion; smart sampling for lower-noise debugging; data remains in the customer environment; vendor-managed operations model.

- Limitations: Not suited for teams that want a fully vendor-hosted SaaS deployment. Strictly an observability platform; does not support cloud security management.

Graylog

Best for: Teams that need powerful, centralized log management with strong SIEM capabilities and the flexibility to self-host or deploy on-premises. Well-suited for IT operations teams, security analysts, and DevOps engineers whose primary operational signal is logs (syslog, Windows events, application logs, and network device logs) and who need structured alerting and security investigation workflows without the overhead of a full-stack observability suite.

- Strengths: Strong log search performance; SIEM capabilities through Graylog Security; active-data-oriented pricing model; flexible deployment across on-prem, hybrid, or cloud environments.

- Limitations: No native distributed tracing or APM; requires external tooling for trace-based debugging; more operational responsibility when self-hosted at scale.

Splunk Observability Cloud

Best for: Large enterprises that want a fully managed, vendor-operated full-stack observability platform with broad visibility across applications and infrastructure, AI-assisted troubleshooting, and minimal operational overhead. Teams already invested in Splunk Cloud Platform can also benefit from Log Observer Connect workflows that link log investigations to observability analysis without duplicating the whole troubleshooting experience across separate tools.

- Strengths: Full-stack observability across metrics, traces, logs, and events; strong service and infrastructure views; AI-assisted investigation workflows; fully managed SaaS delivery.

- Limitations: SaaS-only deployment model; cost can rise as host counts and product modules expand; log workflows may rely on connected Splunk platform integrations rather than a single self-hosted data layer.

Decision Framework

Teams evaluating these three platforms typically prioritize one of the following needs. The table below maps common requirements to the most likely architectural fit, along with the key trade-off to evaluate.

| Primary Priority | Likely Best Fit | Key Trade-off to Consider |
|---|---|---|
| Centralized log management + SIEM | Graylog | No native tracing, requires companion APM tool |
| Fully managed SaaS, minimal ops overhead | Splunk Observability Cloud | Cost scales with hosts and selected product tiers/modules |
| Full-stack observability + data ownership | CubeAPM | Not suited for teams looking for a fully vendor-hosted SaaS deployment |
| OpenTelemetry-native stack without re-instrumentation | CubeAPM or Splunk Observability Cloud | CubeAPM: customer-hosted deployment model. Splunk: SaaS-only platform model. |
| Out-of-box dashboards, broad integrations, enterprise support | Splunk Observability Cloud | Higher cost as host counts and selected observability products expand; less direct data control than customer-hosted options. |
| SIEM + security analytics | Graylog Security | Limited MELT coverage beyond logs |
| Predictable billing at scale, no per-user costs | CubeAPM | No cloud security management |

Conclusion

Graylog, Splunk Observability Cloud, and CubeAPM reflect three different priorities in observability.

Graylog is the strongest fit for teams focused on centralized log management and SIEM, especially when flexible deployment and active-data-oriented pricing matter most. The trade-off is that teams needing native tracing and deeper cross-signal correlation will likely need a companion APM tool.

Splunk Observability Cloud is best suited to organizations that want a fully managed SaaS observability platform with broad full-stack coverage and minimal operational overhead. The main trade-off is that cost can rise as host counts and selected observability products expand.

CubeAPM is best suited to teams that want OpenTelemetry-native observability, stronger data control, and predictable ingestion-based pricing inside their own environment. The trade-off is that it is not a SIEM platform and still requires customer-controlled infrastructure.

In practice, the decision comes down to three things: whether your priority is logs and security or full-stack observability, where your data needs to live, and how the pricing model behaves as telemetry volume grows.

Disclaimer: The information in this article reflects the latest details available at the time of publication and may change as technologies and products evolve.

FAQs

1. Is Graylog a full observability platform or just a log management tool?

Graylog is primarily a log management and SIEM platform. It is strong for log ingestion, search, alerting, and security workflows, but it is not a native full-stack observability platform with built-in tracing and APM like Splunk Observability Cloud or CubeAPM.

2. Can Splunk Observability Cloud be deployed on-premises?

No. Splunk Observability Cloud is a SaaS product. It can monitor on-prem and cloud systems through collectors and integrations, but the platform itself runs in Splunk’s managed cloud.

3. What makes CubeAPM different from Graylog and Splunk Observability Cloud?

CubeAPM stands out for combining OpenTelemetry-native observability, deployment inside the customer’s own environment, and ingestion-based pricing. Compared with Graylog, it adds full MELT observability. Compared with Splunk Observability Cloud, it emphasizes stronger data control and customer-hosted deployment.

4. How does OpenTelemetry support compare across these platforms?

CubeAPM is positioned as OpenTelemetry-native. Splunk Observability Cloud has strong OpenTelemetry support through OTLP and the Splunk Distribution of the OpenTelemetry Collector. Graylog supports OpenTelemetry log ingest, but its core design remains log-centric rather than full OTel-native MELT observability.

5. Which platform handles data retention better for compliance use cases?

For customer-controlled retention, Graylog and CubeAPM are the stronger fits. Graylog lets teams manage retention and archive behavior in self-hosted deployments, while CubeAPM’s pricing shows 3 months of retention on Pro and unlimited retention on Enterprise. Splunk Observability Cloud uses data-type-specific retention, such as 8 days for raw traces and longer periods for some retained datasets.

6. Is Graylog really free?

Partly. Graylog Open is free and source-available, and Graylog Small Business is free up to 2 GB/day. Graylog Enterprise and Graylog Security are paid products.

7. What is the best platform for Kubernetes-based microservices?

If you need full-stack observability for Kubernetes-based microservices, Splunk Observability Cloud and CubeAPM are the stronger fits. Graylog works well for Kubernetes log visibility, but it is not built as a native tracing-first Kubernetes observability platform.