Coralogix has become one of the most frequently evaluated observability and log management platforms in 2026. Originally focused on log analytics, it has evolved into a full-stack observability platform covering logs, metrics, distributed traces, security (SIEM/CSPM), and AI telemetry monitoring, all under a single usage-based pricing model with no per-user or per-host fees.

This Coralogix pricing review provides an independent, vendor-neutral analysis of the platform in 2026, covering its current pricing structure, core product capabilities, real-world cost scenarios, verified user sentiment, and the alternatives that deserve evaluation before any commitment.

If you want to model your current Coralogix bill before reading further, the Coralogix pricing calculator breaks down every cost dimension: data ingest, log/trace/metric splits, AI evaluation costs, and data transfer costs that most teams overlook.

What Is Coralogix?

Platform Overview

Coralogix was founded in 2015 and has grown from a log analytics tool into a full-stack observability platform. Its core architectural differentiator is an in-stream analysis model: data is processed as it is ingested through a Kafka Streams pipeline before being indexed. This allows alerting, ML-based log clustering, anomaly detection, and metric extraction to run on data that may never need to be stored in a high-cost search index, which is the foundational reason Coralogix can be priced lower than traditional index-first tools like Splunk.

Today, Coralogix covers:

- Log management and analytics

- Infrastructure monitoring (metrics)

- Distributed tracing (APM)

- Security information and event management (SIEM)

- Cloud Security Posture Management (CSPM)

- Real User Monitoring (RUM)

- AI observability and LLM evaluation

- Cost optimization via the TCO Optimizer

The platform serves three main audiences:

- Engineering teams at growth-stage and mid-market companies moving away from Datadog or Splunk due to cost

- Large enterprises that need infinite log retention without proportional cost growth

- Organizations with compliance requirements that need long-term archival at low cost

Key Features of Coralogix

Coralogix is a SaaS observability platform that stores telemetry in the customer’s cloud object storage. Its platform page says observability data is stored in the customer’s S3 bucket, supports remote index-free querying, and uses open standards such as OpenTelemetry and Prometheus.

Coralogix also supports OpenTelemetry for traces, logs, and metrics, with Kubernetes, ECS, Docker, and other deployment options through the OpenTelemetry Collector.

Coralogix processes telemetry in-stream before final storage decisions are made. Its docs say alerts are evaluated during the streaming process without requiring prior indexing, which lets teams alert on logs, metrics, and traces before deciding whether data should go to hot storage or lower-cost storage. This is one of Coralogix’s main architectural differentiators.
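To make the ordering concrete, here is a minimal conceptual sketch of alerting before storage. It is not Coralogix code: the function name, the record fields, the `too_many_errors` rule, and the hot/archive decision are all hypothetical and only illustrate that the alert check runs on the stream before any storage choice is made.

```python
from typing import Iterable, Iterator


def process_stream(records: Iterable[dict], error_threshold: int) -> Iterator[tuple[str, dict]]:
    """Evaluate an alert rule on each record as it arrives, then pick a storage tier."""
    error_count = 0
    alert_fired = False
    for record in records:
        # Step 1: alerting runs on the raw stream; nothing has been indexed yet.
        if record.get("severity") == "error":
            error_count += 1
        if error_count >= error_threshold and not alert_fired:
            alert_fired = True
            yield ("alert", {"rule": "too_many_errors", "count": error_count})
        # Step 2: only now decide where the record is stored (hypothetical rule).
        tier = "hot" if record.get("severity") == "error" else "archive"
        yield (tier, record)


sample = [
    {"severity": "info", "msg": "started"},
    {"severity": "error", "msg": "db timeout"},
    {"severity": "error", "msg": "db timeout"},
]
events = list(process_stream(sample, error_threshold=2))
print([kind for kind, _ in events])
```

In this toy model the alert fires even though the info-level record was sent to the cheaper tier, which is the property the in-stream design is meant to provide.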

Coralogix’s TCO Optimizer routes logs and traces based on business value. Teams can send data to High / Frequent Search, Medium / Monitoring, Low / Compliance, or block it at ingestion. High-priority data can use hot storage for faster queries, while Monitoring and Compliance data are stored in customer-owned S3 and queried with DataPrime.

Coralogix supports querying logs from the index or from an Amazon S3 archive. Its docs say archive queries support Lucene or DataPrime, which helps teams query older data without a separate rehydration workflow.

DataPrime is Coralogix’s own piped query language for filtering, transforming, and aggregating telemetry. Coralogix also supports Lucene and PromQL in dashboards and query workflows, and its Metrics API can act as a PromQL-compatible query data source for tools such as Grafana.

Coralogix positions itself as a cross-stack observability platform covering logs, metrics, traces, security, and AI observability. Its platform page also lists APM, service catalog, database monitoring, service maps, serverless APM, RUM, error tracking, session replay, infrastructure monitoring, and more.

Coralogix supports ML-powered anomaly alerts across logs, metrics, and traces. Its docs say alerts can adapt to system behavior and identify deviations from the norm, while alerts are processed in-stream before storage decisions are made.

Coralogix has expanded into AI observability through AI Center, which includes monitoring, evaluations, guardrails, and AI security posture management. Its pricing page lists AI telemetry at $1.50 per 1M tokens.

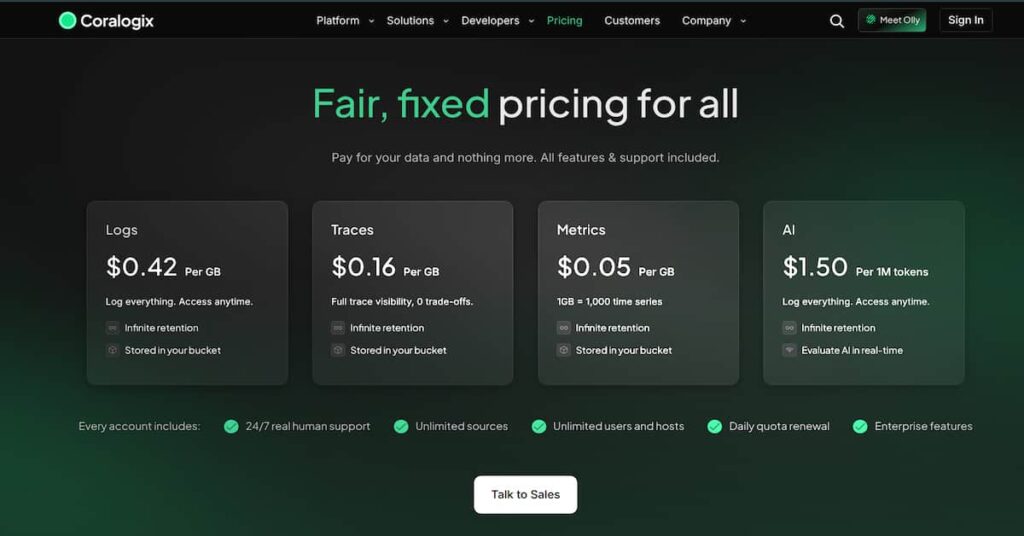

What Are Coralogix’s Pricing Options?

Coralogix uses a usage-based, per-GB pricing model. There are no per-user fees, no per-host fees, and no tiered feature gates; all features are included on every paid plan. Pricing is based on the volume of data ingested.

Published Per-GB Rates (2026)

| Data Type | Price | Notes |
|---|---|---|
| Logs | $0.42 / GB | Infinite retention; stored in your own bucket |
| Traces | $0.16 / GB | Full trace visibility; infinite retention |
| Metrics | $0.05 / GB | 1 GB ≈ 1,000 time series; infinite retention |
| AI Telemetry | $1.50 / 1M tokens | Real-time LLM evaluation and AI observability |
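Two rows in this table are not priced in plain gigabytes of raw data: metrics are described through a time-series equivalence, and AI telemetry is priced per token. The sketch below converts both into dollars using only the figures in the table. The function names are illustrative, and the sample inputs (100,000 series, 250 million tokens) are assumptions chosen for the example.

```python
# Published figures quoted in the table above (USD).
METRICS_USD_PER_GB = 0.05
SERIES_PER_GB = 1_000              # "1 GB ≈ 1,000 time series"
AI_USD_PER_MILLION_TOKENS = 1.50


def metrics_cost_from_series(time_series: int) -> float:
    """Approximate metrics cost for a given number of time series."""
    gb_equivalent = time_series / SERIES_PER_GB
    return round(gb_equivalent * METRICS_USD_PER_GB, 2)


def ai_telemetry_cost(tokens: int) -> float:
    """Cost of AI telemetry for a given number of tokens."""
    return round(tokens / 1_000_000 * AI_USD_PER_MILLION_TOKENS, 2)


print(metrics_cost_from_series(100_000))   # assumed: 100,000 time series
print(ai_telemetry_cost(250_000_000))      # assumed: 250M tokens
```

The time-series equivalence is approximate by the table's own wording, so treat the metrics figure as a rough planning number rather than a billing calculation.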

What is Included in Every Plan

Coralogix includes the following on all paid plans with no additional charge:

| Feature | Detail |
|---|---|
| 24/7 human support | Median 17-second response time; median 1-hour resolution time |
| Unlimited users | No per-seat fees for any team members |
| Unlimited hosts | No per-host charges at any scale |
| Unlimited data sources | Ingest from any number of sources |
| RBAC & SSO (SAML 2.0) | Enterprise identity management included |
| Audit trail & IP access control | Compliance and governance controls |
| SIEM & CSPM | Security monitoring included on the platform |
| TCO Optimizer | Built-in cost control tooling |
| Infinite retention | Data stored in your own S3 bucket; no extra retention fee |

What Does Coralogix Really Cost?

⚠️ Disclaimer

The scenarios below are directional editorial models, not official Coralogix quotes. They use assumed telemetry volumes, assumed log/trace/metric splits, and modeled effective rates based on public Coralogix pricing information. Actual costs can vary based on TCO Optimizer routing, pipeline priority, retention choices, S3 storage, usage patterns, enterprise discounts, support or services, and contract terms.

Coralogix’s published rates are simple, but the final bill is still highly usage-dependent. Costs change with telemetry volume, log and trace priority, retention choices, pipeline routing, and how well teams configure the TCO Optimizer.

| Team Size | Hosts | Data Volume |
|---|---|---|
| Small Team | 10 hosts | ~1 TB/month |
| Growing Team | 50 hosts | ~5 TB/month |
| Mid-Market | 250 hosts | ~27 TB/month |

Assumptions Used in the Cost Scenarios

- Data volumes are directional, not measured customer benchmarks.

- The model uses Coralogix public pricing information available at the time of writing.

- Annual billing assumptions are used where public pricing makes that distinction.

- Scenarios assume a 70:20:10 split across logs, traces, and metrics.

- The “optimized” case assumes some data is routed away from high-priority search through the TCO Optimizer.

- Scenarios do not include negotiated enterprise discounts, professional services, custom retention pricing, or customer-managed S3 storage costs.

- Final costs may be higher or lower depending on data mix, routing policy quality, retention, and contract terms.
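The assumptions above reduce to a small formula: split the total volume 70:20:10, then multiply each share by its public per-GB rate. The sketch below encodes that model so the scenario figures can be reproduced or rerun with different volumes. It assumes decimal terabytes (1 TB = 1,000 GB), which is what the scenario arithmetic uses. The function and constant names are illustrative.

```python
RATES_USD_PER_GB = {"logs": 0.42, "traces": 0.16, "metrics": 0.05}
SPLIT = {"logs": 0.70, "traces": 0.20, "metrics": 0.10}
GB_PER_TB = 1_000  # decimal TB, matching the scenario arithmetic


def estimate_monthly_cost(total_tb: float) -> dict[str, float]:
    """Return the per-signal and total monthly estimate for a given volume."""
    total_gb = total_tb * GB_PER_TB
    costs = {
        signal: round(total_gb * share * RATES_USD_PER_GB[signal], 2)
        for signal, share in SPLIT.items()
    }
    costs["total"] = round(sum(costs.values()), 2)
    return costs


for tb in (1, 5):
    print(tb, "TB/month:", estimate_monthly_cost(tb))
```

Changing `SPLIT` is the quickest way to test how sensitive the estimate is to the data mix, since logs carry a much higher rate than traces or metrics.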

Scenario 1: Small Team – 10 Hosts, ~1 TB/month

Situation: A small engineering team runs 10 hosts (application services, a database, and a cache layer). They generate approximately 1 TB/month of telemetry across logs, traces, and metrics. The team has 2–3 engineers sharing an informal on-call rotation. They want centralized log search, basic alerting, and uptime visibility without heavy operational overhead.

Why teams at this stage consider Coralogix

- They are already generating a meaningful volume of logs from containerized services.

- They need to search logs across multiple containers at the same time.

- Manual container-by-container log inspection has become too slow or impractical.

- They want one centralized place to investigate intermittent errors.

- They need faster troubleshooting when issues affect many services or containers.

- Coralogix becomes more useful when log volume and service complexity are high enough that basic logging tools no longer feel efficient.

Estimated profile:

| Configuration | Detail |
|---|---|
| Hosts | 10 hosts |
| Total telemetry volume | ~1 TB/month (700 GB logs, 200 GB traces, 100 GB metrics) |
| Pricing basis | Public Coralogix rates |
| Cost variable | Final cost may change based on pipeline routing, retention, and negotiated terms |

Estimated monthly cost:

Disclaimer: These are directional editorial estimates based on Coralogix’s public pricing. They are not official Coralogix quotes. Actual costs depend on pipeline allocation, data mix, retention, and negotiated terms.

| Component | Calculation | Monthly Cost |
|---|---|---|
| Logs (700 GB) | $0.42/GB × 700 GB | ~$294 |
| Traces (200 GB) | $0.16/GB × 200 GB | ~$32 |
| Metrics (100 GB) | $0.05/GB × 100 GB | ~$5 |
| Total Estimated Cost | Logs + traces + metrics | ~$331/month |

What this scenario shows

For a small team, Coralogix can be affordable when estimated using its public rates. The main thing to watch is pipeline routing. If more logs need high-priority search, costs can rise. If more data can stay in lower-cost monitoring or archive-style pipelines, the effective rate can stay lower.

Coralogix is most attractive here when the team already has enough log volume to justify a paid platform but still wants more cost control than traditional indexing-heavy observability tools.

Scenario 2: Growing Team – 50 Hosts, ~5 TB/month

Situation: A growing SaaS company runs about 50 hosts across application services, Kubernetes workloads, databases, and frontend systems. The team generates approximately 5 TB/month of telemetry across logs, traces, and metrics. A 4–5 person engineering team shares on-call duties, and the platform is expected to cover centralized logging, distributed tracing, alerting, and basic infrastructure dashboards.

Why teams at this stage consider Coralogix

At this scale, Coralogix becomes useful when log volume is large enough that traditional indexing-heavy platforms start feeling expensive. The TCO Optimizer also matters here because pipeline routing can affect the final bill.

Estimated profile:

| Configuration | Detail |
|---|---|
| Hosts | 50 hosts |
| Total telemetry volume | ~5 TB/month (3.5 TB logs, 1 TB traces, 500 GB metrics) |
| Pricing basis | Public Coralogix rates |
| Cost variable | Final cost may change based on pipeline routing, retention, usage patterns, and negotiated terms |

Estimated monthly cost:

Disclaimer: These are directional editorial estimates based on Coralogix’s public pricing. They are not official Coralogix quotes.

| Component | Calculation | Monthly Cost |
|---|---|---|
| Logs (3,500 GB) | $0.42/GB × 3,500 GB | ~$1,470 |
| Traces (1,000 GB) | $0.16/GB × 1,000 GB | ~$160 |
| Metrics (500 GB) | $0.05/GB × 500 GB | ~$25 |
| Total Estimated Cost | Logs + traces + metrics | ~$1,655/month |

What this scenario shows

For a growing team, Coralogix still looks manageable when estimated with its public rates. However, the bill scales with telemetry volume. Moving from 1 TB/month to 5 TB/month increases the estimate from about $331/month to about $1,655/month.
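Because the data mix is held constant across scenarios, the blended per-GB rate is identical at 1 TB and 5 TB, so the estimate scales linearly with volume. The short check below computes that blended rate from the scenario volumes. The helper name is illustrative, and the rates are the public figures used throughout this review.

```python
RATES = {"logs": 0.42, "traces": 0.16, "metrics": 0.05}


def blended_rate(volumes_gb: dict[str, float]) -> float:
    """Average USD per GB across all signals for a given volume mix."""
    total_cost = sum(gb * RATES[signal] for signal, gb in volumes_gb.items())
    return total_cost / sum(volumes_gb.values())


small = {"logs": 700, "traces": 200, "metrics": 100}
growing = {"logs": 3_500, "traces": 1_000, "metrics": 500}

print(round(blended_rate(small), 3))
print(round(blended_rate(growing), 3))
```

Both mixes work out to roughly $0.331/GB. A team whose mix shifts toward logs as it grows would see a higher blended rate than this model suggests.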

Scenario 3: Mid-Market Team – 250 Hosts, ~27 TB/month

Situation: A mid-market B2B SaaS company runs about 250 hosts across AWS and GCP, with Kubernetes workloads, multiple database clusters, backend microservices, and customer-facing web applications. The team generates approximately 27 TB/month of telemetry. Multiple on-call rotations are active, and the engineering organization needs unified dashboarding, distributed tracing, SIEM, and compliance-grade long-term log archival.

Why teams at this size consider Coralogix

At mid-market scale, observability spend becomes a serious budget item. Coralogix can be attractive because its public pricing is tied to data volume rather than host count or user seats. As the team grows from 50 to 250 hosts, the bill mainly depends on how much telemetry is ingested and retained.

Compliance-grade archival also becomes more important at this stage. Coralogix stores data in the customer’s own object storage and supports long-term retention, which can help teams keep older logs accessible without relying only on expensive hot indexing. Cloud storage costs still apply separately.

Estimated profile:

| Configuration | Detail |
|---|---|
| Hosts | 250 hosts |
| Total telemetry volume | ~27 TB/month (~19 TB logs, rounded up from 18.9 TB; 5.4 TB traces; 2.7 TB metrics) |
| Pricing basis | Public Coralogix rates |
| Cost variable | Final cost may change based on pipeline routing, usage patterns, egress costs, and negotiated terms |

Estimated monthly cost:

Disclaimer: These are directional editorial estimates based on Coralogix’s public pricing. They are not official Coralogix quotes. Actual costs depend on pipeline allocation, data mix, retention periods, and enterprise negotiations.

| Component | Calculation | Monthly Cost |
|---|---|---|
| Logs (19,000 GB) | $0.42/GB × 19,000 GB | ~$7,980 |
| Traces (5,400 GB) | $0.16/GB × 5,400 GB | ~$864 |
| Metrics (2,700 GB) | $0.05/GB × 2,700 GB | ~$135 |
| Total Estimated Cost | Logs + traces + metrics | ~$8,979/month |

What this scenario shows

At mid-market scale, Coralogix is no longer a small monthly tool expense. Even with public rates, the estimate rises from about $1,655/month at 5 TB/month to about $8,979/month at 27 TB/month.

Coralogix can be more predictable than platforms that also charge by host, user, or add-on module, but it is still usage-based: as telemetry volume increases, the bill increases too.
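One detail worth checking in this scenario is rounding. A strict 70:20:10 split of 27 TB gives 18.9 TB of logs, while the table uses 19 TB. The sketch below compares both inputs under the same public rates. The helper name is illustrative, and volumes are in decimal GB.

```python
RATES = {"logs": 0.42, "traces": 0.16, "metrics": 0.05}


def monthly_cost(volumes_gb: dict[str, float]) -> float:
    """Total monthly estimate in USD for the given per-signal volumes."""
    return round(sum(gb * RATES[signal] for signal, gb in volumes_gb.items()), 2)


exact_split = {"logs": 18_900, "traces": 5_400, "metrics": 2_700}
rounded_split = {"logs": 19_000, "traces": 5_400, "metrics": 2_700}

print(monthly_cost(exact_split))
print(monthly_cost(rounded_split))
```

The difference is about $42/month, well inside the uncertainty of a directional estimate, but it shows how directly log volume drives the total.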

What Actually Drives Coralogix Costs

Understanding Coralogix pricing means looking beyond the headline per-GB rate. The final bill usually depends on three main cost drivers.

Pipeline routing is one of the biggest cost-control levers in Coralogix. The TCO Optimizer lets teams route logs and traces based on business value, using High/Frequent Search, Medium/Monitoring, Low/Compliance, or Blocked outcomes. High-priority data can be kept in hot storage for faster investigation, while lower-priority or compliance data can be stored in customer-owned object storage and queried when needed.

Coralogix’s pricing page gives examples showing that 1.3 GB of Frequent Search logs at about $1.15/GB equals 1 unit, while 3 GB of Monitoring logs at about $0.50/GB also equals 1 unit. That shows why routing matters: the more data placed in higher-priority search pipelines, the higher the effective log cost becomes.
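The two examples can be turned into a quick routing model. The sketch below first derives the unit price implied by each example, then computes a blended log rate for a given share of Frequent Search traffic. It covers only the two tiers quoted above; the Compliance tier is left out because its rate is not given in that example. Function names are illustrative.

```python
# Figures quoted from the pricing-page examples above.
FREQUENT_SEARCH = {"gb_per_unit": 1.3, "usd_per_gb": 1.15}
MONITORING = {"gb_per_unit": 3.0, "usd_per_gb": 0.50}


def implied_unit_price(tier: dict[str, float]) -> float:
    """USD per unit implied by a tier's GB-per-unit and USD-per-GB figures."""
    return tier["gb_per_unit"] * tier["usd_per_gb"]


def effective_log_rate(frequent_share: float) -> float:
    """Blended USD/GB when `frequent_share` of logs goes to Frequent Search
    and the remainder goes to Monitoring."""
    if not 0.0 <= frequent_share <= 1.0:
        raise ValueError("frequent_share must be between 0 and 1")
    return (frequent_share * FREQUENT_SEARCH["usd_per_gb"]
            + (1.0 - frequent_share) * MONITORING["usd_per_gb"])


print(round(implied_unit_price(FREQUENT_SEARCH), 3))
print(round(implied_unit_price(MONITORING), 3))
print(round(effective_log_rate(0.2), 2))
```

Both examples imply a unit price of roughly $1.50, and routing 20% of logs to Frequent Search yields about $0.63/GB in this two-tier model, noticeably above the $0.42/GB headline rate used in the scenarios.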

Logs are usually the highest-volume telemetry type, so they have the biggest impact on the monthly bill. Coralogix’s pricing is still volume-based, even though units give teams flexibility across logs, metrics, and traces. Reducing noisy logs before ingestion, lowering non-production verbosity, and routing lower-value logs to cheaper pipelines can reduce spend directly.

Traces and metrics also affect the bill, but their public rates are lower than logs. Coralogix’s pricing page lists traces from $0.16/GB and metrics from $0.05/GB. Teams with high-throughput microservices should still manage trace volume carefully through sampling, because large trace volumes can add up quickly at scale.
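As a rough illustration of the sampling point, the sketch below applies a keep ratio to raw trace volume before pricing it at the public $0.16/GB rate. It assumes ingested trace size scales linearly with the share of traces kept, which is a simplification, and the 25% ratio is an arbitrary example rather than a recommendation.

```python
TRACE_USD_PER_GB = 0.16  # public rate quoted above


def trace_cost(raw_gb: float, keep_ratio: float) -> float:
    """Monthly trace cost after sampling keeps `keep_ratio` of the raw volume."""
    if not 0.0 <= keep_ratio <= 1.0:
        raise ValueError("keep_ratio must be between 0 and 1")
    return round(raw_gb * keep_ratio * TRACE_USD_PER_GB, 2)


print(trace_cost(5_400, 1.0))    # mid-market scenario volume, no sampling
print(trace_cost(5_400, 0.25))   # assumed 25% sampling
```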

Additional Costs and Operational Overhead Buyers Should Plan For

Coralogix supports customer-owned archive storage, including Amazon S3. These storage costs are separate from Coralogix platform pricing, so teams should include cloud storage charges when estimating long-term retention. Coralogix notes that archived logs and traces are compressed before being stored in S3, but the storage bill still depends on the customer’s cloud provider, retention period, and data volume.
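Archive storage is easy to underestimate because it accumulates: once the retention window is full, the bucket holds one month of compressed data for every month retained. The sketch below models that steady state. Every input is an assumption to be replaced with your own numbers; in particular, the compression ratio and the per-GB storage price in the example are illustrative placeholders, not figures from Coralogix or any cloud provider's price list.

```python
def archive_storage_cost(
    monthly_ingest_gb: float,
    retention_months: int,
    compression_ratio: float,
    usd_per_gb_month: float,
) -> float:
    """Steady-state monthly storage bill once the retention window is full."""
    if compression_ratio <= 0:
        raise ValueError("compression_ratio must be positive")
    stored_gb = monthly_ingest_gb / compression_ratio * retention_months
    return round(stored_gb * usd_per_gb_month, 2)


# Assumed inputs: 19,000 GB of logs per month, 12 months retained,
# 5:1 compression, and a placeholder storage price of $0.023 per GB-month.
print(archive_storage_cost(19_000, 12, 5.0, 0.023))
```

Doubling the retention period doubles the steady-state bill in this model, which is why "infinite retention" on the platform side still needs a budget line on the cloud side.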

Coralogix’s TCO Optimizer can help reduce costs by routing logs and traces into different priority levels based on business value. However, the savings depend on good policy design. Teams need to classify telemetry, set routing rules carefully, maintain rule order, and review routing behavior as applications change. If no policy matches, Coralogix routes the data to High/Frequent Search by default, which can increase costs if teams do not manage policies properly.
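The interaction between rule order and the default outcome can be sketched as a first-match evaluator. This is a simplified model, not the TCO Optimizer's actual implementation: the `Policy` class, the record fields, and the first-match behavior are assumptions for illustration. The one behavior taken from the text above is that unmatched data falls through to the high-priority outcome.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Policy:
    name: str
    matches: Callable[[dict], bool]
    priority: str  # "high", "medium", "low", or "block"


DEFAULT_PRIORITY = "high"  # unmatched data goes to High/Frequent Search


def route(record: dict, policies: list[Policy]) -> str:
    """Return the priority of the first matching policy (assumed first-match)."""
    for policy in policies:
        if policy.matches(record):
            return policy.priority
    return DEFAULT_PRIORITY


policies = [
    Policy("drop-debug", lambda r: r.get("severity") == "debug", "block"),
    Policy("audit-to-compliance", lambda r: r.get("subsystem") == "audit", "low"),
    Policy("staging-to-monitoring", lambda r: r.get("env") == "staging", "medium"),
]

print(route({"severity": "debug", "subsystem": "audit"}, policies))
print(route({"severity": "info", "subsystem": "checkout", "env": "prod"}, policies))
```

In this model the first record is blocked because the debug rule comes before the audit rule, and the second lands in the most expensive tier because nothing matches it. Both outcomes are the kind of behavior a routing review should catch.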

Coralogix supports SCIM for automated user and group provisioning, and SAML SSO setup paths for identity providers such as Okta, OneLogin, and Microsoft Entra ID/Azure AD. Buyers should verify provisioning behavior, group mapping, claims, and role assignment during implementation. This can add admin overhead in larger organizations with strict access-control requirements.

Cloud egress is not a Coralogix-specific platform fee, but it can still affect the total cost of ownership. If archived data is queried, exported, replicated, or moved across regions or clouds, the customer’s cloud provider may charge data transfer fees. Teams should check this separately based on where their archive storage lives and how often data is accessed or moved.

Coralogix User Reviews in 2026

Coralogix has strong scores on major B2B software review sites. Gartner Peer Insights lists Coralogix at 4.5/5 from 146 ratings, while TrustRadius shows 9.2/10 from 13 reviews and ratings. G2 lists Coralogix at 4.6 stars across its broader product listings, but review counts can vary by product page/category.

What users praise

Log searchability at scale

A TrustRadius reviewer from JioStar said Coralogix helped them search logs across more than 100 containers and quickly identify an intermittent issue that would have been impractical to check manually. This is a strong real-world example of Coralogix’s value for container-heavy environments.

Automatic log severity classification

The same TrustRadius reviewer said Coralogix could classify log severity even when the level was not externally specified. They said this helped catch errors that were logged as info-level messages in the application code.

Support quality

Users mention support positively across G2 and TrustRadius. One G2 reviewer praised quick chat support, while a TrustRadius reviewer described the support as effective and helpful.

Unified observability

Several reviews mention value from having logs, metrics, traces, alerts, and dashboards in one place. A G2 reviewer said Coralogix helps correlate logs, metrics, and traces and reduces time spent switching tools.

What users criticize

⚠️ Disclaimer

The following points reflect recurring themes from AWS Marketplace, G2, and TrustRadius review snippets and should be treated as user feedback, not universal platform limitations.

Steep learning curve

Some users mention that Coralogix takes time to learn. G2 shows feedback that advanced features like custom parsing pipelines, enrichment rules, and the REST API can have a steep learning curve.

Pricing complexity

AWS Marketplace reviewers note that Coralogix pricing can be tricky to understand because costs depend on ingestion, storage, and query frequency.

Retention and indexing trade-offs

AWS Marketplace feedback also points out that because Coralogix processes logs before indexing, teams need to plan data pipelines carefully. Some data may not be instantly searchable if it isn’t stored in a searchable index.

Summary Rating Breakdown (May 2026)

| Platform | Rating |
|---|---|
| TrustRadius | 9.2/10 from 13 verified reviews |
| Gartner Peer Insights | 4.5/5 from 146 ratings (Observability Platforms category) |
| Capterra | 5.0/5 from 1 review; insufficient sample for conclusions |
| G2 | 4.6/5 from 343 reviews |
| AWS Marketplace | 4.7/5 from 9 AWS customer reviews; plus 346 external reviews from G2 and PeerSpot, not included in the AWS star rating |

Coralogix Alternatives: How It Compares to Competitors

Coralogix vs. CubeAPM

Coralogix and CubeAPM fit different needs. Coralogix is stronger for cost-optimized log management at scale, with flexible pipeline-based pricing and a comprehensive SIEM/CSPM offering. CubeAPM is stronger for deep APM, OpenTelemetry-native observability, and most importantly, self-hosted deployment, where all telemetry remains inside the customer’s own infrastructure.

For organizations in regulated industries (finance, healthcare, and government) or with strict data residency requirements, CubeAPM’s on-premises model means no log, trace, or metric data leaves the company’s own cloud.

| Category | Coralogix | CubeAPM |
|---|---|---|
| Pricing model | Per-GB ingested | $0.15/GB ingested – flat rate |
| Deployment | SaaS | Self-hosted (vendor-managed) |
| APM depth | Strong APM + RUM + error tracking | Deeper APM with AI-based sampling |
| Log management | Core strength: TCO Optimizer | Strong, privacy-first (no data leaves your cloud) |
| Data residency | Your S3 bucket; SaaS control plane | Fully inside customer infrastructure |
| Best fit | Cost-optimized SaaS observability | Deep APM + strict data control |

Coralogix vs. Datadog

Datadog is one of the most feature-rich observability platforms, with strong APM, infrastructure monitoring, log management, RUM, synthetics, security, and a very large integration ecosystem. Datadog’s docs list more than 1,000 built-in integrations. Its pricing is modular, with separate billing units across products like infrastructure monitoring, APM, logs, RUM, synthetics, and more.

| Category | Coralogix | Datadog |
|---|---|---|
| Pricing model | Usage-based; no host/user fees | Per host + usage-based add-ons |
| Log management | Core strength; pipeline controls | Strong, separate log pricing |
| APM depth | Strong: Good trace visibility | Strong APM + profiling |
| Integrations | Good, OSS-friendly | 1,000+ integrations |
| Free tier | 14-day free trial | Free infrastructure tier |
| Best fit | Cost-focused log-heavy teams | Feature-first observability teams |

Coralogix vs. Grafana Cloud

Grafana Cloud is a strong choice for teams already invested in Grafana, Prometheus, Loki, Tempo, and the broader open-source observability stack. Its free tier is useful for small teams and testing, with 50 GB of logs, 50 GB of traces, 10k metrics, and 14-day retention listed in the Grafana docs.

Coralogix is stronger when the priority is managed log cost control, pipeline routing, and built-in security/observability features. Its TCO Optimizer routes logs and traces based on business value, and Coralogix says all customers get access to all platform features under its unit-based model.

| Category | Coralogix | Grafana Cloud |
|---|---|---|
| Free tier | 14-day free trial | Free tier with logs, traces, metrics |
| Cost optimization | TCO Optimizer | Usage controls, Adaptive Logs |
| SIEM/CSPM | Security features available | Usually needs separate tools |
| Query languages | DataPrime, Lucene, PromQL | LogQL, PromQL, TraceQL |
| Best fit | Log-heavy teams needing cost control | Teams already on Grafana OSS |

Coralogix vs. AWS CloudWatch

CloudWatch is the default choice for AWS-native teams because it is deeply integrated with AWS services. The tradeoff is pricing complexity: AWS lists separate CloudWatch charges for logs, metrics, dashboards, alarms, CloudWatch Logs Insights queries, Metric Streams, and other features.

| Category | Coralogix | AWS CloudWatch |
|---|---|---|
| Pricing complexity | Low: Units + pipeline controls | High: Many separate AWS charges |
| Multi-cloud support | AWS, GCP, Azure, on-prem | Mainly AWS-native |
| SIEM/CSPM | Security features available | Uses separate AWS security service |
| Support | 24/7 support | Depends on AWS support plan |
| Best fit | Multi-cloud logs + cost control | AWS-only monitoring needs |

Coralogix vs. New Relic

New Relic is strong for teams that want broad full-stack observability and a generous free tier, including 100 GB of free data ingest per month. Coralogix is usually a better fit when the main concern is log-heavy cost control, long-term retention in customer-owned storage, and pipeline-based routing through the TCO Optimizer.

| Category | Coralogix | New Relic |
|---|---|---|
| Pricing model | Usage-based + pipeline controls | Data ingest + users |
| Free tier | 14-day free trial | 100 GB/month free |
| Log management | Core strength, TCO Optimizer | Strong, unified platform |
| APM depth | Strong APM: Good traces + RUM | Strong APM + broad tooling |
| Data storage | Customer-owned bucket | New Relic cloud |
| Best fit | Log-heavy cost control | Full-stack observability teams |

Coralogix vs. Dynatrace

Dynatrace is a strong enterprise observability platform for deep APM, infrastructure monitoring, automation, log analytics, digital experience monitoring, and application security. Coralogix is usually a better fit for teams focused on high-volume logs, retention cost, and pipeline-based cost control, while Dynatrace fits buyers that need enterprise automation, deep APM, and AI-assisted root-cause analysis.

| Category | Coralogix | Dynatrace |
|---|---|---|
| Pricing model | Usage-based + pipeline controls | Modular consumption pricing |
| Log management | Core strength, TCO Optimizer | Strong Log Analytics |
| APM depth | Strong: Good trace visibility | Very strong APM + automation |
| AI/automation | Alerts + AI observability | Davis AI + root-cause analysis |
| Deployment fit | SaaS | Enterprise SaaS/hybrid options |
| Best fit | Cost-optimized log-heavy teams | Enterprise APM-led teams |

Is Coralogix the Right Choice?

When Coralogix works best

Coralogix works best for teams with meaningful log volume that are willing to manage routing policies. Its TCO Optimizer routes logs and traces based on business value, so teams can keep important data searchable while sending lower-value data to cheaper storage paths. This makes it a better fit for teams that actively manage telemetry, not teams that want to ingest everything without thinking about routing.

Coralogix is a good fit for teams running across AWS, GCP, Azure, Kubernetes, or on-premises environments. It supports OpenTelemetry for logs, metrics, and traces and stores observability data in the customer’s cloud object storage.

Coralogix publicly positions support as a strength. G2’s Coralogix listing mentions free, fast support with less than a 30-second response time and a 1-hour resolution time. Treat these figures as vendor claims rather than a guaranteed SLA unless they appear in the contract.

Coralogix now covers more than logs. Its platform page lists logs, metrics, traces, dashboards, in-stream alerting, RUM, infrastructure monitoring, service maps, and related observability features. G2 review summaries also show users praise broad monitoring coverage, while criticism often centers on the learning curve and setup complexity.

When Coralogix may not be the right fit

Coralogix is still mainly a SaaS-led platform, even though it emphasizes customer data control and customer-owned storage options. Teams that need the observability platform itself to run inside their own cloud or on-prem environment should compare it with self-hosted or BYOC options like CubeAPM.

Coralogix publishes pricing, but the unit model, pipeline priorities, and TCO Optimizer rules can still make planning harder. Buyers should model expected telemetry volume, routing split, retention, archive storage, and support needs before estimating total cost.

Conclusion

Coralogix is a strong fit for teams with high telemetry volumes that want better cost control than traditional indexing-heavy tools. Its strengths include usage-based pricing, no host or user-based billing, TCO Optimizer routing, customer cloud archive options, and broad coverage across logs, metrics, traces, security, RUM, infrastructure monitoring, and AI observability.

The main tradeoff is configuration effort. Coralogix is SaaS-managed, so teams do not operate the backend, but they still need to manage routing policies, priority levels, retention, and archive access patterns. The TCO Optimizer can reduce costs, but only when rules are designed and reviewed properly.

For buyers, the best next step is to request a TCO analysis using real log volume, trace volume, retention needs, and access patterns. If full self-hosting, stricter data control, or simpler flat per-GB pricing matters more, CubeAPM is worth comparing.

Disclaimer: This is an independent editorial review based on publicly available Coralogix documentation, pricing pages, and product materials, supplemented by user reviews from TrustRadius, Gartner Peer Insights, G2, AWS Marketplace, and Capterra at the time of writing (May 2026). Pricing, feature availability, and plan terms may change; readers should verify current details directly with Coralogix before making purchasing or implementation decisions.

FAQs

1. What is Coralogix’s pricing in 2026?

Coralogix uses usage-based pricing. Its public pricing lists logs at $0.42/GB, traces at $0.16/GB, metrics at $0.05/GB, and AI telemetry at $1.50 per 1M tokens. All features and support are included in the published model.

2. What is the TCO Optimizer?

The TCO Optimizer routes logs and traces based on business value. Teams define policies that send data to different outcomes, such as high-priority search, monitoring, compliance storage, or blocking.

3. Can I query archived data in Coralogix without rehydrating?

Yes. Coralogix Archive Query lets teams query logs directly from S3 archive using text, Lucene, or DataPrime syntax. Coralogix also says Remote Query works without rehydration or reindexing.

4. Does Coralogix support on-premises deployment?

No. Coralogix is SaaS-led, with data stored in customer-owned object storage, which is not the same as a fully self-hosted observability platform. For self-hosted deployments, teams can consider platforms like CubeAPM, SigNoz, or Grafana.

5. What data types does Coralogix support?

Coralogix supports logs, traces, metrics, and AI telemetry in its public pricing model. Its broader platform also covers observability and security use cases.