An enterprise observability strategy determines whether telemetry clarifies your systems or adds to the confusion. In Kubernetes and multi-cloud environments, operational scale raises the stakes. The CNCF Annual Survey reports that 96% of organizations use or evaluate Kubernetes, with most running it in production.

At enterprise scale, the challenge moves from collecting signals to controlling them. Hundreds of services can produce tens of millions of traces and billions of metrics each month. Without standardized instrumentation, defined ownership, and cost discipline, observability fragments across tools, teams, and budgets.

For CFOs, the priority is cost predictability. For CTOs, it is MTTR and service reliability. For compliance leaders, it is retention, auditability, and governance. Enterprise observability aligns engineering execution with business accountability.

This article presents a practical enterprise observability framework built for that complexity. It explains why enterprise observability matters, defines the principles that sustain it, outlines a reference architecture, and provides a disciplined step-by-step design approach.

What Is an Enterprise Observability Strategy?

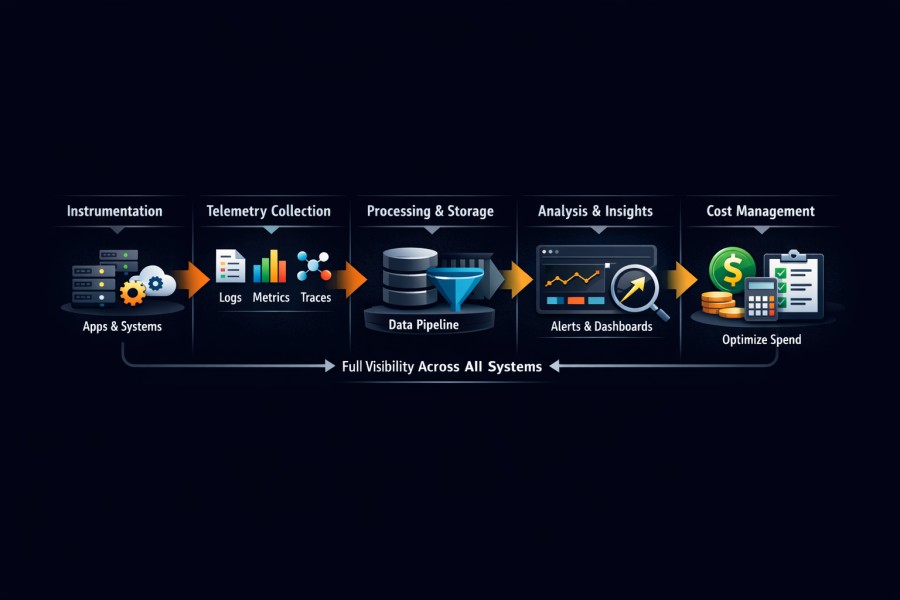

An enterprise observability strategy is the structured plan that governs how your organization instruments, collects, processes, stores, analyzes, and finances telemetry across every system it operates.

It defines how:

- Telemetry behaves as your architecture scales

- Teams work with that telemetry, and

- Leadership measures risk, reliability, and cost

Many enterprises run Kubernetes across multiple clusters, regions, and clouds. At that scale, observability complexity compounds quickly. Service meshes introduce new network layers. Microservices multiply trace volume. Auto-scaling changes metric cardinality by the hour. Multi-region deployments duplicate log streams.

An enterprise observability strategy exists to ease that complexity. It formalizes an enterprise observability framework and turns observability into an operating model. It also helps organizations expand telemetry under control. Because of this:

- Standardization comes before expansion.

- Governance exists from day one.

- Cost control is engineered into the pipeline, and not patched in after finance raises concerns.

This discipline separates companies that investigate incidents in minutes from those that burn hours chasing partial data.

Why a monitoring tool alone is not sufficient for enterprises

Monitoring platforms detect known conditions. But enterprise observability enables investigation of unknown failure modes across distributed systems. That shift demands structural decisions. In large environments, teams often deploy tools for:

- Logs

- Traces and alerts

- Metrics and dashboards

- OpenTelemetry collectors for instrumentation

Each component solves a technical problem. None of them alone defines an enterprise observability operating model.

An enterprise strategy answers deeper questions:

- What metadata must every service emit?

- How is service identity enforced across logs, metrics, and traces?

- Who owns telemetry quality for each domain?

- How do retention tiers align with compliance and cost objectives?

- How is the telemetry cost per service tracked and reviewed?

Without these decisions, growth leads to disorder. Tagging standards drift. Trace context fails to propagate. Metric series counts explode. Log ingestion doubles when a new region comes online.

Enterprise-level visibility requires centralized guardrails and federated accountability. Platform teams set standards. Domain teams own instrumentation quality. Governance spans the whole enterprise telemetry pipeline. That is the strategy. Choosing a monitoring tool comes afterward.

3 Outcomes an Enterprise Observability Strategy Must Deliver

An enterprise observability strategy succeeds only if it delivers measurable outcomes.

Faster Incident Resolution

Enterprises operating hundreds of microservices can easily generate:

- 1 million traces per day per major product domain

- Hundreds of millions of spans per month

- Billions of log lines across clusters

Without consistent service identity and context propagation, incident response slows. Engineers pivot between dashboards. They copy trace IDs manually and search logs with incomplete metadata.

A mature enterprise observability framework ensures that:

- Trace context propagates across service boundaries

- Logs, metrics, and traces align around a shared service model

- Investigation flows move from alert to root cause in a single workflow

Research from the 2023 Accelerate State of DevOps Report shows that high-performing organizations recover from incidents significantly faster than low-performing peers. Observability maturity is one of the differentiators.

Predictable and Controlled Cost

Telemetry growth rarely scales linearly. Metric storage can multiply several times over when label discipline is weak. Log ingestion doubles when multi-region duplication is enabled without filtering. Extending retention from 15 days to 90 days increases the storage footprint several times, depending on indexing and compression settings.

Cost discipline by design requires:

- Sampling aligned to service criticality

- Tiered retention across hot and cold storage

- Early filtering of low-value telemetry

- Continuous visibility into cost per service

An observability cost management model integrates engineering and finance.

- Platform teams monitor telemetry growth trends.

- The leadership team reviews the spend against reliability gains.

Cost control engineered into the enterprise telemetry pipeline creates predictability.

Clear Ownership and Accountability

Without ownership, telemetry quality degrades quickly. In multi-team environments:

- Tagging conventions drift over time

- Sampling policies diverge between teams

- Logging verbosity rises during incidents and never comes back down

An enterprise observability operating model defines:

- Which domain team owns service instrumentation

- Which centralized platform group enforces standards

- How governance rules apply across regions and clouds

- How executive dashboards translate telemetry into operational risk

Clear ownership prevents silent degradation. It also builds a shared culture across teams, because engineers understand how their instrumentation choices affect reliability and cost.

When these three outcomes align, observability becomes infrastructure for decision-making. It results in faster recovery, predictable spend, and accountable engineering. It supports CTO priorities around MTTR and SLO performance. Also, it gives CFOs cost visibility and provides compliance teams with retention and audit control.

Why Enterprise Observability Matters

Enterprise observability matters because scale amplifies every weakness in your systems. What worked for ten services fails at two hundred. What felt manageable in one region becomes chaotic across five. What looked affordable in a single cluster becomes a budget line item that finance questions every quarter.

Here are some of the reasons why enterprise observability is important:

Scale Changes the Rules

Scale introduces nonlinear growth in telemetry. Consider an enterprise scenario:

- 200 microservices

- Together they generate 1 million traces per day in each major domain

- That equals roughly 30 million traces per month per major domain

If each trace contains 20 spans on average, that number multiplies quickly. Storage, indexing, and query costs follow.
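
The arithmetic can be made explicit with a short sketch. It takes the domain-level reading of the scenario (1 million traces per day for one domain) and the 20-span average from the text; the 30-day month and the function name are assumptions made for illustration.

```python
# Back-of-the-envelope trace volume for one product domain.
TRACES_PER_DAY = 1_000_000   # figure from the scenario above
SPANS_PER_TRACE = 20         # average from the scenario above
DAYS_PER_MONTH = 30          # assumption: 30-day month


def monthly_volume(traces_per_day: int, spans_per_trace: int,
                   days: int = DAYS_PER_MONTH) -> tuple[int, int]:
    """Return (traces, spans) ingested per month before any sampling."""
    traces = traces_per_day * days
    return traces, traces * spans_per_trace


traces, spans = monthly_volume(TRACES_PER_DAY, SPANS_PER_TRACE)
print(f"{traces:,} traces and {spans:,} spans per month")
```

Under these assumptions a single domain stores about 600 million spans a month before any sampling is applied.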

High-cardinality metrics create similar effects. A single dynamic label, such as user ID, request ID, or session token, can increase metric series count several times over. Storage requirements can grow five times or more when label discipline is weak.
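
A rough way to see why one dynamic label matters is to multiply the distinct values of each label. The sketch below computes that worst-case upper bound for a single metric; the label names and counts are hypothetical, and real series counts are usually lower because not every combination occurs.

```python
from math import prod


def series_count(label_cardinalities: dict[str, int]) -> int:
    """Worst-case time series for one metric: product of distinct values per label."""
    return prod(label_cardinalities.values())


# Hypothetical label sets for a single request-latency metric.
bounded = {"service": 200, "environment": 3, "status_class": 5}
unbounded = {**bounded, "user_id": 10_000}  # one dynamic label added

print(series_count(bounded))
print(series_count(unbounded))
```

With these illustrative numbers, the bounded label set tops out at 3,000 series, while adding a user ID label raises the ceiling to 30 million.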

Multi-region deployments compound the issue. Logs often replicate across regions for resilience. Ingestion volume doubles when duplication is not filtered or deduplicated. Extending log retention from 15 days to 90 days increases the storage footprint. Depending on the indexing strategy and compression, organizations often see several times more storage consumption.

Kubernetes-based environments intensify these dynamics. Pods scale up and down quickly, labels change, and service endpoints shift. Ephemeral workloads generate bursts of logs and metrics that traditional monitoring models never anticipated.

Scale increases unpredictability. So, an enterprise observability strategy acknowledges this from the start. It designs the enterprise telemetry pipeline with sampling, filtering, and retention guardrails in place before growth accelerates.

Tool Sprawl Creates Blind Spots

Many large enterprises use multiple observability stacks. It is common to see:

- Kubernetes clusters exporting metrics to Prometheus

- Traces routed to observability platforms

- OpenTelemetry collectors bridging different backends

Each tool meets a specific need, but over time the estate fragments. Beyond the added operational complexity, fragmentation slows investigations. When something goes wrong, engineers switch between systems: metrics live in one place, logs need a different query interface, and traces sit in yet another backend. Alert rules differ by platform, and services handle context propagation differently.

The problem deepens when instrumentation is inconsistent. One team emits service tags for version and environment; another does not. Some services propagate trace IDs, but not all of them. Correlation breaks silently.

An enterprise observability framework keeps instrumentation standards consistent across every SaaS platform and tool running in the estate. By enforcing shared service identity and metadata conventions, it limits the effects of tool sprawl.

Cost Without Control Becomes a Risk

Telemetry cost doesn’t attract attention in early growth phases. It becomes visible when bills double year over year. Common drivers include:

- High-cardinality metrics with uncontrolled labels

- Trace volume spikes after microservice expansion

- Multi-region ingestion without filtering

- Retention inflation driven by compliance anxiety

For example, extending retention from 15 days to 90 days can multiply storage needs several times. Adding dynamic labels to metrics can increase the series count dramatically. Expanding trace sampling from 5% to 100% during peak traffic multiplies ingestion volume overnight.
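
The raw multipliers behind those examples are simple ratios. The sketch below assumes steady ingestion and ignores compression, indexing, and tiering, which is why real storage growth is often quoted as "several times" instead of an exact factor. Function names are illustrative.

```python
def retention_multiplier(old_days: int, new_days: int) -> float:
    """Raw growth in stored data at steady ingestion, ignoring compression and tiering."""
    return new_days / old_days


def sampling_multiplier(old_percent: int, new_percent: int) -> float:
    """Growth in trace ingestion when the sampling percentage changes."""
    return new_percent / old_percent


print(retention_multiplier(15, 90))   # retention extended from 15 to 90 days
print(sampling_multiplier(5, 100))    # sampling raised from 5% to 100%
```

Before any compression, the retention change is a sixfold increase in stored data and the sampling change is a twentyfold increase in trace ingestion.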

When cost control is reactive, organizations cut visibility abruptly. Sampling is reduced without risk analysis. Retention is shortened across the board. Engineering teams lose historical context during critical investigations.

A mature observability cost management model prevents this cycle. It embeds:

- Sampling aligned to service criticality

- Tiered retention based on business impact

- Cost per service dashboards reviewed quarterly

- Clear budget guardrails tied to growth projections

Cost becomes predictable because it is designed into the system. At enterprise scale, uncontrolled observability spend becomes a financial governance issue.

Executive Visibility and Risk Management

Enterprise observability directly influences business risk.

From a CTO perspective, it affects:

- Mean time to recovery

- SLO attainment

- Incident frequency trends

From a CFO perspective, it affects:

- Telemetry cost predictability

- Budget variance

- Infrastructure efficiency ratios

From a compliance perspective, it affects:

- Retention policies

- Audit trails

- Data residency controls

Observability provides the operational lens through which leadership understands system health. It translates distributed technical signals into measurable business risk.

When executives see reliability trends tied to SLO compliance and cost per service, observability becomes part of strategic planning. But when telemetry lacks governance, reliability reporting becomes inconsistent. Also, cost becomes opaque, and risk becomes difficult to quantify.

Enterprise observability bridges engineering complexity and business accountability. It transforms telemetry from raw exhaust into a structured decision-making system.

Failures in Enterprise Observability We’ve Seen

Many enterprises struggle because they lack rules governing how observability tools are used. The following scenarios are common in large multi-cloud, Kubernetes-based environments.

Scenario 1: No correlation between teams in a multi-team setting

What happened

An enterprise running more than 150 microservices had a production incident that affected customer checkout.

- Logs showed intermittent errors.

- Metrics showed elevated latency.

- Traces were incomplete.

Engineers spent almost two hours trying to match logs with traces across services running in Kubernetes clusters in two regions. Some services emitted trace IDs; others did not. Service names differed between logs and metrics, and teams followed different tagging conventions. Correlation fell apart.

Why it happened

There was no company-wide tagging standard. Every team chose its own logging approach: some used structured JSON with consistent fields, others used free-form logging. OpenTelemetry instrumentation did not consistently enforce trace context propagation.

The organization had tooling. It lacked standardization.

Strategic fix

The company defined a mandatory service identity model:

- Required fields: service.name, service.team, environment, and version

- Mandatory trace context propagation across all services

- Central validation checks in CI pipelines for required telemetry fields

They aligned logs, metrics, and traces on a common schema. Correlation improved immediately, and incident investigation time dropped substantially in later events.
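
A CI validation check of this kind can be very small. The sketch below is not the company's actual check; it assumes each service declares its telemetry attributes as a flat dictionary and uses the four required fields named above. The sample services are hypothetical.

```python
REQUIRED_FIELDS = ("service.name", "service.team", "environment", "version")


def missing_fields(attributes: dict[str, str]) -> list[str]:
    """Return required telemetry fields that are absent or blank."""
    return [f for f in REQUIRED_FIELDS if not attributes.get(f, "").strip()]


# Hypothetical attributes declared by two services.
checkout = {"service.name": "checkout", "service.team": "payments",
            "environment": "prod", "version": "1.4.2"}
legacy = {"service.name": "legacy-cart", "environment": "prod"}

for attrs in (checkout, legacy):
    gaps = missing_fields(attrs)
    status = "ok" if not gaps else f"missing {gaps}"
    print(attrs["service.name"], status)
```

In a pipeline, a non-empty result would typically fail the build so that a service cannot ship without its identity fields.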

Lesson in governance

Standardization must come before scaling. An enterprise observability framework requires new services to meet telemetry standards before they are onboarded. Without that foundation, correlation is weak and investigation is guesswork.

Scenario 2: Tool Sprawl Led to a Three-Hour Investigation

What happened

During peak hours, a spike in latency affected API traffic. Multiple systems sent out alerts. Prometheus metrics showed that some Kubernetes nodes were using all of their CPU. Logs showed that the database connection pool was full. Traces in a SaaS platform showed that there were retries at the service mesh level.

Engineers switched between three platforms to reconstruct the event timeline. Each system used different service naming conventions and different alert thresholds. It took almost three hours to find the full root cause.

Why it happened

The organization used different tools for:

- Metrics

- Logs

- Traces

- Alerts (for some managed services)

Each tool had grown independently, with no central observability strategy linking them. Instrumentation standards differed by platform, metadata alignment was inconsistent, and investigation workflows were fragmented. The problem was not a lack of telemetry but a lack of integration between tools.

Strategic fix

The business adopted a formal, centralized observability strategy:

- Common service naming conventions across all tools

- Standardized metadata fields for logs, metrics, and traces

- Defined investigation workflows that move from alerts to traces to logs to metrics

- OpenTelemetry collectors that normalize telemetry before it reaches the backends

They brought the tools under a single observability operating model. Subsequent incidents followed a uniform investigation path, which reduced resolution time.

Lesson on governance

Without a governing framework, tool sprawl obscures what is happening. For an enterprise telemetry pipeline to work, SaaS platforms and cloud-native monitoring systems must all follow the same standards.

Scenario 3: Telemetry Growth Made Observability Spend Twice as Much in One Year

What happened

An enterprise that grew from 80 to 220 microservices saw its observability costs more than double in a year.

Trace ingestion surged after teams enabled 100% sampling in production for debugging. Metrics storage grew sharply because dynamic request attributes introduced high-cardinality labels.

Log retention was extended from 30 to 90 days to meet audit requirements, with no analysis of the storage impact. Finance declared the observability budget unsustainable.

Why it happened

The enterprise telemetry pipeline had no built-in cost control. Engineering teams made sampling decisions independently. Retention policies were extended without modeling storage growth. Multi-region log ingestion duplicated volume across clusters.

There was no observability cost management model and no visibility into cost per service. Telemetry grew faster than governance.

Strategic fix

The organization introduced:

- Tiered sampling based on service criticality

- Retention tiers that separate hot, searchable data from long-term storage

- Early filtering of low-value debug logs

- Dashboards showing telemetry cost per service and region

- Quarterly cost reviews with platform engineering and finance

They preserved visibility while making costs more predictable.
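
The data behind a cost-per-service dashboard can be a plain aggregation over ingestion records. The sketch below assumes records of the form (service, region, gigabytes) and a single flat price per gigabyte; both are simplifications, since real pricing varies by vendor and signal type.

```python
from collections import defaultdict

# Hypothetical ingestion records: (service, region, gigabytes ingested).
records = [
    ("checkout", "us-east", 120.0),
    ("checkout", "eu-west", 80.0),
    ("search", "us-east", 300.0),
]
PRICE_PER_GB = 0.5  # assumed flat rate for illustration only


def cost_by(records, key_index: int) -> dict[str, float]:
    """Sum ingestion cost grouped by service (index 0) or region (index 1)."""
    totals: dict[str, float] = defaultdict(float)
    for record in records:
        totals[record[key_index]] += record[2] * PRICE_PER_GB
    return dict(totals)


print(cost_by(records, 0))  # cost per service
print(cost_by(records, 1))  # cost per region
```

Grouping the same records by service and by region gives finance and platform teams a shared view for the quarterly review.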

Lesson in governance

Cost discipline must be planned from the start. Telemetry volume growth is unavoidable at the enterprise level. An enterprise observability framework assumes that growth will happen and builds economic guardrails into decisions about instrumentation, processing, and storage.

Core Principles of an Enterprise Observability Strategy

Technology and platforms change. Kubernetes versions advance. SaaS vendors change their pricing. The principles that make observability work at scale stay the same.

Enterprises that ignore these principles compound complexity. Enterprises that embed them build resilient operating models.

Standardization Comes Before Scale

At enterprise scale, standardization comes before growth. Expanding coverage without shared rules creates inconsistency that compounds over time. A new service introduces its own logging format. Another team adds custom metric labels. Trace propagation behaves differently across languages.

Correlation starts to break down silently. A well-organized enterprise telemetry pipeline begins with:

- Standardized instrumentation rules for all teams

- Consistent service names, environment tags, and ownership metadata

- A logging structure that is uniform across applications and platforms

- Trace context propagation enforced through OpenTelemetry or similar standards

Without these building blocks, automation fails and cross-signal linking stops working. Because metadata cannot be trusted, investigation workflows take longer.

Standardization enables correlation, cost modeling, and governance enforcement. Companies that scale first and standardize later face operational friction.

Open and Interoperable Foundations

Open and interoperable foundations reduce long-term risk. Vendor-neutral instrumentation strategies protect your architecture from lock-in. Instrumentation should not bind your services to a single backend.

Separating data collection from storage preserves flexibility:

- OpenTelemetry collectors normalize signals before routing

- Metrics may flow to Prometheus-compatible systems

- Logs may index into Elasticsearch or OpenSearch

- Traces may route to enterprise platforms such as Datadog, New Relic, Splunk, or cloud-native backends

The key principle is portability. In multi-cloud environments, workloads move between providers. Mergers and acquisitions introduce new stacks. Hybrid architectures persist for years.

Multi-cloud observability governance requires an enterprise observability operating model that transcends individual vendors. Open standards provide that stability.

Interoperability ensures your enterprise telemetry pipeline evolves without requiring reinstrumentation every time backend preferences change.

Correlation Across Signals

A common service identity model must unify metrics, events, logs, and traces (MELT). Without that alignment, observability stays fragmented. Effective correlation requires:

- Service identity embedded in every signal

- Context propagation across service boundaries

- Metadata enrichment at the collector or processing layer

- Investigation workflows that move seamlessly across signals

Dashboards become useful when engineers can move from an SLO breach to a trace to the relevant logs without switching mental context. In Kubernetes-based environments, where services scale and dependencies shift, context propagation is critical. Under heavy traffic, missing trace context can prolong incidents considerably.
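
To make context propagation concrete, the sketch below shows the mechanics for the W3C Trace Context `traceparent` header (version 00): keep the incoming trace ID and mint a new span ID for the outgoing call. In practice an OpenTelemetry SDK handles this; the function name is hypothetical, and marking brand-new traces as sampled (flags `01`) is an assumption of the sketch.

```python
import re
import secrets
from typing import Optional

# version "00" - 32 hex trace ID - 16 hex parent span ID - 2 hex flags
TRACEPARENT = re.compile(r"^00-([0-9a-f]{32})-([0-9a-f]{16})-([0-9a-f]{2})$")


def child_traceparent(incoming: Optional[str]) -> str:
    """Build the outgoing header: keep the trace ID, mint a new span ID."""
    match = TRACEPARENT.match(incoming or "")
    if match and int(match.group(1), 16) != 0:
        trace_id, flags = match.group(1), match.group(3)
    else:
        # No valid context arrived, so this service starts a new trace.
        trace_id, flags = secrets.token_hex(16), "01"
    return f"00-{trace_id}-{secrets.token_hex(8)}-{flags}"


header = "00-4bf92f3577b34da6a3ce929d0e0e4736-00f067aa0ba902b7-01"
print(child_traceparent(header))
```

A service that drops or regenerates the trace ID at this step is exactly where cross-service correlation breaks.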

Governance as a Top Priority

Observability without governance degrades. Tagging standards drift, and retention policies expand unchecked. Sampling rates are raised during incidents and never lowered again.

A mature enterprise observability framework establishes:

- Clear ownership of telemetry across domain teams

- Centralized enforcement of instrumentation standards

- Defined policies for data collection and retention

- Access controls that meet compliance standards

- Auditability throughout the enterprise telemetry pipeline

Governance enables growth and prevents fragmentation. Multi-cloud observability governance keeps retention policies consistent across regions, respects security boundaries, and keeps telemetry practices compliant.

Cost Discipline by Design

As architectures grow, telemetry volume grows faster. Without guardrails, observability costs rise faster than infrastructure spending. An effective observability cost management model builds cost controls directly into the enterprise telemetry pipeline:

- Sampling strategies matched to service criticality

- Retention tiers that separate hot, searchable data from archived data

- Early filtering of low-value debug or duplicate logs

- Continuous visibility into cost per service and region

Engineering teams should see telemetry cost trends alongside reliability metrics. Platform teams should review ingestion growth as part of quarterly architecture reviews.

Reactive cost cutting reduces visibility. Engineered cost discipline preserves visibility while keeping costs predictable.

Developer Experience Is Important

Engineers avoid observability when it adds cognitive load. They ignore noisy alerts. When queries are unreliable, they fall back to manual debugging. A strong enterprise observability strategy makes developers’ jobs easier through:

- Signal quality standards that cut alert noise

- Consistent query patterns across logs, metrics, and traces

- Shared dashboards built on a standardized service identity

- Clear onboarding rules for new services

Engineers embrace observability when it makes debugging faster and ownership clearer. It becomes part of the daily routine instead of an afterthought.

When done right, observability:

- Improves productivity

- Reduces context switching

- Speeds up investigations

- Makes collaboration between platform and domain teams easier

These principles are the foundation of your enterprise observability framework. They shape how telemetry is instrumented, operated, funded, and used. They also determine whether complexity compounds or stays manageable.

Enterprise Observability Reference Architecture

An enterprise observability reference architecture defines how telemetry moves through your organization from code to executive reporting. It’s a control model.

At scale, observability fails when telemetry flows grow organically without structure. A reference architecture exists to impose consistency, governance, and cost discipline across every layer of the enterprise telemetry pipeline. The core flow remains consistent across industries and platforms.

Service Layer

Services run in Kubernetes clusters, virtual machines, serverless platforms, or managed cloud services. They take care of data processing, business logic, API traffic, and background jobs. At this level, identity is the most important thing.

Each service must expose a stable service name, environment, version, and ownership metadata. Without that identity, every downstream signal loses meaning.

Instrumentation Layer

Modern enterprises standardize on OpenTelemetry SDKs and auto-instrumentation where possible. This creates consistency across languages and frameworks. Instrumentation responsibilities include:

- Emitting traces with propagated context

- Producing structured logs with consistent fields

- Exporting metrics with controlled cardinality

The instrumentation layer is where standards are enforced. If instrumentation diverges, no downstream system can correct it reliably. This layer determines signal quality.
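
As one illustration of "structured logs with consistent fields", the sketch below uses Python's standard `logging` module to emit every record as a JSON object carrying the same service identity fields. The field values and the `trace_id` attribute name are assumptions for the example, not a prescribed schema.

```python
import json
import logging

# Hypothetical identity fields; a real service would load these from config.
SERVICE_FIELDS = {"service.name": "checkout", "environment": "prod", "version": "1.4.2"}


class JsonFormatter(logging.Formatter):
    """Render every record as one JSON object with the shared service fields."""

    def format(self, record: logging.LogRecord) -> str:
        payload = {
            "level": record.levelname,
            "message": record.getMessage(),
            "trace_id": getattr(record, "trace_id", None),
            **SERVICE_FIELDS,
        }
        return json.dumps(payload, sort_keys=True)


handler = logging.StreamHandler()
handler.setFormatter(JsonFormatter())
logger = logging.getLogger("checkout")
logger.addHandler(handler)
logger.setLevel(logging.INFO)

# The trace ID is passed via `extra` so logs can be joined to traces later.
logger.info("payment authorized", extra={"trace_id": "4bf92f3577b34da6a3ce929d0e0e4736"})
```

Because every line carries the same keys, downstream collectors can filter, enrich, and correlate logs without per-team parsing rules.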

Collector Layer

Collectors form the control plane of the enterprise telemetry pipeline. OpenTelemetry collectors typically run as:

- Sidecars for latency-sensitive workloads

- DaemonSets in Kubernetes clusters

- Regional gateways aggregating traffic from multiple environments

Collectors decouple services from backends. They absorb traffic spikes, normalize metadata, and provide a single place to enforce processing rules.

Processing Layer

The processing layer turns raw telemetry into governed data. Common responsibilities include:

- Sampling matched to service criticality

- Enriching signals with environment and ownership metadata

- Filtering out low-value or duplicate telemetry

- Routing signals to the appropriate backends

This is where cost discipline is applied. Without a processing layer, sampling decisions leak into application code, retention policies become backend-specific, and cost control weakens.

Processing centralizes control and enforces consistency.
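
The processing responsibilities can be pictured as a small chain of functions applied to each event. This is a conceptual sketch, not OpenTelemetry Collector configuration: the event shape, the ownership lookup, and the backend names are all hypothetical, and sampling is left out to keep it short.

```python
from typing import Optional

OWNERS = {"checkout": "payments", "search": "discovery"}  # hypothetical ownership lookup
COLLECTOR_ENVIRONMENT = "prod"  # assumed to be known by the collector deployment


def enrich(event: dict) -> dict:
    """Attach ownership and environment metadata the service may have omitted."""
    return {**event,
            "service.team": OWNERS.get(event["service.name"], "unknown"),
            "environment": event.get("environment", COLLECTOR_ENVIRONMENT)}


def keep(event: dict) -> bool:
    """Drop low-value telemetry early: here, debug-level logs."""
    return not (event["signal"] == "log" and event.get("level") == "DEBUG")


def route(event: dict) -> str:
    """Pick a backend per signal type; backend names are placeholders."""
    return {"metric": "metrics-store", "log": "log-index", "trace": "trace-backend"}[event["signal"]]


def process(event: dict) -> Optional[tuple[str, dict]]:
    """Filter, enrich, then route; returns None for dropped events."""
    if not keep(event):
        return None
    enriched = enrich(event)
    return route(enriched), enriched


print(process({"signal": "log", "level": "ERROR", "service.name": "checkout"}))
print(process({"signal": "log", "level": "DEBUG", "service.name": "checkout"}))
```

Keeping these rules in one layer means a policy change is made once, not in every service.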

Storage Layer

Storage systems persist telemetry for analysis. In enterprise environments, this layer often includes:

- Prometheus-compatible systems for metrics

- Dashboards for visualization

- Tools for log indexing

- Trace backend tools or cloud-native platforms

Multiple storage systems are normal. Fragmentation becomes a problem only when governance is absent. The reference architecture assumes heterogeneity. It controls behavior through standards and processing, not by forcing a single backend.

Governance Layer

Governance overlays the entire enterprise telemetry pipeline. It does not live only in the storage layer. Governance defines:

- Retention policies by signal type and service class

- Sampling policies enforced centrally

- Access control and data visibility boundaries

- Ownership enforcement and accountability

- Observability cost management model tracking cost per service and per region

In multi-cloud observability governance, these controls must apply consistently across regions and providers. Governance turns observability from an engineering convenience into an enterprise capability.

Investigation Layer

At the investigation layer, telemetry becomes operational insight. It supports:

- Cross-signal workflows from alert to trace to logs to metrics

- Consistent query patterns across tools

- Shared dashboards aligned to service identity

- Reduced context switching during incidents

This layer depends entirely on the upstream discipline. When service identity, metadata, and processing are consistent, investigations become fast and repeatable. When they are not, engineers revert to manual correlation.

Executive Reporting Layer

The final layer turns telemetry into business signals. Executive reporting focuses on:

- Reliability trends and SLO compliance

- Mean time to recovery

- Incident frequency and severity

- Telemetry cost growth and predictability

This layer links observability to risk management. CTOs track reliability. CFOs track cost behavior. Compliance teams monitor retention and auditability. Platform teams track standards adoption.

Why the Architecture Matters

This reference architecture enforces a critical principle. Governance applies across the entire enterprise telemetry pipeline, not just storage.

- Instrumentation defines quality.

- Collectors enforce control.

- Processing manages cost.

- Governance ensures sustainability.

- Investigation delivers speed.

- Reporting creates accountability.

Enterprises that adopt this architecture scale observability deliberately. Enterprises that skip it allow complexity to accumulate unchecked.

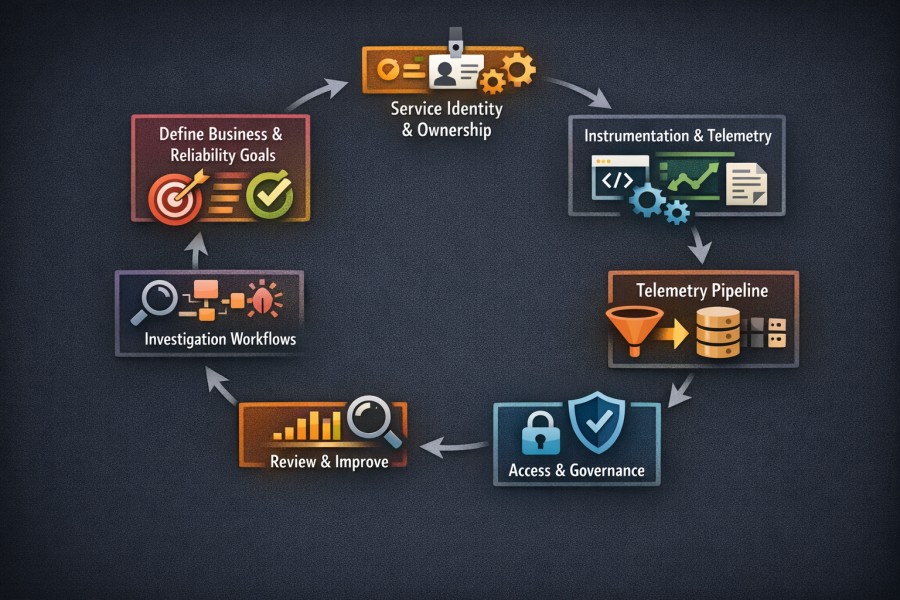

How to Design an Enterprise Observability Strategy Step by Step

Designing an enterprise observability strategy requires discipline and sequence. Many organizations attempt to start with tooling. The correct starting point is clarity.

Each step builds on the previous one. Skipping steps introduces fragility that only becomes visible at scale.

Step 1: Define Business and Reliability Outcomes

Start with outcomes, not telemetry. An enterprise observability framework must align with measurable business goals. That means defining:

- MTTR targets for critical services

- SLO coverage across customer-facing systems

- Budget guardrails for telemetry growth

Without explicit targets, observability becomes reactive. For example, if your goal is to reduce MTTR from 90 minutes to 30 minutes for Tier 1 services, that requirement influences sampling decisions, retention tiers, and investigation workflows.

If leadership sets a telemetry budget growth cap of 15 percent annually, that requirement shapes your observability cost management model from day one. Reliability and cost are business metrics; observability exists to serve them.

Step 2: Set up Service Identity and Ownership

Service identity underpins the entire enterprise telemetry pipeline. Before expanding instrumentation, build a structured service catalog that lists:

- Canonical service names

- Owning teams

- Environment types

- Criticality tiers

All logs, metrics, and traces must carry the same service metadata. Ownership must be explicit. Domain teams are responsible for the instrumentation quality of their services. A centralized platform group enforces consistent standards across the enterprise.

Clear accountability raises telemetry quality; unclear accountability lets standards drift. Service identity enables correlation, cost management, and cost visibility. It is the foundation.
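
A service catalog entry needs only a few typed fields to be useful. The sketch below models the four attributes listed above with a dataclass; the tier names, sample services, and lookup function are hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    TIER_1 = 1  # customer-facing, business-critical
    TIER_2 = 2
    TIER_3 = 3  # internal or batch


@dataclass(frozen=True)
class ServiceEntry:
    name: str                      # canonical service name
    team: str                      # owning team
    environments: tuple[str, ...]  # environment types the service runs in
    tier: Tier                     # criticality level


CATALOG = {entry.name: entry for entry in (
    ServiceEntry("checkout", "payments", ("prod", "staging"), Tier.TIER_1),
    ServiceEntry("report-builder", "analytics", ("prod",), Tier.TIER_3),
)}


def owner_of(service_name: str) -> str:
    """Resolve the accountable team; raises KeyError for uncatalogued services."""
    return CATALOG[service_name].team


print(owner_of("checkout"))
```

Failing loudly on an unknown service is a deliberate choice here: telemetry from a service nobody owns is the drift the catalog is meant to prevent.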

Step 3: Standardize Instrumentation and Telemetry Rules

With accountability in place, make instrumentation consistent. Standardization includes:

- Required tags such as service.name, team, version, and environment

- Structured logging policies that work across languages

- OpenTelemetry standards for trace context propagation

- Metric naming conventions that prevent uncontrolled cardinality

If 200 microservices emit traces in different ways, for instance, correlation stops being reliable. If dynamic labels add user-level cardinality to Prometheus metrics, storage grows rapidly.

Instrumentation standards must be documented and, where possible, enforced through CI validation. Automation depends on consistency.
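A CI check for these rules could look roughly like the sketch below. The required tags come from the list above; the set of forbidden metric labels and the input shapes (a dict of resource attributes and a list of metric label names) are assumptions made for illustration.

```python
# Required tags taken from the standardization list above.
REQUIRED_TAGS = ("service.name", "team", "version", "environment")
# Assumed examples of unbounded labels; adjust to your own conventions.
FORBIDDEN_METRIC_LABELS = {"user_id", "session_id", "request_id"}


def missing_tags(resource_attributes):
    """Return required tags that are absent or empty."""
    return [tag for tag in REQUIRED_TAGS if not resource_attributes.get(tag)]


def unbounded_labels(metric_labels):
    """Return metric labels that would add user-level cardinality."""
    return sorted(set(metric_labels) & FORBIDDEN_METRIC_LABELS)


def check_service(resource_attributes, metric_labels):
    """Collect human-readable violations for one service."""
    violations = [f"missing tag: {t}" for t in missing_tags(resource_attributes)]
    violations += [f"high-cardinality label: {name}"
                   for name in unbounded_labels(metric_labels)]
    return violations


def exit_code(violations):
    """Non-zero when any violation exists, so a CI job can fail the build."""
    return 1 if violations else 0


problems = check_service(
    {"service.name": "checkout", "team": "payments", "version": "1.4.2"},
    ["status_code", "user_id"],
)
for problem in problems:
    print(problem)
print("exit code:", exit_code(problems))
```

In practice the inputs would be extracted from each service's instrumentation config or a test run, which is outside the scope of this sketch.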

Step 4: Design the Telemetry Pipeline

This is where architecture and economics come together. The enterprise telemetry pipeline needs to have a controlled flow:

- Service

- Instrumentation

- Collector

- Processing

- Storage

Key design choices include:

- Using OpenTelemetry collectors to route and normalize signals

- Centralizing processing logic so that sampling and enrichment are applied consistently

- Defining retention tiers for hot and cold data

Sampling must be intentional. If 200 microservices together generate 1 million traces per day, that adds up to roughly 30 million traces per month for each domain. Without risk-based sampling, ingestion and indexing costs climb quickly.

Critical services may need higher trace sampling rates, while internal services can often tolerate lower ones. Sampling policies should reflect business impact, not engineering preference.

Avoid duplicating ingestion across regions where possible. Regional collectors should filter and route logs intelligently rather than copying the same logs across clusters.

Retention policies should reflect service criticality and compliance requirements. Not all telemetry needs to be stored for the same length of time.
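To make the sampling arithmetic concrete, here is a minimal sketch of tier-based, deterministic sampling keyed on the trace ID. The per-tier rates are illustrative assumptions, and the hashing scheme is a generic one written for this example rather than the algorithm of any particular collector or SDK.

```python
import hashlib

# Illustrative rates only; real values should follow business impact.
SAMPLING_RATES = {"tier1": 1.0, "tier2": 0.25, "tier3": 0.05}


def keep_trace(trace_id, tier):
    """Decide deterministically whether to keep a trace.

    The same trace_id always gets the same decision, so every service
    that sees the trace agrees on whether it is sampled.
    """
    rate = SAMPLING_RATES[tier]
    digest = hashlib.sha256(trace_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big")  # uniform in [0, 2**64)
    return bucket < int(rate * 2**64)


def monthly_traces(traces_per_day, tier, days=30):
    """Estimate stored traces per month after sampling."""
    return round(traces_per_day * days * SAMPLING_RATES[tier])


for tier in SAMPLING_RATES:
    print(tier, monthly_traces(1_000_000, tier))
```

With 1 million traces per day, the unsampled tier stores about 30 million traces per month, while the lowest tier in this example stores about 1.5 million.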

Step 5: Set Up Access and Governance Controls

Governance lets teams scale without drift. Multi-cloud observability governance must be consistent across all providers. Policies should apply regardless of whether telemetry traverses Prometheus or enterprise SaaS platforms. An effective observability operating model includes:

- Central rules for tagging and sampling enforcement

- Defined retention policies reviewed with compliance stakeholders

- Role-based access controls that follow the principle of least privilege

- Audit capability for sensitive log data
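The access-control and audit items can be sketched as a default-deny permission lookup that records every attempt to reach sensitive data. The role names, resource names, and audit record fields below are hypothetical and exist only for this example.

```python
from datetime import datetime, timezone

# Hypothetical roles and resources; each role gets only what it needs.
ROLE_PERMISSIONS = {
    "service-owner": {"logs", "metrics", "traces"},
    "finance-analyst": {"cost-reports"},
    "compliance-auditor": {"sensitive-logs", "audit-trail"},
}
# Resources whose access attempts must always be recorded.
AUDITED_RESOURCES = {"sensitive-logs"}


def authorize(role, resource, user, audit_trail):
    """Default-deny check; unknown roles and resources are refused."""
    allowed = resource in ROLE_PERMISSIONS.get(role, set())
    if resource in AUDITED_RESOURCES:
        audit_trail.append({
            "user": user,
            "role": role,
            "resource": resource,
            "allowed": allowed,
            "at": datetime.now(timezone.utc).isoformat(),
        })
    return allowed


trail = []
print(authorize("service-owner", "sensitive-logs", "alice", trail))
print(authorize("compliance-auditor", "sensitive-logs", "bob", trail))
print(len(trail), "audited attempts")
```

Denied attempts are logged as well as granted ones, since refused access to sensitive logs is often what a compliance review wants to see.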

Step 6: Build Correlated Investigation Workflows

Telemetry is only useful if it speeds up investigation. Build workflows that let engineers:

- Move from alert to trace to related logs without copying identifiers by hand

- Correlate anomalous metrics with trace spans

- Quickly identify downstream dependencies

Cross-signal visibility reduces cognitive load. Alert noise must also be controlled. SLO-based alerting cuts redundant signals. A clear service identity makes query patterns simpler. Shared dashboards tied to ownership reduce context switching.
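The alert-to-trace-to-logs pivot depends on a shared key. The sketch below assumes every alert, span, and log record is a plain dict carrying a `trace_id` and a `service` field; those field names and the sample data are assumptions for illustration.

```python
from collections import defaultdict


def build_index(records, key):
    """Group records by the given key so lookups avoid a full scan."""
    index = defaultdict(list)
    for record in records:
        if key in record:
            index[record[key]].append(record)
    return index


def investigate(alert, spans, logs):
    """Follow an alert's trace_id to its spans, logs, and other services."""
    trace_id = alert.get("trace_id")
    if trace_id is None:
        return {"spans": [], "logs": [], "other_services": []}
    trace_spans = build_index(spans, "trace_id").get(trace_id, [])
    trace_logs = build_index(logs, "trace_id").get(trace_id, [])
    # Services that took part in the trace besides the alerting one.
    others = sorted({s["service"] for s in trace_spans} - {alert["service"]})
    return {"spans": trace_spans, "logs": trace_logs, "other_services": others}


alert = {"service": "checkout", "trace_id": "t1", "slo": "latency"}
spans = [
    {"trace_id": "t1", "service": "checkout", "duration_ms": 900},
    {"trace_id": "t1", "service": "payments", "duration_ms": 850},
    {"trace_id": "t2", "service": "search", "duration_ms": 20},
]
logs = [
    {"trace_id": "t1", "service": "payments", "message": "upstream timeout"},
    {"trace_id": "t2", "service": "search", "message": "ok"},
]
result = investigate(alert, spans, logs)
print(result["other_services"], [entry["message"] for entry in result["logs"]])
```

The lookup only works when trace context is propagated consistently, which is why the instrumentation standards in Step 3 come first.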

Step 7: Measure, Improve, and Review Quarterly

An enterprise observability strategy evolves over time. Track both reliability and cost:

- MTTR by service tier

- Trends in incident frequency and severity

- SLO compliance rates

- Telemetry cost per service

- Telemetry growth rate by region

Add cost anomaly detection to your observability cost management model. Investigate sudden rises in trace volume or metric series count before invoices arrive.
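A very simple form of volume anomaly detection compares each day against a trailing baseline. The sketch below uses a rolling mean and standard deviation with an assumed 7-day window and threshold of 3; it is one basic technique among many, and the daily counts are invented.

```python
from statistics import mean, pstdev


def volume_anomalies(daily_counts, window=7, threshold=3.0):
    """Return indexes of days whose volume spikes above the trailing baseline."""
    anomalies = []
    for i in range(window, len(daily_counts)):
        baseline = daily_counts[i - window:i]
        mu = mean(baseline)
        sigma = pstdev(baseline)
        value = daily_counts[i]
        if sigma == 0:
            # Flat baseline: any increase counts as a spike.
            if value > mu:
                anomalies.append(i)
        elif (value - mu) / sigma > threshold:
            anomalies.append(i)
    return anomalies


# Invented daily trace volumes (in thousands) with one spike on day 7.
counts = [100, 102, 98, 101, 99, 100, 103, 180, 101]
print(volume_anomalies(counts))
```

The same function can be applied to metric series counts or log bytes per service, as long as the input is a list of daily totals.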

Quarterly strategy reviews should include platform engineering, finance leadership, and technology executives. CTOs assess reliability improvements. CFOs assess cost predictability. Platform teams verify adherence to standards.

When you follow these seven steps in order, architecture, governance, and cost management align naturally. This structured method turns observability from a collection of tools into a durable enterprise capability.

Measuring Success and Maturity

An enterprise observability strategy needs to show measurable progress. Without clear maturity markers and KPIs, observability remains anecdotal. Teams feel busier. Dashboards multiply. Costs rise. Leadership lacks clarity on whether the investment improves reliability.

Maturity tells you where you are. Metrics tell you whether you are improving.

Enterprise Observability Maturity Stages

Maturity in enterprise observability is not about the number of tools deployed. It reflects how coherently telemetry supports reliability, governance, and cost control.

Reactive

At the reactive stage, observability exists but lacks structure. Typical characteristics include:

- Isolated tools for logs, metrics, and traces

- Manual log scraping during incidents

- No standardized service identity

- Slow and inconsistent incident response

Telemetry exists. Correlation does not. Engineers spend time reconstructing events rather than analyzing them. Incident recovery depends on individual experience rather than system design.

Instrumented

At the instrumented stage, signals are broadly collected. Organizations often deploy OpenTelemetry SDKs, Prometheus for metrics, Elasticsearch or OpenSearch for logs, and one or more trace backends. However:

- Cross-signal linking remains limited

- Trace context propagation is inconsistent

- Metadata conventions vary by team

- Cost controls are not formally defined

Data volume increases. Insight improves slightly. Complexity grows alongside it.

Correlated

At the correlated stage, logs, metrics, and traces connect around a shared service identity. Key characteristics include:

- Standardized trace context propagation across services

- Unified metadata fields across telemetry

- Defined investigation workflows from alert to root cause

- Noticeably faster root cause analysis

Cross-signal navigation becomes reliable. Engineers pivot less. Incident timelines shorten. Correlation transforms the enterprise telemetry pipeline from fragmented streams into a cohesive investigative system.

Optimized

The optimized stage embeds governance and economics into the operating model. Characteristics include:

- Governance policies that apply to the whole telemetry pipeline

- Sampling aligned to service criticality

- Tiered retention applied consistently across environments

- Cost per service tracked and reviewed

Executive dashboards are operational and reliable. Observability is a structured enterprise capability. Telemetry growth trends are monitored. Cost anomaly detection is in place. Reliability metrics align with business goals.

At this point, observability supports long-term growth and financial forecasting. Maturity advances when governance, correlation, and cost discipline converge.

Strategic KPIs That Matter

Strategic KPIs link telemetry to performance and cost.

Operational metrics alone are not enough. Enterprises need to track both financial behavior and reliability outcomes.

Key indicators include:

- MTTR by service tier: MTTR reflects investigation efficiency. A correlated system shortens recovery time.

- Change failure rate across production deployments: Change failure rate reflects system resilience. Observability accelerates the detection and mitigation of regressions.

- SLO compliance percentages for customer-facing services: SLO compliance measures reliability from the customer's point of view. It connects telemetry to user experience.

- Cost per service or per product domain: Cost per service reveals economic behavior. Teams see how instrumentation decisions affect spending.

- Telemetry growth rate by region and signal type: The telemetry growth rate tells you if the enterprise telemetry pipeline is still under control. Sudden spikes are often a sign of high-cardinality metrics, changes in trace sampling, or duplication across regions.

A mature observability cost management model monitors these indicators continuously.
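Several of these KPIs reduce to short calculations. The sketch below uses common definitions (mean recovery time per tier, failed deployments over total deployments, good events over total events, period-over-period growth); the record shapes and sample numbers are assumptions for the example.

```python
from collections import defaultdict
from statistics import mean


def mttr_by_tier(incidents):
    """Average minutes to recover, grouped by service tier."""
    by_tier = defaultdict(list)
    for incident in incidents:
        by_tier[incident["tier"]].append(incident["minutes_to_recover"])
    return {tier: mean(values) for tier, values in by_tier.items()}


def change_failure_rate(total_deployments, failed_deployments):
    """Share of production deployments that caused a failure."""
    return failed_deployments / total_deployments if total_deployments else 0.0


def slo_compliance(good_events, total_events):
    """Fraction of events that met the SLO."""
    return good_events / total_events if total_events else 1.0


def growth_rate(previous, current):
    """Relative change between two periods, e.g. monthly ingested GB."""
    return (current - previous) / previous


incidents = [
    {"tier": "tier1", "minutes_to_recover": 40},
    {"tier": "tier1", "minutes_to_recover": 20},
    {"tier": "tier2", "minutes_to_recover": 90},
]
print(mttr_by_tier(incidents))
print(change_failure_rate(200, 10))
print(slo_compliance(999_000, 1_000_000))
print(growth_rate(100, 125))
```

Computing these per service or per region only requires grouping the inputs by that dimension before calling the same functions.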

Executive-Level Reporting

Executive reporting turns telemetry data into business risk. CTOs, CFOs, and compliance leaders each have distinct concerns:

- CTOs: Reliability posture, including incident frequency trends, MTTR for critical services, and SLO attainment rates.

- CFOs: Cost predictability, including telemetry spend growth, cost-per-service variance, and efficiency gains linked to optimization efforts.

- Compliance leaders: Retention adherence, the ability to audit sensitive logs, and consistent access control across regions.

Operational dashboards for leaders should show:

- Reliability changes over time

- Cost trends relative to service growth

- Governance adherence metrics

These dashboards need to be consistent and defensible. Executives should be able to trust the data and understand it without technical translation.

When executive reporting is in line with the enterprise observability framework, telemetry becomes part of how the business is managed.

Enterprise Observability vs Ad-Hoc Observability

At scale, the difference between enterprise observability and ad-hoc observability becomes visible quickly.

Ad-hoc approaches evolve organically. A team adds Prometheus for metrics. Another team deploys Elasticsearch for logs. Traces flow into a SaaS backend. Alerts are configured locally. Over time, complexity accumulates.

An enterprise observability framework imposes structure across that complexity. It defines standards, ownership, governance, and cost controls before scale magnifies weaknesses.

| Category | Enterprise Observability | Ad-Hoc Observability |
| --- | --- | --- |
| Standardization | Unified instrumentation standards enforced across teams | Team-specific conventions with inconsistent tagging |
| Service Identity | Consistent service naming and ownership metadata across logs, metrics, and traces | No canonical identity model, correlation relies on manual effort |
| Ownership Model | Centralized governance with federated team accountability | Ownership unclear or distributed without enforcement |
| Telemetry Pipeline | Defined enterprise telemetry pipeline with collectors and centralized processing | Direct-to-backend ingestion without centralized control |
| Correlation | Logs, metrics, and traces aligned around a shared service context | Signals stored in separate systems with limited linking |
| Cost Management | Observability cost management model tracking cost per service and telemetry growth | Costs reviewed reactively after unexpected increases |
| Retention Policies | Tiered retention aligned to service criticality and compliance | Uniform retention or ad-hoc extensions without cost modeling |
| Multi-Cloud Governance | Multi-cloud observability governance applied consistently across regions | Region-specific policies with inconsistent controls |
| Investigation Workflow | Structured alert-to-root-cause workflow across signals | Manual pivoting between tools during incidents |
| Executive Reporting | Reliability and cost dashboards aligned with business metrics | Operational dashboards without executive-level context |

Common Failure Patterns in Enterprise Observability Programs

Enterprise observability programs don’t collapse overnight. They erode through small decisions that seem harmless at first. A new tool is added. A retention period is extended. A team bypasses tagging standards for speed. Sampling is increased during an incident and never reduced. At scale, these decisions compound.

The patterns below appear consistently across multi-cloud, Kubernetes-based enterprises operating large distributed systems. Recognizing them early prevents long-term instability.

Tool Sprawl Without Standards

Enterprises often run multiple tools for logs, metrics, traces, dashboards, and alerting. The problem is less the number of tools than the lack of standards. Without a single framework for enterprise observability:

- Service names differ across platforms

- Metadata conventions drift over time

- Alerts fire in one system without context from another

- Investigations require manual cross-referencing

Fragmentation lengthens investigations and erodes trust in telemetry. A centralized observability strategy ensures that every service in the telemetry pipeline shares the same identity, metadata, and investigation workflows.

No Clear Ownership of Telemetry

When ownership is unclear, telemetry quality degrades. In large enterprises:

- Platform teams assume that application teams are responsible for instrumentation

- Application teams assume that platform teams enforce standards

- No one tracks sampling rules

- No one monitors cardinality growth

Over time, tags become inconsistent and trace propagation breaks. Logging verbosity rises and cost visibility fades. A lack of governance leads to economic instability.

A good observability operating model defines:

- Which team owns service-level instrumentation

- Which group enforces enterprise standards

- Who reviews telemetry growth and cost per service

- How compliance requirements are verified

Ignoring Cost Until It Escalates

Observability cost does not fluctuate randomly. When unmanaged, it grows in predictable ways. Common drivers include:

- Enabling full trace sampling in production

- Adding high-cardinality metric labels

- Replicating logs to other regions without filtering

- Extending retention without modeling the storage impact

When cost reviews happen only after bills spike, engineering teams react defensively. Visibility drops sharply. Retention is shortened for all services. Sampling is cut indiscriminately.

Reactive cuts damage reliability. Cost discipline must be engineered into the enterprise telemetry pipeline through:

- Defined sampling policies aligned to service risk

- Tiered retention strategies

- Cost anomaly detection

- Quarterly reviews with engineering and finance stakeholders

Over-Retention of Low-Value Data

Over-retention is a false economy. Retention extensions often happen under pressure:

- Compliance concerns

- Fear of losing forensic information

- Requests from executives for historical analysis

Extending retention from 30 days to 90 days may seem harmless. At scale, it greatly increases storage and indexing requirements. Not all telemetry is equally valuable.

An enterprise observability framework defines:

- Which signals need long-term retention

- Which logs can move to cheaper storage

- Which metrics can be aggregated after a set period

- Which traces need to stay searchable

Retention should be based on service criticality and regulatory requirements, not default settings.
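The effect of a retention change is easy to estimate: at steady state, stored volume is roughly daily ingest multiplied by retention days. The sketch below compares uniform and tiered retention; the 500 GB daily ingest and the per-GB-day prices are invented placeholders, not vendor figures.

```python
def stored_gb(daily_ingest_gb, retention_days):
    """Steady-state stored volume for a constant daily ingest."""
    return daily_ingest_gb * retention_days


def storage_cost(daily_ingest_gb, hot_days, cold_days, hot_price, cold_price):
    """Steady-state cost when data spends hot_days hot, then cold_days cold.

    Prices are per GB per day of storage and are hypothetical inputs.
    """
    return daily_ingest_gb * (hot_days * hot_price + cold_days * cold_price)


DAILY_GB = 500      # assumed ingest volume
HOT_PRICE = 0.10    # assumed price for searchable storage
COLD_PRICE = 0.02   # assumed price for archive storage

print(stored_gb(DAILY_GB, 30), stored_gb(DAILY_GB, 90))
all_hot = storage_cost(DAILY_GB, 90, 0, HOT_PRICE, COLD_PRICE)
tiered = storage_cost(DAILY_GB, 30, 60, HOT_PRICE, COLD_PRICE)
print(round(all_hot, 2), round(tiered, 2))
```

Moving from 30 to 90 days triples the stored volume. Under these assumed prices, keeping only the first 30 days in hot storage costs less than half of keeping all 90 days hot.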

Alert Noise Overwhelming Engineers

Alert fatigue erodes trust in observability. In fragmented environments:

- Threshold-based alerts fire frequently

- Duplicate alerts fire in different tools

- SLO violations have no clear owner

- Engineers mute noisy channels

As noise rises, signal reliability falls. A mature enterprise observability operating model enforces:

- SLO-driven alerts aligned with business impact

- A clear mapping of alert ownership

- Deduplication across platforms

- Regular alert reviews to remove low-value triggers
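Cross-platform deduplication can be sketched as fingerprinting alerts on fields that are independent of the tool that raised them. The field names, the 300-second suppression window, and the sample alerts below are assumptions for illustration.

```python
def fingerprint(alert):
    """Identity of an alert regardless of which tool emitted it."""
    return (alert["service"], alert["slo"], alert["severity"])


def deduplicate(alerts, window_seconds=300):
    """Keep the first alert per fingerprint and suppress repeats.

    A repeat is suppressed if it arrives within window_seconds of the
    previous alert with the same fingerprint. Suppressed alerts also
    refresh the window, so a continuous storm stays collapsed.
    """
    last_seen = {}
    kept = []
    for alert in sorted(alerts, key=lambda a: a["timestamp"]):
        key = fingerprint(alert)
        previous = last_seen.get(key)
        if previous is None or alert["timestamp"] - previous > window_seconds:
            kept.append(alert)
        last_seen[key] = alert["timestamp"]
    return kept


alerts = [
    {"source": "tool-a", "service": "checkout", "slo": "latency",
     "severity": "page", "timestamp": 0},
    {"source": "tool-b", "service": "checkout", "slo": "latency",
     "severity": "page", "timestamp": 60},
    {"source": "tool-a", "service": "search", "slo": "errors",
     "severity": "page", "timestamp": 30},
    {"source": "tool-a", "service": "checkout", "slo": "latency",
     "severity": "page", "timestamp": 1000},
]
for alert in deduplicate(alerts):
    print(alert["timestamp"], alert["service"], alert["source"])
```

Fingerprinting on service and SLO only works when service identity is consistent across tools, which ties this back to the service catalog.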

Where CubeAPM Fits in an Enterprise Observability Strategy

An enterprise observability strategy needs a platform that supports standards, governance, and cost discipline. The platform must match your operating model. Otherwise, complexity returns.

CubeAPM is a good fit for companies that standardize on OpenTelemetry and treat observability as part of their infrastructure.

Architecture with OpenTelemetry First

Mature enterprises use OpenTelemetry to decouple code-level instrumentation from backend decisions. This preserves long-term flexibility and avoids re-instrumenting hundreds of services when tools change.

CubeAPM natively ingests OpenTelemetry signals, making it easy to set up a clean enterprise telemetry pipeline in both Kubernetes and multi-cloud environments.

Unified Investigation Across Signals

In large environments, Prometheus and Grafana are often used for metrics, Elasticsearch or OpenSearch for logs, and Datadog, New Relic, Splunk, or CloudWatch for APM. Investigations often span more than one system.

CubeAPM brings together metrics, events, logs, and traces based on shared service identity and trace context. Engineers can go from alert to trace to logs without switching tools. That unification shortens time to resolution.

Designing for Cost Management

Telemetry volume grows with system complexity. Hundreds of services can generate tens of millions of traces every month. Extended retention and high-cardinality metrics raise storage costs.

An enterprise observability framework needs predictable ingestion costs and visibility into cost per service. CubeAPM has an ingestion-based pricing model that supports:

- Forecasting telemetry growth

- Aligning sampling with service criticality

- Tracking cost per workload

Predictability supports disciplined scaling and executive planning.

Governance Built into the Pipeline

Retention policies, sampling controls, and access management must be in place for the whole telemetry flow.

CubeAPM lets you set up structured pipelines with retention tiers, role-based access control, and centralized oversight. This fits with the multi-cloud observability strategy and governance-first architecture.

Strategic Alignment

CubeAPM suits enterprises that prioritize:

- OpenTelemetry-compatible instrumentation

- Unified cross-signal investigation

- Structured observability cost management

- Governance built into the telemetry pipeline

CubeAPM helps enterprises run observability in a disciplined and scalable way.

Check out how a D2C brand cut monitoring costs by 70% with CubeAPM.

Conclusion

Enterprise observability functions as an operating model that shapes how telemetry is collected, governed, and used across the organization. At scale, discipline matters. Clear ownership, enforced standards, and structured retention policies create long-term resilience.

Standardization enables sustainable growth. When instrumentation, service identity, and investigation workflows follow defined conventions, expansion strengthens visibility instead of fragmenting it. Cost predictability protects margins by aligning telemetry growth with financial accountability.

Strategy determines sustainability. Organizations that embed governance, economic guardrails, and architectural clarity into their observability framework build systems that remain reliable, scalable, and financially stable as complexity increases.

Disclaimer: The information in this article reflects the latest details available at the time of publication and may change as technologies and products evolve.

Frequently Asked Questions (FAQs)

1. What is the difference between enterprise observability and standard observability?

Enterprise observability focuses on governance, scale, cost control, and cross-team alignment across large, distributed systems. Standard observability often centers on tooling and signal collection for individual applications or teams.

2. How is enterprise observability different from monitoring?

Monitoring answers predefined questions using alerts and thresholds. Enterprise observability enables investigation of unknown failures across logs, metrics, and traces, with governance and cost controls built in for scale.

3. When should an organization formalize an enterprise observability strategy?

Organizations should formalize a strategy when they operate multi-team, multi-cloud, or microservices environments, experience rising observability costs, or struggle with fragmented tooling and unclear ownership.

4. What role does OpenTelemetry play in enterprise observability?

OpenTelemetry provides a standardized, vendor-neutral foundation for collecting logs, metrics, and traces. It reduces instrumentation inconsistency and prevents vendor lock-in at enterprise scale.

5. How do enterprises control observability costs without losing visibility?

Cost control typically involves intentional sampling policies, tiered retention strategies, telemetry filtering, and aligning data collection with service criticality rather than ingesting everything indiscriminately.