Modern software runs across dozens of microservices, containers, serverless functions, and cloud regions. When something breaks at 2 AM, you need answers immediately. That requires telemetry: the structured data that tells you what your system is doing and why it misbehaved. OpenTelemetry (OTel) is the open-source project that standardizes how that telemetry is generated, collected, and delivered.

As of 2026, OpenTelemetry is the second most active project in the Cloud Native Computing Foundation (CNCF) ecosystem, behind only Kubernetes. More than 1,000 integrations, 12 language SDKs, and contributions from over 185 organizations have made it the de facto standard for observability instrumentation in cloud-native environments.

This guide covers everything you need to understand OpenTelemetry: what it is, how its architecture works, what its core components do, how it compares to its predecessors, and how to choose an observability backend that complements it.

What is OpenTelemetry?

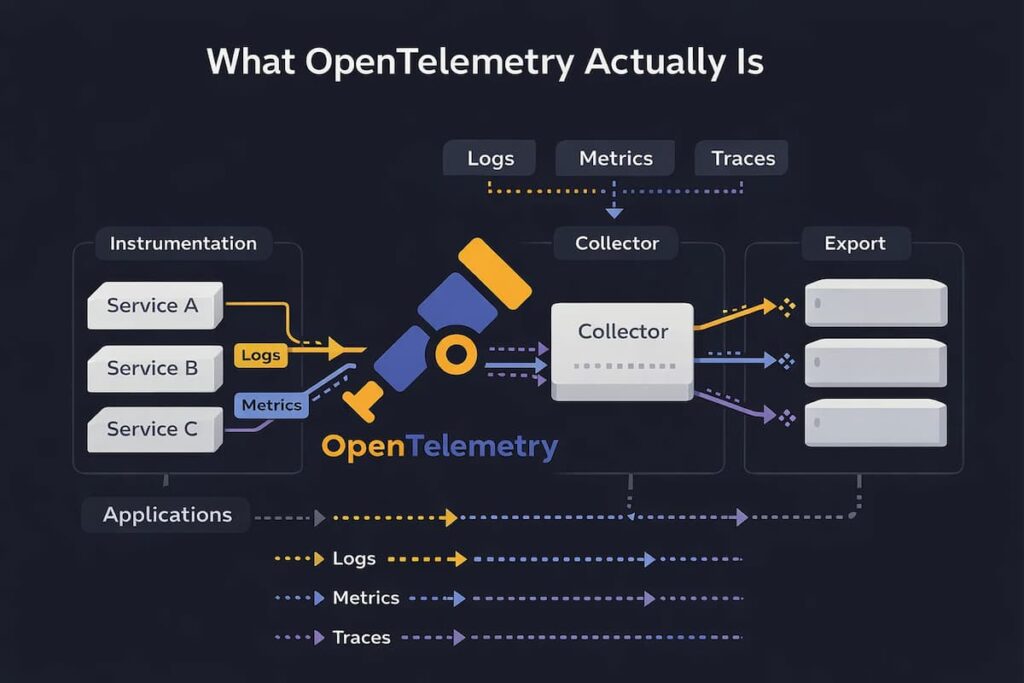

OpenTelemetry is an open-source observability framework and toolkit that provides a standardized way to generate, collect, and export telemetry data such as traces, metrics, and logs. It is vendor-neutral and tool-agnostic, meaning it works with a broad variety of observability backends, including open-source tools like Jaeger and Prometheus as well as commercial platforms like CubeAPM, Datadog, Grafana, and New Relic.

The project is hosted by the Cloud Native Computing Foundation (CNCF), the same foundation that stewards Kubernetes and Prometheus. OTel is built on two guiding principles, as stated in its official documentation:

- You own the data that you generate. There is no vendor lock-in.

- You only have to learn a single set of APIs and conventions.

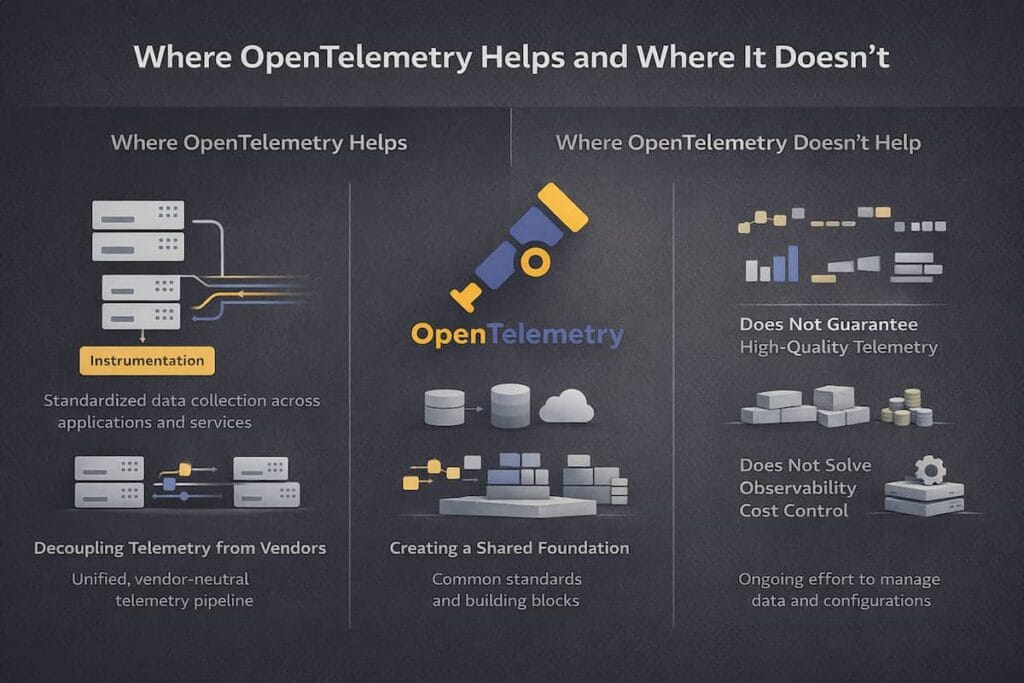

Practically speaking, OpenTelemetry lets you instrument your application code once and then route the resulting telemetry to any backend of your choice. You can switch from one observability vendor to another without re-instrumenting your codebase. This is one of the primary reasons organizations are adopting it at scale.

A Brief History: From OpenTracing and OpenCensus to OTel

Before OpenTelemetry, the observability landscape was fragmented. Two open-source projects competed for adoption:

- OpenTracing (2016): A vendor-neutral API standard for distributed tracing. It defined how to instrument code but did not provide an implementation. It was limited to traces only.

- OpenCensus (originated at Google): A collection of language-specific libraries that provided both metrics collection and distributed tracing. It included implementations but was tightly coupled to specific exporters.

Neither project could fully solve the problem on its own. OpenTracing lacked implementations; OpenCensus lacked the breadth of signal coverage and community alignment. In 2019, the two projects merged to form OpenTelemetry, combining OpenTracing’s vendor-neutral API approach with OpenCensus’s practical library support.

OpenTelemetry entered beta in 2020 and has since grown to become the industry standard for telemetry instrumentation. It unified the community under a single specification, eliminated the duplication of effort, and provided a path for vendors to integrate once rather than many times.

| Attribute | OpenTracing | OpenCensus | OpenTelemetry |
|---|---|---|---|
| Year introduced | 2016 | Originated at Google, open-sourced 2018 | 2019 (GA 2021) |
| Signals covered | Traces only | Traces and Metrics | Traces, Metrics, Logs, Profiles (in progress) |
| Approach | API specification only | Language-specific libraries | APIs, SDKs, Collector, Protocol |
| Vendor neutrality | Yes | Partial | Yes, fully |
| Current status | Archived | Archived | Active, CNCF incubating |

The Three Pillars of Telemetry: Logs, Metrics, and Traces

Observability relies on three primary types of telemetry data. OpenTelemetry standardizes the generation and transport of all three, plus an emerging fourth signal: continuous profiling.

Logs

Logs are timestamped textual records of discrete events that occurred within a system, and they are the oldest form of telemetry. A login attempt, an exception stack trace, and a database query result are all captured as log entries. Logs are best suited for debugging specific incidents and verifying that code executed as expected.

A typical log entry captures a timestamp, a severity level (INFO, WARN, ERROR), a message, and contextual attributes such as a user ID or request ID.
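As a rough illustration of those fields, the sketch below builds one structured log record with the Python standard library. The function name `make_log_record` and the attribute keys are hypothetical, and this is not the OpenTelemetry Logs API, only the general shape of a record.

```python
import json
from datetime import datetime, timezone

def make_log_record(severity, message, **attributes):
    """Build a structured log record as a plain dict (illustrative shape only)."""
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "severity": severity,
        "message": message,
        "attributes": attributes,  # contextual key/value pairs
    }

record = make_log_record(
    "ERROR", "payment declined", user_id="u-123", request_id="r-456"
)
print(json.dumps(record, indent=2))
```

Keeping the context in a separate `attributes` map, rather than formatting it into the message string, is what lets a backend filter on fields like the request ID.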

Metrics

Rather than capturing every event, metrics summarize system behavior as numeric measurements sampled or aggregated over time. CPU utilization, memory usage, request rate, error rate, and latency percentiles are the kinds of signals metrics track.

Keeping the data compact means it stays queryable and cheap to store, which is why metrics work so well for live dashboards, threshold-based alerts, and identifying trends over weeks or months.
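To show what "summarizing" means here, the following sketch reduces a list of raw request events to three numbers. The event data and the `summarize` helper are made up for illustration, and the nearest-rank percentile is just one simple choice; real metric SDKs typically aggregate into histograms instead.

```python
import math

def summarize(requests):
    """Reduce raw (latency_ms, is_error) events to a few numeric metrics."""
    latencies = sorted(latency for latency, _ in requests)
    errors = sum(1 for _, is_error in requests if is_error)
    # Nearest-rank p95: smallest value with at least 95% of samples at or below it.
    rank = math.ceil(0.95 * len(latencies))
    return {
        "request_count": len(requests),
        "error_rate": errors / len(requests),
        "latency_p95_ms": latencies[rank - 1],
    }

# Hypothetical sample: ten requests, one slow failure.
events = [(12, False), (15, False), (11, False), (240, True), (14, False),
          (13, False), (16, False), (12, False), (18, False), (17, False)]
print(summarize(events))
```

Ten events collapse into three values, which is the compactness that makes metrics cheap to store and fast to query.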

Traces

A distributed trace tracks the complete journey of a single request as it travels through multiple services. Each service adds a span to the trace, recording the time taken and contextual attributes for that operation. Spans are linked by a shared trace ID and a parent-child relationship, forming a tree structure that reveals exactly where latency or errors occurred.

Traces are most valuable in microservice architectures where a single user-facing request may touch ten or more backend services. Without distributed tracing, pinpointing the source of a performance degradation in such an environment is extremely difficult.
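The tree structure can be sketched with a few lines of Python. The `Span` dataclass, the service names, and the durations below are all hypothetical, and the self-time calculation assumes child spans run one after another rather than in parallel.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Span:
    trace_id: str
    span_id: str
    parent_id: Optional[str]  # None marks the root span
    name: str
    duration_ms: float

def self_times(spans):
    """Each span's duration minus the time spent in its direct children."""
    result = {s.span_id: s.duration_ms for s in spans}
    for s in spans:
        if s.parent_id in result:
            result[s.parent_id] -= s.duration_ms
    return result

def slowest_operation(spans):
    """Name of the span that contributed the most time on its own."""
    times = self_times(spans)
    return max(spans, key=lambda s: times[s.span_id]).name

trace = [
    Span("t1", "a", None, "GET /checkout", 480),
    Span("t1", "b", "a", "cart-service", 60),
    Span("t1", "c", "a", "payment-service", 400),
    Span("t1", "d", "c", "SELECT orders", 350),
]
print(slowest_operation(trace))
```

Subtracting child time from each parent is what separates "this service is slow" from "this service is waiting on something slow", which is the question a trace view answers visually.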

Profiles (Emerging Signal)

Continuous profiling is being treated as a fourth signal in OpenTelemetry, sitting alongside the traditional three. It collects CPU usage, memory allocation, and code-level performance data continuously in production, giving teams visibility into what the code is actually doing at runtime.

Profiling is a recent addition to OpenTelemetry’s signals and has not yet reached stable status across all language SDKs, so how much you can rely on it depends on your stack.

OpenTelemetry Architecture: How the Components Fit Together

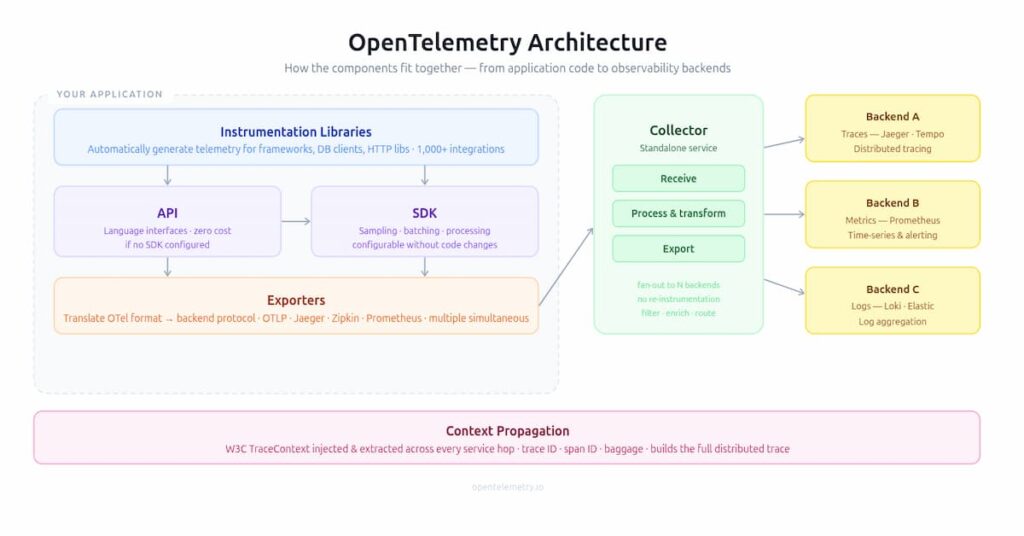

OpenTelemetry is built around a modular, layered architecture. Each component has a well-defined responsibility, and components can be adopted independently or used together as a full pipeline.

APIs

The OTel API defines the interfaces that application code calls to produce telemetry. These interfaces are language-specific and follow the OpenTelemetry specification. A key design principle is that the API is decoupled from the SDK: if no SDK is configured, API calls become no-ops with negligible runtime cost. This means you can safely instrument a library using only the API without forcing downstream users to adopt the full SDK.
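The API/SDK split can be pictured with a small stand-in. Everything in this sketch (`NoOpTracer`, `RecordingTracer`, `set_tracer`, `get_tracer`) is a hypothetical simplification and not the real OpenTelemetry API; it only shows why library code keeps working when no SDK is installed.

```python
from contextlib import contextmanager

class NoOpTracer:
    """Default tracer: accepts calls and records nothing."""
    @contextmanager
    def start_span(self, name):
        yield None

class RecordingTracer:
    """Stand-in for an SDK tracer that actually keeps finished span names."""
    def __init__(self):
        self.finished = []

    @contextmanager
    def start_span(self, name):
        try:
            yield name
        finally:
            self.finished.append(name)

_tracer = NoOpTracer()  # what library code gets when no SDK is configured

def set_tracer(tracer):
    """Called once by the application to plug in an SDK implementation."""
    global _tracer
    _tracer = tracer

def get_tracer():
    return _tracer

def library_function():
    # Library code depends only on the API surface, never on the SDK.
    with get_tracer().start_span("library.work"):
        return 42

print(library_function())   # runs with the no-op tracer, nothing recorded
sdk = RecordingTracer()
set_tracer(sdk)             # the application opts in to real telemetry
library_function()
print(sdk.finished)
```

The library code is identical in both runs; only the application decides whether spans go anywhere.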

SDKs

The OTel SDK implements the API and handles the practical work of data gathering, processing, batching, sampling, and exporting. SDKs are configurable without requiring changes to application code. An engineering team can change the sampling rate, add resource attributes, switch exporters, or route data to multiple backends entirely through SDK configuration.
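Sampling is one of the SDK responsibilities that is easy to sketch. The function below is a simplified, hypothetical take on ratio-based sampling keyed on the trace ID; it is similar in spirit to the trace-ID ratio samplers that SDKs provide, but it is not copied from any of them.

```python
def should_sample(trace_id_hex: str, ratio: float) -> bool:
    """Deterministically keep roughly `ratio` of traces based on the trace ID."""
    if not 0.0 <= ratio <= 1.0:
        raise ValueError("ratio must be between 0 and 1")
    # Use the low 64 bits of the 128-bit trace ID as a uniform number.
    low_64_bits = int(trace_id_hex, 16) & 0xFFFFFFFFFFFFFFFF
    return low_64_bits < int(ratio * 2**64)

trace_id = "4bf92f3577b34da6a3ce929d0e0e4736"
print(should_sample(trace_id, 0.5), should_sample(trace_id, 0.75))
```

Because the decision depends only on the trace ID and the ratio, every service that sees the same trace reaches the same answer, so a trace is kept or dropped as a whole. Changing the ratio is a configuration change, not a code change.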

Instrumentation Libraries

OpenTelemetry provides instrumentation libraries for popular frameworks and libraries in each supported language. These libraries automatically generate telemetry for web frameworks, database clients, HTTP libraries, messaging systems, and cloud SDKs without requiring developers to add manual instrumentation. The project currently supports more than 1,000 integrations across languages and frameworks.

Exporters

Exporters translate telemetry data from the OTel format into the format required by a specific backend. SDKs ship with exporters for common protocols, including OTLP, Zipkin, and Prometheus. Dedicated Jaeger exporters have been deprecated in the SDKs because Jaeger now accepts OTLP directly. Multiple exporters can be configured simultaneously, allowing the same telemetry to be sent to more than one backend without re-instrumentation.

The OpenTelemetry Collector

The Collector is a standalone service that sits between your instrumented applications and your observability backends. It receives telemetry, processes and transforms it, and exports it to one or more destinations. The Collector is discussed in detail in the next section.

Context Propagation

Context propagation links spans across services. When service A calls service B, it passes trace details like the trace ID and span ID through HTTP headers. Service B reads that context and creates a child span under the same trace. This continues across each service call, helping build one complete distributed trace. OpenTelemetry uses W3C TraceContext as the default format.
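The W3C Trace Context format carries this information in a `traceparent` header of the form `version-traceid-parentid-flags`. The sketch below injects and extracts that header using only the standard library. The helper names (`inject`, `extract`, `start_child_span`) are hypothetical, and the sketch handles version `00` only; in a real service the SDK's propagator does this for you.

```python
import re
import secrets

# version 00, 32 hex chars of trace ID, 16 hex chars of span ID, 2 hex chars of flags
TRACEPARENT_RE = re.compile(r"^00-([0-9a-f]{32})-([0-9a-f]{16})-([0-9a-f]{2})$")

def inject(headers, trace_id, span_id, sampled=True):
    """Service A: write the current span's identity into outgoing HTTP headers."""
    flags = "01" if sampled else "00"
    headers["traceparent"] = f"00-{trace_id}-{span_id}-{flags}"
    return headers

def extract(headers):
    """Service B: read trace context; returns None if absent or malformed."""
    match = TRACEPARENT_RE.match(headers.get("traceparent", ""))
    if match is None:
        return None
    trace_id, parent_span_id, flags = match.groups()
    # All-zero IDs are invalid in the W3C format.
    if set(trace_id) == {"0"} or set(parent_span_id) == {"0"}:
        return None
    return {
        "trace_id": trace_id,
        "parent_span_id": parent_span_id,
        "sampled": bool(int(flags, 16) & 0x01),
    }

def start_child_span(context):
    """Child span: same trace ID, fresh span ID, parent set to the caller's span."""
    return {
        "trace_id": context["trace_id"],
        "span_id": secrets.token_hex(8),
        "parent_span_id": context["parent_span_id"],
    }

outgoing = inject({}, "4bf92f3577b34da6a3ce929d0e0e4736", "00f067aa0ba902b7")
child = start_child_span(extract(outgoing))
print(outgoing["traceparent"])
print(child)
```

Returning `None` for a malformed header lets the receiving service start a new trace instead of failing the request.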

The OpenTelemetry Collector: The Central Routing Hub

The OpenTelemetry Collector is a vendor-agnostic service for receiving, processing, and exporting telemetry data. It eliminates the need to run multiple proprietary agents from different vendors, replacing them with a single configurable pipeline.

The Collector is built from three types of components that are chained together in a pipeline:

- Receivers: Components that accept incoming telemetry data. The Collector supports more than 200 receivers, including OTLP, Jaeger, Zipkin, Prometheus scraping, Fluent Bit, and cloud-native sources like AWS CloudWatch and Google Cloud Monitoring.

- Processors: Components that transform the data in-flight. Common processors handle batching (grouping spans to reduce network calls), attribute modification (adding, removing, or renaming attributes), sampling (reducing data volume by dropping low-value spans), and filtering.

- Exporters: Components that send the processed data to a backend. As with receivers, the Collector ships with exporters for every major observability platform.
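The receiver, processor, and exporter chain can be modeled in a few lines. This is a conceptual sketch in plain Python, not Collector code or configuration (the real Collector is configured declaratively); the `Pipeline` class, the processor functions, and the `team` attribute are all hypothetical.

```python
def drop_health_checks(spans):
    """Filtering processor: discard low-value spans."""
    return [s for s in spans if s["name"] != "GET /healthz"]

def tag_team(spans):
    """Attribute processor: add a (hypothetical) ownership attribute to each span."""
    return [
        {**s, "attributes": {**s.get("attributes", {}), "team": "payments"}}
        for s in spans
    ]

class Pipeline:
    def __init__(self, processors, exporters):
        self.processors = processors
        self.exporters = exporters

    def receive(self, spans):
        """Receiver entry point: run processors in order, then fan out to exporters."""
        for process in self.processors:
            spans = process(spans)
        for export in self.exporters:
            export(spans)

# Two in-memory lists stand in for two different backends.
backend_a, backend_b = [], []
pipeline = Pipeline(
    processors=[drop_health_checks, tag_team],
    exporters=[backend_a.extend, backend_b.extend],
)
pipeline.receive([
    {"name": "GET /healthz"},
    {"name": "POST /checkout", "attributes": {"http.request.method": "POST"}},
])
print(backend_a)
```

Processor order matters: filtering before enrichment avoids doing work on spans that are about to be dropped.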

The Collector can be deployed in two topologies. In agent mode, a Collector instance runs on each host or as a sidecar in each Kubernetes pod, collecting data close to the source. In gateway mode, a centralized Collector cluster receives data from all agents or applications and routes it to backends. Many production deployments use a combination of both.

The Collector also handles interoperability with whatever tooling your organization already has in place. If you are running Prometheus or Fluent Bit, those data streams can be redirected through the Collector, which translates them into OTel format and forwards them over OTLP or any other supported protocol. That means teams can shift to OpenTelemetry incrementally, without needing to rip out existing infrastructure all at once.

The OpenTelemetry Protocol (OTLP)

OTLP (OpenTelemetry Protocol) is the specification that defines how telemetry data is encoded and transported between sources, intermediaries, and backends. Its data model is defined with Protocol Buffers, and it supports two transports: gRPC, and HTTP with either binary Protobuf or JSON payloads.

OTLP has become the de facto standard for telemetry data transfer in the observability industry. Virtually every major observability platform and open-source backend now supports OTLP as a native ingestion protocol. This means instrumentation that exports via OTLP can be directed to any modern observability platform without code changes.

Before OTLP, teams had to use backend-specific exporters and protocols. Switching vendors required updating instrumentation code throughout the codebase. With OTLP as the universal wire format, the instrumentation layer is permanently decoupled from the storage and analysis layer.
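To make the wire format more concrete, the sketch below assembles a minimal trace payload in OTLP's JSON encoding. The nesting (`resourceSpans`, `scopeSpans`, `spans`) follows the OTLP data model, while the function name, service name, scope name, and timestamps are made up for illustration. The sketch only builds the payload and does not send it; with OTLP over HTTP, a body like this is typically POSTed to the `/v1/traces` path of a receiver, which by default listens on port 4318.

```python
import json

def otlp_trace_payload(service_name, span_name, trace_id, span_id, start_ns, end_ns):
    """Build a one-span OTLP/JSON export request as a plain dict."""
    return {
        "resourceSpans": [{
            # The resource describes the entity that produced the telemetry.
            "resource": {"attributes": [
                {"key": "service.name", "value": {"stringValue": service_name}},
            ]},
            "scopeSpans": [{
                "scope": {"name": "manual-example"},  # hypothetical scope name
                "spans": [{
                    "traceId": trace_id,      # 32 hex characters
                    "spanId": span_id,        # 16 hex characters
                    "name": span_name,
                    "kind": 2,                # SPAN_KIND_SERVER
                    # 64-bit integers are written as strings in the JSON encoding.
                    "startTimeUnixNano": str(start_ns),
                    "endTimeUnixNano": str(end_ns),
                }],
            }],
        }]
    }

payload = otlp_trace_payload(
    "checkout-service", "POST /checkout",
    "4bf92f3577b34da6a3ce929d0e0e4736", "00f067aa0ba902b7",
    1_700_000_000_000_000_000, 1_700_000_000_250_000_000,
)
print(json.dumps(payload, indent=2))
```

Because the same structure is accepted by any OTLP-capable receiver, pointing it at a different backend means changing the endpoint, not the payload.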

Instrumentation: Code-Based vs. Zero-Code

OpenTelemetry supports two instrumentation approaches, and both can be used simultaneously within the same application.

Code-Based Instrumentation

Code-based instrumentation, sometimes called manual instrumentation, means adding OTel API calls directly into your source code so a developer can explicitly create spans, record metrics, and emit log records at exactly the points where telemetry matters most.

That level of control produces richer telemetry than any automated approach, since the developer can attach custom attributes and context that a library would have no way of knowing about.

Zero-Code Instrumentation (Auto-Instrumentation)

Zero-code instrumentation, also called auto-instrumentation, uses agents or bytecode injection to generate telemetry automatically without modifying source code. The instrumentation is applied at the library level, capturing telemetry for every HTTP request, database call, and messaging operation made by popular frameworks and libraries that your application uses.

This approach requires no access to the application source code and is ideal for getting started quickly, for third-party dependencies, and for cases where modifying code is not feasible. The trade-off is less granular control over what is captured and less context about application-specific operations.

In practice, production deployments typically combine both approaches: auto-instrumentation for framework-level coverage and manual instrumentation for business-critical paths that need custom context.
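The difference between the two approaches can be sketched without any OTel packages. In the example below, `instrument` wraps a function of a stand-in client library at runtime, in the spirit of zero-code instrumentation, while `checkout` adds a hand-written record with business context, in the spirit of code-based instrumentation. The `fake_db` module, the `recorded` list, and all attribute names are hypothetical.

```python
import functools
import time
import types

recorded = []  # stand-in for an exporter

def instrument(module, func_name):
    """Zero-code style: wrap module.func_name in place so callers need no changes."""
    original = getattr(module, func_name)

    @functools.wraps(original)
    def wrapper(*args, **kwargs):
        start = time.perf_counter()
        try:
            return original(*args, **kwargs)
        finally:
            recorded.append({
                "name": f"{module.__name__}.{func_name}",
                "duration_s": time.perf_counter() - start,
                "attributes": {},  # the wrapper knows nothing about the business
            })

    setattr(module, func_name, wrapper)

# A hypothetical third-party client library we cannot edit.
fake_db = types.ModuleType("fake_db")
fake_db.query = lambda sql: [("row",)]

instrument(fake_db, "query")  # applied once at startup

def checkout(order_id, cart_total):
    rows = fake_db.query("SELECT 1")  # captured automatically by the wrapper
    # Code-based style: attach context only the application knows about.
    recorded.append({
        "name": "checkout",
        "attributes": {"order.id": order_id, "cart.total": cart_total},
    })
    return rows

checkout("o-1", 99.5)
print([r["name"] for r in recorded])
```

The wrapped call yields timing with no code changes, but only the manual record carries the order ID, which is why the two approaches are usually combined.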

Language SDKs and Multi-Language Support

OpenTelemetry provides native SDKs for more than 12 programming languages. Each SDK implements the OTel specification and includes APIs, instrumentation libraries, exporters, and auto-instrumentation capabilities.

| Language | Tracing Status | Metrics Status | Logs Status |
|---|---|---|---|
| Java | Stable | Stable | Stable |
| Python | Stable | Stable | Stable |
| Go | Stable | Stable | In development |
| JavaScript / Node.js | Stable | Stable | Stable |
| .NET / C# | Stable | Stable | Stable |
| Ruby | Stable | Stable | In development |
| PHP | Stable | Stable | In development |
| Rust | Beta | Beta | In development |
| C++ | Stable | Stable | In development |
| Swift | Stable | In development | In development |
| Erlang / Elixir | Stable | Stable | In development |
| Kotlin | Uses Java SDK | Uses Java SDK | Uses Java SDK |

Stable status means the API and SDK have reached a point where backward-incompatible changes will not be made without a deprecation period. Teams can safely use stable components in production. Components still in beta or development are functional but may have API changes before reaching stable status.

The source for SDK status information is the OpenTelemetry language status page, which is updated by the project maintainers as each language SDK matures.

Semantic Conventions: Why Consistent Naming Matters

Telemetry is only useful for cross-service comparisons when everyone is using the same attribute names. OpenTelemetry addresses this through semantic conventions, a set of standardized names and values for common telemetry attributes that applies across services, languages, and teams.

In practice, this means the HTTP span attribute for a request method is http.request.method, the database name is db.namespace (db.name in older versions of the conventions), and the host is host.name. Dashboards, alerts, and queries built in one team’s environment will work against another team’s services without any translation, regardless of what language either side is using, as long as both sides follow the same version of the conventions.
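A small sketch shows why shared names matter. The mapping below renames ad-hoc attribute keys to their conventional equivalents; the ad-hoc keys on the left are invented examples of what two teams might have chosen on their own, and `normalize` is a hypothetical helper, not part of OpenTelemetry.

```python
# Ad-hoc key (hypothetical) -> semantic convention key
LEGACY_TO_CONVENTION = {
    "method": "http.request.method",
    "http_method": "http.request.method",
    "hostname": "host.name",
    "host": "host.name",
}

def normalize(attributes):
    """Rename known ad-hoc keys; leave everything else untouched."""
    return {LEGACY_TO_CONVENTION.get(key, key): value
            for key, value in attributes.items()}

team_a_span = {"method": "GET", "hostname": "web-1"}
team_b_span = {"http_method": "GET", "host": "web-1"}
print(normalize(team_a_span) == normalize(team_b_span))
```

Once both spans use the same keys, a single query such as "group by http.request.method" works across both teams. If two ad-hoc keys in one span map to the same conventional name, the later one wins in this sketch, so a real migration would need to handle that case explicitly.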

Semantic conventions are maintained in the OpenTelemetry Semantic Conventions specification and are continuously extended to cover new domains, including database systems, messaging queues, cloud providers, Kubernetes, and generative AI operations.

What OpenTelemetry Is Not

Understanding the boundaries of OpenTelemetry is as important as understanding what it provides. There are common misconceptions worth clarifying:

- Not an observability backend: OTel does not store telemetry data. Querying traces, building dashboards, and configuring alerts are all handled by whatever backend you send data to, not by OpenTelemetry itself.

- Not a monitoring tool: OpenTelemetry does not actively check system health or send alerts. It collects and transports data to platforms that do.

- Not a visualization layer: There is no OpenTelemetry UI. Data visualization is provided by backends such as Grafana, Jaeger, Zipkin, Datadog, or New Relic.

OpenTelemetry vs. Proprietary Agents

Before OpenTelemetry, the standard approach to instrumentation was to install a proprietary agent from your chosen observability vendor. These agents were optimized for a single vendor’s backend and often provided excellent out-of-the-box functionality. However, they came with significant trade-offs:

| Factor | Proprietary Agents | OpenTelemetry |
|---|---|---|
| Vendor lock-in | High. Switching vendors requires re-instrumentation. | None. Switch backends by changing Collector configuration. |
| Agent count | One per vendor. Multiple vendors means multiple agents competing for resources. | One Collector handles all backends. |
| Standardization | Proprietary data models vary by vendor. | Single specification across all languages and backends. |
| Community | Vendor-controlled roadmap. | CNCF-governed, 185+ contributing organizations. |
| Cost | Typically included in vendor pricing. | Free and open-source. |
| Feature richness | Often higher out of the box for the specific vendor. | Rapidly closing the gap with growing ecosystem support. |

Choosing an Observability Backend for OTel

OpenTelemetry produces the data. Your choice of backend determines how you store, query, and act on it. There are two broad categories: open-source and commercial.

Open-Source Backends

Open-source backends give you full control over data storage and no per-seat or per-volume costs after infrastructure is accounted for. The most widely used include the following:

- Jaeger (jaegertracing.io): A distributed tracing backend built for microservice environments. Ideal for trace storage and visualization. Supports OTLP natively.

- Prometheus (prometheus.io): The standard for metrics storage in Kubernetes environments. OTel SDKs can export metrics in Prometheus format, and the Collector can scrape Prometheus endpoints.

- Grafana Tempo: A high-scale, cost-efficient distributed tracing backend from Grafana Labs. Designed to pair with Grafana dashboards and Loki for logs.

- Zipkin (zipkin.io): One of the original distributed tracing systems. Lightweight and straightforward. Supported natively by OTel SDKs.

- OpenSearch / Elasticsearch: Used for log storage and full-text search. The Collector’s Elasticsearch exporter sends log data directly.

Commercial Backends

Commercial backends provide managed infrastructure, advanced analytics, machine learning-driven alerting, and support contracts. All major commercial platforms support OTLP natively:

- CubeAPM: A self-hosted observability backend with native OTel support, for teams that want OpenTelemetry-based monitoring while keeping telemetry data inside their own cloud or infrastructure.

- Datadog: A large SaaS observability platform for metrics, logs, traces, infrastructure monitoring, RUM, and security use cases. It supports OpenTelemetry data for metrics, traces, and logs.

- Splunk Observability Cloud: An enterprise observability platform focused on metrics, traces, logs, and incident response. It is often used by larger teams that already work with Splunk products.

- New Relic: A SaaS observability platform for APM, infrastructure, logs, browser monitoring, synthetics, and dashboards. It provides OpenTelemetry integration for sending telemetry into New Relic.

- Dynatrace: An enterprise observability platform with APM, infrastructure monitoring, logs, automation, and AI-assisted root cause analysis. It supports OpenTelemetry ingestion through the OTLP API, the OTel Collector, and the Dynatrace OTel Collector.

OpenTelemetry in Practice: Key Use Cases

When a user reports that checkout is slow, a distributed trace shows exactly which service, which database query, or which external API call added the latency. Without distributed tracing, isolating this kind of issue in a service mesh of 20 or 30 services can take hours. With OTel and a tracing backend, it typically takes minutes.

The OTel Collector acts as the single ingestion point for all telemetry from all services. Operations teams configure the pipeline once and can change backends, add processors, or redirect data flows without touching application code.

OpenTelemetry can capture requests between services and group them by the service that made them, giving platform teams a clear picture of how shared systems are being used. When one service is quietly consuming more than its share, that shows up in the data. It also makes cost attribution more accurate across teams.

Sensitive data has no business traveling through a telemetry pipeline unfiltered. The Collector’s processor pipeline gives teams a place to scrub or anonymize it before export, with attribute processors that can remove or hash fields like user IDs, email addresses, and IP addresses.

For organizations subject to data residency or privacy requirements, this is the mechanism that keeps telemetry pipelines compliant without requiring changes to application code.
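The scrubbing step can be sketched as a function that drops some attributes and hashes others before export. This is a plain-Python illustration of the idea, not the Collector's attribute processor or its configuration; the `scrub` helper, its parameters, and the attribute keys are hypothetical.

```python
import hashlib

def scrub(attributes, drop=(), hash_keys=(), salt=""):
    """Return a copy of attributes with sensitive fields removed or hashed."""
    cleaned = {}
    for key, value in attributes.items():
        if key in drop:
            continue  # remove the field entirely
        if key in hash_keys:
            # Replace the value with a salted SHA-256 digest.
            data = (salt + str(value)).encode("utf-8")
            cleaned[key] = hashlib.sha256(data).hexdigest()
        else:
            cleaned[key] = value
    return cleaned

span_attributes = {
    "http.request.method": "POST",
    "user.id": "u-123",
    "user.email": "ada@example.com",
}
safe = scrub(span_attributes, drop={"user.email"}, hash_keys={"user.id"}, salt="s3cret")
print(safe)
```

Hashing keeps values correlatable (the same user always maps to the same digest) without exposing them, but it is pseudonymization rather than full anonymization: low-entropy values can be guessed by brute force, so a secret salt and a review against your own compliance requirements are still needed.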

The OpenTelemetry project is extending semantic conventions to cover generative AI operations. Attributes for LLM model names, token counts, prompt and completion content, and inference latency are being standardized, making OTel the instrumentation layer for AI-powered applications as well as traditional services.

Challenges and Limitations

OpenTelemetry is a powerful and well-designed standard, but teams adopting it run into several challenges:

- SDK maturity varies by language: Tracing and metrics are stable across most major languages, but logging and profiling are still catching up in several. Shipping a signal in production that has not reached stable status is a risk teams should take consciously.

- Collector operations: Running a Collector fleet at scale is not simple. It requires deliberate capacity planning and, in most deployments, Kubernetes expertise. Unlike most of the other OTel components, the Collector is a long-running service that needs ongoing attention: versioning, monitoring, and maintenance all come with the territory.

- Semantic convention churn: The semantic conventions specification is still evolving. Attribute names have changed between minor versions in some areas, which can break dashboards and alert rules if SDK versions are updated without updating backend queries.

- Learning curve: The combination of APIs, SDKs, Collector, OTLP, and semantic conventions is a large conceptual surface area. Teams new to observability may find the number of moving parts overwhelming without good documentation and internal champions.

- Not a complete observability stack: Storage and analysis still require a separate backend, and running that backend adds infrastructure costs and operational work that sit entirely outside what OpenTelemetry itself provides.

The Road Ahead: What is Coming in OTel

The OpenTelemetry project is under active development. Several major areas of work are progressing as of 2026:

- Continuous Profiling: The profiling signal was added to OTel’s scope. Language SDK implementations are in progress and will bring code-level performance visibility into the same pipeline as traces, metrics, and logs.

- Client-Side RUM (Real User Monitoring): Specifications for browser and mobile instrumentation are being developed, which will allow frontend telemetry to share the same context as backend traces.

- AI and GenAI Semantic Conventions: Standardized attributes for large language model operations, vector databases, and AI agent workflows are being formalized, making OTel the default instrumentation layer for AI systems.

- OpenTelemetry Arrow: A high-efficiency columnar encoding for OTLP that significantly reduces the bandwidth and storage cost of large telemetry streams. It is designed for high-volume production pipelines.

- Entity Model: Work is underway to introduce a formal entity model that connects telemetry signals to the infrastructure resources that produced them, making correlation between signals and deployment topology more automatic.

Summary

OpenTelemetry is the open-source standard for cloud-native observability instrumentation. It provides APIs, SDKs, a Collector, a wire protocol (OTLP), and semantic conventions that together let any team instrument any application in any language and send the resulting telemetry to any backend without vendor lock-in.

The project emerged from the merger of OpenTracing and OpenCensus in 2019, reached general availability for tracing in 2021 with stable metrics following across major languages, and is now the second most active CNCF project with contributions from over 185 organizations. It covers logs, metrics, and traces today, with continuous profiling and real user monitoring following.

For teams building on CubeAPM or any APM platform, OpenTelemetry is the recommended instrumentation approach. It future-proofs your observability investment: you instrument once, and your telemetry works with any backend, today and as your stack evolves.

Disclaimer: The information in this article reflects details available at the time of publication. Source links are provided throughout for all claims. Verify all pricing with each vendor before making decisions.

FAQs

1. Is OpenTelemetry free?

Yes. OpenTelemetry is fully open-source, licensed under the Apache 2.0 license. The SDKs, Collector, and all associated tooling are free to use. The cost of an OpenTelemetry deployment comes from the observability backend you choose (if it is a commercial product) and the infrastructure required to run the Collector.

2. Does OpenTelemetry replace Prometheus?

No. OpenTelemetry and Prometheus serve complementary roles. OpenTelemetry standardizes how metrics are generated and transported from application code. Prometheus is a backend for storing and querying those metrics. OTel SDKs can expose metrics in the Prometheus exposition format for Prometheus to scrape, and the Collector can scrape Prometheus endpoints, making the two tools highly compatible.

3. Is OpenTelemetry production-ready?

Yes, for the tracing and metrics signals. The OpenTelemetry tracing and metrics APIs and SDKs are stable in Java, Python, Go, JavaScript, .NET, and other major languages. Thousands of organizations are running OTel in production. The logging signal is stable in several languages and approaching stability in others. Always check the status page for your specific language before adopting a new signal.

4. What is OTLP?

OTLP stands for OpenTelemetry Protocol. It is the specification that defines how telemetry data is encoded (using Protocol Buffers) and transported (over gRPC or HTTP). It is the standard wire format for sending telemetry from OTel-instrumented applications to backends and has been adopted by virtually every major observability platform.

5. How does OpenTelemetry handle vendor lock-in?

OpenTelemetry breaks vendor lock-in at two levels. First, your instrumentation code uses only the OTel API, which has no dependency on any vendor. Second, the Collector or SDK exporter can be reconfigured to send data to a different backend by changing a configuration file, not application code. Switching vendors becomes a configuration change rather than an engineering project.

6. Can OpenTelemetry work with existing Prometheus or Jaeger deployments?

Yes. The OTel Collector includes receivers for Prometheus scraping and Jaeger ingestion. If your existing agents produce Prometheus metrics or Jaeger traces, you can point them at the Collector, which will translate the data to OTel format and route it to any configured exporter. This enables incremental migration without disrupting existing tooling.