Executive Summary

Running a global travel marketplace requires strict reliability, fast incident response, and predictable infrastructure costs. One of our clients, redBus, is the world’s largest online bus aggregator. It is a part of MakeMyTrip Limited (NASDAQ: MMYT), one of the leading online travel companies in Asia.

redBus operates a high-volume microservices architecture where uptime directly affects revenue. As traffic grew across markets, the observability stack began to create operational friction. During peak booking periods, dashboards slowed down, alert noise increased, and rapid telemetry growth caused unpredictable observability costs. Engineering teams spent increasing time managing ingestion pipelines, storage, and observability infrastructure instead of focusing on platform reliability.

The engineering team defined several goals:

- Reduce MTTR using unified signal correlation across metrics and traces

- Improve dashboard performance during peak booking traffic

- Move to predictable observability spending aligned with telemetry ingestion

- Remove operational overhead from managing observability infrastructure

- Ensure responsive support during production incidents

After adopting CubeAPM, the platform saw measurable changes:

| Metric | Improvement |
| --- | --- |
| MTTR | 50% faster incident resolution |
| Dashboard Performance | 4× faster query response during peak traffic |
| Observability Cost | Predictable ingestion-based model |
| Migration Downtime | Zero downtime during transition |

This case study shows how redBus unified its observability, improved cost control, and restored operational confidence in a production environment where performance and reliability are business-critical.

Company Context: Observability at Marketplace Scale

redBus operates one of the largest digital transportation marketplaces globally. As part of MakeMyTrip Limited (NASDAQ: MMYT), the platform processes more than 100 million ticket bookings annually across multiple international markets. Millions of users search routes, check seat availability, compare operators, and complete transactions every day. Operating at this scale involves:

- High concurrency across distributed microservices that handle payments, search, pricing, and more

- Background synchronization with operator systems

- Write-heavy transaction flows for booking confirmation

- Checkout paths that are sensitive to latency

- High traffic during holidays or special occasions

At this level of engagement, telemetry volume scales directly with user activity. Every service call emits metrics, traces, and operational signals. During peak booking periods, ingestion rates can rise tenfold within minutes. Observability therefore needs to scale predictably with traffic, without degrading query performance or adding operational complexity.

The size of distributed marketplace systems also creates dependency chains. A single failing upstream service can cause search delays, seat lock failures, or payment timeouts. Without visibility across all of these layers at once, finding the root cause takes longer and is harder.

Why Observability Matters for High-Scale Marketplaces

For a platform like redBus, observability directly affects revenue and user experience. When millions of searches and booking transactions occur daily across distributed microservices, engineers must quickly detect, diagnose, and resolve production issues.

- Revenue and uptime: Every booking depends on the system being available. When search, seat allocation, or payment services fail, sales suffer immediately, and the impact grows with traffic.

- Traffic spikes and exposure risk: Seasonal peaks and regional travel spikes put heavy stress on the systems. As concurrency and infrastructure load rise, minor defects turn into production problems.

- Dashboard latency: Slow dashboards make it harder to see what is happening in real time, exactly when it matters. If engineers cannot quickly identify failing services, investigation and remediation take longer.

- Incident response and revenue: The sooner booking problems are detected, the faster they can be fixed. A shorter mean time to resolution keeps transactions flowing and preserves customer trust.

- Stability and resilience: The business depends on a platform that is stable and recovers quickly from failures. Platform stability affects brand trust, investor confidence, and revenue.

Observability Challenges at Marketplace Scale

The observability layer started to show signs of structural strain as transaction volume grew across markets. Telemetry grew with traffic: every search, seat lock, booking attempt, and payment event generated operational data.

Under sustained peak concurrency, tooling that used to work well became slower, more fragmented, and more expensive in unpredictable ways. During traffic spikes, visibility got worse. Engineers’ focus shifted from improving the system to managing the tools.

Slow Dashboards During Traffic Spikes

Dashboard performance was inconsistent during peak booking periods. High ingestion volume increased query times, and telemetry retrieval slowed down just when real-time visibility mattered most. Investigation cycles took longer because engineers had to wait for dashboards to load or queries to return. The effect was real and immediate:

- Slower isolation of latency spikes

- Late discovery of failing downstream services

- Less trust in real-time telemetry during incidents

When observability tools slow down under load, decisions take longer. In high-traffic marketplaces, those delays add up quickly.

Alert Fatigue and Incident Blind Spots

As telemetry volume grew, so did the number of alerts, and signal quality declined. Engineers received many alerts without a clear indication of which mattered most. Noise started to bury actionable events. At the same time:

- Metrics and traces lived in fragmented workflows

- Engineers used different tools to reconstruct context

- Cross-service dependencies were not immediately visible

Finding the root cause required stitching data together manually. Investigation paths grew longer, and blind spots appeared across the distributed services.

Unpredictable Ingestion Costs

Traffic growth led directly to growth in telemetry volume. Seasonal peaks drove ingestion rates up over short periods. Billing followed traffic spikes, so monthly observability spending fluctuated. This created a constant tension between competing needs:

- Retain enough telemetry to debug reliably

- Avoid uncontrolled storage and ingestion costs

- Forecast budget needs for peak periods

Without clear cost alignment with traffic growth, financial planning stayed reactive.

Operational Overhead of Managing Observability Infrastructure

The observability platform required active maintenance. Alongside core production systems, engineering teams ran ingestion infrastructure, tuned storage lifecycles, and monitored cluster health. As traffic grew, operations became harder. The burden showed up in several ways:

- Ongoing management of ingestion clusters

- Index lifecycle adjustments

- Scaling storage during peak periods

- Slow vendor response to production problems

The observability stack added operational drag of its own.

What the Engineering Team Had to Fix

At marketplace scale, observability has to perform like production infrastructure. Anything less fails exactly when it matters most. The way forward required structural changes. The team prioritized:

- Unified signal correlation to lower MTTR

- Consistent dashboard performance under heavy traffic

- Offloading ownership of observability infrastructure

- Predictable cost governance aligned with traffic growth

- Incident support that responds in minutes

Technical Objectives

The engineering team set clear technical goals to address the performance, cost-control, and operational-ownership problems caused by these structural limitations. The goals were realistic, quantifiable, and achievable at marketplace scale. They framed the shift from reactive observability management to performance-aligned operational maturity.

- Lower MTTR: Metrics and traces needed to work together in a single investigative workflow so that engineers could go straight from symptom to root cause without manually stitching together different tools.

- Reduce cluster management work: Observability infrastructure should not consume time that engineers need for their core work. Ingestion pipelines, storage management, and lifecycle tuning had to be handled outside the team so that the core platform team could focus on reliability.

- Improve dashboard performance: Dashboards had to stay fast at all times. Slower queries were not acceptable, even at peak booking volume.

- Keep the cost structure clear: Observability spending had to be transparent and scale sensibly with traffic growth, with no hard-to-predict billing swings caused by seasonal ingestion spikes.

- Get responsive support: Response time for incident-time help had to be measured in minutes, not ticket cycles. During outages, production urgency required direct, accountable help.

Before Migration Architecture

Before the migration, the observability environment reflected incremental growth rather than a design planned for marketplace scale. The stack had evolved over time to meet immediate needs. As traffic and the number of microservices grew, the architecture started to show its limits. The tools worked, but they were not unified. The stack provided visibility, but it needed constant management to stay stable.

Fragmented Observability Stack

Metrics and traces lived in separate dashboards. When engineers investigated incidents, they often started in one system and moved to another to build a fuller picture. Every pivot added friction.

Correlation between signals was limited. A latency spike in one service had to be checked manually against traces stored elsewhere. Dependency chains were not immediately visible. Investigations depended more on the engineer’s experience than on structured telemetry linkage.

Operational ownership also stayed in-house. The team was responsible for the ingestion infrastructure and storage management. Index lifecycle tuning and data retention changes needed regular checks. The observability infrastructure was not fully managed; it was another system to maintain alongside production. Over time, this structure created a subtle drag:

- Context switching while debugging

- Assembling telemetry insights by hand

- Ongoing maintenance work to sustain performance

The stack delivered data, but it did not always deliver clarity.

Scaling Limitations

As booking volume and traffic spikes grew, scaling limits became clear. Ingestion rates rose sharply during peak travel periods. The system handled the load, but query performance became inconsistent under stress.

- During booking spikes, write-heavy traffic patterns put more pressure on ingestion.

- Under high load, query latency increased, particularly when engineers needed quick answers.

- Visibility slowed down when speed mattered most.

Costs tracked traffic behavior. Ingestion growth led to reactive spending increases. Budgets were harder to forecast because seasonal spikes inflated data volume. At the same time, operations became more complex: more services produced more telemetry, which meant more storage management work.

Technical Implementation with CubeAPM

CubeAPM provides a unified observability platform designed for high-scale distributed systems. It correlates metrics, traces, logs, and infrastructure signals in a single investigative workflow. This unified telemetry architecture allows engineering teams to diagnose incidents faster while eliminating the need to operate and maintain multiple monitoring tools.

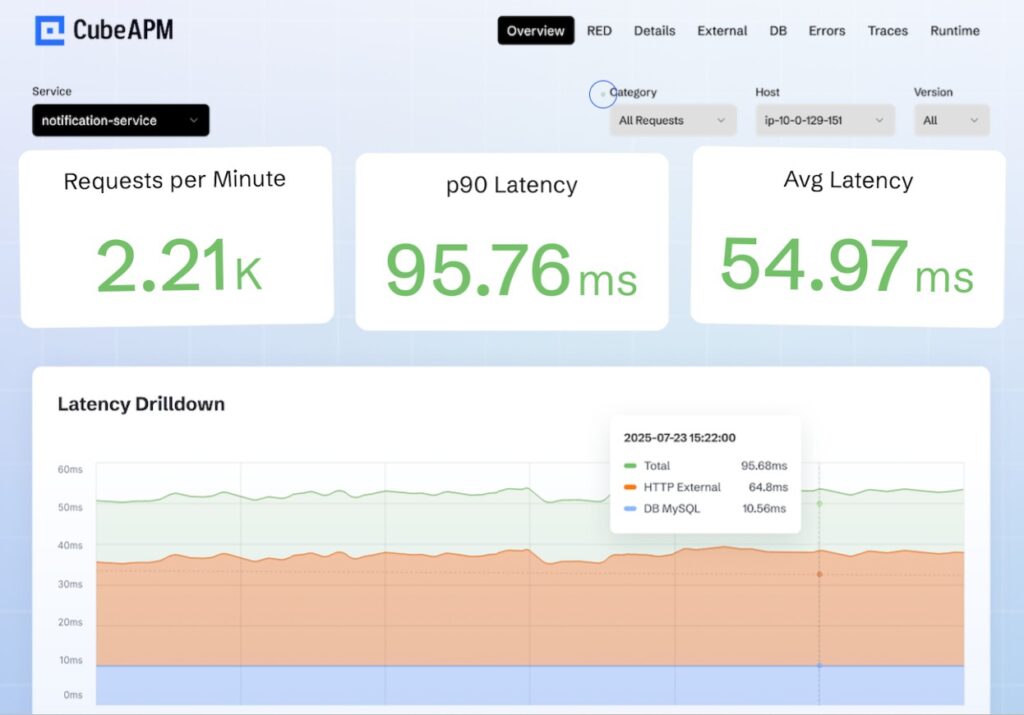

Unified Telemetry

Distributed services across the redBus platform were instrumented using OpenTelemetry, allowing trace context propagation across microservices running in a Kubernetes-based infrastructure. Metrics and traces flowed through a unified telemetry pipeline, enabling engineers to move directly from alerts to trace-level root cause analysis.

When latency appeared in one component, its upstream and downstream dependencies were visible in the same workflow. This changed how incidents were handled: teams now get clearer signal alignment with fewer query loops and less context switching.
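To make trace context propagation concrete, here is a minimal sketch of what travels between services. It assumes the W3C Trace Context `traceparent` header, which OpenTelemetry uses by default. In practice the OpenTelemetry SDKs handle this automatically; the helper names `parse_traceparent` and `child_traceparent` are illustrative and are not taken from the redBus implementation.

```python
import re
import secrets

# W3C Trace Context "traceparent" header layout: version-traceid-parentid-flags
TRACEPARENT_RE = re.compile(
    r"^([0-9a-f]{2})-([0-9a-f]{32})-([0-9a-f]{16})-([0-9a-f]{2})$"
)


def parse_traceparent(header: str) -> dict:
    """Split a traceparent header into its four fields."""
    match = TRACEPARENT_RE.match(header.strip().lower())
    if match is None:
        raise ValueError(f"malformed traceparent: {header!r}")
    version, trace_id, parent_id, flags = match.groups()
    return {
        "version": version,
        "trace_id": trace_id,
        "parent_id": parent_id,
        "flags": flags,
    }


def child_traceparent(incoming: str) -> str:
    """Build the header a service sends downstream: same trace ID, new span ID."""
    ctx = parse_traceparent(incoming)
    new_span_id = secrets.token_hex(8)  # 8 random bytes -> 16 hex characters
    return f"{ctx['version']}-{ctx['trace_id']}-{new_span_id}-{ctx['flags']}"


# Hypothetical hop: a search service calling a seat-availability service.
incoming = "00-4bf92f3577b34da6a3ce929d0e0e4736-00f067aa0ba902b7-01"
outgoing = child_traceparent(incoming)
```

Because every hop keeps the same trace ID, a backend can reassemble the full request path and show upstream and downstream dependencies in one view.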

Optimized Storage and Ingestion

The ingestion layer moved to a managed model, so internal scaling adjustments were no longer necessary during high-traffic periods. Engineers no longer treated ingestion clusters as a separate reliability concern that needed watching.

Storage lifecycle management was improved so that retention matched operational value. The telemetry strategy shifted from retaining everything equally to being more selective. CubeAPM’s Smart sampling prioritizes high-signal data while keeping data volume from growing too quickly. This meant:

- Keeping error traces and latency anomalies at full fidelity

- Reducing low-signal telemetry during normal operation

- Preserving investigative depth without raising ingestion cost
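The retention rules in this list can be sketched as a tail-style sampling decision made once a trace is complete. This is an illustration of the general idea only, not CubeAPM’s actual Smart sampling algorithm. The `TraceSummary` type, the latency threshold, and the baseline keep ratio are all hypothetical.

```python
import hashlib
from dataclasses import dataclass


@dataclass
class TraceSummary:
    trace_id: str
    has_error: bool
    duration_ms: float


# Hypothetical settings, not values from redBus or CubeAPM.
LATENCY_THRESHOLD_MS = 1500.0
BASELINE_KEEP_RATIO = 0.10


def keep_trace(trace: TraceSummary) -> bool:
    """Decide whether a completed trace is retained."""
    if trace.has_error:
        return True  # error traces are always kept
    if trace.duration_ms >= LATENCY_THRESHOLD_MS:
        return True  # latency outliers are always kept
    # Hash the trace ID into [0, 1) so the decision is stable per trace.
    digest = hashlib.sha256(trace.trace_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") / 2**64
    return bucket < BASELINE_KEEP_RATIO
```

Hashing the trace ID instead of drawing a random number means every component that evaluates the same trace reaches the same decision, which avoids retaining partial traces.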

Faster Dashboards and Queries

Dashboards stayed responsive during peak periods, even when booking volume rose sharply. Query latency stayed consistent under high concurrency, so engineers could retrieve telemetry at the pace of production.

When dashboards load quickly during peak periods, engineers act right away, with no doubt about how fresh or complete the data is. Incident investigation becomes a straight line instead of a loop. The performance bottlenecks that used to appear during ingestion bursts were gone, and visibility stayed consistent across normal operations and seasonal spikes.

Managed Infrastructure Layer

The engineering team no longer had to handle cluster maintenance, storage rebalancing, or upgrade coordination. The practical effect was measurable.

Engineers stopped setting aside time for lifecycle tuning and ingestion scaling changes, and operational drag decreased. Focus returned to service performance and system resilience. Over time, observability stayed aligned with production scale without adding maintenance complexity.

Migration Strategy: Execution with No Downtime

Migrating observability in a high-traffic marketplace requires careful planning to avoid production risk. Booking traffic, search queries, and payment flows must remain uninterrupted during the transition.

Dual-Write Phase

Telemetry was sent to both the existing platform and CubeAPM simultaneously. This allowed engineers to validate signal completeness, latency, and trace integrity without affecting production systems. During this phase the team verified:

- Metrics accuracy across critical services

- End-to-end trace propagation

- Alert thresholds triggered correctly

- Dashboard outputs matched production behavior
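A parity check of this kind can be sketched as a comparison of the same metrics read from both backends over an identical time window. The metric names, sample values, and the 2% tolerance below are hypothetical, and the article does not describe the tooling redBus actually used for validation.

```python
def relative_diff(a: float, b: float) -> float:
    """Relative difference between two readings, 0.0 when both are zero."""
    largest = max(abs(a), abs(b))
    return 0.0 if largest == 0 else abs(a - b) / largest


def parity_report(
    legacy: dict[str, float],
    candidate: dict[str, float],
    tolerance: float = 0.02,
) -> dict[str, list[str]]:
    """Compare per-metric values captured from both backends over the same window."""
    missing = sorted(set(legacy) - set(candidate))
    mismatched = sorted(
        name
        for name in set(legacy) & set(candidate)
        if relative_diff(legacy[name], candidate[name]) > tolerance
    )
    return {"missing": missing, "mismatched": mismatched}


# Made-up readings for illustration only.
legacy = {"checkout.requests": 12000, "checkout.errors": 36, "search.p95_ms": 420}
candidate = {"checkout.requests": 11950, "checkout.errors": 30}

report = parity_report(legacy, candidate)
# report == {"missing": ["search.p95_ms"], "mismatched": ["checkout.errors"]}
```

An empty report for a service is one reasonable gate before moving that service off the legacy pipeline.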

Before any cutover took place, engineers confirmed that the new pipeline reflected the real state of production. This approach reduced migration risk and built confidence in the new stack.

Migrating Services Gradually

Instead of moving the entire system at once, services were migrated incrementally. High-impact workflows were transitioned carefully while monitoring guardrails ensured alerting and dashboards remained accurate.

This staged approach reduced risk and allowed the team to validate performance under real traffic before expanding the rollout. Any inconsistencies were resolved early, preventing instability as migration progressed.

Final Cutover

After confirming telemetry parity and stable performance, the platform completed the transition to the unified observability stack. Legacy ingestion pipelines were retired and operational workflows consolidated.

Throughout the migration:

- Booking traffic remained stable

- Incident detection and dashboard visibility were preserved

- The transition completed with zero downtime

Measurable Improvements in Performance

Improvements in observability only matter if they lead to measurable operational change. Here, the performance gains changed how incidents were handled, how engineers worked under pressure, and how well the platform coped with peak booking periods.

4× Faster Dashboards

Dashboards performed much better, even during peak periods. Telemetry that used to load slowly was now available right away. Engineers investigating a surge no longer waited for views to load before acting. Faster dashboards meant:

- Immediate visibility into service health when traffic spikes

- Shorter feedback loops while looking into an incident

- Faster validation after putting fixes in place

When dashboards respond right away under load, investigation becomes linear instead of iterative. Engineers act as soon as they see a problem, without worrying about stale data.

MTTR Reduced by 50%

Mean time to resolution fell by half. The improvement came from a structural change in signal correlation: metrics and traces worked together in a single workflow, so engineers could go from symptom to trace context without reconstructing anything by hand.

Root cause isolation sped up because service dependencies were visible in one path. Engineers could connect latency spikes, error rates, and downstream failures without switching tools. The shorter investigation cycle reduced downtime and kept transactions flowing during peak booking periods.
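For readers who want to track the same metric, MTTR is commonly computed as the mean time between detection and resolution across incidents. The sketch below uses made-up incident timestamps, chosen only so that the arithmetic is easy to follow. They are not redBus incident data.

```python
from datetime import datetime


def mttr_minutes(incidents: list[tuple[str, str]]) -> float:
    """Mean minutes between detection and resolution.

    Each incident is a (detected, resolved) pair of ISO 8601 timestamps.
    """
    durations = [
        (datetime.fromisoformat(resolved) - datetime.fromisoformat(detected)).total_seconds() / 60
        for detected, resolved in incidents
    ]
    return sum(durations) / len(durations)


# Made-up incident windows for illustration only.
before = [
    ("2024-01-05T10:00:00", "2024-01-05T11:20:00"),  # 80 minutes
    ("2024-01-12T18:30:00", "2024-01-12T19:10:00"),  # 40 minutes
]
after = [
    ("2024-03-02T09:00:00", "2024-03-02T09:35:00"),  # 35 minutes
    ("2024-03-09T21:00:00", "2024-03-09T21:25:00"),  # 25 minutes
]

reduction = 1 - mttr_minutes(after) / mttr_minutes(before)
# With these sample values: 60 minutes before, 30 minutes after, reduction 0.5
```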

Reduced Operational Overhead

Engineers no longer had to manage ingestion clusters or adjust storage lifecycles as part of their daily work. Time-consuming maintenance tasks were taken off the production critical path.

This change returned engineering capacity to reliability and product work. Instead of tuning storage or coordinating upgrades, teams worked on improving the booking experience and service reliability. Over time, this made the platform more stable.

Responsive 24/7 Support

Incident response improved beyond tooling. Support responsiveness improved significantly: on-call help was measured in minutes instead of ticket cycles, and escalation paths were clear and matched production urgency.

During peak periods, this responsiveness reduced recovery time and on-call stress. Faster escalation meant fewer prolonged outages and quicker restoration of normal booking flow. In a revenue-sensitive marketplace, support speed became a key part of the reliability strategy instead of an afterthought.

Cost Modeling: From Reactive to Predictable Spending

At marketplace scale, observability cost is a variable that grows with the business. As searches and bookings increase, so does telemetry. Without careful cost alignment, observability spending becomes reactive rather than planned.

Previous Cost Structure

In the old model, telemetry growth meant billing growth. Seasonal booking spikes raised ingestion volume over short periods, and costs followed the same pattern. This put a strain on operations and finances:

- During peak travel periods, ingestion spikes led to billing spikes.

- Peak-period costs were hard to forecast because traffic patterns were unpredictable.

- Infrastructure management added indirect costs beyond licensing.

Hidden costs accumulated in day-to-day operations. Engineering time spent managing ingestion clusters, scaling storage, and tuning lifecycles carried an opportunity cost and slowed delivery. Over time, observability spending became reactive: budgets changed in response to traffic swings rather than planned growth.

CubeAPM’s Cost Framework

The migration made observability economics structurally more transparent.

- Pricing is based on transparent ingestion volume and clear scaling parameters

- The spending model is aligned with traffic growth

- The cost impact of seasonal demand can be forecast

- Managed infrastructure lowers the total cost of ownership

Cost conversations shifted from damage control to strategic capacity planning. With telemetry economics clear, sustainable growth became predictable. The organization could grow booking volume without also growing uncertainty about its spending.

With ingestion-aligned pricing, observability costs scaled predictably with booking traffic rather than fluctuating unpredictably during peak travel seasons.
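As a rough illustration of why an ingestion-based model is easy to forecast, the sketch below projects monthly cost from a baseline daily volume and a block of peak days. The volumes, the peak multiplier, and the flat per-GB rate are hypothetical placeholders, not redBus figures or CubeAPM prices.

```python
def project_monthly_ingest_gb(
    baseline_gb_per_day: float,
    peak_days: int,
    peak_multiplier: float,
    days_in_month: int = 30,
) -> float:
    """Total GB ingested in a month that contains a block of peak days."""
    normal_days = days_in_month - peak_days
    return baseline_gb_per_day * (normal_days + peak_days * peak_multiplier)


def monthly_cost(ingest_gb: float, price_per_gb: float) -> float:
    """Ingestion-based cost: volume times a flat per-GB rate."""
    return ingest_gb * price_per_gb


# Hypothetical inputs for illustration only.
quiet_month = project_monthly_ingest_gb(500.0, peak_days=0, peak_multiplier=1.0)
festive_month = project_monthly_ingest_gb(500.0, peak_days=5, peak_multiplier=3.0)

print(monthly_cost(quiet_month, 0.25))    # 3750.0
print(monthly_cost(festive_month, 0.25))  # 5000.0
```

Because cost is a linear function of volume, a traffic forecast for a holiday period translates directly into a budget figure.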

Operational and Business Impact

Technical improvements are only useful if they change how teams work and how the business operates under pressure. Here, the transformation affected both engineering practice and organizational confidence.

Impact on the Engineering Team

With unified telemetry and faster dashboards, investigation sped up. Engineers no longer waited for queries to return or pieced together context from different tools. This led to measurable improvements in how work got done:

- Fewer query iterations and faster debugging cycles

- Less firefighting during busy traffic times

- More visibility across all services in the system

When signal correlation is built in and dashboards respond right away, decisions are faster. When everyone works from the same telemetry context, cross-team collaboration improves. On-call stress also went down: with infrastructure maintenance removed from daily tasks, engineers could focus on reliability engineering instead of managing tools.

Impact on Business

Stable observability performance during booking surges made uptime more reliable. Traffic spikes no longer caused gaps in visibility or delays in detection. Key results:

- Stable monitoring performance during peak booking seasons

- Greater confidence in uptime among executive and engineering leadership

- Predictable budgeting aligned with traffic growth

- Better alignment with enterprise governance expectations

When performance stays consistent and costs scale transparently, leaders can plan for growth with fewer operational unknowns. For a high-volume travel marketplace subject to public-company requirements, resilience and financial discipline go hand in hand.

Lessons for High-Scale Platforms

Organizations operating large distributed systems, such as digital marketplaces, can improve observability outcomes by:

- Unifying telemetry signals across metrics, traces, and logs

- Implementing distributed tracing for microservices monitoring

- Aligning observability costs with telemetry ingestion volume

- Using Kubernetes-native observability architectures

- Ensuring dashboards remain responsive during traffic spikes

- Reducing operational overhead by eliminating observability infrastructure management

For a deeper look at how redBus implemented CubeAPM and what changed afterward, explore the full redBus case study.

Testimonial

“Running a large-scale travel platform like redBus means uptime and rapid issue resolution are critical. With CubeAPM, we improved incident visibility and significantly reduced investigation time. The cost savings compared to our previous observability stack were also substantial.”

CTO, redBus (MakeMyTrip Limited)

Disclaimer: The information in this article reflects the latest details available at the time of publication and may change as technologies and products evolve.

FAQs

How did this marketplace reduce MTTR by 50 percent?

The platform unified metrics and traces into a single workflow. Engineers could move from alert to trace-level root cause without switching tools. Faster signal correlation reduced investigation time and cut MTTR by 50 percent.

How do large platforms keep dashboards fast during peak traffic?

They optimize ingestion and storage for high concurrency and heavy write loads. Efficient query architecture and structured telemetry prevent dashboard lag during traffic surges.

Why do observability costs spike at scale?

Telemetry volume grows with traffic. Ingestion-based pricing, high-cardinality data, and long retention periods drive unpredictable cost increases during peak seasons.

What does managed observability mean in practice?

It removes infrastructure responsibility from internal teams. No cluster maintenance, no index tuning, no upgrade coordination. Engineers focus on reliability, not tooling operations.

How can enterprises migrate observability platforms without downtime?

They validate telemetry in parallel, migrate services incrementally, and confirm alert and dashboard accuracy before full cutover. Production traffic remains uninterrupted throughout the transition.