Observability costs are a growing concern for DevOps and software engineering teams, and the numbers reflect it. In 2025, Gartner reported that 36% of its clients spent over $1M annually on observability, and 4% spent over $10M. Costs are clearly getting out of control. Decision makers often choose tools for their rich feature sets, but that calculus changes as the system scales and costs become unpredictable.

In this case study, we examine how an engineering team reduced their observability spending by 70% while maintaining full visibility across logs, metrics, and traces. The following sections outline the original infrastructure baseline, the factors that caused cost escalation, and the architectural changes that enabled long-term cost control.

Infrastructure Baseline

Understanding the scale of the challenge starts with a snapshot of the environment before the migration. The organization operated a cloud-native ecosystem, which made observability a necessity rather than an option.

Environment Snapshot

The infrastructure was built on a modern stack designed for scalability, which also meant that observability costs rose as usage grew.

- Cloud Provider: The organization used a public cloud provider for multi-region deployments, with autoscaling at both the node and pod levels. This introduced surprise bills, especially when traffic spiked.

- Kubernetes Clusters: Kubernetes clusters with hundreds of nodes were used to support a web of microservices.

- Telemetry Volume: The company grew rapidly, and telemetry volume grew steadily with it, reaching approximately 45 TB per month across logs, traces, and metrics. Because the system was distributed, trace depth was significant due to multi-hop service chains.

- Retention Requirements: Engineering teams required 5-month data retention periods for trend analysis and historical debugging.

- Compliance Requirements: Compliance requirements shaped retention limits and data storage. The company operated in jurisdictions where regional laws required data to stay within a designated region. Observability, data governance, and auditability needs together forced the company to adjust both retention periods and data location.

Observability Requirements

Despite the cost pressure, the engineering team could not compromise on performance or reliability. Operations teams needed the following observability capabilities to maintain stability and performance:

- 100% distributed tracing expectations: The primary goal was complete trace coverage for all critical services, since traces were essential for error and latency investigations.

- Log search latency expectations: Engineers needed to debug in real time during incidents, so logs had to remain searchable with low latency. Slow queries would be a major bottleneck when triaging issues.

- Alerting and SLO requirements: Alerts were based on SLOs and production reliability targets. The observability platform of choice had to support robust alerting without increasing noise or metrics costs.

- Engineering team size and workflow: Cost governance was assigned to the DevOps team because they already managed the telemetry pipelines and observability infrastructure. Centralizing ownership reduced operational fragmentation and improved visibility into ingestion volume, retention policies, and monitoring workflows across multiple dashboards and tools.

Original Observability Architecture (Before CubeAPM)

Prior to the migration, the organization relied on a SaaS-based platform and a traditional, fragmented monitoring approach.

Tooling Stack

- APM vendor: The DevOps team relied on a commercial APM solution. It was natively SaaS but also offered a self-hosted option; however, self-hosting meant the team would have to handle scaling, tuning, and maintenance themselves, adding operational overhead. The platform used a host-based pricing model, and costs rose sharply whenever the infrastructure scaled.

- Log indexing vendor: Teams used a separate platform for log indexing, billed per GB ingested and indexed. This fragmentation forced teams to switch between tools to find root causes and debug issues during incidents.

- Metrics backend: Metrics were either handled within the APM platform or routed to a separate backend optimized for time-series storage. High-cardinality dimensions and custom metrics introduced additional billing exposure over time.

- Separate dashboards and systems: Teams had to switch between tools to reach the signals they needed during incidents; as noted above, log search lived in an entirely separate platform.

Pricing Model Breakdown

- Host-based pricing: APM licensing, and therefore cost, was tied to the number of monitored hosts. With Kubernetes autoscaling enabled, node counts, and with them costs, rose even when request volumes remained stable.

- Log ingestion pricing: The company was billed for both ingested and indexed logs. Because the DevOps teams had adopted structured logging, ingestion volume grew steadily as services expanded, and indexing costs compounded month over month.

- Retention tiers: The APM in use had a default retention period of 30 days. Retention beyond this default window attracted additional costs for the company as teams were required to upgrade to higher storage tiers. Longer retention periods, for historical depth and trend analysis, meant materially higher monthly costs. Such expenses forced a trade-off between budget control and operational visibility.

- Overage penalties: Incidents were a significant source of surprise bills. Traffic spikes caused unexpected log surges and subsequent overage charges, which were difficult to predict and introduced budget volatility during high-growth periods. For DevOps teams, it felt like the pricing model penalized them at exactly the moment they most needed visibility.

- Scaling impact under Kubernetes: Because infrastructure scaled horizontally and pods churned frequently, both host-based licensing and ingestion-based indexing charges amplified cost growth. Observability spend became tightly coupled to scaling events rather than strictly to business value.

Operational Constraints

To reduce the costs, the team was forced to adopt aggressive measures:

- Sampling to reduce cost: To control costs, the team adopted trace sampling during peak periods. This reduced ingestion volume but introduced blind spots, most of which were performance-related issues that went unrecorded.

- Tool fragmentation: Logs remained on a separate platform, and context switching between tools during investigations increased the time needed to resolve issues.

- Vendor lock-in limitations: Proprietary agents limited flexibility. Switching vendors required re-instrumenting applications: the legacy APM's proprietary code had to be removed and replaced with the new vendor's instrumentation to maintain visibility into application performance, which made any migration significantly more challenging.

Root Cause Analysis: Why Costs Escalated

To understand why costs rose month over month, the team examined the architecture, focusing on why it drove costs up when the system was under load or during incidents. They uncovered five primary cost drivers inherent to the legacy stack:

Kubernetes Horizontal Scaling Effect

Infrastructure growth was healthy and expected: as demand grew, Kubernetes scaled pods and nodes accordingly. However, because the APM used host-based pricing, every scaling event increased observability cost. Even temporary autoscaling during peak hours added cost.

In a Kubernetes-native environment, elasticity is a feature. Under a host-based model, that elasticity became a cost multiplier.
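To make the multiplier concrete, the Python sketch below compares a host-based bill with an ingestion-based bill for a single day in which request volume (and therefore telemetry) stays flat while the cluster scales out. It assumes the host-based vendor prorates per host-hour; the `Hour` type, node counts, hourly telemetry volume, and both rates are hypothetical illustrations, not the company's contract terms.

```python
from dataclasses import dataclass

# Hypothetical rates for illustration only; not any vendor's published pricing.
HOST_RATE_PER_HOUR = 30 / 730   # a $30/host/month rate prorated over ~730 hours
INGEST_RATE_PER_GB = 0.15       # per-GB rate of the kind used later in this case study


@dataclass
class Hour:
    nodes: int           # node count after autoscaling during this hour
    telemetry_gb: float  # telemetry emitted during this hour


def host_based_cost(hours: list[Hour]) -> float:
    """Bill every node for every hour it exists, regardless of traffic."""
    return sum(h.nodes * HOST_RATE_PER_HOUR for h in hours)


def ingestion_based_cost(hours: list[Hour]) -> float:
    """Bill only the telemetry that was actually shipped."""
    return sum(h.telemetry_gb * INGEST_RATE_PER_GB for h in hours)


# Same telemetry every hour, but a six-hour scale-out from 100 to 180 nodes.
flat_day = [Hour(nodes=100, telemetry_gb=60.0) for _ in range(24)]
scaled_day = [Hour(nodes=100, telemetry_gb=60.0) for _ in range(18)] + [
    Hour(nodes=180, telemetry_gb=60.0) for _ in range(6)
]

host_increase = host_based_cost(scaled_day) / host_based_cost(flat_day) - 1
ingest_increase = ingestion_based_cost(scaled_day) / ingestion_based_cost(flat_day) - 1
print(f"host-based: +{host_increase:.0%}, ingestion-based: +{ingest_increase:.0%}")
```

With these assumed numbers, the host-based bill rises by about 20% for the day while the ingestion-based bill does not move, which is the coupling to scaling events described above.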

Indexed Log Explosion

Structured logging improved debugging quality but increased ingestion volume significantly. Every additional field, request ID, tenant identifier, and contextual attribute expanded indexed data size. Since billing was based on gigabytes indexed per day, richer logs translated directly into higher monthly charges.

As services multiplied, log volume grew non-linearly. What started as helpful context gradually became a pricing liability.
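The sketch below estimates how richer structured logs change a per-GB ingestion bill. It measures the serialized size of a hypothetical minimal log record and of the same record with request ID, tenant, and Kubernetes context attached. The $0.12/GB rate comes from the pre-migration table; the daily line count and the record contents are assumptions.

```python
import json

INGEST_RATE_PER_GB = 0.12      # log ingestion rate from the pre-migration table
LINES_PER_DAY = 200_000_000    # hypothetical daily log line count

# A hypothetical minimal record...
minimal = {"ts": "2025-01-01T00:00:00Z", "level": "INFO", "msg": "order created"}

# ...and the same event after "helpful context" was attached.
enriched = {
    **minimal,
    "request_id": "7f3c9a2e-5b1d-4c8e-9f00-1a2b3c4d5e6f",
    "tenant_id": "tenant-00421",
    "region": "eu-west-1",
    "service": "checkout-api",
    "pod": "checkout-api-6d9f7c8b5-x2kqp",
    "http_route": "/v1/orders",
    "duration_ms": 182,
}


def monthly_cost(record: dict) -> float:
    """Estimated monthly ingestion cost if every log line had this shape."""
    bytes_per_line = len(json.dumps(record).encode("utf-8"))
    gb_per_month = bytes_per_line * LINES_PER_DAY * 30 / 1e9   # decimal GB
    return gb_per_month * INGEST_RATE_PER_GB


growth = monthly_cost(enriched) / monthly_cost(minimal)
print(f"minimal: ${monthly_cost(minimal):,.0f}/mo  enriched: ${monthly_cost(enriched):,.0f}/mo  ({growth:.1f}x)")
```

Each added field is small on its own, but multiplied across every line and every day, the bill grows with the width of the record even when traffic is flat.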

Trace Sampling Trade-Off

To offset rising costs, trace sampling ratios were reduced during periods of high growth. While this controlled ingestion, it introduced investigation gaps. Non-error performance degradations and intermittent latency spikes were sometimes excluded from sampled datasets.

Sampling became a financial decision rather than an architectural one. Visibility was no longer deterministic.
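To show why rate reductions create investigation gaps, this Python sketch applies head-based ratio sampling, where the keep/drop decision is made from the trace ID when the trace starts, before anyone knows whether it will fail. The threshold comparison is modeled loosely on ratio-based samplers rather than copied from any SDK; the 10% ratio, the 1% error rate, and the synthetic traffic are assumptions.

```python
import random

RATIO = 0.10                 # hypothetical head-sampling ratio chosen under budget pressure
MASK_64 = (1 << 64) - 1


def head_sample(trace_id: int, ratio: float) -> bool:
    """Decide at trace start using only the trace ID; the outcome is still unknown."""
    return (trace_id & MASK_64) < int(ratio * (1 << 64))


rng = random.Random(7)  # fixed seed so the sketch is reproducible
# Synthetic traffic: 100,000 traces with random 128-bit IDs, ~1% of which end in an error.
traces = [(rng.getrandbits(128), rng.random() < 0.01) for _ in range(100_000)]

errors_total = sum(1 for _, is_error in traces if is_error)
errors_kept = sum(1 for tid, is_error in traces if is_error and head_sample(tid, RATIO))
print(f"error traces kept: {errors_kept}/{errors_total} ({errors_kept / errors_total:.0%})")
```

Roughly nine out of ten error traces are discarded, not because they were unimportant but because the decision was made before the outcome was known.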

Data Duplication Across Tools

Telemetry was often ingested into multiple systems simultaneously. Logs were fed into the indexing platform while metrics were exported separately, and traces were retained within the APM vendor’s backend. In some cases, overlapping collectors created duplicate ingestion streams.

This became another cost contributor, since a single telemetry event could be billed in more than one tool. Over time, redundant ingestion inflated total observability cost without adding insight.

Retention Inflation

The system scaled, usage grew, and so did the retention requirement. To perform trend analysis and other critical tasks such as post-incident analysis and regression comparisons, the engineering team required historical depth. The result was an extended retention period beyond the default window, which was billed at premium tiers.

The team faced recurring trade-offs between maintaining adequate history and controlling monthly cost. Observability strategy was increasingly shaped by billing constraints.

Required Design Principles for the New Architecture

Before selecting a new platform, the team established a set of design principles it had to satisfy:

Cost proportional to telemetry, not infrastructure

The engineering team and all stakeholders reached a consensus that observability costs needed to scale with the telemetry data generated and not with underlying hosts or node counts. Infrastructure elasticity should not automatically trigger billing escalation. Pricing alignment had to reflect telemetry volume rather than Kubernetes mechanics.

Maintain full error trace capture

Teams recognized that a robust system is one that reliably captures valuable signals. For critical services, they needed deterministic visibility into failures and anomalies, so the chosen architecture had to preserve full error trace capture while optimizing the overall ingestion strategy.

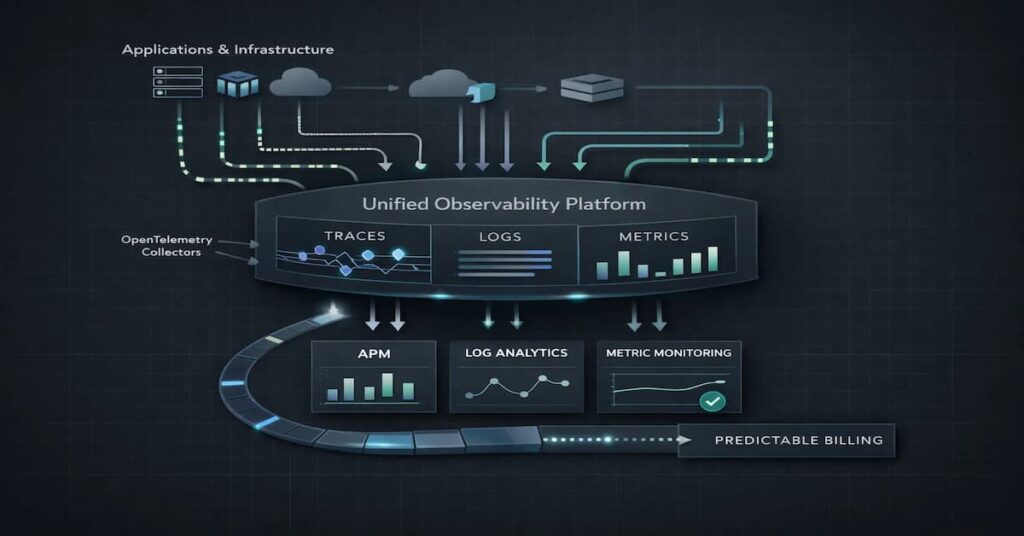

Unified logs, metrics, and traces

Fragmented tooling increased investigation time and duplicated ingestion paths. The team recognized the importance of a unified platform that makes it easy to correlate logs, metrics, and traces in one place.

OpenTelemetry-native foundation

Relying on proprietary agents as data collectors had limited the team's options. The new platform needed not only to support OpenTelemetry but to be built around OpenTelemetry standards, ensuring portability and long-term architectural independence.

Predictable billing under scale

Cost volatility created planning friction. The new model had to eliminate overage surprises and tier-based unpredictability. Engineering leadership required cost modeling that could be forecast accurately as telemetry volume grew.

Minimize operational disruption

The team had to avoid any downtime or telemetry blackout during migration. The new architecture needed to integrate with existing instrumentation while allowing a controlled, parallel rollout.

New Observability Architecture with CubeAPM

Transitioning to CubeAPM marked a major milestone in how the team managed observability costs, data retention, and compliance. They moved from fragmented monitoring to a unified observability platform built around OpenTelemetry standards, and redesigned their data pipelines so that costs were decoupled from Kubernetes scaling events.

Unified Telemetry Ingestion

- Single pipeline for logs, metrics, and traces: CubeAPM gave the team a unified data pipeline built around OpenTelemetry collectors. All signals flowed through a standardized ingestion layer into CubeAPM, eliminating the redundant work of shipping logs, traces, and metrics to separate backends through overlapping agents.

- OpenTelemetry collector support: Engineering teams did not need to re-instrument their applications from scratch; application code remained largely unchanged. OpenTelemetry collectors were configured to normalize attributes, enforce semantic conventions, and eliminate redundant exporters. These changes significantly reduced the duplicate ingestion that the multi-tool setup had caused and simplified pipeline management.

- Compatibility with existing agents: Legacy agents remained in place at first and were phased out gradually, while OpenTelemetry exporters were introduced step by step to avoid a disruptive cutover. This gave the team room to validate completeness before decommissioning the old stack.
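In this case study, attribute normalization ran inside OpenTelemetry collectors. As an illustration of the mapping logic only (not collector configuration), the following Python sketch renames legacy attribute keys to OpenTelemetry semantic-convention keys. The legacy names on the left of `RENAMES` are hypothetical; the keys on the right, such as `service.name` and `http.response.status_code`, are standard semantic-convention names.

```python
# Hypothetical legacy keys mapped to OpenTelemetry semantic-convention keys.
RENAMES = {
    "svc": "service.name",
    "http_method": "http.request.method",
    "http_status": "http.response.status_code",
    "pod_name": "k8s.pod.name",
    "namespace": "k8s.namespace.name",
    "region": "cloud.region",
}


def normalize(attributes: dict) -> dict:
    """Rename known legacy keys and pass every other key through unchanged.

    If a record carries both a legacy key and its semantic-convention
    equivalent, the semantic-convention value wins.
    """
    out = {}
    for key, value in attributes.items():
        target = RENAMES.get(key, key)
        if key != target and target in out:
            continue  # a semconv key already set this value; keep it
        out[target] = value  # semconv keys always overwrite legacy-derived values
    return out


legacy = {"svc": "checkout-api", "http_status": 502, "pod_name": "checkout-api-6d9f7c8b5-x2kqp"}
print(normalize(legacy))
```

Doing this once, centrally, is what lets dashboards and queries rely on a single attribute name per concept regardless of which team emitted the data.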

Ingestion-Based Pricing Model

- Shift from host-based to telemetry-based billing: Pricing was now based on telemetry volume rather than host count, so cost was proportional to the telemetry ingested and Kubernetes autoscaling no longer affected the bill.

- Alignment of cost with actual usage: Because infrastructure footprint no longer affected billing, autoscaling events introduced no hidden cost multipliers. Cost modeling was now tied to the amount of telemetry generated, improving forecast accuracy.
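Because cost is now a single function of volume, forecasting reduces to projecting telemetry growth. The sketch below assumes steady compound growth plus an optional headroom factor for burst months; the 3% monthly growth and 10% buffer are hypothetical planning inputs. It treats 1 TB as 1,000 GB, the convention under which 45 TB at $0.15/GB equals $6,750.

```python
RATE_PER_GB = 0.15   # ingestion rate used in this case study


def forecast(base_tb: float, monthly_growth: float, months: int, peak_buffer: float = 0.0) -> list[float]:
    """Projected monthly cost under steady compound growth in telemetry volume.

    peak_buffer adds headroom for burst months, e.g. 0.10 for +10%.
    """
    costs = []
    tb = base_tb
    for _ in range(months):
        gb = tb * 1_000                                   # decimal terabytes
        costs.append(gb * RATE_PER_GB * (1 + peak_buffer))
        tb *= 1 + monthly_growth                          # next month's volume
    return costs


plan = forecast(base_tb=45, monthly_growth=0.03, months=12, peak_buffer=0.10)
print(f"month 1: ${plan[0]:,.0f}  month 12: ${plan[-1]:,.0f}  year: ${sum(plan):,.0f}")
```

The buffer term matters: as the lessons-learned section notes, early estimates underestimated span growth during peak traffic.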

Smart Sampling Strategy

- Tail-based sampling: Tail-based sampling analyzes the entire trace before deciding whether to store it. Because the decision is made once the trace is complete, error and high-latency traces can be preserved reliably.

- Error-first prioritization: The system was configured to capture all error traces. Performance anomalies that exceeded latency thresholds were also prioritized, while repetitive traces were selectively sampled.

- Intelligent retention rules: Sampling was aligned with operational risk rather than billing pressure. The primary objective was to improve the signal-to-noise ratio: the team discarded low-value telemetry that added noise while retaining high-value signals.
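The sampling rules above can be expressed as a decision function that runs only after all spans of a trace have arrived. This Python sketch is not CubeAPM's implementation, which the source does not describe; in practice the logic usually lives in a collector (the OpenTelemetry Collector contrib distribution, for example, ships a tail sampling processor with status-code, latency, and probabilistic policies). The `Span` type, latency threshold, and baseline ratio are assumptions.

```python
import hashlib
from dataclasses import dataclass

LATENCY_THRESHOLD_MS = 1_000   # hypothetical latency threshold
BASELINE_KEEP_RATIO = 0.05     # hypothetical share of routine traces to keep


@dataclass
class Span:
    trace_id: str
    duration_ms: float
    is_error: bool


def keep_trace(spans: list[Span]) -> bool:
    """Tail-based decision, made only after every span of the trace is buffered."""
    if not spans:
        return False
    # Rule 1: any error span means the whole trace is kept.
    if any(s.is_error for s in spans):
        return True
    # Rule 2: any span slower than the threshold is a latency anomaly worth keeping.
    if any(s.duration_ms > LATENCY_THRESHOLD_MS for s in spans):
        return True
    # Rule 3: keep a fixed fraction of routine traces, keyed on the trace ID so
    # every collector replica reaches the same decision for the same trace.
    digest = hashlib.sha256(spans[0].trace_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") / 2**64
    return bucket < BASELINE_KEEP_RATIO
```

Hashing the trace ID instead of drawing a random number keeps the decision stable if the same trace is evaluated more than once.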

Tiered Retention Strategy

The new architecture categorizes data into different tiers to optimize cost:

- Hot storage: Recent telemetry was kept on high-performance storage so the team could search it quickly, especially during incidents.

- Warm storage: Historical but less frequently accessed data was retained at lower cost tiers while remaining queryable when needed.

- Long-term archive: Compliance and audit requirements were satisfied through archival storage strategies without incurring premium indexing costs across the entire retention window.
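A tiered layout is easiest to reason about at steady state, where each day of retained data sits in exactly one tier according to its age. The sketch below prices that layout against keeping everything in hot storage. The tier boundaries, per-GB-month rates, and the 365-day archive window are hypothetical; 1,500 GB/day is simply 45 TB spread over 30 days.

```python
# Hypothetical tier boundaries (days of age) and $/GB-month storage rates.
HOT_DAYS = 15
WARM_DAYS = 150          # roughly the 5-month window engineers asked for
RATES = {"hot": 0.10, "warm": 0.03, "archive": 0.004}


def tier_for(age_days: int) -> str:
    """Place a day of data in a tier purely by its age."""
    if age_days < HOT_DAYS:
        return "hot"
    if age_days < WARM_DAYS:
        return "warm"
    return "archive"


def monthly_storage_cost(daily_gb: float, keep_days: int) -> float:
    """Steady-state monthly cost: one day of data at each age from 0 to keep_days - 1."""
    return sum(daily_gb * RATES[tier_for(age)] for age in range(keep_days))


daily_gb = 1_500         # 45 TB/month spread over 30 days
keep_days = 365          # hypothetical compliance archive window
tiered = monthly_storage_cost(daily_gb, keep_days)
all_hot = daily_gb * keep_days * RATES["hot"]
print(f"tiered: ${tiered:,.0f}/mo vs all hot: ${all_hot:,.0f}/mo")
```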

Tool Consolidation

- Replacement of multiple tools: Separate APM, log indexing, and metrics systems were consolidated into a unified observability platform, reducing duplicate ingestion charges and eliminating cross-system investigation friction.

- Reduced context switching: The engineering team now had MELT data (metrics, events, logs, and traces) in a single interface. Engineers could query logs and pivot directly to the related distributed trace within the same platform, which improved MTTR (mean time to recovery), especially during live incidents.

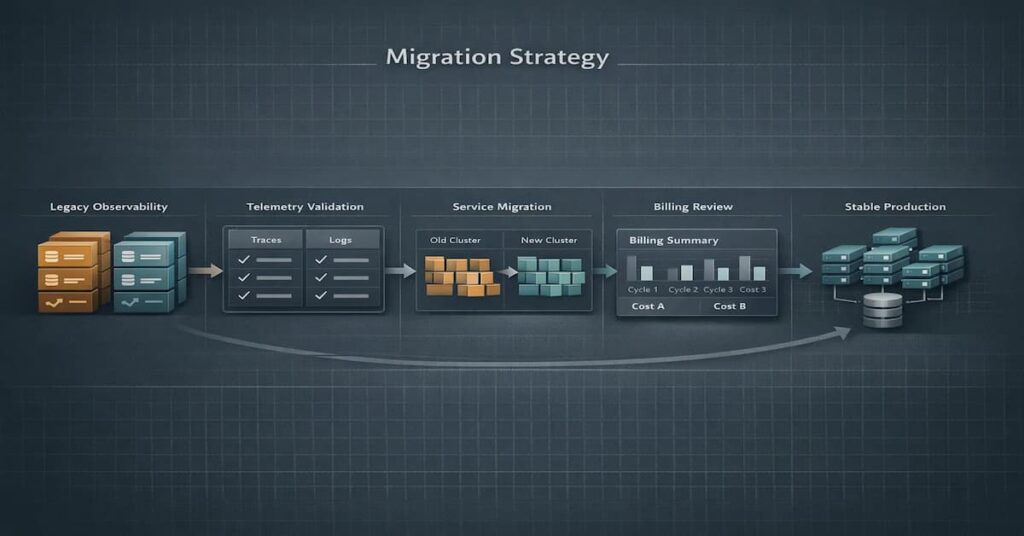

Migration Strategy

The team's overriding goal was to migrate without interrupting live services. It implemented a structured, multi-phase migration designed to mitigate risk and validate data integrity.

Parallel Deployment Phase

The team took a side-by-side approach, deploying CubeAPM alongside the existing observability stack so services kept running without interruption. OpenTelemetry exporters were configured to send data to both systems. Because both systems ingested the same data simultaneously, the engineering team could evaluate them under real production load without any loss of visibility.

The legacy stack remained fully operational during this stage to provide rollback assurance.
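During the parallel phase every batch went to two destinations. With an OpenTelemetry collector this is typically done by listing two exporters in the same pipeline; the Python sketch below models only the fan-out semantics, using hypothetical stand-in exporter classes rather than any real SDK API. The key property is that a failure in one backend is recorded without starving the other.

```python
from typing import Protocol


class Exporter(Protocol):
    name: str

    def export(self, batch: list[dict]) -> None: ...


class RecordingExporter:
    """Hypothetical stand-in for a real backend exporter; it only records batches."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.received: list[dict] = []

    def export(self, batch: list[dict]) -> None:
        self.received.extend(batch)


class FanOutExporter:
    """Send every batch to every backend so both can be compared under real load."""

    def __init__(self, *exporters: Exporter) -> None:
        self.exporters = exporters
        self.failures: dict[str, int] = {}

    def export(self, batch: list[dict]) -> None:
        for exporter in self.exporters:
            try:
                # Pass a shallow copy so one backend cannot reorder or truncate
                # the list that the next backend receives.
                exporter.export(list(batch))
            except Exception:
                # A failing backend is counted but must not block the others.
                self.failures[exporter.name] = self.failures.get(exporter.name, 0) + 1


legacy = RecordingExporter("legacy-apm")
cube = RecordingExporter("cubeapm")
fan_out = FanOutExporter(legacy, cube)
fan_out.export([{"name": "GET /orders", "status": "OK"}])
```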

Telemetry Validation

Before switching to the new vendor, the team undertook a comprehensive data comparison.

- Completeness: The team verified telemetry volume, mainly by comparing log volumes in CubeAPM and the legacy system to confirm they were similar.

- Accuracy: Distributed traces were checked to ensure span relationships and metadata remained intact.

- Integrity: Automated checks were run to confirm no data was lost or corrupted during ingestion.
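The completeness check amounts to comparing per-service record counts from both backends over the same time window. In this sketch, the service names, counts, and 2% tolerance are hypothetical; some tolerance is usually needed because two pipelines rarely close a time window at exactly the same instant.

```python
def compare_counts(legacy: dict[str, int], new: dict[str, int], tolerance: float = 0.02) -> dict[str, str]:
    """Flag services whose record counts differ between backends by more than `tolerance`."""
    report = {}
    for service in sorted(legacy.keys() | new.keys()):
        a, b = legacy.get(service, 0), new.get(service, 0)
        if a == 0 and b == 0:
            report[service] = "ok"
        elif a == 0 or b == 0:
            report[service] = "missing in one backend"
        else:
            drift = abs(a - b) / max(a, b)   # relative difference
            report[service] = "ok" if drift <= tolerance else f"drift {drift:.1%}"
    return report


# Hypothetical hourly log counts per service from each backend.
legacy_counts = {"checkout-api": 1_204_331, "payments": 880_120, "search": 2_310_004}
cubeapm_counts = {"checkout-api": 1_203_998, "payments": 801_455}
print(compare_counts(legacy_counts, cubeapm_counts))
```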

Gradual Service Cutover

The strategy focused on moving the services incrementally.

- Phased Migration: The cutover was handled cluster-by-cluster or service-by-service. This meant migrating lower-risk services first to validate pipeline stability. High-throughput and customer-facing services were migrated after confirming ingestion and retention performance.

- Zero Downtime: This approach allowed the engineering team to monitor the migration’s impact in real time. It ensured end-users experienced zero downtime.

Billing & Risk Validation

Financial performance was tracked as closely as technical performance.

- Cost Comparison: The team monitored billing across multiple cycles to confirm the projected savings were manifesting in reality.

- Rollback Plan: A documented rollback procedure was kept ready for each phase to revert to the legacy stack if any critical issues were detected.

Risk Mitigation

- Zero downtime: At no point was telemetry paused or rerouted in a way that risked blackout. Parallel ingestion provided redundancy throughout the transition.

- Rollback plan: Because the legacy stack remained active during early phases, reverting was technically straightforward if issues had surfaced.

- Data integrity checks: Attribute consistency, context propagation, and trace stitching were audited during rollout to prevent subtle instrumentation regressions.

Quantified Results

Shifting to CubeAPM delivered immediate results. The organization reported measurable improvements across financial and operational metrics.

Cost Impact

At the time of migration, the company’s observability spend reflected the following host-based and indexed billing structure.

- 125 APM-monitored hosts

- 200 infrastructure hosts

- 10 TB/month indexed log ingestion

- 500 million indexed spans per month

- 1.5 million container-hours per month

- 300,000 high-cardinality custom metrics

- Continuous profiling enabled for critical services

- 45 TB/month total telemetry across logs, traces, and metrics

Observed Monthly Cost Structure (Pre-Migration)

Figures reflect the company’s actual observed usage and billing configuration prior to migration. Enterprise discounts and contract variations are not reflected.

| Component | Usage | Rate | Monthly Cost |
|---|---|---|---|
| APM Hosts | 125 hosts | $30 per host | $3,750 |
| Infrastructure Hosts | 200 hosts | $18 per host | $3,600 |
| Profiled Hosts | 40 hosts | $45 per host | $1,800 |
| Profiled Container Hosts | 100 hosts | $3 per host | $300 |
| Container Hours | 1,500,000 hours | $0.003 per hour | $4,500 |
| Custom Metrics | 300,000 metrics | $0.012 per metric | $3,600 |
| Log Ingestion | 10,000 GB | $0.12 per GB | $1,200 |
| Indexed Logs | 3,500M events | $1.85 per million | $6,475 |
| Indexed Spans | 500M spans | $1.70 per million | $925 |
| Total Monthly Cost | | | $22,975 |

Observability Monthly Cost After CubeAPM (Ingestion-Based Model)

| Component | Usage | Rate | Monthly Cost |
|---|---|---|---|
| Total Telemetry (Logs + Traces + Metrics) | 45 TB | $0.15 per GB | $6,750 |
| Total Monthly Cost | | | $6,750 |

Monthly cost before vs after

As the table shows, the company's observability cost was $22,975 per month before the migration. According to the engineering team, host-based licensing, container-hour billing, high-cardinality custom metrics, and indexed log and span charges were the major cost drivers. The numbers bear this out: infrastructure elasticity and indexed telemetry volume were the biggest multipliers. Container hours and indexed logs alone accounted for over $10,000 per month.

After consolidating into a unified ingestion-based model, total observability spend decreased to $6,750 per month at 45 TB of total telemetry across logs, traces, and metrics. The cost structure shifted from multi-dimensional billing (hosts, containers, metrics, indexing) to a single ingestion-aligned model.

This represents a monthly reduction of $16,225 while maintaining full telemetry coverage.

Percentage reduction

The reduction equates to approximately 70% lower monthly spend:

(22,975 − 6,750) / 22,975 ≈ 70.6%

Importantly, the savings were not achieved by reducing telemetry, limiting indexing, or shortening retention. Total telemetry volume remained at 45 TB per month under the new architecture as it was previously. Error trace coverage remained complete, and log retention policies were preserved.

The savings were structural, driven mostly by eliminating host multipliers, indexed billing penalties, and redundant container-based pricing rather than by reducing observability depth.

Annualized savings

At pre-migration levels, annual observability cost would have reached:

$22,975 × 12 = $275,700 per year

Under the ingestion-based model:

$6,750 × 12 = $81,000 per year

Annual savings total:

$275,700 − $81,000 = $194,700 per year

This translates to nearly $195,000 in annual savings for a mid-sized environment, at the same total telemetry volume.

More importantly, cost growth became predictable. Instead of scaling with infrastructure mechanics (hosts, containers, indexed entities), spend now scales linearly with telemetry ingestion. Kubernetes elasticity no longer acts as a billing multiplier.
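The arithmetic in this section can be reproduced in a few lines. The sketch uses only figures stated above, plus one assumption: terabytes are decimal (1 TB = 1,000 GB). With binary terabytes (1,024 GB), the post-migration bill would be about $6,912 instead.

```python
BEFORE_MONTHLY = 22_975   # pre-migration monthly total reported above
TELEMETRY_TB = 45         # monthly telemetry volume, unchanged by the migration
RATE_PER_GB = 0.15        # ingestion-based rate

after_monthly = TELEMETRY_TB * 1_000 * RATE_PER_GB    # decimal TB: 45,000 GB
monthly_savings = BEFORE_MONTHLY - after_monthly
reduction = monthly_savings / BEFORE_MONTHLY
annual_savings = monthly_savings * 12

print(
    f"after: ${after_monthly:,.0f}/mo, saved: ${monthly_savings:,.0f}/mo "
    f"({reduction:.1%}), ${annual_savings:,.0f}/yr"
)
```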

Visibility Impact

- 100% error trace coverage: The new architecture captured every production error trace. CubeAPM's smart sampling retained high-value signals, and that sampling was designed to preserve signal quality rather than to control cost.

- Increased retention window: With cost pressure reduced, retention policies could be preserved without constant budget trade-offs. Historical data remained available for regression comparison and post-incident analysis.

- No blind sampling gaps: The new sampling strategy replaced blanket rate reductions with rules that keep errors and anomalies, so engineers no longer found critical signals missing during investigations because of budget constraints.

Operational Efficiency

- Reduced MTTR: Incident resolution time improved due to unified telemetry context and deterministic trace availability. Engineers no longer questioned whether a trace was sampled out or split across platforms.

- Simplified troubleshooting: All signals were accessible in a single interface, removing the burden of switching between tools. The team could carry investigations through without fragmentation, which reduced cognitive load during incidents.

- Fewer tools to manage: Consolidation reduced operational overhead, vendor coordination, and pipeline maintenance complexity. Engineering focus shifted from tool management back to reliability engineering.


Before vs After Comparison

Financial Impact

| Metric | Previous Stack | With CubeAPM |
|---|---|---|
| Monthly Cost | $22,975 | $6,750 |
| Annual Cost | $275,700 | $81,000 |
| Cost Drivers | Hosts, container hours, indexed logs, indexed spans, custom metrics | Telemetry ingestion volume only |
| Scaling Behavior | Cost increases with infrastructure and indexing growth | Cost scales linearly with telemetry volume |
| Reduction | N/A | 70% |

The previous observability stack had complex pricing with many cost drivers. According to the company, infrastructure scaling was exactly what growth required, but that growth brought rising costs: infrastructure scaling, container churn, custom metric growth, and indexed telemetry volume all compounded monthly spend.

Moving to CubeAPM enabled the company to scale operations without being hit with surprise bills. Under the ingestion-based model, cost alignment shifted to a single predictable variable: total telemetry volume. Kubernetes elasticity and container scaling no longer created billing multipliers.

Operational Comparison

| Dimension | Previous Stack | With CubeAPM |
|---|---|---|
| Trace Coverage | Indexed and sampled | Smart sampling, full error retention |
| Log Retention | Tier-based, cost-sensitive | Unlimited, no additional costs |
| Metrics Cardinality | Billed per custom metric | Included within ingestion model |
| Tool Fragmentation | Multiple billing SKUs and signal silos | Unified logs, metrics, traces |
| Investigation Workflow | Cross-tool correlation | Single telemetry context |

With the previous platform, engineers had to weigh cost constraints when deciding whether to retain metrics, sample more aggressively, or extend the limited retention period. Observability design decisions were driven by billing pressure.

After migration, the telemetry strategy was guided by operational value rather than cost containment. Error traces remained deterministic, high-cardinality metrics were preserved, and container scaling no longer introduced unpredictable billing exposure.

Trade-Offs & Lessons Learned

When ingestion-based pricing may not produce large savings

Ingestion-based pricing delivers the largest savings where infrastructure is elastic and telemetry is rich. Environments with low telemetry volume and stable VM-based infrastructure may see little difference between host-based and ingestion-based models. In this case, the savings were driven by Kubernetes elasticity, high-cardinality metrics, and indexed telemetry growth.

Cost alignment depends heavily on workload characteristics.

Telemetry Hygiene Still Matters

Moving to ingestion-based billing does not eliminate the need for disciplined telemetry practices. Excessively verbose logging, uncontrolled metric cardinality, and redundant trace attributes can still inflate ingestion volume.

The migration included:

- Attribute normalization

- Collector-level filtering of non-actionable logs

- Clear semantic conventions across teams

Cost efficiency improved because signal quality improved.
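Collector-level filtering of non-actionable logs can be sketched as a predicate applied to each record before export. The rules here (dropping DEBUG/TRACE records and Kubernetes health-probe access logs) and the record shape are hypothetical examples; in an OpenTelemetry collector, the equivalent is usually configured declaratively, for example with the filter processor.

```python
import re

# Hypothetical rules for "non-actionable" logs; each team would define its own.
DROP_LEVELS = {"DEBUG", "TRACE"}
HEALTH_CHECK = re.compile(r"GET /(healthz|readyz|livez)\b")


def keep_log(record: dict) -> bool:
    """Return False for records that should be dropped before export."""
    if record.get("level", "").upper() in DROP_LEVELS:
        return False
    if HEALTH_CHECK.search(record.get("msg", "")):
        return False
    return True


def filter_batch(batch: list[dict]) -> list[dict]:
    """Keep only records that pass every rule."""
    return [r for r in batch if keep_log(r)]


batch = [
    {"level": "DEBUG", "msg": "cache miss for key order:42"},
    {"level": "INFO", "msg": "GET /healthz 200"},
    {"level": "ERROR", "msg": "payment declined"},
]
print(filter_batch(batch))
```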

Migration Complexity Must Be Managed Carefully

Parallel ingestion and validation were critical. Without side-by-side telemetry comparison, subtle trace propagation issues or attribute mismatches could have introduced silent visibility regressions.

A phased rollout reduced the blast radius and allowed real production traffic validation before full cutover.

Organizational Alignment Is Required

Engineering teams developed a solid understanding of how observability architecture drives cost. Cost transparency was no longer confined to finance reporting; engineers understood where observability spend came from.

This required internal education on:

- Metric cardinality

- Container churn effects

- Trace sampling strategies

- Retention economics

Observability became an engineering design consideration, not just an operational tool.

What Did Not Work Initially

Early ingestion estimates underestimated span growth during peak traffic. Without modeling tail latency bursts, telemetry forecasts would have been inaccurate.

Additionally, initial collector configurations allowed duplicate exporters in a few services, briefly inflating ingestion. This was identified during validation and corrected before full migration.
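Duplicate exporters are easy to miss when the same backend is configured under two names. A minimal check, sketched in Python below, resolves each exporter name to its endpoint and flags any pipeline that sends the same signal to the same endpoint more than once. The exporter names, endpoints, and per-service pipeline layout are hypothetical. The intentional dual export to the legacy backend during the parallel phase is correctly not flagged.

```python
from collections import defaultdict

# Hypothetical exporter definitions: name -> destination endpoint.
EXPORTERS = {
    "otlp/cubeapm": "https://cubeapm.internal:4318",
    "otlphttp/cube": "https://cubeapm.internal:4318",   # same backend, second name
    "otlp/legacy": "https://legacy-apm.internal:4318",
}

# Hypothetical per-service pipelines: signal -> exporter names.
PIPELINES = {
    "checkout-api": {"traces": ["otlp/cubeapm"], "logs": ["otlp/cubeapm"]},
    "payments": {"traces": ["otlp/cubeapm", "otlphttp/cube"], "logs": ["otlp/cubeapm"]},
    "search": {"traces": ["otlp/cubeapm", "otlp/legacy"], "logs": ["otlp/cubeapm"]},
}


def duplicate_destinations(
    exporters: dict[str, str], pipelines: dict[str, dict[str, list[str]]]
) -> list[tuple[str, str, str, list[str]]]:
    """Find pipelines that ship the same signal to the same endpoint more than once."""
    findings = []
    for service, signals in pipelines.items():
        for signal, names in signals.items():
            by_endpoint = defaultdict(list)
            for name in names:
                by_endpoint[exporters[name]].append(name)
            for endpoint, dupes in by_endpoint.items():
                if len(dupes) > 1:
                    findings.append((service, signal, endpoint, dupes))
    return findings


print(duplicate_destinations(EXPORTERS, PIPELINES))
```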

When This Architecture Works Best

Ideal For:

- Kubernetes-Heavy SaaS Platforms: Environments with frequent horizontal scaling benefit the most from ingestion-based pricing. When node count, container churn, and autoscaling events are common, host-based billing models tend to compound costs rapidly. Ingestion-aligned pricing removes infrastructure elasticity as a billing multiplier.

- High-Cardinality, High-Volume Telemetry: Teams generating large volumes of structured logs, distributed traces, and custom metrics see significant savings when indexed billing penalties are removed. This matters most in multi-tenant systems, where attributes such as tenant ID, region, or request metadata are major drivers of metric cardinality.

- Rapidly Scaling Engineering Organizations: Fast-growing teams tend to expand services and telemetry volume faster than they expand cost governance. A unified ingestion model simplifies forecasting and prevents cost growth from outpacing infrastructure expansion.

- DevOps-Led Cost Optimization Initiatives: Organizations that treat observability as an engineering discipline benefit most from architectural cost alignment. Ingestion-based pricing encourages telemetry hygiene and OpenTelemetry standardization.
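Why tenant IDs and request metadata matter so much: the worst-case number of time series for a metric is the product of the number of distinct values of each label. The label names and counts below are hypothetical. If each unique series is billed as a separate custom metric (an assumption about the billing model), the same arithmetic drives the custom-metrics line in the pre-migration table.

```python
from math import prod

# Hypothetical label value counts for a single request-duration metric.
labels = {"tenant_id": 500, "region": 4, "http_route": 25, "status_class": 5}


def worst_case_series(label_values: dict[str, int]) -> int:
    """Upper bound on distinct time series: every label combination can appear."""
    return prod(label_values.values())


with_tenant = worst_case_series(labels)
without_tenant = worst_case_series({k: v for k, v in labels.items() if k != "tenant_id"})
print(with_tenant, without_tenant)
```

Dropping `tenant_id` from this one metric cuts the bound from 250,000 to 500 series, which is why cardinality reviews belong in telemetry hygiene even under ingestion-based pricing.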

Less Ideal For:

- Small Monolithic Applications: Applications running on stable infrastructure with limited telemetry and minimal scaling may see little difference between host-based and ingestion-based pricing.

- Static VM Environments: If infrastructure does not scale dynamically and telemetry volume remains predictable and low, host-based pricing may not create meaningful cost pressure.

- Low Telemetry Workloads: Teams generating minimal logs, traces, and custom metrics may not see large percentage reductions because the indexed billing multipliers are small to begin with.

Conclusion

This case study shows that observability cost reduction is an architectural problem, not a visibility problem.

By replacing host-based and indexed billing with ingestion-aligned pricing, the company reduced monthly spend from $22,975 to $6,750, a 70% reduction, while maintaining 45 TB per month of telemetry coverage. No error traces were dropped, and no retention windows were shortened.

Take the time to understand the architecture of your preferred observability platform and how it drives cost as usage grows; that is how you avoid surprise bills that disrupt the budget. With an architecture built for ingestion-based pricing, and possibly a self-hosted option that does not charge for extended retention, cost becomes predictable because it scales with telemetry volume rather than infrastructure growth. For Kubernetes-heavy environments, aligning pricing with system design delivers sustainable observability without sacrificing depth or reliability.

Disclaimer: The information in this article reflects the latest details available at the time of publication and may change as technologies and products evolve.

FAQs

1. How can I reduce observability costs without losing visibility?

To reduce observability cost without losing visibility, focus on architectures that align pricing with telemetry volume rather than infrastructure footprint. Host-based and indexed billing models compound in Kubernetes environments. Moving to ingestion-based pricing and eliminating duplicate telemetry pipelines often delivers structural savings without reducing trace or log coverage.

2. Why is observability so expensive in Kubernetes environments?

Kubernetes has multiple cost drivers, including horizontal scaling, container churn, and high-cardinality metrics. When pricing is tied to hosts, container hours, indexed spans, or custom metrics, costs multiply quickly. Elastic infrastructure becomes a billing amplifier rather than just a scaling mechanism.

3. Is ingestion-based pricing cheaper than host-based pricing?

It depends on the environment. For fast-growing teams that scale elastically and run microservices, ingestion-based pricing is usually the better fit. In environments where telemetry volume is modest and infrastructure does not scale dynamically, the difference between ingestion-based and host-based pricing is often small.

4. What causes observability costs to spike unexpectedly?

There are many possible causes. The major ones include rapid infrastructure scaling, verbose structured logging, high-cardinality custom metrics, span indexing, and container-hour billing. Indexed pricing models are particularly sensitive to traffic growth and trace depth.

5. How much should a mid-sized SaaS company spend on observability?

There is no fixed benchmark, but costs should scale proportionally with telemetry volume, not with infrastructure mechanics. In Kubernetes-heavy environments generating tens of terabytes per month, architectural alignment between pricing and telemetry design becomes critical to prevent cost escalation.