Most observability failures do not start with missing data. They start when teams select a tool before they understand their own systems. In 2021, 90% of respondents in the DevOps Pulse Survey reported overreliance on multiple observability tools to compensate for gaps in visibility. These outcomes are rarely accidental. They are the downstream effects of early decisions that do not hold up as systems scale.

The core issue is that feature parity masks long-term behavior. Nearly every observability platform claims support for logs, metrics, and traces. What differs is how those signals behave at scale: during traffic spikes, partial outages, and sustained growth. Sampling control, query performance, and cost dynamics only reveal themselves under stress.

This article is a practical checklist for evaluating observability platforms before those problems surface. It is not a “top tools” list and does not rank vendors or compare pricing pages. Instead, it focuses on real-world behavior, ownership trade-offs, and architectural fit over time.

Define the Evaluation Context First (Before You Look at Tools)

Before evaluating any observability platform, teams need to understand the environment it will actually run in. Many skip this step and move straight to feature lists, dashboards, or pricing pages. That approach often produces tools that look good in demos but struggle once traffic grows, regions multiply, or architectures become more distributed. What works for a small, single-region service can quietly break down when applied to hundreds of microservices across multiple environments.

Defining the evaluation context early changes the entire conversation. Cost behavior, scaling limits, and ownership issues surface faster when the system is viewed as it actually exists, not as it is described in tool documentation. This shifts the comparison away from generic feature lists. Teams can then choose a platform that fits both today’s workload and how the system is likely to change over the next 12–24 months.

Before looking at specific observability tools, teams need to pause and examine a few foundational factors:

- Current system size (services, regions, traffic shape): Observability behaves differently depending on architecture complexity and deployment topology. As services spread across regions and traffic becomes less predictable, observability tools are pushed to their limits. Platforms need to cope with high-cardinality data, shifting load, and distributed traces without turning fragile or expensive.

- Expected growth over 12–24 months: Early success is not a useful signal. Many platforms perform well at a small scale, then quietly struggle as traffic increases and services multiply. As systems scale, high cardinality and sudden ingestion spikes can quickly become a challenge. Costs often start to behave in ways teams didn’t expect. Considering how the system might grow over the next 12–24 months makes these risks easier to spot and easier to avoid before they become permanent.

- Who owns observability (platform vs product teams): Ownership defines responsibility and control. Platform teams, SREs, and product engineers have distinct incentives and priorities. Clarifying ownership ensures the right configuration, monitoring practices, and escalation paths are in place.

- Primary use cases: incidents, performance, cost control, compliance: Not all observability platforms excel in every domain. Defining primary objectives, whether fast incident response, performance tuning, cost monitoring, or regulatory compliance, guides feature prioritization and evaluation.

- Regulatory or data-residency constraints: In some systems, rules come first and architecture follows. Data might need to stay in a single region, or specific fields may only be processed inside tightly controlled boundaries. If a platform cannot enforce those boundaries consistently, it stops being an option, regardless of its observability features.

Looking at these factors early changes how the evaluation is done. What starts as a simple tool comparison becomes a broader architectural decision. In other words, the “best” platform is never universal. It depends entirely on context, scale, and ownership realities.

Telemetry Coverage: What Signals Actually Matter for You

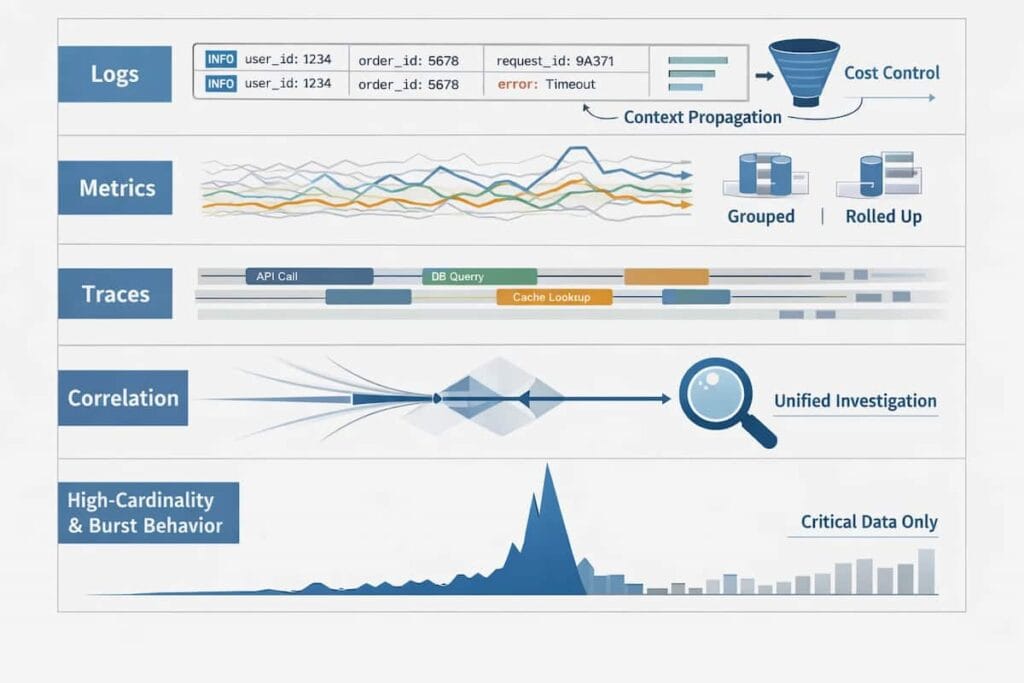

Most observability evaluations stop too early. Teams ask whether a platform supports logs, metrics, and traces, then move on. That question made sense years ago. Today, it is mostly meaningless. Nearly every serious observability tool claims support for all three signals. What separates platforms is not whether they collect telemetry but how that telemetry behaves once systems grow and things start to break.

In real environments, telemetry is not evenly distributed. Errors spike. Traffic bursts. Cardinality explodes. The way a platform handles these moments determines whether engineers gain clarity or lose trust in their data. This is why coverage should be evaluated in terms of structure, control, and behavior under stress, not just signal availability.

With that in mind, a practical evaluation should break telemetry down into the following areas and examine how each one performs when systems are under load.

Logs: structure, context propagation, cost control

Logs are often the largest source of observability data and the fastest to become expensive. Structured logging, consistent context propagation, and the ability to control ingestion all matter. Without them, logs become noisy, disconnected, and financially unsustainable at scale.
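To make "structured logging with consistent context" concrete, here is a minimal sketch using only Python's standard `logging` module. The field names (`trace_id`, `service`, `region`) and the logger name are assumptions chosen for illustration, not a schema any particular platform requires.

```python
import json
import logging


class JsonFormatter(logging.Formatter):
    """Render each record as one JSON object so fields stay queryable."""

    # Hypothetical context fields; a real service would pick its own.
    CONTEXT_FIELDS = ("trace_id", "service", "region")

    def format(self, record: logging.LogRecord) -> str:
        payload = {
            "level": record.levelname,
            "message": record.getMessage(),
        }
        # Copy context only when the caller supplied it via `extra=`.
        for field in self.CONTEXT_FIELDS:
            value = getattr(record, field, None)
            if value is not None:
                payload[field] = value
        return json.dumps(payload, sort_keys=True)


def build_logger(name: str = "checkout") -> logging.Logger:
    """Return a logger that emits JSON lines to stderr."""
    logger = logging.getLogger(name)
    logger.setLevel(logging.INFO)
    if not logger.handlers:  # avoid stacking handlers on repeated calls
        handler = logging.StreamHandler()
        handler.setFormatter(JsonFormatter())
        logger.addHandler(handler)
    return logger


log = build_logger()
log.info("payment declined", extra={"trace_id": "abc123", "service": "checkout"})
```

Because every line carries the same `trace_id` key, a backend can filter or join on it instead of parsing free text. That is the property to check for during evaluation.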

Metrics: cardinality behavior, aggregation model

Metrics appear cheap and simple until high-cardinality labels enter the system. How a platform aggregates metrics, enforces limits, and prices cardinality directly affects both cost predictability and query performance. These trade-offs are rarely visible in demos.
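A quick way to see why high-cardinality labels matter is to compute the worst-case number of time series a single metric can produce. The sketch below assumes every label combination can occur, which is an upper bound rather than a prediction, and the label names and counts are made up for illustration.

```python
from math import prod


def worst_case_series(label_cardinalities: dict[str, int]) -> int:
    """Upper bound on time series for one metric: the product of the
    number of distinct values each label can take."""
    return prod(label_cardinalities.values())


# Hypothetical label sets for a request-count metric.
bounded = {"service": 50, "region": 4, "status_code": 6}
with_user_id = {**bounded, "user_id": 10_000}

print(worst_case_series(bounded))       # 1200
print(worst_case_series(with_user_id))  # 12000000
```

One unbounded label multiplies everything that came before it, which is why it is worth asking how a platform limits and prices series, not just data points.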

Traces: sampling control, tail vs head vs adaptive

Tracing is where many platforms diverge sharply. Head-based sampling favors cost control, tail-based sampling favors accuracy, and adaptive models attempt to balance both. The key question is not which model exists but who controls it and how it behaves during incidents.
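As an illustration of the head-based model, the sketch below decides at the start of a trace, using nothing but the trace ID. Hashing the ID is one common way to keep the decision consistent across services. The function name and the use of SHA-256 are assumptions of this example, not a description of how any specific platform samples.

```python
import hashlib


def head_sample(trace_id: str, rate: float) -> bool:
    """Head-based decision: made up front, before the outcome of the
    request is known, so it cannot favor errors or slow requests."""
    digest = hashlib.sha256(trace_id.encode("utf-8")).digest()
    # Map the first 48 bits of the hash to a number in [0, 1).
    bucket = int.from_bytes(digest[:6], "big") / 2**48
    return bucket < rate


# Every service that sees the same trace ID reaches the same decision.
decision_a = head_sample("4bf92f3577b34da6", 0.10)
decision_b = head_sample("4bf92f3577b34da6", 0.10)
print(decision_a == decision_b)  # True
```

The trade-off is visible in the signature: the function never sees status or duration, so a 10% rate drops roughly 90% of failures along with 90% of healthy traffic. Tail-based approaches exist to address that.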

Correlation: how signals are joined during investigation

Observability breaks down when engineers must manually stitch data together. Effective platforms allow seamless movement between metrics, logs, and traces using shared context. Poor correlation turns investigations into guesswork.
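The kind of join a platform should do for you can be sketched in a few lines. The example assumes spans and log entries are plain dictionaries that share a `trace_id` field. The field names and sample data are hypothetical.

```python
from collections import defaultdict


def logs_for_slow_traces(spans: list, logs: list, threshold_ms: float) -> dict:
    """Return log messages grouped by trace ID, limited to traces that
    contain at least one span at or above the latency threshold."""
    slow_ids = {s["trace_id"] for s in spans if s["duration_ms"] >= threshold_ms}
    grouped = defaultdict(list)
    for entry in logs:
        if entry.get("trace_id") in slow_ids:
            grouped[entry["trace_id"]].append(entry["message"])
    return dict(grouped)


spans = [
    {"trace_id": "t1", "duration_ms": 1800.0},
    {"trace_id": "t2", "duration_ms": 40.0},
]
logs = [
    {"trace_id": "t1", "message": "retrying upstream call"},
    {"trace_id": "t2", "message": "ok"},
    {"message": "log line without trace context"},
]
print(logs_for_slow_traces(spans, logs, threshold_ms=1000.0))
```

The third log line shows the failure mode: without propagated context it can never be joined, no matter how good the query engine is.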

High-cardinality and burst behavior

The hardest test is not steady traffic. It is a sudden change. A useful platform should remain predictable when request rates surge, error dimensions multiply, or new services are deployed quickly. This is where many tools silently degrade.

Sampling and Ingestion Control (The Make-or-Break Factor at Scale)

Sampling and ingestion control are where observability platforms quietly reveal their true design assumptions. When systems are small, teams can afford to collect almost everything. As traffic grows, that approach breaks down. Data volume increases faster than insight, costs become unpredictable, and engineers are forced to choose between visibility and budget. At this point, sampling is no longer an optimization. It becomes a core reliability mechanism.

The challenge is that sampling decisions are often abstracted away. Many tools promise “smart” or “automatic” sampling but offer little clarity about how those decisions are made or how they change under stress. During high-traffic events or cascading failures, this lack of control becomes visible. Important traces disappear. Errors are under-represented. To evaluate whether a platform can support you at scale, teams need to examine how sampling and ingestion behave in real failure conditions, not just steady state.

These questions are not theoretical. They expose how an observability platform behaves when conditions stop being ideal and start looking like production. It is prudent to consider:

- Where sampling decisions are made: When sampling happens at the agent, teams gain early control but accept tighter coupling to services. Collector-level decisions shift that balance, while backend sampling trades immediacy for centralized flexibility. Each option shapes latency, cost, and control in different ways, and none of those trade-offs are neutral at scale.

- Whether rules can change during an incident: The ability to change sampling rules during an incident is even more revealing. Incidents are dynamic. Traffic patterns shift, errors cluster, and what mattered five minutes ago may no longer be relevant. Platforms that require redeployments or slow configuration changes make it harder to respond in real time. Platforms that allow live adjustments let teams follow failure as it unfolds instead of working around it.

- Error and latency bias handling: Bias in sampling is another area where tools quietly fail teams. Under normal conditions, most requests look similar. During an incident, they do not. Slow paths, retries, and outright failures begin to dominate. If the sampling logic does not deliberately favor these signals, the data engineers need most is often the first to disappear. What remains is a clean picture of healthy traffic, which is rarely useful when systems are misbehaving.

- Auditability and predictability of sampling behavior: Trust also depends on predictability. Engineers need to understand what data is present and, just as importantly, what is missing. When sampling decisions are opaque or change in unexpected ways, investigations turn into guesswork. Teams spend time questioning the tooling instead of the system. Platforms that make sampling behavior observable and auditable reduce this friction and make debugging faster, not harder.

- Impact on investigations during traffic spikes: Traffic spikes are the final test. They compress time, volume, and complexity into a narrow window. Some observability systems respond by shedding data aggressively, slowing queries, or imposing hard limits that fragment investigations. Others degrade more gracefully, preserving enough context to let teams trace failures end to end. The difference only shows up under pressure, which is why this scenario belongs in any serious evaluation.
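Several of the points above (bias toward errors and latency, live rule changes, and auditability) can be illustrated with a small tail-based sampler. This is a sketch under stated assumptions: traces are dictionaries with `error` and `duration_ms` fields, and the class, thresholds, and counter names are invented for the example.

```python
import random
from dataclasses import dataclass, field


@dataclass
class TailSampler:
    """Decide after a trace completes, so errors and slow requests can
    be kept deliberately while healthy traffic is sampled down."""

    baseline_rate: float = 0.05           # share of healthy traces kept
    latency_threshold_ms: float = 1000.0  # anything slower is always kept
    rng: random.Random = field(default_factory=random.Random)
    # Per-reason counters make the sampling behavior itself auditable.
    decisions: dict = field(default_factory=lambda: {
        "kept_error": 0, "kept_slow": 0, "kept_baseline": 0, "dropped": 0,
    })

    def keep(self, trace: dict) -> bool:
        if trace.get("error"):
            reason = "kept_error"
        elif trace.get("duration_ms", 0.0) >= self.latency_threshold_ms:
            reason = "kept_slow"
        elif self.rng.random() < self.baseline_rate:
            reason = "kept_baseline"
        else:
            reason = "dropped"
        self.decisions[reason] += 1
        return reason != "dropped"


sampler = TailSampler(baseline_rate=0.01)
sampler.keep({"error": True, "duration_ms": 12.0})
# Mid-incident adjustment: tighten the latency rule without a redeploy.
sampler.latency_threshold_ms = 250.0
sampler.keep({"error": False, "duration_ms": 400.0})
print(sampler.decisions)
```

When evaluating a platform, the useful question is whether the equivalents of `latency_threshold_ms` and `decisions` are exposed to you, or hidden behind "smart" sampling.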

OpenTelemetry and Vendor Lock-In

OpenTelemetry has become the default way teams collect and move telemetry. It promises standardized instrumentation across services and platforms, and on paper, that sounds like an easy way to avoid vendor lock-in. In practice, it’s more complicated. Adopting OpenTelemetry alone does not guarantee long-term portability or flexibility.

Teams need to look past the marketing claims and understand how their observability platform actually handles OpenTelemetry data. Some tools fully embrace OpenTelemetry as a first-class model. Others offer “compatibility” layers that still depend on proprietary formats, pipelines, or assumptions behind the scenes. Those differences matter when systems grow, costs rise, or a migration becomes necessary.

The evaluation should reveal where lock-in can occur, how data and instrumentation move between platforms, and what trade-offs exist between short-term convenience and long-term flexibility. Specifically, focus on:

- Native vs “compatible” OpenTelemetry support: Determine whether the platform fully implements OpenTelemetry natively or relies on partial compatibility, which can affect fidelity and future migrations.

- Where vendor logic still applies: Identify hidden transformations or processing layers that could tie your instrumentation to the platform.

- Portability of data and instrumentation: Assess how easily logs, metrics, and traces can be exported or reused elsewhere without losing context or history.

- Migration complexity between platforms: Evaluate the effort required to move off the platform if scaling, cost, or architecture decisions change.

- Long-term flexibility vs short-term convenience: Balance immediate ease of use against the future cost of being locked into specific vendor behaviors or APIs.
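One lightweight portability check is to scan exported span or resource attributes for keys that only make sense to one vendor. The sketch below assumes attributes arrive as a flat dictionary. The `acme.` prefix is a made-up vendor namespace, and the other keys follow the naming style of OpenTelemetry semantic conventions.

```python
def vendor_specific_keys(attributes: dict, vendor_prefixes: tuple = ("acme.",)) -> list:
    """Return attribute keys that live under a vendor namespace and would
    likely need remapping during a migration."""
    return sorted(key for key in attributes if key.startswith(vendor_prefixes))


# Hypothetical attributes taken from one exported span.
span_attributes = {
    "service.name": "checkout",
    "http.request.method": "POST",
    "acme.internal.route_id": "r-42",
}
print(vendor_specific_keys(span_attributes))  # ['acme.internal.route_id']
```

Running a check like this over a sample of real telemetry gives a rough count of how much instrumentation depends on vendor-specific conventions, which is more informative than a "compatible with OpenTelemetry" label.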

Cost Model Reality Check

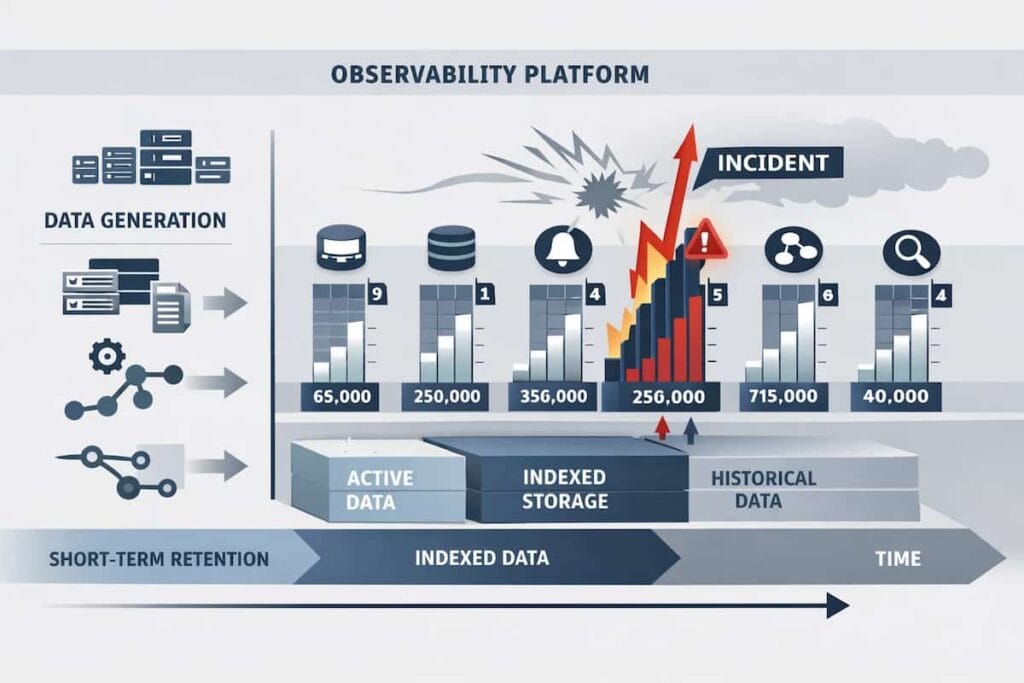

For most teams, observability costs do not become a problem during evaluation. They become a problem later. Pricing pages are designed to look predictable, but real-world usage is not. As systems grow, traffic spikes, services multiply, and incident-driven data volume increases sharply. What looked affordable in a proof of concept can turn into one of the largest line items in the engineering budget. This is why cost evaluation needs to focus less on list prices and more on how a platform behaves when the system is under stress.

To understand whether an observability platform will remain sustainable over time, teams need to look past marketing tiers and ask harder, operational questions.

Once a system is live, cost stops being theoretical. Traffic fluctuates. Incidents happen. Usage spikes in ways no pricing calculator can predict. That is when the real cost mechanics of an observability platform become visible, often uncomfortably. Focus on:

- What is actually billed: Some platforms charge by host; others by data volume, events, spans, metrics, or even queries. Each model ties cost to system behavior in a different way. The tighter that coupling, the more likely your bill will change as your architecture evolves, sometimes in ways you did not anticipate.

- Cost behavior during incidents: Incidents make this even clearer. Error rates climb, logs get noisier, traces multiply. If costs rise sharply during those moments, teams are forced into a negative trade-off: investigate faster or spend less. Platforms that behave this way penalize visibility precisely when it matters most.

- Retention, indexing, and rehydration trade-offs: Longer-term costs are shaped by retention and indexing decisions. The length of data retention, default indexing, and the ability to rehydrate cold data later all influence the depth of investigations. These choices also accumulate quietly. Storage and access patterns that seem reasonable early on can become expensive as systems and teams grow.

- Who pays versus who generates data: Ownership matters too. In many organizations, the teams generating the most telemetry are not the ones paying for it. That gap creates friction. Over time, it can distort incentives, encourage over-collection, or trigger sudden clampdowns that reduce visibility when new services come online.

- Forecasting difficulty over time: Forecasting adds another layer of uncertainty. Some platforms scale in ways that are easy to model. Others feel linear at first, then suddenly become nonlinear. Traffic patterns shift. Cardinality increases. What once looked stable becomes volatile without a clear cause.
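A back-of-the-envelope model helps show how incident days distort a volume-based bill. The sketch assumes pricing is purely per gigabyte ingested. The volumes, price, and incident multiplier are placeholder numbers, not figures from any vendor.

```python
def monthly_ingest_cost(
    baseline_gb_per_day: float,
    price_per_gb: float,
    incident_days: int = 0,
    incident_multiplier: float = 1.0,
    days_in_month: int = 30,
) -> float:
    """Estimate a month's ingest bill when some days run at a multiple
    of the normal telemetry volume."""
    normal_days = days_in_month - incident_days
    total_gb = baseline_gb_per_day * (normal_days + incident_days * incident_multiplier)
    return total_gb * price_per_gb


calm = monthly_ingest_cost(200, 0.25)
rough = monthly_ingest_cost(200, 0.25, incident_days=3, incident_multiplier=5.0)
print(calm, rough)  # 1500.0 2100.0
```

In this made-up case, three noisy days out of thirty raise the bill by 40%. Repeating the exercise with a platform's real billing units (hosts, spans, queries) shows how tightly cost is coupled to incident behavior.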

Query Experience Under Pressure

Dashboards are designed for calm systems. Incidents are not calm. When something breaks, engineers stop looking at curated charts and start asking urgent, open-ended questions: What changed? Where did it start? What is affected right now? At that moment, the true test of an observability platform is not how polished its dashboards look but how quickly and reliably engineers can query raw telemetry under load. Many platforms perform well during normal operation, yet degrade precisely when they are needed most.

This gap exists because most evaluations over-index on visualization and under-evaluate investigation. Query engines behave differently under stress. High traffic, elevated error rates, and concurrent users all compete for the same backend resources. The difference between a useful platform and a frustrating one often comes down to how well it supports engineers thinking in real time, across signals, without artificial friction. With that in mind, teams should evaluate query experience through the lens of incident response, not steady-state reporting.

To make this evaluation concrete, teams should look closely at how the query layer behaves once systems are already under stress. This is where theoretical capability turns into lived experience.

- Query latency during high load: Latency problems become immediately visible during an incident. If every search takes tens of seconds to return, engineers lose momentum.

- Exploratory workflows versus predefined dashboards: Investigation requires flexible tools that let teams pivot, trace unusual signals, and dig into unexpected behavior as it arises.

- Cross-signal querying between logs, traces, and metrics: This often determines whether investigations stay focused or fragment. The ability to move from a spike in latency to a specific trace and then into the relevant logs without rebuilding context saves time when it matters most.

- Limits, throttling, and fairness controls: These tend to surface only when many people are querying at once. During a high-severity incident, platforms must decide who gets priority and how resources are shared, and those decisions have real consequences.

- Debugging depth versus surface-level visibility: This is the final separator. Some tools expose raw data paths and detailed context. Others flatten everything into summaries, which look clean but leave engineers guessing.
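Query latency under concurrency is easy to measure during a trial. The harness below is a sketch: `run_query` stands in for whatever client call a platform provides (it is a placeholder, not a real API), and the stub used here only sleeps so the example runs on its own.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from statistics import quantiles


def measure_latency(run_query, queries: list, concurrency: int = 8) -> dict:
    """Run queries in parallel and summarize wall-clock latency in seconds.
    Expects at least two queries so percentiles can be computed."""

    def timed(query):
        start = time.perf_counter()
        run_query(query)
        return time.perf_counter() - start

    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        latencies = list(pool.map(timed, queries))

    cuts = quantiles(latencies, n=20)  # 19 cut points in 5% steps
    return {"p50": cuts[9], "p95": cuts[18], "max": max(latencies)}


def fake_query(_query: str) -> None:
    """Stand-in for a real query call; replace with the platform client."""
    time.sleep(0.001)


print(measure_latency(fake_query, ["error rate by service"] * 40))
```

Running the same set of queries once during quiet hours and once during a load test makes degradation visible as a change in p95, not as an anecdote.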

Deployment Model and Ownership Trade-offs

How an observability platform is deployed is usually a more consequential decision than it first appears. It quietly defines who owns the data, who carries the pager when things break, and how much control the team actually has during an incident. These implications are easy to miss early on. Different deployment models come with built-in assumptions about responsibility, cost, and risk, and those assumptions usually surface only when systems are under pressure.

Deployment choices matter because they set the boundaries of control. Once these constraints are in place, they are hard to undo. With that context, it becomes easier to evaluate the specific factors that define ownership, risk exposure, and ongoing operational effort.

These differences usually surface in three practical areas: how the platform is deployed and owned, how telemetry data moves and is stored, and how much ongoing maintenance the team is expected to handle.

- SaaS vs BYOC vs self-hosted: Each deployment model changes where the work actually lives. A SaaS setup usually removes a lot of operational friction, which is attractive early on, but it also means giving up some control when things go wrong. Self-hosted systems sit at the opposite end of the spectrum. You own everything, including the flexibility, but also the upgrades, scaling issues, and late-night fixes. BYOC (bring your own cloud) often looks like a compromise, and sometimes it is. Still, it introduces its own complexity, especially around networking, security boundaries, and long-term support.

- Control over data path and storage: Telemetry does not just appear in a dashboard. It moves through collectors, queues, and storage layers before anyone queries it. Teams should know where data goes, where it ends up, and who can access it.

- Upgrade and maintenance responsibility: Some platforms stay current quietly in the background. Others require hands-on work as schemas change, agents evolve, or features roll out. That difference shows up over time, not on day one.

- Failure modes and blast radius: Failures are inevitable. What teams usually discover too late is whether the observability layer contains the damage or makes it harder to see what’s going on. When visibility drops during an outage, the tool becomes another problem to work around.

- Exit strategy and portability: Change is inevitable. Migration cost is not. Platforms that make data and setup portable give teams options later, when options matter most.

Security, Compliance, and Data Governance

Observability platforms hold a lot of sensitive data. For regulated teams, mistakes can be costly. Uncontrolled access, exposed PII, or storing data in the wrong region can quickly become compliance problems.

Engineers need to look beyond features. Data governance has to be part of every evaluation step. In practice, security and governance concerns show up in a few concrete areas related to where data lives, how sensitive fields are handled, who can access telemetry, and how controls behave during incidents.

- Data residency and regional control: Ensure the platform can store data in compliant regions and respect local residency laws.

- PII handling and redaction: Sensitive data should be automatically masked or removed at ingestion, preventing exposure in logs, traces, or metrics.

- Access controls and audit logs: Access to telemetry should be scoped by role and team, and every read, export, and configuration change should leave an audit trail that can be reviewed later.

- Compliance posture (SOC 2, GDPR, HIPAA where relevant): Evaluate the certifications and controls the platform provides, but also verify practical enforcement within your specific workflows.

- Incident response access controls: During outages or breaches, temporary escalation of access should be tightly controlled and fully logged to prevent misuse.
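Redaction at ingestion can be sketched as a function applied to every log message before it is stored. The two patterns below (email addresses and long card-like digit runs) are deliberately simple assumptions for illustration. Real PII detection needs a broader, tested rule set.

```python
import re

# Simplified patterns for illustration only.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
# 13 to 16 digits, optionally separated by single spaces or hyphens.
CARD_LIKE = re.compile(r"\b\d(?:[ -]?\d){12,15}\b")


def redact(message: str) -> str:
    """Mask email addresses and card-like numbers before storage."""
    message = EMAIL.sub("[REDACTED_EMAIL]", message)
    return CARD_LIKE.sub("[REDACTED_NUMBER]", message)


print(redact("user jane@example.com paid with 4111 1111 1111 1111"))
# user [REDACTED_EMAIL] paid with [REDACTED_NUMBER]
```

Where this logic runs matters as much as what it matches: redaction in the agent or collector keeps sensitive values from ever leaving the controlled boundary, while backend-only redaction does not.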

Operational Overhead and Day-2 Costs

Observability tools do not stop demanding effort after setup. Once systems are live, maintenance becomes the real work. Dashboards drift. Signals get noisy. Someone has to keep things usable. The time spent doing that often matters more than how fast the tool was installed.

Understanding operational overhead is critical. It is not just about how quickly a platform can be installed but also about how much effort it takes to keep it reliable, maintain signal quality, and ensure engineers remain productive.

- Setup vs ongoing tuning effort: How much setup is needed, and how often must it be adjusted? Constant tuning can drain team bandwidth.

- Alert fatigue and maintenance: Alert fatigue sets in slowly. Warnings fire, nothing is wrong, and response times slip. Useful alerts are specific and rare. Everything else becomes background noise that still has to be maintained.

- Schema and tagging discipline requirements: Observability falls apart without consistency. Tags drift. Fields mean different things to different teams. Correlation gets harder, not easier.

- Training cost for new engineers: Teams must consider how much effort it takes to onboard new members to effectively use the platform.

- Documentation and support quality: A platform is only as useful as the guidance engineers can get when they are stuck. Check how complete the documentation is and how quickly support responds.
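Tagging discipline is easier to sustain when it is checked automatically, for example in CI or at the collector. The sketch below assumes a small set of required tags and a snake_case naming rule. Both are hypothetical conventions chosen for the example.

```python
import re

REQUIRED_TAGS = {"service", "env", "team"}     # hypothetical convention
KEY_PATTERN = re.compile(r"^[a-z][a-z0-9_]*$")  # lowercase snake_case keys


def tag_violations(tags: dict) -> list:
    """List problems with one resource's tags: required tags that are
    missing and keys that break the naming rule."""
    problems = [
        f"missing required tag: {name}"
        for name in sorted(REQUIRED_TAGS - set(tags))
    ]
    problems += [
        f"non-conforming tag key: {key}"
        for key in sorted(tags)
        if not KEY_PATTERN.match(key)
    ]
    return problems


print(tag_violations({"service": "checkout", "Env": "prod", "team": "payments"}))
```

Here `Env` triggers both kinds of problem: it breaks the naming rule and leaves the required `env` tag missing, which is exactly the sort of drift that later breaks correlation across teams.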

Evaluation Through Failure Scenarios (The Litmus Test)

Observability looks fine when everything is calm. Dashboards load. Metrics are clean. Traces line up. That is not the moment that matters. The real test comes when traffic spikes, dependencies wobble, or part of the system goes dark. That is usually when teams learn what their tooling can and cannot do.

A useful way to evaluate a platform is to think in failures, not features. Walk through situations that have already happened or eventually will. How does the system behave when the load jumps suddenly? Can it keep up with ingestion? Do queries slow down? Do alerts arrive late, or not at all? Many platforms hold steady during normal operation, then degrade quietly when pressure increases.

- Traffic spike: A sudden surge in requests stresses ingestion pipelines, sampling rules, and query paths all at once. Some tools respond by throttling data, which leaves gaps exactly where the investigation needs detail.

- Partial outage: One service fails, or a region drops out, and the impact spreads unevenly. Engineers must quickly identify the affected and unaffected areas.

- Dependency failure: Dependency failures show up differently. An external API starts timing out. A database becomes unstable. Symptoms appear far from the cause. In these cases, observability is only useful if signals stay intact and connected. Broken traces, dropped context, or noisy logs turn root-cause analysis into guesswork.

- Cost spike during incident: Then there is cost. Incidents rarely stay quiet. Error rates rise, retries increase, and data volume follows. Some platforms become significantly more expensive at the exact moment teams are under pressure.

Scoring the Platforms: A Practical Evaluation Matrix

Evaluating observability tools often feels fuzzy. Priorities change between teams, and a setup that works in one system can break down in another. Without structure, comparisons turn into opinions. A simple scoring model helps anchor the discussion and makes trade-offs easier to see.

Here are the key aspects to include in your evaluation:

- Weighted criteria: Identify the factors that matter most: cost predictability, operational control, visibility depth, and ownership responsibility. Assign weights based on what impacts your team and system the most.

- Objective scoring: Score platforms using evidence, not claims. Run small tests. Push real traffic where possible. Look at how queries behave, how data shows up, and how long it takes to answer a basic debugging question.

- Common traps: Be careful of familiar traps. Shiny dashboards can hide slow queries. “Automatic scaling” often means limited control when load increases.

- When to walk away: Sometimes the right move is to stop. Popular tools are not always a fit. If costs are opaque, control is limited, or governance feels bolted on, walking away is not failure. It is part of the evaluation.
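The weighted scoring described above reduces to a short calculation. In the sketch below the criteria come from the list, while the weights, the 1 to 5 scale, and the two platforms' scores are invented to show the mechanics.

```python
def weighted_score(weights: dict, scores: dict) -> float:
    """Weighted average of per-criterion scores. Raises KeyError if a
    criterion has no score, so gaps are noticed instead of ignored."""
    total_weight = sum(weights.values())
    return sum(weights[name] * scores[name] for name in weights) / total_weight


# Hypothetical weights (relative importance) and scores on a 1-5 scale.
weights = {
    "cost_predictability": 35,
    "operational_control": 25,
    "visibility_depth": 25,
    "ownership_responsibility": 15,
}
platform_a = {"cost_predictability": 4, "operational_control": 3,
              "visibility_depth": 5, "ownership_responsibility": 2}
platform_b = {"cost_predictability": 2, "operational_control": 5,
              "visibility_depth": 4, "ownership_responsibility": 4}

print(round(weighted_score(weights, platform_a), 2))  # 3.7
print(round(weighted_score(weights, platform_b), 2))  # 3.55
```

The numbers matter less than the discipline: agreeing on weights before testing keeps the comparison from bending toward whichever demo looked best.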

A Practical Observability Evaluation Checklist

When evaluating an observability platform, most teams focus on features. A more reliable approach is to evaluate how the system behaves under scale, cost pressure, and operational stress. The checklist below is designed to surface those differences early.

1. Cost & Scale Predictability

- How does cost grow as telemetry volume increases: linearly, exponentially, or unpredictably?

- What happens to cost during incidents, traffic spikes, or debugging-heavy periods?

- Can you model future observability expenditures before scaling workloads?

- Are pricing drivers transparent and controllable, or opaque and prone to surprise charges?

2. Control, Sampling, and Data Governance

- Who controls sampling decisions: engineering teams or the platform?

- Can sampling be adjusted dynamically during incidents without losing critical context?

- Is it possible to enforce consistent retention and sampling policies across teams?

- How much control do you have over where data is stored and how long it is retained?

3. Telemetry Quality & Correlation

- Can logs, metrics, and traces be reliably correlated at query time?

- Does high-cardinality data degrade query performance or visibility?

- How does the platform behave when telemetry volume spikes suddenly?

- Are investigations driven by request-level context or by disconnected dashboards?

4. Operational Reliability During Incidents

- Does query performance degrade when the system is under stress?

- Can teams investigate incidents without dropping data or timing out queries?

- What fails first during peak load: ingestion, querying, or correlation?

- Is observability still usable when it’s needed most?

5. Portability & Long-Term Flexibility

- How portable is your telemetry if you change platforms later?

- Does OpenTelemetry adoption meaningfully reduce lock-in, or only shift it?

- Are migration paths realistic at scale, or only in theory?

- Can the platform evolve with your architecture over the next 2–3 years?

How to use this checklist

An observability platform that performs well across all five areas is usually one that scales predictably with both system complexity and organizational maturity. Gaps in any one category tend to surface later as cost overruns, blind spots during incidents, or forced replatforming.

This checklist is most effective when applied before comparing vendors or pricing plans.

Conclusion: Evaluation Is a Long-Term Architecture Decision

Choosing an observability platform is not a quick decision, even if it feels that way at the start. The choice affects how teams see their systems, how incidents unfold, and how much effort it takes to understand failures later on. Decisions around sampling, data flow, and retention tend to stick. They resurface months down the line, usually when something breaks.

That is why evaluation matters more than initial setup. Teams that slow down and test assumptions early tend to avoid painful rewrites later. Context, ownership, and real failure behavior reveal more than feature lists ever will.

When observability is treated as part of the system design, the outcome changes. Tools stop being bolt-ons. Platforms like CubeAPM fit into the architecture instead of sitting on top of it, supporting how systems actually operate as they grow.

Disclaimer: The information in this article reflects the latest details available at the time of publication and may change as technologies and products evolve.

FAQs

1. What should teams evaluate first when choosing an observability platform?

Start with context. System size, growth projections, team ownership, and primary use cases set the foundation for meaningful comparisons.

2. Why are feature comparisons a poor way to evaluate observability tools?

Feature lists can be misleading. Logs, metrics, and traces may all be supported, but how the platform handles high-cardinality data, sampling, or bursts in traffic is where the real differences appear.

3. How important is sampling when evaluating an observability platform?

Sampling controls visibility and cost. Poor sampling can hide critical signals or bias investigations, while effective sampling strategies allow teams to retain fidelity where it matters most during incidents.

4. What causes observability costs to become unpredictable over time?

Expenses tend to increase with traffic spikes, unforeseen retention needs, or heavy indexing-based workflows.

5. How can teams avoid vendor lock-in when selecting an observability platform?

Look beyond immediate convenience. A system that binds you to proprietary formats or logic can restrict your flexibility as your systems change.