Observability problems rarely start with dashboards or alerts. They start with architecture. Most teams discover this not during rollout, but months later when telemetry volume surges and the bill follows.

At a small scale, almost any pricing model feels reasonable. A handful of services, moderate log volume, conservative trace sampling. But once Kubernetes, autoscaling, and distributed microservices enter the picture, telemetry growth accelerates. Pods multiply. Traces fan out across dozens of services. Incident investigations increase verbosity precisely when systems are under stress. Cost does not rise gradually. It compounds.

Observability adoption is now universal across engineering teams. Pricing predictability is not. This article examines ingestion-based and host-based pricing models, how they behave under real production conditions, and what CTOs and DevOps leaders should evaluate before observability spend begins scaling faster than the infrastructure it is meant to protect.

Host-based pricing ties cost to infrastructure footprint

With host-based observability pricing, cost is tied directly to compute resources such as virtual machines, Kubernetes nodes, containers, or vCPUs. As infrastructure grows, observability cost rises linearly, no matter how much telemetry those resources send. This model assumes that infrastructure size tracks system complexity and telemetry value. That held in VM-centric and monolithic environments. In cloud-native architectures, however, infrastructure is highly dynamic, so observability cost is driven more by autoscaling events, environment duplication, and redundancy strategies than by actual system behavior.

Ingestion-based pricing ties cost to telemetry volume

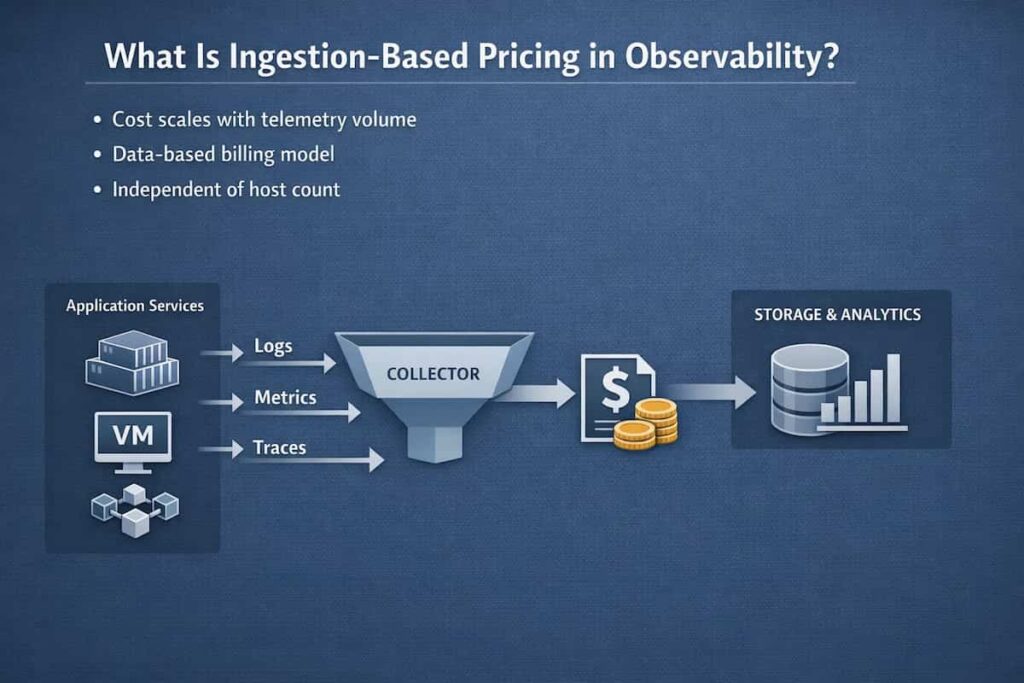

Ingestion-based pricing charges by the volume of logs, metrics, and traces ingested, which decouples observability cost from raw infrastructure size. Cost tracks how the system runs, how many users it serves, and how the application behaves. Telemetry volume, in turn, is shaped by engineering decisions: how verbosely to log, how many metrics to collect, how to sample traces, and how to configure OpenTelemetry pipelines. Ingestion-based pricing therefore moves cost control from infrastructure teams to application and platform engineering teams, which makes observability cost an engineering governance problem rather than purely an infrastructure scaling problem.

In Kubernetes-native systems, these behave very differently under stress

Infrastructure elasticity and microservices fan-out change the cost dynamics in Kubernetes environments in a big way. Even if new nodes don’t send much telemetry, host-based pricing goes up automatically during autoscaling events. This means that costs go up when the infrastructure changes.

Ingestion-based pricing, on the other hand, goes up when there are a lot of retries, errors, and debugging signals, which means that the cost is directly tied to the amount of work and failures that happen. The main difference is control. Scaling infrastructure is often done automatically and without much visibility, while telemetry volume can be controlled through sampling, filtering, and pipeline controls. This makes ingestion-based pricing more flexible under stress, even though it can be unstable when incidents happen.

What Is Host-Based Pricing in Observability?

Host-based pricing emerged in an era dominated by virtual machines and monolithic architectures. Instead of charging based on telemetry volume, vendors structured pricing around the number of monitored infrastructure units, typically physical servers or virtual machines. The model assumed relatively stable infrastructure footprints and predictable resource boundaries.

How Host-Based Billing Is Calculated

Host-based pricing usually charges by:

- Host, VM, node, or container: Vendors charge based on the number of infrastructure units monitored, meaning every VM, Kubernetes node, or container increases licensing costs.

- Virtual CPU or memory unit: Some vendors bill based on allocated compute resources, so larger instances with more vCPUs or RAM cost more to monitor.

- Agent instance: Each monitoring agent deployed counts as a billable unit, so scaling agents increases observability licensing costs.

Most vendors require an agent on every host, node, or container, so prices go up linearly as infrastructure grows.

Common Add-On Costs

Host-based prices don’t always cover everything. Vendors often charge extra for:

- Log ingestion and indexing: Logs require significant storage and compute for indexing and querying, so ingestion and indexing are billed separately.

- Real User Monitoring (RUM): RUM tracks frontend user sessions and performance, usually billed per session, user, or event.

- Synthetic monitoring: Synthetic checks simulate user traffic and uptime tests, generating telemetry that incurs additional charges.

- Security telemetry (SIEM signals): Security and audit logs are billed at premium rates due to compliance and high ingestion volume requirements.

- Long-term retention: Storing telemetry for months or years incurs storage costs, often billed separately from ingestion.

Beyond feature-based add-ons, some host-based vendors also enforce ingestion limits per host. Telemetry volume is not always unlimited. If a single host exceeds its allocated ingestion threshold, overage charges apply. This means organizations may pay for the host license and still incur additional data charges once telemetry volume crosses defined limits.

In high-throughput environments, such as microservices with verbose logging or bursty trace traffic during incidents, both host count and data volume can begin influencing overall spend.
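To make the interaction between a host license and a per-host ingestion allowance concrete, here is a minimal sketch. The rates, the allowance, and the function name are illustrative assumptions rather than any vendor's actual terms, and some vendors pool allowances across hosts, which this sketch does not model.

```python
def host_plan_monthly_cost(host_gb, price_per_host, included_gb_per_host, overage_per_gb):
    """Monthly cost when each host carries its own ingestion allowance.

    host_gb: GB ingested by each host this month (one entry per host).
    All rates are hypothetical placeholders.
    """
    license_cost = len(host_gb) * price_per_host
    # Overage is computed per host: a quiet host does not offset a noisy one.
    overage_gb = sum(max(0.0, gb - included_gb_per_host) for gb in host_gb)
    return license_cost + overage_gb * overage_per_gb


# Three hosts, one of them noisy (hypothetical numbers).
cost = host_plan_monthly_cost(
    host_gb=[20.0, 35.0, 400.0],
    price_per_host=20.0,
    included_gb_per_host=50.0,
    overage_per_gb=0.10,
)
print(f"monthly cost: ${cost:.2f}")
```

In this sketch a single verbose host accounts for all of the overage, even though the other two hosts stay well under their allowance.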

This fragmentation spreads observability spend across more than one cost center.

What Counts as a “Host” in Modern Cloud Environments?

In modern cloud architectures, a “host” is no longer a simple unit. Virtual machines coexist with containers. Kubernetes nodes run dozens of pods. Autoscaling creates and terminates infrastructure dynamically. DaemonSets and sidecars add background workload overhead. The definition of a billable host becomes fluid, and pricing models respond very differently to that fluidity.

Virtual Machines vs Containers vs Kubernetes Nodes

- Virtual Machines: In traditional infrastructure models, the virtual machine is the primary billing boundary. Early observability platforms were designed for VM-centric environments, where workloads were long-lived and infrastructure growth was deliberate. Pricing per VM aligned with relatively static compute allocation.

- Kubernetes nodes: This is a common way to charge for cloud-native platforms. Many vendors now charge per Kubernetes node, which becomes expensive in autoscaling clusters.

- Containers: Containers introduce a more granular execution unit. Some observability platforms charge per container, while others infer container cost indirectly through node-level pricing. Because containers are ephemeral and may scale independently of nodes, the billing boundary may not perfectly reflect application-level activity.

Autoscaling Groups and Ephemeral Infrastructure

Kubernetes and cloud autoscaling dynamically provision and decommission compute resources based on workload demand, creating ephemeral infrastructure instances. Each temporary instance can trigger a billing event, which makes spend more volatile.

DaemonSets and Sidecar Overhead

Monitoring agents often work as:

- A DaemonSet (one agent pod on each node), ensuring an agent runs on every node and multiplying resource and licensing costs.

- Sidecar containers (one for each pod), injecting monitoring into each pod and increasing CPU, memory, and network overhead per service instance.

This overhead gets worse as scaling events happen, which raises the cost of infrastructure indirectly.

Where Host-Based Pricing Works Well

In some situations, host-based pricing still works well:

Static VM-Based Infrastructure

- Workloads with fixed capacity planning: Capacity-planned environments grow by decision, not by traffic bursts. The bill changes when you add machines, not when usage spikes.

- Infrastructure is stable and predictable: For example, businesses running static VM-based setups with fixed capacity planning often benefit from it. If your infrastructure footprint rarely changes and telemetry volume stays relatively consistent, the pricing model remains easy to manage.

Monolithic Applications

A monolith usually means fewer services, fewer moving parts, and fewer new hosts spun up just to handle one workflow. Monolithic architecture has:

- Low variability in telemetry volume: If telemetry output is steady, monitoring overhead doesn’t surprise you. You aren’t paying for constant new hosts just to keep up with bursts.

- Simpler architectures (no heavy service mesh): Without a busy mesh and lots of sidecars, the infra shape stays simpler. That reduces the pressure to add nodes just to support the monitoring stack.

Predictable Node Counts

- On-premises clusters with fixed capacity

- Environments with a fixed capacity allocation

In these situations, host-based pricing offers cost models that are linear and easy to predict.

Structural Limitations of Host-Based Pricing

Host-based pricing appears simple on paper. In modern distributed systems, that simplicity breaks down quickly. As infrastructure becomes dynamic, environments multiply, and telemetry signals fragment across tools, the model begins to introduce structural cost distortions that compound at scale.

Autoscaling Volatility in Kubernetes

Autoscaling in Kubernetes can rapidly increase the number of nodes during load spikes. Host-based pricing scales linearly with node count. Therefore, observability costs surge even if telemetry volume or user traffic does not increase proportionally. This creates a cost model tied to infrastructure volatility rather than system behavior or business activity.

Environment Duplication

Modern teams don’t run just one environment anymore. Production, staging, QA, dev, and short-lived feature branches all need monitoring coverage. When pricing is tied to hosts, every duplicated environment multiplies cost, even if real business traffic hasn’t increased.

Add-On Signal Pricing Fragmentation

Many host-based plans don’t bundle all telemetry into one model. Logs, Real User Monitoring, synthetics, and security signals are often priced separately. That separation creates budgeting complexity and makes it harder to see total observability spend clearly.

Over-Provisioned Monitoring Agents

Monitoring agents consume CPU, memory, and network resources on every host or container. Over-provisioned agents increase infrastructure resource requirements, indirectly raising compute and cloud costs beyond observability licensing. In large clusters, agent overhead can materially affect capacity planning and operational efficiency.

What Is Ingestion-Based Pricing in Observability?

Ingestion-based pricing charges based on telemetry data ingested instead of the number of infrastructure units.

How Ingestion Billing Is Calculated

Ingestion pricing usually charges by:

- GB ingested, measuring raw telemetry volume.

- Different prices for logs, metrics, and traces due to differing storage and compute requirements.

Indexing vs Retention Cost Differences

- Retention: The cost of storing raw telemetry, often in low-cost storage tiers.

- Indexing: Cost for data that can be searched, which requires expensive compute resources.

- Rehydration: The cost of reindexing archived data for audits or investigations.

Many vendors charge more for indexed data than for raw data storage.
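The three cost components above can be separated in a small model. This is a sketch under stated assumptions: `StorageRates`, its field names, and every rate are hypothetical, and real vendor tiers are usually more granular.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StorageRates:
    # Hypothetical per-GB rates; real vendor tiers differ.
    index_per_gb: float          # cost to index newly ingested data
    archive_per_gb_month: float  # low-cost storage, per month retained
    rehydrate_per_gb: float      # cost to re-index archived data on demand


def monthly_storage_cost(ingested_gb, indexed_fraction, archived_gb_total,
                         rehydrated_gb, rates):
    """Split one month's bill into indexing, retention, and rehydration."""
    indexing = ingested_gb * indexed_fraction * rates.index_per_gb
    retention = archived_gb_total * rates.archive_per_gb_month
    rehydration = rehydrated_gb * rates.rehydrate_per_gb
    return {
        "indexing": indexing,
        "retention": retention,
        "rehydration": rehydration,
        "total": indexing + retention + rehydration,
    }
```

Keeping the components separate makes it easier to see which lever matters: lowering `indexed_fraction` reduces the indexing line without touching how long raw data is retained.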

Technical Mechanics Behind Ingestion Costs

The amount of telemetry is determined by how the application works, not how big the infrastructure is.

Log Verbosity and Logging Patterns

- Debug logs can make the volume go up by 10 to 100 times due to verbose output.

- Structured logging makes the payload bigger due to metadata.

Trace Sampling Strategies

- Head sampling cuts down on volume early on

- After collection, tail sampling picks high-value traces

High-Cardinality Metrics

Metrics labeled per user, session, tenant, or request ID can make the number of time series, and the volume of data that must be processed, very large.

OpenTelemetry Pipeline Transformations

OTel collectors can:

- Filter telemetry

- Aggregate metrics

- Drop low-value logs

- Redact sensitive fields

Changes to the pipeline have a direct effect on the cost of ingestion.
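The transformations listed above can be illustrated in plain Python. This is not the OpenTelemetry Collector API (a real collector is configured declaratively with processors); the record shape, the field names in `SENSITIVE_KEYS`, and both function names are assumptions made for the sketch.

```python
SENSITIVE_KEYS = {"password", "authorization", "credit_card"}  # hypothetical field names
DROP_LEVELS = {"DEBUG", "TRACE"}


def process_logs(records):
    """Yield records after dropping low-value logs and redacting sensitive fields."""
    for record in records:
        if record.get("level", "").upper() in DROP_LEVELS:
            continue  # filtered records never leave the pipeline, so they are never billed
        attrs = {
            key: ("[REDACTED]" if key.lower() in SENSITIVE_KEYS else value)
            for key, value in record.get("attributes", {}).items()
        }
        yield {**record, "attributes": attrs}


def aggregate_counters(points):
    """Sum (name, value) counter points that share a name, discarding per-point labels."""
    totals = {}
    for name, value in points:
        totals[name] = totals.get(name, 0) + value
    return totals
```

Every record dropped or collapsed here is volume that is never ingested, which is why pipeline changes show up directly in an ingestion-based bill.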

Where Ingestion Pricing Works Well

Ingestion-based pricing ties observability spend to signal volume rather than infrastructure footprint. In systems where operational complexity is driven by request flow, service interaction, and telemetry density, this model maps cost to runtime behavior instead of static capacity allocation.

Kubernetes-Native Environments

In Kubernetes environments, telemetry volume tracks workload behavior rather than static node counts. Ingestion-based pricing aligns cost with request activity and scaling events, allowing clusters to autoscale without automatically increasing observability licensing tied to infrastructure growth.

Microservices Architectures

In microservices, telemetry volume is driven by the number of calls, not the number of machines. A single user request can fan out across auth, catalog, pricing, checkout, payments, and fraud, and each hop adds spans, logs, and metrics. Ingestion-based pricing maps to that reality: when the system produces more signals, you pay more; when it produces less, you pay less.

Multi-Cluster or Multi-Cloud Setups

Multi-region and multi-cloud deployments usually duplicate infrastructure on purpose: failover, latency, regulatory boundaries. Host-based pricing often treats that redundancy like “more product usage,” even when traffic has not changed.

With ingestion pricing, expansion is simpler to reason about. Cost follows emitted telemetry, so standing up a second cluster for resilience only becomes expensive if it starts generating a lot of additional data.

Vendor-Neutral Telemetry Pipelines

OpenTelemetry pipelines let you shape telemetry before it hits storage: drop noisy attributes, reduce high-cardinality dimensions, sample traces during normal operation, and keep more detail during incidents. That is where cost control actually happens.

Ingestion pricing works well with this model because the engineering team can tune volume directly in the pipeline, instead of being locked into a licensing unit that ignores whether the data is useful.

Structural Limitations of Ingestion Pricing

Ingestion pricing aligns cost with telemetry volume. That sounds logical. But telemetry volume is not stable. It changes with traffic patterns, engineering decisions, and incident behavior.

Incident-Driven Log Spikes

Outages generate noise. Retries multiply, and services emit stack traces. Engineers temporarily enable debug logs. A request that normally produces five spans may suddenly produce fifty.

It’s not unusual to see 10x or even 100x telemetry expansion during a major incident. The problem is timing. The spike happens precisely when the system is under stress and when teams are least focused on cost controls. In an ingestion model, that surge directly translates to a billing surge.

Debug Logging Mistakes

Misconfigured logging levels can cause massive telemetry explosions. A single service deployed with debug-level logging in production can emit terabytes of logs within hours, especially in high-throughput systems. Because ingestion pricing charges per data volume, even a minor configuration error can translate into substantial and unexpected observability spend.

Cardinality Explosions

High-cardinality metrics occur when labels are dynamically generated, such as user IDs, request IDs, or tenant identifiers. Each unique label combination creates a new time series, which increases both ingestion volume and query complexity. Cardinality explosions can generate millions of metric series, dramatically raising storage, indexing, and compute costs while also degrading query performance.
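The multiplication behind a cardinality explosion is easy to show. The sketch below computes the upper bound on time series for one metric as the product of distinct values per label; the label names and counts are made-up examples, and real series counts are usually lower because not every combination occurs.

```python
from math import prod


def max_series(label_cardinalities):
    """Upper bound on time series for one metric: product of distinct values per label."""
    return prod(label_cardinalities.values())


base = {"service": 20, "endpoint": 15, "status_code": 5}
with_user = {**base, "user_id": 10_000}

print(max_series(base))       # 1500
print(max_series(with_user))  # 15000000
```

Adding one unbounded label turns a metric with at most 1,500 series into one with up to 15 million.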

Long-Term Indexing and Storage Growth

Retention and compliance requirements often mandate storing telemetry for months or years. Over time, even moderate ingestion rates accumulate into petabytes of telemetry data. While raw retention storage may be inexpensive, indexed data required for fast querying is significantly more costly, and rehydrating archived telemetry for audits introduces additional compute and indexing expenses.

Cost Behavior Under Real Production Scenarios

Cost Transparency vs Cost Opacity

Host-based pricing ties spend to infrastructure units. That makes it operationally stable, but it often obscures inefficiencies in telemetry design. You can over-instrument a service, emit verbose logs, or attach high-cardinality labels and nothing changes on the invoice if host count stays the same. The inefficiency hides inside the telemetry.

Ingestion-based pricing makes telemetry volume directly visible in spend. Poor sampling strategies, verbose logging, and uncontrolled high-cardinality metrics surface quickly as financial impact. The cost model exposes signal quality decisions rather than masking them behind infrastructure abstraction.

Host-based models are typically stable but blunt. Ingestion-based models are granular but sensitive to runtime behavior.

Scenario 1: Kubernetes Autoscaling from 20 to 200 Nodes

Host-Based Cost Expansion

A cluster scales from 20 to 200 nodes during a traffic surge. Under host pricing, licensing scales with node count. Even if the spike lasts two hours, the cost impact reflects the full infrastructure footprint for that period.

- Traffic might only be 2x.

- Infrastructure might be 10x.

- Billing follows infrastructure.

Ingestion-Based Volume Behavior

Ingestion cost increases only if request volume, traces, logs, or metrics actually grow. Autoscaling alone does not trigger higher licensing unless it produces additional telemetry.

Predictability Differences

Host-based pricing makes infrastructure growth the primary forecast variable. Ingestion-based pricing requires modeling traffic patterns and signal emission rates instead of node counts.
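A rough sketch of this scenario follows. It assumes host billing is prorated by node-hour (many vendors instead bill on a monthly high-water mark or a percentile of host count), that telemetry volume scales with traffic, and that all rates and volumes are hypothetical.

```python
def host_cost(node_hours, price_per_node_hour):
    """Host-based cost for a window, assuming node-hour proration."""
    return node_hours * price_per_node_hour


def ingestion_cost(gb_ingested, price_per_gb):
    """Ingestion-based cost for a window."""
    return gb_ingested * price_per_gb


HOURS = 2                       # length of the traffic surge
BASELINE_NODES, PEAK_NODES = 20, 200
BASELINE_GB_PER_HOUR = 10.0     # hypothetical telemetry rate before the surge
TRAFFIC_MULTIPLIER = 2.0        # traffic doubles while nodes grow 10x

host_ratio = (host_cost(PEAK_NODES * HOURS, 0.03)
              / host_cost(BASELINE_NODES * HOURS, 0.03))
ingest_ratio = (ingestion_cost(BASELINE_GB_PER_HOUR * TRAFFIC_MULTIPLIER * HOURS, 0.15)
                / ingestion_cost(BASELINE_GB_PER_HOUR * HOURS, 0.15))

print(f"host-based cost multiple during surge: {host_ratio:.1f}x")
print(f"ingestion-based cost multiple during surge: {ingest_ratio:.1f}x")
```

Under these assumptions the host-based bill for the window follows the tenfold node growth, while the ingestion-based bill follows the doubling of traffic.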

Scenario 2: Log Volume Spike During an Incident

Host Pricing Stability

If host count remains unchanged during an incident, licensing cost remains stable. However, excessive log generation may go financially unnoticed in the short term.

Ingestion Billing Surge Risk

A sudden increase in log volume directly impacts ingestion spend. Without rate limiting or sampling controls, incident-driven verbosity can create measurable cost spikes.
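One common form of rate limiting is a token bucket measured in bytes. The sketch below is a generic illustration, not a feature of any specific platform; the class name and parameters are hypothetical, and the caller passes the clock value explicitly so the behavior is deterministic (in production it would typically come from `time.monotonic()`).

```python
class LogByteBudget:
    """Token bucket measured in bytes: refills at a steady rate and caps bursts."""

    def __init__(self, bytes_per_second, burst_bytes):
        self.rate = bytes_per_second
        self.capacity = burst_bytes
        self.tokens = burst_bytes  # start with a full bucket
        self.last = 0.0

    def allow(self, size_bytes, now):
        """Return True if a record of size_bytes may be exported at time `now` (seconds)."""
        elapsed = max(0.0, now - self.last)
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.last = now
        if size_bytes <= self.tokens:
            self.tokens -= size_bytes
            return True
        return False  # caller can drop, sample, or route the record to cheap storage
```

A budget like this bounds the worst-case ingestion rate during an incident. The tradeoff is that some records are rejected exactly when volume peaks, so it is usually paired with rules that exempt error-level logs.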

Governance Controls

Ingestion-based models demand stronger telemetry governance, including sampling policies, log filtering, and retention discipline.

Host-based pricing feels simpler. You can emit noisy metrics or overly detailed logs and the invoice doesn’t immediately react. But the inefficiency is still there. It just shows up as slower queries, heavier storage, and harder troubleshooting.

Scenario 3: High-Cardinality Metrics

Visibility of Cost Drivers

In ingestion models, high-cardinality metrics immediately affect storage and query cost. Add user_id, tenant_id, or session_id as a metric label, and suddenly one metric becomes thousands or millions of time series.

In an ingestion model, that explosion hits immediately. More series means more index work, more storage, more query cost. The spend moves with the mistake.

Hidden vs Explicit Cost Exposure

Under host-based pricing, cardinality increases may degrade performance or storage efficiency without directly altering licensing cost. The inefficiency is operational, not financial.

Impact on Query Performance

High-cardinality signals affect query latency in both models. The difference is whether cost exposure makes the tradeoff visible and measurable.

Scenario 4: Multi-Region Expansion

Host Duplication Impact

Expanding to additional regions typically requires duplicating infrastructure. Host-based pricing scales with each replicated node, regardless of traffic distribution.

Ingestion Growth Tied to Traffic

In ingestion models, cost scales with regional traffic volume rather than mere infrastructure presence. Idle redundancy does not automatically inflate licensing.

Forecasting Difficulty

Host-based forecasting relies on infrastructure planning. Ingestion forecasting depends on traffic modeling, retention strategy, and signal discipline. Each requires different financial controls.

Side-by-Side Cost Behavior Comparison Table

| Scenario | Host-Based Impact | Ingestion-Based Impact | Cost Predictability | Operational Risk |
| --- | --- | --- | --- | --- |
| Autoscaling | Linear cost surge | Volume-based scaling | Medium | Medium |
| Incident log spike | Stable | Potential surge | Low | High |
| High-cardinality metrics | Hidden | Explicit increase | Medium | Medium |
| Multi-region expansion | Fixed duplication | Traffic-driven | Medium | Low |

Forecasting and Financial Predictability

Linear vs Nonlinear Cost Curves

Host-based pricing generally produces linear cost curves. As node count increases, spend increases proportionally. Forecasting depends primarily on infrastructure planning cycles and capacity models.

Ingestion-based pricing produces behavior-driven curves. Cost scales with request volume, trace density, log emission, retention, and cardinality. The curve is elastic and may not correlate directly with infrastructure size. Forecasting requires traffic modeling rather than hardware modeling.

The difference is not predictability versus unpredictability. It is infrastructure-linear versus workload-sensitive cost behavior.
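The two curve shapes can be written as two small forecasting functions. This is a simplified sketch: the parameter names and per-request figures are assumptions, metrics volume is left out for brevity, and a real forecast would also model retention and cardinality.

```python
def forecast_host_cost(nodes, price_per_node):
    """Infrastructure-linear: the only input is planned node count."""
    return nodes * price_per_node


def forecast_ingest_gb(requests, spans_per_request, bytes_per_span,
                       trace_sample_rate, log_bytes_per_request):
    """Workload-sensitive: volume is a product of traffic and signal-design choices."""
    trace_bytes = requests * spans_per_request * bytes_per_span * trace_sample_rate
    log_bytes = requests * log_bytes_per_request
    return (trace_bytes + log_bytes) / 1e9  # decimal gigabytes


# Hypothetical month: 1M requests, 10 spans each, 500 bytes per span,
# 10% trace sampling, 1 KB of logs per request.
gb = forecast_ingest_gb(1_000_000, 10, 500, 0.1, 1000)
print(f"forecast ingestion: {gb:.2f} GB")
```

The host forecast has one input. The ingestion forecast has several, and each one (sampling rate, span count, log size) is a lever an engineering team can change without touching infrastructure.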

Who Controls Observability Cost?

Infrastructure Team

- Keeps track of the number of nodes

- Controls policies for autoscaling

Application Team

- Controls log verbosity

- Determines metric cardinality

- Designs trace instrumentation

Platform Engineering

- Has control over telemetry pipelines

- Uses sampling and filtering

- Sets retention policies

Budgeting Complexity in Each Model

Host-based pricing simplifies budgeting when infrastructure growth is slow and predictable. Cost centers align with clusters or environments. However, inefficiencies in telemetry design may remain financially invisible.

Ingestion-based pricing increases budgeting sensitivity to workload volatility. Finance teams must account for traffic seasonality, product launches, and incident-driven signal bursts. The benefit is cost transparency; the tradeoff is modeling complexity.

Cost Elasticity vs Cost Opacity

Host-based pricing offers relative stability but can obscure the relationship between signal quality and spend. Inefficient telemetry may not surface as a direct financial signal.

Ingestion-based pricing introduces elasticity. Spend expands or contracts with runtime behavior. The model exposes signal discipline decisions in real time but requires governance maturity.

For technical leadership, the core question is not which model is cheaper. It is which cost behavior aligns with organizational control boundaries and operational maturity.

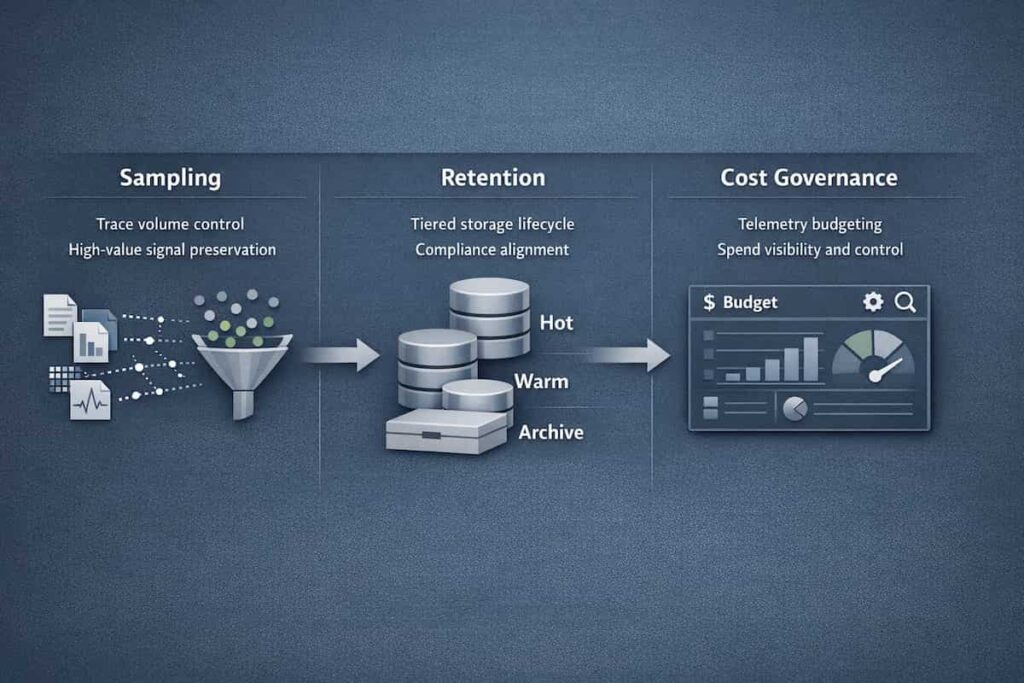

Sampling, Retention, and Cost Governance

Observability cost is rarely determined by pricing model alone. It is shaped by sampling strategy, retention policy, indexing decisions, and operational discipline. The effectiveness of these controls differs significantly between host-based and ingestion-based pricing.

Head Sampling vs Tail Sampling

Head sampling makes a keep/drop decision at the point of trace creation. It reduces ingestion volume early, lowering storage and processing cost, but risks discarding high-value traces during incidents.

Tail sampling evaluates traces after observing full execution context. It preserves anomalous or high-latency traces while discarding low-value ones. Tail strategies are computationally heavier but align cost with signal relevance rather than randomness.

In ingestion-based pricing, sampling directly affects cost exposure. In host-based models, sampling affects system performance and storage utilization more than licensing itself.
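The difference between the two decisions can be sketched in a few lines. This is an illustration rather than any library's implementation: the span dictionary shape (`trace_id`, `duration_ms`, `error`), the 500 ms threshold, and the function names are assumptions.

```python
import hashlib


def head_sample(trace_id: str, rate: float) -> bool:
    """Keep/drop from the trace ID alone, decided before any span is recorded."""
    digest = hashlib.sha256(trace_id.encode()).digest()
    # Map the ID to a 64-bit integer and keep it if it falls in the lowest `rate` fraction.
    return int.from_bytes(digest[:8], "big") < rate * 2**64


def tail_sample(spans, latency_threshold_ms=500.0, baseline_rate=0.0):
    """Keep/drop after the whole trace is seen: always keep errors and slow traces."""
    if any(span.get("error") for span in spans):
        return True
    if max((span["duration_ms"] for span in spans), default=0.0) >= latency_threshold_ms:
        return True
    # Otherwise fall back to a small deterministic sample of normal traffic.
    return bool(spans) and head_sample(spans[0]["trace_id"], baseline_rate)
```

Hashing the trace ID keeps the head decision consistent across services that see the same trace. The tail decision needs every span buffered until the trace completes, which is where its extra compute and memory cost comes from.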

Adaptive and Dynamic Sampling

Adaptive sampling adjusts rates based on traffic patterns or error conditions. Dynamic policies may increase sampling during anomalies and reduce it during steady-state operation.

This approach introduces cost elasticity tied to system health. It requires governance maturity but enables cost to track operational risk rather than raw volume.

In host-based pricing, adaptive sampling primarily optimizes infrastructure usage. In ingestion-based pricing, it becomes a financial control mechanism.
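A simple adaptive policy might look like the following. The thresholds, the linear ramp, and the function name are assumptions chosen for illustration; production systems often use smoothed error rates and per-service budgets instead.

```python
def adaptive_rate(error_rate, base_rate=0.05, max_rate=1.0, error_threshold=0.01):
    """Return a trace sampling rate that rises as the observed error rate rises.

    At or below error_threshold the steady-state base_rate applies. Above it,
    the rate ramps linearly and reaches max_rate at 10x the threshold.
    """
    if error_rate <= error_threshold:
        return base_rate
    ramp_width = 9 * error_threshold
    fraction = min(1.0, (error_rate - error_threshold) / ramp_width)
    return base_rate + fraction * (max_rate - base_rate)
```

With these defaults, a healthy service is sampled at 5 percent, and a service with a 10 percent error rate or worse is sampled fully. Under ingestion pricing, `max_rate` is effectively a spending ceiling during incidents.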

Log Lifecycle Management

Logs are usually the biggest cost line in an observability stack. Not metrics. Not traces. Logs. Lifecycle management isn’t just storage policy. It’s engineering policy. Lifecycle management includes filtering, tiered storage, retention windows, and archival policies.

Under ingestion-based models, aggressive log filtering and structured logging discipline directly reduce spend. Under host-based models, excessive logging may degrade performance without immediately surfacing as licensing cost.

Log governance becomes a design decision, not a storage afterthought.

Best practices include:

- Short retention of debug logs

- Indexed error logs

- Archived info logs
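These practices can be expressed as a routing table keyed by log level. The retention windows, tier names, and the `LogPolicy` type below are hypothetical values for illustration; actual windows depend on compliance requirements and the storage backend.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LogPolicy:
    indexed: bool        # searchable in real time
    retention_days: int  # how long the data is kept
    tier: str            # storage tier label (hypothetical names)


POLICIES = {
    "DEBUG": LogPolicy(indexed=False, retention_days=3, tier="hot"),
    "INFO": LogPolicy(indexed=False, retention_days=90, tier="archive"),
    "ERROR": LogPolicy(indexed=True, retention_days=30, tier="hot"),
}
DEFAULT_POLICY = POLICIES["INFO"]


def policy_for(level: str) -> LogPolicy:
    """Look up the lifecycle policy for a log level, defaulting to the INFO policy."""
    return POLICIES.get(level.upper(), DEFAULT_POLICY)
```

Writing the policy down as data makes it reviewable: a change to debug retention becomes a visible diff instead of a setting buried in a vendor console.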

Retention vs Indexing vs Rehydration Trade-Offs

Retention defines how long telemetry is stored. Indexing determines what is queryable in real time. Rehydration allows archived data to be restored for investigation.

Long retention with full indexing increases both cost and query overhead. Selective indexing with archival storage reduces active footprint but increases investigation latency.

In an ingestion model, these are direct cost decisions. Index more, pay more. Retain longer, pay more. The curve is visible. In host-based pricing, the financial impact may be indirect but still operationally significant.

Governance Levers Available in Each Pricing Model

- Host-Based Models: Control happens at the infrastructure layer. You resize clusters, consolidate environments, and manage node lifecycles. Telemetry discipline still matters, but it mostly affects performance and efficiency before it affects licensing.

- Ingestion-Based Models: Primary levers include sampling rate, log filtering, metric cardinality controls, retention policies, and indexing scope. Cost governance is tightly coupled to signal design and runtime behavior.

The structural difference is where accountability resides. Host-based pricing centralizes cost control around infrastructure. Ingestion-based pricing distributes responsibility across application, platform, and observability engineering.

Hybrid Pricing Models: The Hidden Complexity

A lot of vendors use more than one pricing model.

Host-Based APM + Ingestion-Based Logs

This creates:

- Dual cost drivers

- Different optimization strategies for each signal

- Finance confusion

User-Based or Event-Based RUM Additions

RUM usually charges by:

- Session

- User

- Event

This adds a third pricing axis.

Why Blended Models Create Billing Confusion

- More than one billing unit

- Separate cost dashboards

- Problems with aligning engineering and finance

Which Pricing Model Scales Better at Different Growth Stages?

Early-Stage Startup (Low Infra, High Velocity)

Best fit: Ingestion-based

- Small infrastructure footprint

- High speed of experimentation

- Early telemetry governance is easier

Mid-Size SaaS (Kubernetes Heavy, CI/CD Driven)

Best fit: Ingestion-based with governance

- Autoscaling clusters

- Widely distributed microservices

- Pressure to reduce telemetry cost

Enterprise (Multi-Region, Compliance, Long Retention)

Best fit: Hybrid or ingestion-first

- Compliance retention requirements

- Very large scale

- Dedicated cost governance teams

Technical Evaluation Checklist Before Choosing a Pricing Model

Before choosing a pricing model, it is worth stepping back and evaluating the broader architectural fit of the platform itself. If you have not already done so, review this practical checklist for evaluating an observability platform for a structured framework covering telemetry behavior, sampling control, cost dynamics, and long-term ownership considerations. It provides the context needed to interpret pricing trade-offs correctly.

Before you make a decision, ask:

- Do you use aggressive autoscaling?

- Does your workload come in bursts or stay steady?

- Do you use high-cardinality metrics?

- How strict are your rules for logging?

- Do you use OpenTelemetry pipelines?

- Do you need long retention windows?

- Who is responsible for the cost of telemetry within the company?

Where CubeAPM Fits in the Ingestion vs Host Debate

Observability tools generally follow one of two pricing paradigms:

- Host-based pricing: charged by number of hosts, containers, or agents

- Ingestion-based pricing: charged by volume of telemetry ingested

Host-based pricing was popular when monoliths dominated, but as microservices, Kubernetes, and ephemeral infrastructure scale, it becomes harder to predict and manage cost. Each additional node, container, or service adds incremental cost even if telemetry volume doesn’t increase proportionally.

In contrast, CubeAPM is built on a transparent per-GB ingestion model. You pay only for the telemetry you send, not for the number of hosts you run. There is no pricing based on number of users, retention duration, or support tiers. This removes infrastructure scale, seat expansion, and long-term data storage from the cost equation. Since retention is architecture-driven rather than license-driven, teams can keep data as long as their storage strategy allows, without facing vendor-imposed retention upgrades or add-on fees.

Example: Host-Based vs Ingestion-Based Cost Dynamics

Pricing figures are based on publicly available pricing pages at the time of writing. Actual costs vary depending on region, contract terms, and feature selection.

Why Datadog Is Used in This Example

Datadog is used here because it represents a widely adopted host-based pricing model in the observability market. Its infrastructure and APM billing structure makes it a practical reference point when illustrating how host-based pricing compounds across workloads. This example is not an endorsement or critique, but a structural comparison of pricing models.

What Is a Mid-Sized Platform?

For this example, a mid-sized platform refers to a production SaaS environment running:

- 40–60 compute hosts across environments

- Multiple Kubernetes clusters

- 8–15 TB monthly telemetry ingestion

- Mix of infrastructure + APM + log monitoring

The 50-host / 10 TB scenario represents a common production footprint for a growing SaaS team operating at meaningful scale but not yet enterprise hyperscale.

| Component | Host-Based Model | Ingestion-Based Model |
| --- | --- | --- |
| Infrastructure cost | Scales per host | Scales per GB ingested |
| APM cost | Separate per-host charge | Included in ingestion |
| Scaling impact | Cost increases linearly with hosts | Cost tied to telemetry volume |
| Cost predictability | Tied to cluster size | Tied to data volume |

Consider the 50-host / 10 TB platform described above. Under Datadog’s Enterprise plan, pricing compounds across infrastructure and APM hosts.

Infrastructure monitoring: 50 × $23 = $1,150

APM hosts: 50 × $40 = $2,000

Data transfer: 10,000 GB × $0.10 = $1,000

Additional line items such as serverless monitoring, indexed spans, error tracking, and synthetics introduce further charges.

In this 50-host / 10 TB scenario, the total monthly cost reaches approximately $4,894, as shown in the detailed comparison. Each additional host directly increases cost. When clusters scale, billing scales with them.

With CubeAPM’s ingestion-based pricing at $0.15 per GB, the same 10 TB workload translates to approximately $1,750 per month. There are no per-host infrastructure fees and no APM host multipliers. Cost is tied strictly to telemetry volume.
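The arithmetic in this comparison can be sketched in a few lines of Python. This is a minimal illustration, not a billing calculator: it uses only the rates quoted above, and the function names and the 80-host scale-out case are hypothetical additions for illustration. It covers only the itemized charges, so its results are lower than the approximate totals quoted in the text ($4,894 and $1,750), which presumably include charges that are not itemized here.

```python
# Rates quoted in the example above. They are list prices at the time of
# writing and vary by region, contract terms, and feature selection.
INFRA_PER_HOST = 23.00    # USD per host per month
APM_PER_HOST = 40.00      # USD per APM host per month
TRANSFER_PER_GB = 0.10    # USD per GB of data transfer
INGEST_PER_GB = 0.15      # USD per GB, flat ingestion rate


def host_based_itemized(hosts: int, telemetry_gb: float) -> float:
    """Itemized host-based cost: infrastructure + APM + data transfer.

    Excludes the additional line items (serverless, indexed spans, etc.).
    """
    return (
        hosts * INFRA_PER_HOST
        + hosts * APM_PER_HOST
        + telemetry_gb * TRANSFER_PER_GB
    )


def ingestion_charge(telemetry_gb: float) -> float:
    """Ingestion charge at the flat per-GB rate; host count plays no role."""
    return telemetry_gb * INGEST_PER_GB


if __name__ == "__main__":
    telemetry_gb = 10_000  # 10 TB, using 1 TB = 1,000 GB as in the example
    # 50 hosts is the scenario above; 80 hosts is a hypothetical scale-out
    # with unchanged telemetry volume.
    for hosts in (50, 80):
        print(
            f"{hosts} hosts: "
            f"host-based itemized ${host_based_itemized(hosts, telemetry_gb):,.2f}, "
            f"ingestion ${ingestion_charge(telemetry_gb):,.2f}"
        )
```

With telemetry held at 10,000 GB, the itemized host-based figure rises from $4,150 at 50 hosts to $6,040 at 80 hosts, while the ingestion charge stays at $1,500. The reverse also holds: if telemetry volume doubled at a constant host count, only the per-GB terms would move.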

Flat Per-GB Ingestion Model

CubeAPM has clear, flat pricing of $0.15/GB for ingestion with no hidden multipliers.

This means:

- You are billed strictly on actual telemetry volume received

- No throughput ceilings tied to host count

- No add-on feature pack multipliers

- No cost jumps when containers scale up

Smart Sampling for Cost Control

Under ingestion-based pricing, cost control depends on keeping telemetry volume and metric cardinality in check. That’s why CubeAPM includes smart sampling aligned with its architecture.

Rather than static or random sampling, CubeAPM’s approach:

- Preserves high-value traces (errors, anomalies, latency outliers)

- Dynamically adjusts sampling rates based on traffic patterns

- Filters low-value telemetry using policy rules

- Controls metric cardinality to avoid runaway series explosions

These features ensure you retain the telemetry needed for confidence while reducing cost pressure.
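The general idea behind the first two bullets can be sketched as follows. This is a generic illustration of priority-aware sampling, not CubeAPM's implementation: the `Trace` type, the thresholds, and both function names are hypothetical.

```python
import random
from dataclasses import dataclass
from typing import Callable


@dataclass
class Trace:
    trace_id: str
    duration_ms: float
    has_error: bool


def keep_trace(
    trace: Trace,
    latency_threshold_ms: float = 1000.0,   # hypothetical outlier cutoff
    base_rate: float = 0.1,                 # hypothetical baseline sample rate
    rng: Callable[[], float] = random.random,
) -> bool:
    """Always keep errors and slow traces; sample the rest at base_rate."""
    if trace.has_error:
        return True
    if trace.duration_ms >= latency_threshold_ms:
        return True
    # rng() returns a float in [0.0, 1.0), so base_rate=1.0 keeps everything
    # and base_rate=0.0 keeps nothing from the routine traffic.
    return rng() < base_rate


def adjusted_rate(traces_per_second: float, target_per_second: float) -> float:
    """Lower the baseline rate as traffic grows so kept volume stays near a target."""
    if traces_per_second <= 0:
        return 1.0
    return min(1.0, target_per_second / traces_per_second)


if __name__ == "__main__":
    rate = adjusted_rate(traces_per_second=1000, target_per_second=100)
    sample = [
        Trace("t1", 35.0, has_error=False),
        Trace("t2", 2400.0, has_error=False),  # latency outlier, always kept
        Trace("t3", 20.0, has_error=True),     # error, always kept
    ]
    for t in sample:
        print(t.trace_id, keep_trace(t, base_rate=rate))
```

Because errors and latency outliers bypass the probabilistic step, lowering `base_rate` during a traffic surge reduces the volume of routine traces without discarding the ones most likely to matter in an investigation.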

Unlimited Retention Architecture

CubeAPM separates indexing from retention, which enables:

- Long-term storage for compliance: Telemetry can be stored for regulatory and audit purposes.

- Low-cost cold storage: Rarely accessed data is kept in cold storage, which reduces storage expenses.

- Rehydration on demand: Archived telemetry can be reindexed for investigations without permanent indexing cost.

Multi-Agent and OpenTelemetry Ingestion Support

CubeAPM supports:

- Native agents

- OpenTelemetry Collectors

- Vendor-neutral pipelines

This keeps instrumentation portable across platforms and avoids vendor lock-in.

Vendor-Managed Self-Hosting for Cost Predictability

CubeAPM provides managed self-hosting to:

- Eliminate egress fees: Self-hosting avoids the cloud egress charges incurred when sending telemetry to SaaS observability vendors.

- Keep storage costs low: Local or controlled storage lowers long-term telemetry expenses.

- Align telemetry cost with infrastructure cost: Costs scale with owned infrastructure rather than unpredictable SaaS pricing.

Conclusion: Pricing Model Choice Is Really About Cost Behavior

Both host-based and ingestion-based observability pricing models can scale. The main difference is how they scale.

- Host-based pricing scales with infrastructure units

- Ingestion-based pricing scales with telemetry volume

The choice is not about getting a better deal. It is about predictability, governance, and alignment with your architecture.

Your observability pricing must match:

- Architecture

- Growth trajectory

- Engineering discipline

- Compliance requirements

Teams that understand early on how their costs will behave avoid observability bill shocks later.

Disclaimer: The information in this article reflects the latest details available at the time of publication and may change as technologies and products evolve.

FAQs

1. Is ingestion-based pricing always cheaper than host-based pricing?

No. Ingestion-based pricing can be cheaper in autoscaling environments, but it can be more expensive when telemetry spikes happen without governance.

2. How does Kubernetes autoscaling affect observability costs?

Under host-based pricing, autoscaling raises costs with the number of hosts. Under ingestion-based pricing, costs rise with telemetry volume, not node count.

3. Can sampling make ingestion pricing predictable?

Yes. Adaptive sampling, filtering, and aggregation make ingestion costs much easier to predict.

4. Why do observability bills spike during outages?

Incidents generate a surge of logs, traces, and retries. Ingestion-based models bill for this surge in telemetry.

5. Which pricing model works best with OpenTelemetry?

OpenTelemetry works best with ingestion-based pricing because pipelines can filter and control the amount of telemetry that is ingested.
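As a rough illustration of what filtering in a pipeline means for cost, the sketch below drops low-severity log records before export and compares the byte volume before and after. It is plain Python, not the OpenTelemetry API: the record shape, the `filter_logs` and `body_bytes` helpers, and the severity ordering are all hypothetical. In an OpenTelemetry Collector, a rule like this would normally be expressed in the pipeline configuration rather than in application code.

```python
from typing import Dict, Iterable, Iterator

# Hypothetical severity ranking used only for this sketch.
SEVERITY_ORDER = {"DEBUG": 0, "INFO": 1, "WARN": 2, "ERROR": 3}

LogRecord = Dict[str, str]


def filter_logs(records: Iterable[LogRecord], min_severity: str = "INFO") -> Iterator[LogRecord]:
    """Yield only records at or above min_severity.

    Records with an unrecognized severity are kept rather than silently dropped.
    """
    floor = SEVERITY_ORDER[min_severity]
    for record in records:
        if SEVERITY_ORDER.get(record["severity"], floor) >= floor:
            yield record


def body_bytes(records: Iterable[LogRecord]) -> int:
    """Approximate ingested volume as the UTF-8 size of the log bodies."""
    return sum(len(r["body"].encode("utf-8")) for r in records)


if __name__ == "__main__":
    logs = [
        {"severity": "DEBUG", "body": "cache lookup key=user:42"},
        {"severity": "INFO", "body": "request completed"},
        {"severity": "ERROR", "body": "upstream timeout"},
    ]
    kept = list(filter_logs(logs, min_severity="INFO"))
    print(f"before: {body_bytes(logs)} bytes, after: {body_bytes(kept)} bytes")
```

Under ingestion-based pricing, every byte removed at this stage is a byte that is never billed, which is why pipeline rules act as a direct cost lever.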