Distributed tracing is foundational to modern incident response, serving as the primary mechanism for isolating faults within complex, microservice-based architectures. However, a systemic vulnerability persists: telemetry pipelines frequently fail during peak volatility. When an incident occurs, teams rely on traces to perform root-cause analysis and map service dependencies in real time.

Yet precisely when the system enters a degraded state, the most critical diagnostic data often fails to persist, creating visibility gaps that directly inflate Mean Time to Resolution (MTTR). This fragility stems from a core design mismatch: most tracing infrastructures are optimized for predictable, steady-state traffic, whereas incidents introduce non-linear behavioral shifts. Nested retry loops can multiply span volume at every layer, while tail-latency amplification leads to memory pressure and buffer exhaustion at the agent level.

This article explains why that happens, where traces actually disappear, and what teams that care about incident reliability do differently to make sure the data they need is still there when things go sideways.

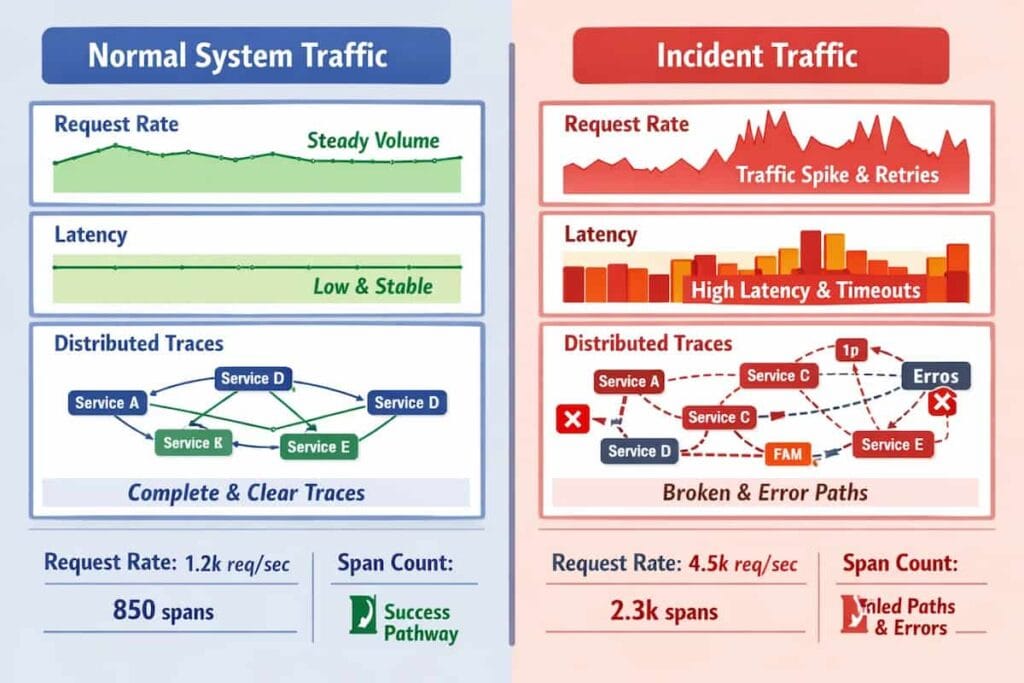

How Incident Traffic Is Different From Normal Traffic

Incident traffic differs from normal traffic in that it creates self-amplifying, high-volume stress that exposes hidden system weaknesses. Retries multiply load, while increased fanout, error-path execution, and uneven latency spikes overwhelm tracing pipelines, which can then fail outright.

Retries amplify request volume without warning

Under normal conditions, retries are uncommon and fairly predictable. Most systems see them occasionally, and capacity planning quietly accounts for them. Incidents change that. A single failed request can trigger multiple follow-ups, sometimes three or more, all hitting the system at once. What looks like one user action turns into a burst of traffic that didn’t exist a moment earlier.
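The amplification is easy to underestimate when retries are stacked across layers. The sketch below is a worst-case estimate under a simplifying assumption that is not from any specific system: every layer in a call chain makes one initial attempt plus the same number of retries, and every attempt fails. The function name and the numbers are illustrative only.

```python
def worst_case_attempts(retries_per_layer: int, layers: int) -> int:
    """Worst-case attempts reaching the deepest layer for one user request.

    Assumes each layer makes 1 initial attempt plus `retries_per_layer`
    retries and that every attempt fails, so each layer multiplies the load.
    """
    attempts_per_layer = 1 + retries_per_layer
    return attempts_per_layer ** layers


for depth in (1, 2, 3):
    print(depth, worst_case_attempts(retries_per_layer=3, layers=depth))
# prints:
# 1 4
# 2 16
# 3 64
```

Each of those attempts also emits its own spans, so span volume grows at least as fast as request volume.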

Fanout increases trace size and depth

In healthy systems, requests follow a small number of well-understood paths. However, during incidents, requests that usually touch a few services suddenly fan out across many. Traces become longer and heavier, packed with spans that rarely appear during normal operation, at exactly the moment when the tracing pipeline is already under stress.

Error paths activate code that rarely runs

Under normal load, traffic mostly exercises fast, happy-path execution. The paths are optimized, measured, and understood. Error-handling code, by contrast, sits quietly in the background and rarely gets exercised in meaningful ways. During incidents, traffic is forced through error handlers, circuit breakers, retry loops, and secondary failover logic that was never designed to run at full volume. The result is higher cardinality, heavier computation, and, in some systems, real pressure on indexing and storage layers that only becomes visible once the failure is already underway.

Latency spreads unevenly instead of degrading smoothly

Incidents typically produce non-uniform latency distributions, where certain execution branches hang indefinitely while others fail fast. This inconsistency causes asynchronous spans to arrive at the collector outside of the configured “look-back” window. When spans arrive too late to be correlated with their parent context, the tracing system produces fragmented, incomplete traces that lack the necessary context for root-cause analysis. Taken together, these shifts put tracing pipelines under pressure they never see in normal operation.

Tracing Pipelines Experience Unprecedented Backpressure

Telemetry infrastructure is generally sized for predictable percentiles of normal operation. During high-volatility events, the simultaneous increase in volume, span depth, and metadata cardinality creates critical backpressure. When the pipeline reaches its saturation point, internal load-shedding mechanisms engage and frequently discard the high-signal, error-heavy traces required for effective incident response.

What “Missing Traces” Actually Means

“Missing traces” is often used as a single diagnosis, but it actually refers to several distinct failure modes within the observability pipeline. These failures occur at different stages, require different fixes, and are frequently misinterpreted during incidents. Without separating them, teams tend to optimize the wrong layer and repeat the same trace gaps under pressure.

Traces That Were Never Recorded

This failure occurs at the earliest stage of the telemetry lifecycle, where the data is discarded before it ever leaves the application process.

- Sampling decisions drop traces at the SDK or agent: Most tracing implementations utilize head-based sampling to manage costs and overhead. In this model, the decision to record or discard a trace is made at the start of the request. If the sampling rate is too low, the system may discard the very requests that eventually encounter errors, as the “decision” occurred before the service logic hit a failure state.

- The trace is gone before the failure is visible: When an incident begins, the initial errors often occur on execution paths that were not previously identified as high-priority for sampling. Because the metadata indicating a failure (such as an error tag or a 500-series status code) is only attached at the end of a span, a head-based sampler has no way to “save” the trace in retrospect. The data is lost to the system before the incident is even detected by alerting engines.
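The two points above can be illustrated with a small simulation. This is a minimal sketch, not any SDK's actual sampler: `head_sample`, `Request`, and `simulate` are hypothetical names, and the 1% sampling rate and 2% failure ratio are made-up values. The key detail is that the keep/drop decision uses only the trace ID, because the outcome is not yet known at request start.

```python
import random
from dataclasses import dataclass


@dataclass
class Request:
    trace_id: int
    will_fail: bool  # only knowable once the request has finished


def head_sample(trace_id: int, rate: float) -> bool:
    """Decide at request start, using only the trace ID (no outcome data)."""
    # Map the low 64 bits of the ID onto [0, 1) and compare with the rate.
    return (trace_id & (2**64 - 1)) / 2**64 < rate


def simulate(n: int, rate: float, failure_ratio: float, seed: int = 7):
    """Return (failed_requests, failed_requests_whose_trace_was_kept)."""
    rng = random.Random(seed)
    failed = kept_failed = 0
    for _ in range(n):
        req = Request(rng.getrandbits(128), rng.random() < failure_ratio)
        keep = head_sample(req.trace_id, rate)  # decided before failure is visible
        if req.will_fail:
            failed += 1
            kept_failed += keep
    return failed, kept_failed


failed, kept = simulate(n=100_000, rate=0.01, failure_ratio=0.02)
print(f"failed requests: {failed}, failed requests with a trace: {kept}")
```

Because the decision is independent of the outcome, roughly the same small fraction of failing requests is retained as of healthy ones.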

Traces That Exist but Are Hard to Find (Context/Storage)

In many cases, the traces are actually recorded and make it to the backend, but they cannot be rendered correctly in the UI. The data exists. It just does not connect in the way engineers expect.

Common causes include:

- Fragmented traces: Missing root spans or broken parent–child relationships make a trace look incomplete, with spans floating on their own as so-called orphaned spans. The execution happened, but the structure that explains it is gone.

- Context propagation failure: Trace headers, such as traceparent, are not passed consistently between services, which breaks the trace chain. This tends to surface at async boundaries, message queues, gateways, or background workers, where spans are captured correctly but cannot be stitched back into a single request flow.

- Configuration issues: The trace is there but filtered out by an incorrect time range, environment selection, or tenant setting, leading teams to assume data loss when the problem is actually how the data is being queried.
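To make the context propagation failure concrete, here is a minimal sketch of carrying a W3C `traceparent` value (`version-traceid-parentid-flags`, lowercase hex) across a message queue. The `publish` and `consume` helpers and the message shape are hypothetical, not part of any real client library; the point is only that a consumer that cannot read the header has no choice but to start a new, disconnected trace.

```python
import re
import secrets

# W3C Trace Context layout: version-traceid-parentid-flags, lowercase hex.
TRACEPARENT_RE = re.compile(
    r"^([0-9a-f]{2})-([0-9a-f]{32})-([0-9a-f]{16})-([0-9a-f]{2})$"
)


def make_traceparent(trace_id: str, span_id: str, sampled: bool) -> str:
    return f"00-{trace_id}-{span_id}-{'01' if sampled else '00'}"


def parse_traceparent(value):
    """Return (trace_id, parent_span_id, sampled), or None if unusable."""
    match = TRACEPARENT_RE.match(value or "")
    if not match:
        return None
    _version, trace_id, parent_id, flags = match.groups()
    # All-zero trace or parent IDs are invalid.
    if set(trace_id) == {"0"} or set(parent_id) == {"0"}:
        return None
    return trace_id, parent_id, bool(int(flags, 16) & 0x01)


def publish(payload: dict, trace_id: str, span_id: str) -> dict:
    """Hypothetical producer: carry the context in message headers."""
    headers = {"traceparent": make_traceparent(trace_id, span_id, True)}
    return {"headers": headers, "body": payload}


def consume(message: dict) -> dict:
    """Hypothetical consumer: continue the trace, or start a disconnected one."""
    ctx = parse_traceparent(message.get("headers", {}).get("traceparent"))
    if ctx is None:
        # No usable context: the span is recorded, but as an orphan.
        return {"trace_id": secrets.token_hex(16), "parent_id": None, "orphaned": True}
    trace_id, parent_id, _sampled = ctx
    return {"trace_id": trace_id, "parent_id": parent_id, "orphaned": False}
```

A producer that forgets to set the header, or a gateway that strips it, produces exactly the "spans captured but not stitched" symptom described above.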

Why the Most Important Traces Get Lost During Incidents

Head-Based Sampling Drops Traces Too Early

Head-based sampling makes a decision at the start of a request, before its full behavior is known. Early spans often appear normal, especially during the initial phase of an incident. When failures or latency spikes emerge later in the execution path, the trace has already been discarded. As a result, the traces that would have provided the most insight are never captured.

Tail-Based Sampling Struggles Under Incident Load

Tail-based sampling relies on buffering spans and making decisions after more context is available. This approach improves fidelity in a steady state but becomes fragile under incident conditions. Spikes in traffic, larger traces, and longer-lived requests demand more memory and CPU at the moment when spare capacity is scarcest. As pressure increases, sampling policies degrade or fail, precisely when high-quality traces are most needed.

Tail-based sampling is effective only if the underlying infrastructure is overprovisioned to handle worst-case incident bursts. In practice, most teams provision for steady-state or slightly elevated traffic. When an incident triggers a massive fan-out or a retry storm, the resulting telemetry volume exceeds the provisioned buffering capacity.
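The buffering problem can be sketched with a toy tail sampler. This is an illustration under stated assumptions, not how any particular collector implements it: `TailSamplingBuffer` is a hypothetical class, the "keep only traces with an error span" policy is deliberately simplistic, and eviction is oldest-first.

```python
from collections import OrderedDict


class TailSamplingBuffer:
    """Holds spans per trace until the trace finishes, then decides."""

    def __init__(self, max_traces: int):
        self.max_traces = max_traces
        self.pending = OrderedDict()  # trace_id -> list of spans
        self.evicted_undecided = 0

    def add_span(self, trace_id: str, span: dict) -> None:
        if trace_id not in self.pending:
            if len(self.pending) >= self.max_traces:
                # Buffer full: the oldest trace is dropped before any decision.
                self.pending.popitem(last=False)
                self.evicted_undecided += 1
            self.pending[trace_id] = []
        self.pending[trace_id].append(span)

    def finish(self, trace_id: str):
        """Return the spans if the trace is kept, otherwise None."""
        spans = self.pending.pop(trace_id, None)
        if spans is None:
            return None  # already evicted under pressure
        return spans if any(s.get("error") for s in spans) else None


buf = TailSamplingBuffer(max_traces=2)
buf.add_span("slow-failing", {"error": True})
buf.add_span("b", {})
buf.add_span("c", {})  # the burst evicts "slow-failing" before it completes
print(buf.finish("slow-failing"), buf.evicted_undecided)
```

The long-running, failing trace is the oldest entry in the buffer, which is why a burst of newer traffic tends to evict exactly the traces the policy was meant to keep.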

Collector Backpressure Causes Silent Data Loss

During incidents, exporters often become the bottleneck in the telemetry pipeline. As export latency increases, internal queues begin to fill. Once queue limits are reached, spans are dropped to protect the collector process. This data loss is frequently silent, with little indication that tracing fidelity has been reduced.
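What makes this loss "silent" is usually that drops are not counted anywhere visible. The sketch below shows a bounded queue that still sheds load to protect the process but records every drop. `ExportQueue` is a hypothetical class for illustration, not a real collector component; the counters stand in for metrics a team would export and alert on.

```python
import queue


class ExportQueue:
    """Bounded span queue that drops on overflow but counts every drop."""

    def __init__(self, max_size: int):
        self._queue = queue.Queue(maxsize=max_size)
        self.enqueued = 0
        self.dropped = 0

    def offer(self, span: dict) -> bool:
        try:
            self._queue.put_nowait(span)
        except queue.Full:
            self.dropped += 1  # surface this as a metric and alert on it
            return False
        self.enqueued += 1
        return True

    def drain(self, max_items: int) -> list:
        """Simulates the exporter pulling one batch off the queue."""
        batch = []
        while len(batch) < max_items:
            try:
                batch.append(self._queue.get_nowait())
            except queue.Empty:
                break
        return batch

    def depth(self) -> int:
        return self._queue.qsize()


q = ExportQueue(max_size=3)
accepted = [q.offer({"span": i}) for i in range(5)]  # exporter is stalled
print(accepted, q.depth(), q.dropped)
# prints: [True, True, True, False, False] 3 2
```

With `dropped` and `depth()` exposed, a reduction in tracing fidelity becomes something that can be observed rather than discovered after the fact.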

Collector Restarts Wipe Buffered Traces

Incidents rarely happen in isolation. They often bring increased node pressure, autoscaling activity, and emergency changes with them. When collectors restart under these conditions, any spans held in memory buffers are lost. Even short-lived restarts can erase critical traces that were in flight at the time of failure.

Inconsistent Sampling Decisions Across Services

In distributed systems, sampling decisions are often made independently. Each service decides in isolation. During incidents, this lack of coordination becomes obvious, especially as traffic patterns shift and services begin to behave differently under load. Some components retain full traces, while others drop spans early or entirely, even though they are part of the same request.
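A rough simulation shows how quickly independent decisions erode complete traces. This sketch assumes, purely for illustration, that every service on the request path samples at the same rate; the function names are hypothetical. It compares per-service random decisions with a decision derived from the shared trace ID, which every service can reproduce without coordination.

```python
import hashlib
import random


def independent_keep(rate: float, rng: random.Random) -> bool:
    """Each service rolls its own dice, so decisions disagree across services."""
    return rng.random() < rate


def consistent_keep(trace_id: str, rate: float) -> bool:
    """Every service derives the same decision from the shared trace ID."""
    digest = hashlib.sha256(trace_id.encode()).digest()
    bucket = int.from_bytes(digest[:8], "big") / 2**64
    return bucket < rate


def complete_trace_ratio(services: int, rate: float, traces: int = 20_000, seed: int = 1):
    """Fraction of traces kept by every service: (independent, consistent)."""
    rng = random.Random(seed)
    independent = consistent = 0
    for i in range(traces):
        trace_id = f"trace-{i}"
        independent += all(independent_keep(rate, rng) for _ in range(services))
        consistent += all(consistent_keep(trace_id, rate) for _ in range(services))
    return independent / traces, consistent / traces


print(complete_trace_ratio(services=4, rate=0.5))
```

With independent decisions, the share of complete traces falls toward `rate ** services` (about 6% for four services at 50%), while the trace-ID-based decision keeps it near the configured rate.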

Tracing Reliability Is a System Property

Debates about tracing tend to focus on instrumentation and coverage, and overlook a more critical question: whether traces remain available under real incident conditions, when systems are under stress and assumptions break down.

Tracing reliability depends on more than enabling tracing in application code. It is shaped by multiple components working together:

- Trace availability is not guaranteed by “turning tracing on”: Instrumentation alone does not ensure that traces survive real incident conditions.

- Sampling, collectors, storage, and querying all matter: Sampling decisions, collector behavior, storage durability, and query paths all influence whether a trace is ultimately available when it is needed. A weakness at any point in that chain can erase critical context, even if every service is correctly instrumented.

- Reliability needs to be measured, not assumed: For teams that rely on tracing during incidents, reliability must be treated as an observable property of the system. It needs to be measured, monitored, and validated under load, rather than assumed based on steady-state behavior.
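One way to turn reliability into a number is to track what fraction of arriving traces are structurally complete. The following is a minimal sketch under assumptions of its own: spans are plain dicts with `trace_id`, `span_id`, and `parent_id` keys (a hypothetical shape, not a specific backend's schema), and a trace counts as fragmented if it has no root span or if any span references a parent that never arrived.

```python
from collections import defaultdict


def completeness_report(spans: list) -> dict:
    """Classify each trace as complete or fragmented."""
    by_trace = defaultdict(list)
    for span in spans:
        by_trace[span["trace_id"]].append(span)

    fragmented = []
    for trace_id, trace_spans in by_trace.items():
        span_ids = {s["span_id"] for s in trace_spans}
        has_root = any(s["parent_id"] is None for s in trace_spans)
        has_orphan = any(
            s["parent_id"] is not None and s["parent_id"] not in span_ids
            for s in trace_spans
        )
        if not has_root or has_orphan:
            fragmented.append(trace_id)

    total = len(by_trace)
    return {
        "traces": total,
        "fragmented": sorted(fragmented),
        "complete_ratio": (total - len(fragmented)) / total if total else 1.0,
    }


sample = [
    {"trace_id": "t1", "span_id": "a", "parent_id": None},
    {"trace_id": "t1", "span_id": "b", "parent_id": "a"},
    {"trace_id": "t2", "span_id": "c", "parent_id": "missing"},
]
print(completeness_report(sample))
# prints: {'traces': 2, 'fragmented': ['t2'], 'complete_ratio': 0.5}
```

Tracked over time and compared between calm periods and load tests, a ratio like this gives an early signal that completeness is degrading under pressure.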

How Teams Stop Losing Critical Traces

Teams that retain useful traces during incidents design for failure, not steady state. The goal is not to collect more data at all times but to preserve the small set of traces that explain what actually went wrong.

Protect Error and Latency Outliers

During incidents, the most useful traces are also the least common. Errors and extreme latency outliers carry the strongest diagnostic signal, yet they are often dropped by generic sampling rules designed for steady traffic.

- Record extreme latency: Traces with extreme latency help reveal issues such as retries and slowdowns. Retaining them preserves visibility into the long tail that defines many incidents.

- Always keep errors: Traces that contain errors should always be retained. Once they are dropped, there is no reliable way to reconstruct the sequence of events that led to the failure, even if metrics and logs still exist.

- Prioritize new endpoints and new dependencies: Often, new endpoints and dependencies behave differently when there is a surge in traffic. Elevating their priority helps surface regressions that standard sampling would otherwise miss.
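The three rules above can be expressed as a small priority policy evaluated once a trace's outcome is known. This is a sketch under assumptions, not a specific product's sampler: `TraceSummary`, `should_keep`, the latency threshold, and the set of new endpoints are all hypothetical inputs a team would supply from its own data.

```python
from dataclasses import dataclass


@dataclass
class TraceSummary:
    endpoint: str
    duration_ms: float
    has_error: bool


def should_keep(trace: TraceSummary,
                p99_ms: float,
                new_endpoints: set,
                baseline_hit: bool) -> tuple:
    """Return (keep, reason). Rules are checked from strongest signal down."""
    if trace.has_error:
        return True, "error"
    if trace.duration_ms >= p99_ms:
        return True, "latency_outlier"
    if trace.endpoint in new_endpoints:
        return True, "new_endpoint"
    # Routine traffic falls back to the normal probabilistic decision.
    return baseline_hit, "baseline"


new = {"/v2/checkout"}
print(should_keep(TraceSummary("/cart", 40.0, True), 800.0, new, False))
print(should_keep(TraceSummary("/cart", 1200.0, False), 800.0, new, False))
print(should_keep(TraceSummary("/v2/checkout", 35.0, False), 800.0, new, False))
print(should_keep(TraceSummary("/cart", 35.0, False), 800.0, new, False))
```

Returning the reason alongside the decision makes it possible to check afterwards which rule is actually retaining traces during an incident.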

Change Sampling Behavior During Incidents

Sampling strategies that work under normal conditions often fail during incidents. A surge in traffic usually calls for a change in sampling behavior:

- Trigger higher fidelity when alerts fire: Sampling should respond to system signals. When alerts indicate elevated error rates or latency, sampling should preserve more complete traces automatically.

- Focus on affected services, endpoints, or regions: Targeted increases limit cost and pressure on the pipeline. Focusing only on impacted areas preserves critical traces without overwhelming collectors.
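As a sketch of how these two ideas combine, the class below raises the sampling rate only for the service and region pairs covered by a firing alert and restores the baseline when the alert resolves. `AdaptiveSampler`, its method names, and the example rates are assumptions made for illustration; how alerts are actually delivered to the sampler depends on the alerting system in use.

```python
from dataclasses import dataclass, field


@dataclass
class AdaptiveSampler:
    baseline_rate: float = 0.01
    incident_rate: float = 0.5
    # (service, region) pairs currently covered by a firing alert
    boosted: set = field(default_factory=set)

    def on_alert(self, service: str, region: str, firing: bool) -> None:
        """Hypothetical webhook handler for alert state changes."""
        key = (service, region)
        if firing:
            self.boosted.add(key)
        else:
            self.boosted.discard(key)

    def rate_for(self, service: str, region: str) -> float:
        if (service, region) in self.boosted:
            return self.incident_rate
        return self.baseline_rate


sampler = AdaptiveSampler()
sampler.on_alert("payments", "eu-west", firing=True)
print(sampler.rate_for("payments", "eu-west"))  # 0.5
print(sampler.rate_for("payments", "us-east"))  # 0.01
sampler.on_alert("payments", "eu-west", firing=False)
print(sampler.rate_for("payments", "eu-west"))  # 0.01
```

Scoping the boost to the affected pair keeps the extra volume bounded, which matters because the pipeline is already under load when the alert fires.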

Make the Collector Hard to Break

Even well-designed sampling cannot help if the telemetry pipeline fails under load. The collector should be designed to withstand a surge in traffic.

- Tune queues and batch sizes for spikes: Collectors are commonly configured with default settings that work well with stable, low-volume telemetry. During incidents, telemetry volume increases suddenly, and exporters slow down as systems come under pressure. When this happens, queues fill faster than they can drain, and memory usage rises quickly. Sizing queues and batches for incident-level volume, rather than the steady-state average, gives the exporter room to recover before spans are dropped.

- Set memory limits: Clear memory limits are not a tuning detail. They are essential for preventing uncontrolled growth in buffered data and for reducing the chance of cascading restarts when traffic peaks and the system is already under pressure.
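Queue sizing and memory limits are two sides of the same calculation. The sketch below estimates how many batches a queue must hold to ride out an exporter stall, and roughly how much memory that implies. The function names and all input numbers (incident span rate, batch size, stall duration, average span size) are assumptions to be replaced with measured values.

```python
import math


def queue_size_batches(spans_per_second: float, batch_size: int, outage_seconds: float) -> int:
    """Batches the queue must hold to ride out an exporter stall without drops."""
    batches_per_second = spans_per_second / batch_size
    return math.ceil(batches_per_second * outage_seconds)


def queue_memory_mib(batches: int, batch_size: int, avg_span_bytes: int) -> float:
    """Rough memory footprint of a full queue, ignoring per-object overhead."""
    return batches * batch_size * avg_span_bytes / (1024 * 1024)


# Assumed incident load: 20,000 spans/s, 500 spans per batch, 30 s exporter stall.
batches = queue_size_batches(spans_per_second=20_000, batch_size=500, outage_seconds=30)
print(batches)                                   # 1200
print(queue_memory_mib(batches, 500, 1024))      # 585.9375
```

If the resulting memory figure exceeds what the collector is allowed to use, the queue will not actually survive that stall, and either the limit or the tolerated stall duration has to change.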

A Practical Checklist for Incident Runbooks

Before an incident escalates, the observability pipeline itself needs to be trusted. That trust does not come from dashboards or assumptions made during normal operation. It comes from a small set of technical checks that are verified ahead of time and revisited when systems are under stress.

- Verify SDK sampling behavior: It is vital to check whether traces are being sampled at the SDK or agent level. In many systems, sampling decisions are made early, before a request has clearly failed or slowed down, which means the most useful traces may never leave the application.

- Check exporter health: Exporters often become the bottleneck during incidents. Retries and slow exports can overwhelm the pipeline and trigger upstream drops, even when collectors appear responsive.

- Check collector queue depth and restarts: Look for sustained queue growth, recent restarts, or signs of span drops in logs and metrics. These are strong indicators that the pipeline is under pressure rather than misconfigured.

- Validate trace completeness: Determine whether traces are missing entirely or arriving partially. Fragmented traces, missing parent spans, or broken context propagation point to inconsistent sampling or propagation issues rather than ingestion failures.

- Confirm query scope and time range: Incorrect environments, tenants, or overly narrow time windows regularly cause teams to assume traces are missing when they are simply filtered out.

Why CubeAPM Fits Incident-First Tracing

Smart Sampling Approach

CubeAPM uses context-aware Smart Sampling, where sampling decisions are informed by request behavior rather than fixed rates. Smart Sampling looks at what a request actually does, not just how often it occurs. Errors, extreme latency, and unusual execution paths are treated differently from routine traffic. Traces that explain failures are kept. Repetitive background requests are dropped before they add noise.

This becomes critical during incidents. As systems get noisy and traffic patterns shift, Smart Sampling shifts with them. Instead of relying on rates tuned for calm conditions, it focuses on preserving the traces that show where and why things are breaking.

Predictable cost without sacrificing critical traces

CubeAPM offers ingestion-based pricing at $0.15/GB. Because low-signal data is filtered out while high-value traces are preserved, teams avoid paying to ingest large volumes of noise during incidents. What remains is a smaller, more useful dataset that still explains failures when they happen.

Conclusion: Trace Loss Is a Design Problem, Not Bad Luck

Traces are not lost because tracing is unreliable by nature. They are lost because most systems are designed around calm, predictable conditions and quietly assume that those conditions will hold during failures.

Incidents break those assumptions. Sampling decisions made too early, fragile pipelines, and under-provisioned collectors are exposed exactly when traffic becomes noisy and behavior diverges from the norm. The result is missing or incomplete traces at the moment they are most needed.

Reliable tracing during incidents does not come from collecting more data. It comes from intentional design choices that prioritize high-signal traces, adapt to changing conditions, and treat the telemetry pipeline as critical infrastructure. Teams that plan for failure are the ones that keep the traces that matter when things go wrong.

Disclaimer: The information in this article reflects the latest details available at the time of publication and may change as technologies and products evolve.

FAQs

1. Why do traces disappear only during traffic spikes?

Traffic spikes change more than volume. Retries increase, traces become larger, and exporters slow down as downstream systems come under pressure. These conditions expose limits in sampling rules, collector capacity, and buffering that are rarely hit during steady-state operation, leading to dropped or incomplete traces.

2. What’s the difference between missing traces and missing spans?

A missing trace usually means the request was never recorded or was dropped entirely somewhere in the pipeline. Nothing exists to inspect. Missing spans are different. The trace is there, but parts of it are gone. Parent spans may be missing. Context may be broken. The trace exists, but it cannot fully explain what happened.

3. Is tail-based sampling always better for incidents?

No. It depends on how much pressure the system is under. Tail-based sampling can preserve more context because decisions are made later, but that comes at a cost. It requires buffering, memory, and CPU. During incidents, those resources are often the first to become constrained.

4. How can teams measure trace completeness?

There is no single metric, but there are practical signals. Teams can look for traces missing expected parent spans, unusually short traces for known request paths, or sudden increases in fragmented traces during incidents. Comparing what should exist to what actually arrives often reveals whether completeness is degrading under load.

5. What should be monitored on the tracing pipeline itself?

The pipeline needs observability, too. Queue depth, span drop counts, exporter latency, retry behavior, and restart frequency all provide early warning signals. These indicators usually degrade before engineers notice missing traces in the UI.