Modern systems rarely fail in clean, predictable ways. Instead, they degrade gradually: a checkout slows down, a background job backs up, or a dependency times out in one region but not another. Traditional monitoring often shows that something is wrong, but not why it is happening.

Observability exists to close this gap. It is the ability to understand the internal state of a system by examining its outputs, especially when failures are unexpected and questions were not known in advance. In practice, observability is less about collecting more data and more about preserving context across services, requests, and time.

This article defines observability in practical engineering terms. It connects telemetry signals to shared context, ties instrumentation to incident workflows, and relates both to distributed architectures such as microservices, Kubernetes, and event-driven systems. The objective is not more telemetry. It is deeper understanding.

What Observability Means in Practice

Observability is the ability to infer a system’s internal state from the telemetry it emits, especially when failures are unexpected and predefined monitoring questions fall short. It relies on correlated signals such as metrics, logs, traces, and contextual metadata to reconstruct causal paths across distributed components, allowing engineers to explain not just what failed, but why it failed and how the system reached that state.

Observability matters when systems behave in ways you didn’t anticipate. It is the ability to explain unknown unknowns during real incidents. Not just detect that something is wrong, but understand why it is wrong, even when the failure mode was never anticipated.

Observability is more than just monitoring. It is what lets you understand behavior you didn’t expect. When systems misbehave, dashboards and alerts often fall short. Engineers need ways to follow the story behind the signals.

Observability as the Ability to Explain Unknown Unknowns During Real Incidents

Observability helps teams make sense of problems that were not planned for. It connects events across services. It shows how one issue can ripple through a system. And it allows engineers to piece together what actually happened.

Monitoring vs Observability

Monitoring: Known Questions, Thresholds, Health Checks, SLO Alerting

Monitoring works well for known failure modes: uptime drops, error rates rise, latency crosses a threshold, or an SLO is breached. The system tells you something moved outside the lines you drew. But if the failure does not match those predefined conditions, monitoring has little else to say.

Observability: Investigation, Causal Paths, Emergent Failure Modes, High-Cardinality Drilldowns

Instead of asking whether a threshold was crossed, observability lets you explore how a request moved through the system, which dependency slowed down, and why only certain tenants or regions were affected. It allows you to slice across high-cardinality dimensions and follow cause and effect across services.

A Simple Example: “CPU Is High” vs “Why Checkout Latency Spiked Only for One Region and One Payment Path”

Monitoring tells you the CPU is high on the checkout service. That is useful, but shallow. Observability lets you discover that checkout latency spiked only in one region, only for mobile users, and only when they selected a specific payment provider. From there, you can trace the exact request path, correlate it with a recent deployment or configuration change, and identify the real bottleneck.
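The drilldown described above can be sketched in a few lines. This is a minimal illustration, assuming request records are already available as dictionaries; the field names, provider names, and latency values are all made up for the example.

```python
from collections import defaultdict
from statistics import median

# Hypothetical request records; real data would come from a telemetry backend.
REQUESTS = [
    {"region": "us-east", "client": "web", "provider": "cardco", "latency_ms": 120},
    {"region": "us-east", "client": "mobile", "provider": "cardco", "latency_ms": 130},
    {"region": "us-east", "client": "mobile", "provider": "paynow", "latency_ms": 140},
    {"region": "eu-west", "client": "web", "provider": "cardco", "latency_ms": 125},
    {"region": "eu-west", "client": "web", "provider": "paynow", "latency_ms": 135},
    {"region": "eu-west", "client": "mobile", "provider": "cardco", "latency_ms": 128},
    {"region": "eu-west", "client": "mobile", "provider": "paynow", "latency_ms": 2400},
    {"region": "eu-west", "client": "mobile", "provider": "paynow", "latency_ms": 2600},
]


def median_latency_by(records, *dimensions):
    """Group records by the given dimensions and return the median latency per group."""
    groups = defaultdict(list)
    for record in records:
        key = tuple(record[d] for d in dimensions)
        groups[key].append(record["latency_ms"])
    return {key: median(values) for key, values in groups.items()}


by_region = median_latency_by(REQUESTS, "region")
by_slice = median_latency_by(REQUESTS, "region", "client", "provider")
worst_slice = max(by_slice, key=by_slice.get)

print("median by region:", by_region)
print("slowest slice:", worst_slice, by_slice[worst_slice])
```

In this made-up data, the region-level medians look almost identical. The problem only becomes visible once region, client, and payment provider are combined, which is the kind of question a single coarse metric cannot answer.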

In practice, teams don’t adopt observability to collect more data. They adopt it because traditional monitoring fails precisely when systems behave in unexpected ways during partial outages, regional failures, and complex dependency interactions.

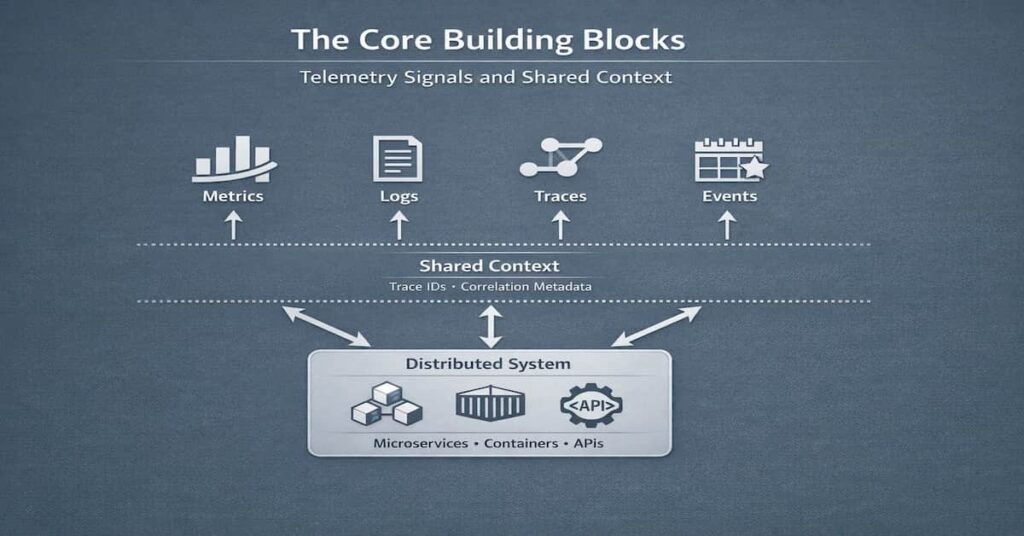

The Core Building Blocks: Telemetry Signals and Shared Context

Every observability strategy rests on signals. Without them, there is nothing to measure, search, or trace. But signals alone do not create understanding. Many teams learn this the hard way as systems scale; they collect everything, yet still struggle to answer simple questions under pressure.

To see why, we need to look at the core building blocks and how they actually work together.

Metrics, Logs, Traces (And Why They Are Necessary but Not Sufficient)

Metrics show trends over time. Logs capture discrete events. Traces follow a request across services.

Each serves a purpose. Metrics are fast and efficient for detection. Logs provide detail. Traces reveal relationships. But none of them, on their own, explains a distributed system. Without a way to connect them, they remain isolated views of the same reality.

Context Is the Multiplier

What turns raw telemetry into something useful is shared context. This is where many implementations either succeed or quietly fall apart.

- Trace and Span IDs: Trace and span IDs link units of work across service boundaries. They allow engineers to follow a single request as it moves through APIs, queues, and databases. Without consistent propagation, that path breaks.

- Correlation IDs: Correlation IDs connect logs, metrics, and traces tied to the same transaction or workflow. They make cross-signal investigation possible instead of forcing manual guesswork.

- Consistent Service Naming: Service names seem trivial until they are inconsistent. Slight differences across environments create confusion and fragment queries. At scale, naming discipline becomes operational hygiene.

- Tags and Attributes: Tags and attributes add dimensionality: region, endpoint, tenant, feature flag. They enable deep drilldowns. But uncontrolled high-cardinality fields can explode storage costs and degrade query performance. Precision matters.
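As a rough sketch of what shared context looks like in code, the example below attaches one correlation ID to every log line emitted while a request is handled. It uses only the Python standard library. The variable `correlation_id`, the service name `checkout-service`, and the ID `req-123` are hypothetical, and a real deployment would more likely rely on a tracing SDK to carry this context.

```python
import contextvars
import io
import json
import logging
import uuid

# Holds the correlation ID for the request currently being handled (hypothetical name).
correlation_id = contextvars.ContextVar("correlation_id", default="unset")


class CorrelationFilter(logging.Filter):
    """Copy the current correlation ID onto every log record."""

    def filter(self, record):
        record.correlation_id = correlation_id.get()
        return True


class JsonFormatter(logging.Formatter):
    """Emit each record as one JSON object with stable keys."""

    def format(self, record):
        return json.dumps({
            "level": record.levelname,
            "service": record.name,
            "message": record.getMessage(),
            "correlation_id": record.correlation_id,
        })


def build_logger(service_name, stream):
    logger = logging.getLogger(service_name)
    logger.setLevel(logging.INFO)
    logger.propagate = False
    handler = logging.StreamHandler(stream)
    handler.addFilter(CorrelationFilter())
    handler.setFormatter(JsonFormatter())
    logger.handlers = [handler]
    return logger


def handle_request(logger, incoming_id=None):
    # Reuse the caller's ID when one is supplied; otherwise start a new one.
    token = correlation_id.set(incoming_id or uuid.uuid4().hex)
    try:
        logger.info("checkout started")
        logger.info("payment authorized")
    finally:
        correlation_id.reset(token)


stream = io.StringIO()
log = build_logger("checkout-service", stream)
handle_request(log, incoming_id="req-123")
lines = [json.loads(line) for line in stream.getvalue().splitlines()]
print(lines)
```

Both log lines carry the same ID, so a query on that one field returns the whole story of the request instead of two unrelated events.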

Telemetry Without Shared Context Is Just Data

Teams often discover this after growth. Telemetry volume increases. Dashboards multiply. Costs rise.

Yet during an incident, engineers still ask the same question: “How is this connected?” Without shared identifiers and consistent structure, signals cannot be correlated. They sit side by side but never truly meet.

At scale, context is not an enhancement. It is the difference between data and understanding.

Logs, Metrics, Traces: What Each Is Best At

Logs, metrics, and traces are often grouped together. That is useful, but it can also blur their differences. Each signal answers a different type of question. Knowing where one shines and where it does not makes investigations faster and far less frustrating.

It helps to look at them individually before seeing how they converge during an incident.

Metrics: Trends, SLOs, Capacity, Fast Detection (Time Series Strengths)

Metrics are optimized for time. They compress large volumes of behavior into numerical trends you can query quickly.

They are ideal for tracking SLOs, monitoring error rates, watching latency percentiles, and planning capacity. When something drifts from baseline, metrics show it fast: for example, a spike in p95 latency, a steady climb in memory usage, or a sudden drop in request throughput.

Logs: Forensic Detail, Error Narratives, Audit Trails

Logs capture discrete events. A failed database query. An authorization error. A configuration change. They provide narrative context, the kind of detail metrics intentionally discard.

They are essential for forensic analysis and compliance trails. However, search-only logging becomes painful at scale. If you do not know what string to search for, or if fields are inconsistent, investigation slows down quickly. Logs need structure and correlation to stay useful.

Traces: Causal Paths Across Services, Critical Path, Dependency Visibility

Traces show movement. One request enters an API gateway, calls two downstream services, waits on a database, and returns a response. That sequence becomes visible.

They expose the critical path, the spans that dominate latency, and make service dependencies explicit. In distributed systems, this view is often the missing piece between “latency is high” and “this dependency is slow.”
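One simple way to find the spans that dominate latency is to compute each span's self time: its duration minus the time covered by its children. The sketch below does that for a small, made-up trace. It is a simplification rather than a full critical-path algorithm, and the `Span` class and all timings are assumptions for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Span:
    span_id: str
    parent_id: Optional[str]
    name: str
    start_ms: int
    end_ms: int


def self_times(spans):
    """Return {span_id: time spent in the span itself, excluding time covered by children}."""
    children = {}
    for span in spans:
        children.setdefault(span.parent_id, []).append(span)

    result = {}
    for span in spans:
        covered = 0
        cursor = span.start_ms
        # Walk children in start order and merge overlaps so parallel calls are not double counted.
        for child in sorted(children.get(span.span_id, []), key=lambda c: c.start_ms):
            start = max(child.start_ms, cursor)
            end = min(child.end_ms, span.end_ms)
            if end > start:
                covered += end - start
                cursor = end
        result[span.span_id] = (span.end_ms - span.start_ms) - covered
    return result


# Hypothetical trace for one checkout request.
TRACE = [
    Span("a", None, "api-gateway", 0, 500),
    Span("b", "a", "checkout", 10, 490),
    Span("c", "b", "inventory", 20, 60),
    Span("d", "b", "payment", 60, 470),
    Span("e", "d", "db.query", 70, 100),
]

times = self_times(TRACE)
names = {span.span_id: span.name for span in TRACE}
dominant = max(times, key=times.get)
print(names[dominant], times[dominant])
```

The gateway span is the longest in wall-clock terms, but almost all of that time belongs to its descendants. Self time points at the payment span, which is where an engineer would look next.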

How They Work Together During an Incident (A Step-by-Step Pivot Flow)

Incidents rarely start with logs or traces. They usually start with a metric. An SLO breach. A latency spike. An alert fires.

From that point, engineers shift focus. They examine the metric across regions, endpoints, and other dimensions to narrow down the source of the issue. They open traces for slow requests and inspect the critical path. Then they drop into logs for specific spans or services to examine error messages and context.

Beyond the “Three Pillars”

Logs, metrics, and traces form the foundation. But they do not tell the whole story. Many search results stop there, as if observability ends with three data types. In practice, mature systems rely on additional signals, the ones that often surface only after teams hit scaling limits or performance ceilings.

These signals fill the blind spots the “three pillars” leave behind.

Profiles: CPU, Memory, Lock Contention, Flame Graphs

Profiling answers a different class of question. Not “Which service is slow?” but “What is the code doing while it is slow?”

CPU profiles reveal hot functions. Memory profiles expose allocation pressure and leaks. Lock contention shows where threads wait instead of work. Flame graphs make these patterns visible in seconds.

Without profiling, APM tools can show latency but miss the structural inefficiencies underneath. Some of the most expensive performance problems hide at this layer.

Real User Monitoring (RUM): Front-End Latency, Core Web Vitals, Client Errors

Backend metrics may look healthy while users experience delay. That gap matters.

RUM captures what real browsers and devices observe: page load times, interaction delays, client-side exceptions, and geographic differences. Core Web Vitals bring measurable standards to that experience.

Synthetics: Proactive Checks, Canaries, Multi-Region Verification

Synthetics simulate behavior before users complain. They run controlled transactions against APIs, login flows, or checkout paths.

Because they are predictable, they provide a clean baseline. When they fail across multiple regions, the signal is stronger. When only one region degrades, the comparison becomes diagnostic.

Events and Deployments: Change Intelligence (Deploy Markers, Feature Flags, Config Drift)

Most incidents follow change. A deployment. A feature flag rollout. A configuration update.

Change intelligence places those events directly on timelines and dashboards. Engineers no longer have to guess what changed. Deployments, feature flags, and configuration updates are visible next to the metrics they affect. Speculation drops.
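The underlying idea is a time-window join between an anomaly and recent changes. A minimal sketch, assuming change events are available as a list of records; the event fields, targets, and the 30-minute lookback are illustrative choices, not a recommendation.

```python
from datetime import datetime, timedelta

# Hypothetical change events; a real system would pull these from CI/CD and flag services.
CHANGES = [
    {"at": datetime(2024, 1, 10, 8, 15), "kind": "config", "target": "gateway", "detail": "timeout lowered"},
    {"at": datetime(2024, 1, 10, 9, 40), "kind": "deploy", "target": "payment", "detail": "v2.14.0"},
    {"at": datetime(2024, 1, 10, 9, 52), "kind": "feature_flag", "target": "checkout", "detail": "new_wallet_flow=on"},
    {"at": datetime(2024, 1, 10, 10, 30), "kind": "deploy", "target": "search", "detail": "v7.1.3"},
]


def changes_before(events, anomaly_start, lookback=timedelta(minutes=30)):
    """Return changes inside the lookback window, most recent first."""
    window_start = anomaly_start - lookback
    hits = [e for e in events if window_start <= e["at"] <= anomaly_start]
    return sorted(hits, key=lambda e: e["at"], reverse=True)


suspects = changes_before(CHANGES, anomaly_start=datetime(2024, 1, 10, 10, 0))
for event in suspects:
    print(event["at"].isoformat(), event["kind"], event["target"], event["detail"])
```

Proximity in time is not proof of cause, but it turns "what changed?" from a chat-channel question into a query.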

Topology and Dependency Mapping: Service Graphs as an Investigation Accelerator

As systems grow, mental models quickly fail. Dependencies multiply. Unexpected calls appear.

Service graphs and topology maps externalize those relationships. They show upstream and downstream connections, traffic patterns, and hidden interactions between services. During an incident, that visual map narrows the search space quickly and points engineers toward the most likely sources of failure.

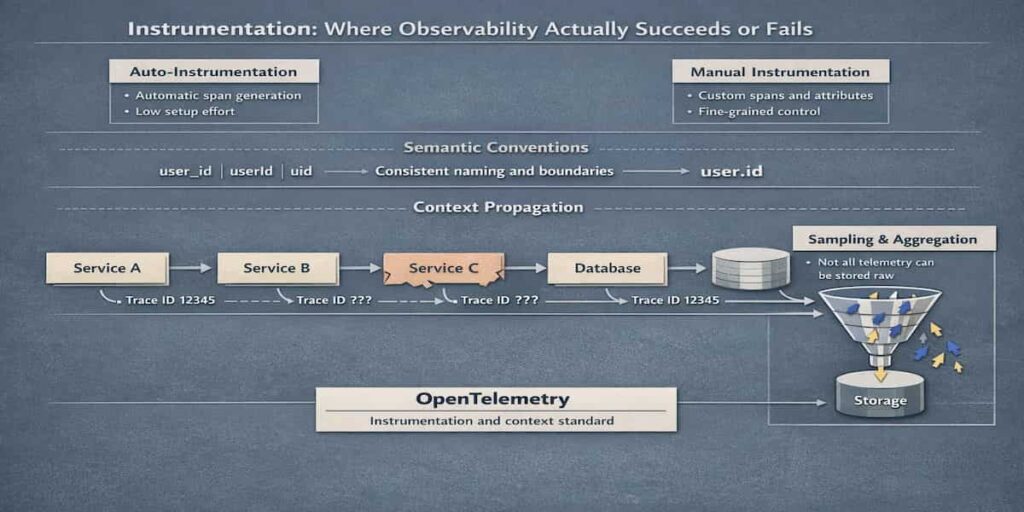

Instrumentation: Where Observability Succeeds or Fails

Instrumentation is where observability shows its true impact. Get it wrong, and signals become fragmented, leaving teams in the dark. How and what you choose to instrument shapes whether insights are possible. The points below cover the key decisions that make observability work, or fail.

Auto-Instrumentation vs Manual Instrumentation

Auto-instrumentation is quick. It captures common frameworks without much setup. When done manually, instrumentation demands more work. But it gives precise control over critical or custom workflows. The best setups combine both approaches.

The “Semantic Conventions” Problem

Consistency matters. One service calls it userId, another uid. Small differences break correlation. Using clear naming rules for spans, metrics, and attributes keeps signals linked. It makes data easier to follow and understand across services.

Context Propagation in Distributed Systems

Traces depend on context. Lost trace headers or broken IDs prevent following a request across services. Even one missing span can hide the root cause of a failure.
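To make the propagation step concrete, here is a minimal sketch that reads and forwards a W3C Trace Context `traceparent` header by hand. In practice an OpenTelemetry SDK propagator would normally do this. The function names are hypothetical, and the sketch assumes header names have already been lowercased.

```python
import re
import secrets

# Version 00 layout: version - 32 hex trace ID - 16 hex parent span ID - 2 hex flags.
TRACEPARENT_RE = re.compile(r"^00-([0-9a-f]{32})-([0-9a-f]{16})-([0-9a-f]{2})$")


def parse_traceparent(header):
    """Return (trace_id, parent_span_id, flags), or None if the header is missing or malformed."""
    if not header:
        return None
    match = TRACEPARENT_RE.match(header.strip())
    if not match:
        return None
    trace_id, span_id, flags = match.groups()
    # All-zero IDs are invalid and must not be propagated.
    if trace_id == "0" * 32 or span_id == "0" * 16:
        return None
    return trace_id, span_id, flags


def outgoing_headers(incoming_headers):
    """Build headers for a downstream call, keeping the caller's trace ID when one exists."""
    parsed = parse_traceparent(incoming_headers.get("traceparent"))
    if parsed:
        trace_id, _, flags = parsed
    else:
        # No usable context: start a new trace and mark it as sampled.
        trace_id, flags = secrets.token_hex(16), "01"
    span_id = secrets.token_hex(8)  # new span ID for this hop
    return {"traceparent": f"00-{trace_id}-{span_id}-{flags}"}


incoming = {"traceparent": "00-4bf92f3577b34da6a3ce929d0e0e4736-00f067aa0ba902b7-01"}
print(outgoing_headers(incoming))
```

The trace ID survives the hop while the span ID changes. If any service in the chain drops or rewrites the header, downstream spans start a new trace and the request path splits in two.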

Sampling and Aggregation Realities

High-volume systems cannot keep everything in raw form. Sampling reduces storage. Aggregation highlights trends. Too much sampling hides rare but critical failures. Observability is a balance between insight, cost, and fidelity.

OpenTelemetry: The New Default

In many setups, OpenTelemetry ends up being the practical choice. Not because it is flashy, but because it solves a simple problem: collecting metrics, logs, and traces without juggling disconnected tools. Everything flows through one pipeline. That alone removes a lot of unnecessary friction. It reduces vendor lock-in. It also brings order to naming and ensures trace context doesn’t disappear as requests move between services. For many modern architectures, it’s no longer optional; it’s simply where observability begins.

Data Quality: The Hidden Foundation

Observability does not usually fail because tools are missing. It fails because the data cannot be trusted. Dashboards look polished. Queries return results. But when numbers conflict or traces are incomplete, engineers hesitate. And once trust erodes, adoption slows down quickly.

Cardinality Management: High-Cardinality Is Powerful, Ungoverned Is Costly

High cardinality adds fine-grained dimensions to telemetry. Fields like user ID, request ID, or tenant identifiers make it possible to narrow an issue down to one customer or even one transaction. That level of granularity is powerful.

But without guardrails, cardinality grows fast. Storage costs increase. Queries slow down. Some metrics systems begin to drop data silently. The goal is not to avoid high cardinality entirely. It is to apply it deliberately, where it answers real questions.
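The growth is easy to estimate. For a single metric, the upper bound on time series is the product of the distinct values of each label. The label names and counts below are hypothetical, and real systems usually see fewer series because not every combination occurs.

```python
from math import prod


def max_series(label_cardinalities):
    """Upper bound on time series for one metric: the product of distinct values per label."""
    return prod(label_cardinalities.values())


# Hypothetical label sets for a single request-latency metric.
base_labels = {"service": 20, "endpoint": 15, "region": 4, "status_class": 5}
with_tenant = {**base_labels, "tenant_id": 2000}

print(max_series(base_labels))
print(max_series(with_tenant))
```

Adding one label with 2,000 values raises the ceiling from 6,000 series to 12 million. That is why a field like tenant ID often fits better on traces and logs than as a label on every metric.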

Metrics Types (Counters, Gauges, Histograms) and Common Mistakes

Metric semantics matter. A counter should only increase. A gauge reflects a current value. A histogram captures distributions, such as latency percentiles.

Using the wrong type distorts reality. Resetting counters incorrectly, averaging latencies without distributions, or treating gauges as cumulative metrics leads to misleading dashboards. These mistakes are subtle. They often go unnoticed until an incident exposes them.
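Two of these mistakes are easy to show with numbers. The sketch below compares an average with nearest-rank percentiles on skewed latencies, then compares a naive counter delta with one that treats a drop as a restart from zero. The data is made up, and the reset rule is a common convention rather than the behavior of any specific metrics system.

```python
import math
from statistics import mean


def percentile(values, p):
    """Nearest-rank percentile: the smallest sample with at least p% of samples at or below it."""
    ordered = sorted(values)
    rank = max(1, math.ceil(p * len(ordered) / 100))
    return ordered[rank - 1]


def counter_increase(samples):
    """Total increase of a counter across samples, treating any drop as a restart from zero."""
    total = 0
    for previous, current in zip(samples, samples[1:]):
        if current >= previous:
            total += current - previous
        else:
            total += current  # counter was reset; count everything since the restart
    return total


# Hypothetical data: 95 fast requests and 5 very slow ones.
latencies_ms = [100] * 95 + [5000] * 5
print("mean:", mean(latencies_ms))
print("p50:", percentile(latencies_ms, 50))
print("p99:", percentile(latencies_ms, 99))

# A counter that restarted between the second and third scrape.
scrapes = [100, 150, 20, 60]
print("naive delta:", scrapes[-1] - scrapes[0])
print("reset-aware increase:", counter_increase(scrapes))
```

The mean of 345 ms describes no actual request: most took 100 ms and a few took 5,000 ms. The naive counter delta is negative, which is impossible for a value that only counts up.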

Log Quality: Structured vs Unstructured, PII Leakage, Inconsistent Fields

Plain text logs are easy to read when traffic is low. At scale, though, they become hard to search and even harder to analyze consistently. When logs follow a clear structure (predictable keys, defined fields, stable formats), filtering and aggregating them becomes far more practical.

There is also a risk dimension. Logs frequently capture payload fragments, identifiers, or personal data. Without review processes and redaction policies, sensitive information spreads quickly across storage systems and teams.
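A redaction step can sit between the application and the log pipeline. The sketch below is a minimal illustration: the denylist of keys and the email pattern are assumptions, and a real policy would need to cover far more cases than one regular expression can.

```python
import json
import re

EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
SENSITIVE_KEYS = {"password", "card_number", "authorization"}  # hypothetical denylist


def redact(event):
    """Return a copy of the event with denylisted keys masked and emails scrubbed from strings."""
    clean = {}
    for key, value in event.items():
        if key.lower() in SENSITIVE_KEYS:
            clean[key] = "[REDACTED]"
        elif isinstance(value, str):
            clean[key] = EMAIL_RE.sub("[EMAIL]", value)
        else:
            clean[key] = value
    return clean


def to_log_line(event):
    """Serialize a redacted event as one JSON line with stable key order."""
    return json.dumps(redact(event), sort_keys=True)


line = to_log_line({
    "message": "login failed for ana@example.com",
    "password": "hunter2",
    "status": 401,
})
print(line)
```

Because the output is structured, the status code stays queryable while the email and password never reach storage.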

Trace Completeness: Missing Spans, Broken Propagation, Inconsistent Sampling

A trace is only as useful as its continuity. If context propagation fails between services, spans disappear. The path looks shorter than it actually was.

Sampling introduces another layer of complexity. If upstream and downstream services sample differently, traces fragment. Engineers end up analyzing partial stories and drawing incomplete conclusions.

Practical “Definition of Done” for Instrumentation

Instrumentation is not complete when data appears in a dashboard. It is complete when signals are consistent, correlated, and aligned with real operational questions.

A practical definition of done includes: agreed naming conventions, validated metric types, verified trace propagation, structured logs, and documented cardinality boundaries. Without these checks, observability becomes decorative rather than dependable.

Cost, Retention, and Scalability Trade-offs

Cost and retention challenges are not advanced observability problems. They surface as soon as telemetry volume grows beyond a single service or environment.

Observability generates value, but it also generates data. The more services, the higher the traffic, the more metrics, logs, and traces accumulate. That growth creates three intertwined challenges: cost, retention, and scalability. Storing everything indefinitely is expensive. Querying massive datasets slows down investigations. And without a plan, scaling telemetry across multiple services becomes unmanageable.

Observability is not just a visibility problem. It is a data volume problem. Let’s break down where that pressure actually comes from.

Cost Drivers That Show Up Later

Some cost drivers are obvious. Others creep in quietly.

- Cardinality Explosions: High-cardinality dimensions such as user IDs, request IDs, and session tokens are powerful for debugging. They let you isolate behavior at a very fine level. But when left unchecked, they multiply the number of metric series, because every new combination of label values creates another series. Query performance drops. Storage costs rise. What was once a helpful dimension becomes an expensive liability.

- Verbose Logs During Incidents: During outages, teams often increase log verbosity. It feels necessary. More detail might mean faster answers. The side effect is predictable: ingestion spikes. Storage grows. And sometimes, the extra logs add noise rather than clarity. Logging strategy under stress needs guardrails.

- Trace Volume from Chatty Services: Highly distributed systems generate a lot of spans. A single request can trigger dozens of downstream calls. Now multiply that by traffic. Without sampling or filtering, trace volume grows rapidly. Costs follow.

Retention Strategy by Signal and Use Case

Not all telemetry needs the same lifespan. Treating it as such creates unnecessary expense.

- Fast Hot Storage vs Cold Archives: Recent data must be fast to query. Engineers investigating an active incident cannot wait minutes for results. That is hot storage. Older data is different. It supports audits, compliance, or long-term analysis. Cold storage is cheaper, slower, and often sufficient.

- Aggregates vs Raw: Raw data provides detail. Aggregates provide trends. You may not need every individual trace from six months ago, but you likely need latency distributions and error-rate summaries. Keeping aggregates longer while expiring raw data is often a sensible compromise.

Sampling Strategies at a High Level (Head vs Tail, and When Each Fits)

Sampling is not optional at scale. It is a design decision. Head sampling decides early, often at the start of a request, whether to keep a trace. It is simple and predictable but may miss rare failures.

Tail sampling decides after seeing the outcome. It can retain slow or error-heavy traces while discarding healthy ones. It is more complex, but often more aligned with real debugging needs.

Neither is universally correct. The right choice depends on traffic patterns and the types of questions you expect to ask.
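The two strategies can be contrasted in a short sketch. Head sampling here hashes the trace ID so that every service reaches the same decision without coordination. Tail sampling inspects the finished trace first. The thresholds, rates, and trace fields are illustrative assumptions.

```python
import hashlib


def head_sample(trace_id, rate):
    """Decide at request start from the trace ID alone; the same ID always gives the same answer."""
    digest = hashlib.sha256(trace_id.encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) / 0x100000000  # map the hash to [0, 1)
    return bucket < rate


def tail_sample(trace, latency_threshold_ms=1000, baseline_rate=0.01):
    """Decide after the trace completes: always keep errors and slow traces, sample the rest."""
    if trace["error"] or trace["duration_ms"] >= latency_threshold_ms:
        return True
    return head_sample(trace["trace_id"], baseline_rate)


# Hypothetical completed traces.
traces = [
    {"trace_id": "t-001", "duration_ms": 80, "error": False},
    {"trace_id": "t-002", "duration_ms": 2400, "error": False},
    {"trace_id": "t-003", "duration_ms": 95, "error": True},
]

for trace in traces:
    print(trace["trace_id"], "keep" if tail_sample(trace) else "drop-or-baseline")
```

The trade-off shows up in where the decision happens. Head sampling needs no buffering but cannot know a request will fail. Tail sampling keeps the interesting traces but has to hold spans until the outcome is known.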

A Pragmatic Rule: Optimize for Questions You Actually Need to Answer Under Pressure

It is tempting to collect everything. Storage is cheaper than missing a root cause, until it isn’t.

A more sustainable approach is to design observability around the questions you must answer quickly during incidents. What do you need to see in the first ten minutes? What level of detail is essential? What can be summarized?

Cost, retention, and scalability are not separate from observability strategy. They are part of it. If you ignore them early, they will shape your decisions later, usually at the worst possible time.

Security, Privacy, and Governance

Governance issues in observability rarely appear because teams planned for them. They appear because telemetry quietly accumulates identifiers, payload fragments, and user context long before access controls and retention policies are defined.

Observability data feels operational. In reality, it often mirrors production traffic. That shift changes the risk profile.

Why Telemetry Becomes Sensitive Data (Identifiers, Payload Fragments, User Attributes)

Logs may capture email addresses. Traces may include request parameters.

Individually, these fields seem harmless. When put together, they can reconstruct user behavior, expose business transactions, or leak regulated information. Telemetry is not just system data. It is frequently user-adjacent data.

Data Minimization: What Not to Emit

The simplest safeguard is restraint. Not every request payload needs to be logged. Not every attribute needs to be attached as a tag.

Instrumentation should answer a question. If a field does not improve debugging or reliability, it likely does not belong in telemetry. Emitting less reduces both risk and cost.

Access Controls, Audit Trails, and Least Privilege for Observability Platforms

Observability platforms often become some of the most widely accessed systems in an organization. Engineers, SREs, support teams, and sometimes even product staff rely on them.

That makes access control essential. Role-based permissions, audit logging, and least-privilege policies prevent observability data from turning into an unmonitored data lake. Visibility should not mean unrestricted access.

Redaction, Tokenization, and Handling Regulated Environments

Simply relying on passive controls does not meet compliance requirements. Sensitive data should be masked or removed before it reaches the system. Tokenization can also keep raw values safe.
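One common way to tokenize an identifier is a keyed hash: the same input always maps to the same token, so correlation still works, but the raw value is not stored. The sketch below assumes a secret key supplied from outside the code; the key shown is a placeholder, and key management is out of scope. Whether this approach satisfies a particular regulation depends on the environment and is not something the example settles.

```python
import hashlib
import hmac


def tokenize(value, secret_key):
    """Replace a raw identifier with a keyed, deterministic token."""
    digest = hmac.new(secret_key, value.encode("utf-8"), hashlib.sha256).hexdigest()
    return "tok_" + digest[:16]


# Placeholder key for illustration only; a real key would come from a secrets manager.
KEY = b"example-key-not-for-production"

token_a = tokenize("ana@example.com", KEY)
token_b = tokenize("ana@example.com", KEY)
token_c = tokenize("ben@example.com", KEY)
print(token_a, token_c)
```

Engineers can still group events by token to follow one user's requests, without the telemetry store ever holding the email address itself.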

The approach may differ, but the principle remains the same: observability must respect the same compliance boundaries as production systems.

Telemetry is powerful. It also carries responsibility. Without governance, the very signals meant to improve reliability can introduce risk.

Common Anti-Patterns

Most observability problems are not technical limitations. They are design choices that seemed reasonable at the time. Over time, those choices compound. Costs rise. Signal quality drops. Trust erodes.

These anti-patterns show up across teams, regardless of tooling.

“Collect Everything” Without a Question Model

It sounds safe. Store all the logs. Keep every trace. Tag every field.

But data without intent quickly becomes noise. If you cannot describe the questions you need to answer during an incident, collecting more telemetry will not help. It only increases storage bills and query latency.

Start with questions. Then instrument deliberately.

“Dashboards as Truth” Without Validating Data Quality

Dashboards feel authoritative. Clean graphs. Sharp percentiles. Clear labels.

But dashboards are only as reliable as the data behind them. Incorrect metric types, missing spans, or inconsistent tags quietly distort reality. Over time, teams begin to distrust the numbers. That loss of trust is difficult to recover.

Validate instrumentation. Audit assumptions. Treat dashboards as views, not facts.

Alert Floods and Threshold Obsession

Too many alerts create background noise. Engineers stop reacting. Important signals get buried.

Static thresholds often fail in dynamic systems. Traffic shifts. Usage patterns change. A fixed limit that worked six months ago becomes meaningless. Instead of obsessing over thresholds, anchor alerts to service-level objectives and user impact. Fewer alerts. Higher signal.
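Anchoring alerts to an SLO usually means alerting on how fast the error budget is being spent. A burn rate of 1.0 consumes the budget exactly over the SLO window. The sketch below pages only when both a long and a short window burn quickly. The threshold of 14.4 and the example error ratios are illustrative assumptions and should be tuned per service.

```python
def burn_rate(error_ratio, slo_target):
    """How fast the error budget is being consumed; 1.0 means exactly on budget."""
    budget = 1.0 - slo_target
    if budget <= 0:
        raise ValueError("slo_target must be below 1.0")
    return error_ratio / budget


def should_page(long_window_errors, short_window_errors, slo_target, threshold=14.4):
    """Page only when both windows burn fast: the problem is significant and still happening."""
    return (
        burn_rate(long_window_errors, slo_target) >= threshold
        and burn_rate(short_window_errors, slo_target) >= threshold
    )


SLO = 0.999  # hypothetical availability target

print(should_page(0.02, 0.03, SLO))    # sustained and ongoing
print(should_page(0.02, 0.0005, SLO))  # already recovering
```

Requiring both windows filters out short blips and incidents that have already recovered, which is one way to get fewer alerts with higher signal.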

Tool Sprawl Without Consistent Instrumentation

Adding more tools does not fix inconsistent data. One team uses custom tags. Another uses different service names. Traces do not propagate across boundaries.

The result is fragmentation. Multiple dashboards. Conflicting views. Extra cost. Consistency in instrumentation matters more than the number of platforms in use.

Treating Observability as an Ops-Only Function

When observability lives only in operations, it becomes reactive. Developers ship code without instrumentation in mind. Context gets lost.

Observability works best when it is shared ownership. Developers, platform engineers, and SREs define conventions together. Signals improve. Incidents become easier to explain. Observability is not a layer added at the end. It is part of how systems are built.

A Practical Adoption Roadmap

Observability does not appear fully formed. It evolves. Most teams move through stages, sometimes intentionally, sometimes by accident. The difference between maturity levels is not tooling; it is how deliberately signals, context, and workflows are designed.

What follows is a practical progression. Not theoretical. This is how adoption tends to unfold in real engineering organizations.

Stage 1: Baseline Monitoring and SLOs (Fast Detection)

Everything starts with knowing when something is off. Before adding complexity, teams need clear service-level objectives and a small set of meaningful signals. Latency, traffic, errors, and saturation usually cover the basics. The point is not to fill dashboards with charts, but to connect alerts directly to user impact. If no user would notice the issue, the alert probably needs rethinking.

At this stage, dashboards multiply quickly. They look reassuring. The common failure here is false confidence, green dashboards masking blind spots because instrumentation is shallow or narrowly scoped. Detection improves, but diagnosis is still slow.

Common Failure at This Stage

- False Confidence from Green Dashboards: Teams assume visibility equals understanding. But when instrumentation is shallow or scoped too narrowly, dashboards can stay green while real user impact goes unnoticed. Detection exists, but diagnosis remains fragile.

Stage 2: Consistent Instrumentation and Correlation (Fast Diagnosis)

At this point, the conversation changes. It is no longer just about spotting anomalies. The real question becomes what caused them, and where to start looking.

That usually means improving trace coverage, structuring logs properly, standardizing service names, and making sure correlation IDs actually propagate across boundaries. When this is done well, engineers can move from a metric to a trace, then into logs, without stitching the story together manually. Speed improves. So does confidence in the diagnosis.

The typical failure at this stage is inconsistency. One team instruments thoroughly. Another uses different attribute names. Traces break at service boundaries. Correlation weakens. The data exists, but it does not connect cleanly.

Common Failure at This Stage

- Inconsistent Instrumentation and Broken Correlation: Different naming conventions, incomplete trace propagation, and uneven logging practices weaken correlation. The data exists, but it does not connect cleanly across service boundaries.

Stage 3: Proactive Reliability Engineering (Trend-Driven Improvements)

When incidents stop feeling chaotic, attention moves forward. Trends begin to matter more than single events. Teams study latency distributions, dependency performance, and capacity patterns. Observability becomes an input to architectural decisions, not just incident response.

Here, the failure mode shifts. Costs rise. High-cardinality tags, verbose logs, and large trace volumes introduce unexpected spend. Without governance, maturity becomes expensive.

Common Failure at This Stage

- Telemetry Growth Without Cost Governance: High-cardinality attributes, verbose logging, and aggressive trace retention introduce unexpected spend. Without sampling discipline and retention strategy, maturity becomes expensive.

Stage 4: Continuous Verification and Automated Remediation (Where Appropriate)

Over time, the role of observability changes. It stops being something you only look at after a problem and starts shaping what the system does next. In more advanced setups, an SLO breach doesn’t just raise an alert. It can automatically roll a deployment back.

That said, automation only works when the underlying signals are reliable. If telemetry is inconsistent or poorly instrumented, teams pause, and for good reason. The real risk at this stage is not lack of automation, but too much of it, driven by signals that no one fully trusts.

Common Failure at This Stage

- Automation Built on Untrusted Signals: Automated rollbacks or remediation only work when signals are reliable. If telemetry quality is uneven, teams hesitate to trust automation, or worse, disable it after false triggers.

What to Do in the First 30 Days vs First 90 Days

The first 30 days should focus on clarity, not coverage. Define SLOs. Instrument critical paths. Standardize naming and basic correlation. Avoid over-engineering.

Around the 90-day mark, the focus usually shifts to depth and discipline. Trace coverage should extend across more services, instrumentation patterns need to be consistent, and cost controls become part of the conversation. That often means adjusting sampling rates and defining clear data retention rules so telemetry stays useful without becoming expensive. Review data quality. Align alerting with real user impact.

Maturity is less about adding more signals and more about tightening feedback loops. Each stage builds on the previous one. Skipping steps usually shows up later, in blind spots, in cost spikes, or in automation that no one fully trusts.

Putting Observability into Practice with CubeAPM

Observability maturity does not come from collecting more data. It comes from disciplined instrumentation, shared context, controlled telemetry growth, and fast investigative workflows.

CubeAPM is designed around these engineering realities.

Instrumentation and Context by Default

CubeAPM is OpenTelemetry native. Instrumentation quality determines everything downstream.

It supports:

- Native OpenTelemetry ingestion for metrics, logs, and traces

- Consistent semantic conventions

- Automatic context propagation across services

- Preservation of trace and span relationships end to end

This prevents the common maturity failure of inconsistent instrumentation that breaks correlation.

Telemetry without shared context becomes noise. CubeAPM preserves that context across the system.

Investigation, Not Just Dashboards

Dashboards answer known questions. Incidents rarely follow known patterns.

During a real outage, teams need to pivot quickly:

- From a metric anomaly to the trace that caused it

- From a slow span to the downstream dependency

- From an error cluster to the exact deployment that introduced it

CubeAPM keeps those pivots inside a single workflow. You are not exporting screenshots between tools. You are following context.

That difference shows up in mean time to resolution.

Keeping Telemetry from Becoming a Cost Problem

Telemetry volume grows faster than most teams expect. Especially in Kubernetes and microservices environments.

Verbose logs during incidents. High-cardinality tags. Chatty services emitting traces at full fidelity.

Without guardrails, cost becomes the limiting factor.

CubeAPM includes sampling controls, retention configuration by signal, and predictable ingestion-based pricing. The goal is simple: keep the data that helps you answer real questions under pressure. Do not keep everything by default.

Observability should scale with the system, not surprise the budget.

Governance Is Not Optional

Telemetry quietly accumulates identifiers, request payload fragments, and user context. That risk does not show up on day one. It builds over time.

CubeAPM supports a deployment model where customers retain full control of their data while operational complexity is handled by the CubeAPM team.

In practice, that means:

- The platform runs in the customer’s environment

- Data stays within the customer’s infrastructure

- Storage, retention, and access policies remain under customer control

- Platform upgrades, maintenance, and operational management are handled by CubeAPM

This approach separates data ownership from operational burden.

Security teams keep control over where telemetry lives and who can access it. Engineering teams avoid the overhead of maintaining and upgrading another complex system.

Conclusion: Observability as an Engineering Discipline

Observability is not something you bolt on after deployment. It is a design decision. The way services emit signals, propagate context, and define boundaries determines whether a system will be understandable later. Treating observability as a feature leads to patchwork dashboards and reactive fixes. Treating it as an engineering discipline shapes architecture from the start.

There is also a shift that happens over time. Early efforts focus on visibility: more charts, more logs, more alerts. That phase feels productive. But visibility alone does not explain behavior. Understanding comes from correlation, clean instrumentation, and the ability to move from a symptom to a cause without guesswork. The difference is subtle, yet operationally significant.

How mature a team’s observability practices are has a direct effect on system resilience. Teams that can follow incidents recover quickly. Small issues stay small. Without that insight, they can spiral into major outages. Tools help, but discipline matters more. Resilient systems are not just running, they are understandable.

Disclaimer: The information in this article reflects the latest details available at the time of publication and may change as technologies and products evolve.

FAQs

1. What is observability in simple terms?

Observability refers to the ability to understand what is happening inside a system by examining its external signals.

2. Is observability just logs, metrics, and traces?

No. Those are foundational signals, but context, profiling, RUM, synthetics, and change intelligence are equally important.

3. What is the difference between monitoring and observability?

Monitoring detects known problems. Observability explains unexpected ones.

4. Do small teams need observability?

Yes. Even small distributed systems can fail in complex ways. The scale differs. The need does not.

5. How does OpenTelemetry relate to observability?

OpenTelemetry standardizes instrumentation and context propagation, which forms a foundation for modern observability systems.